
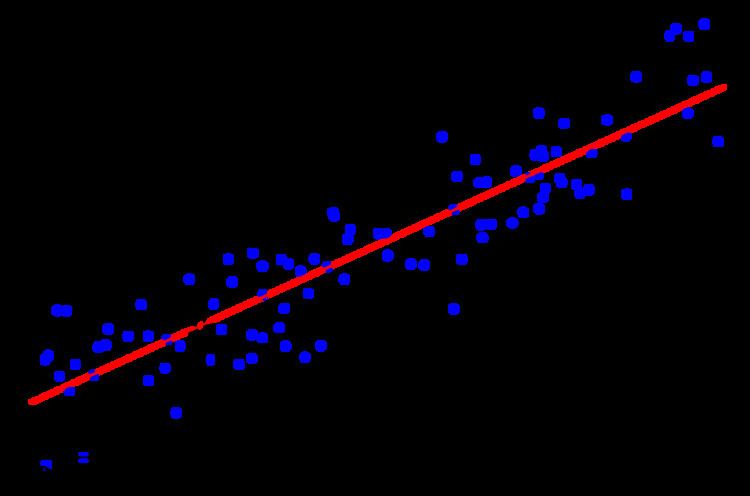

In statistics, simple linear regression is a linear regression model with a single explanatory variable. That is, it concerns two-dimensional sample points with one independent variable and one dependent variable (conventionally, the x and y coordinates in a Cartesian coordinate system) and finds a linear function (a non-vertical straight line) that, as accurately as possible, predicts the dependent variable values as a function of the independent variable. The adjective simple refers to the fact that the outcome variable is related to a single predictor.

Contents

- Fitting the regression line

- Linear regression without the intercept term

- Model-based properties

- Unbiasedness

- Confidence intervals

- Normality assumption

- Asymptotic assumption

- Numerical example

- Derivation of simple regression estimators

- References

It is common to make the additional hypothesis that the ordinary least squares method should be used to minimize the residuals. Under this hypothesis, the accuracy of a line through the sample points is measured by the sum of squared residuals (vertical distances between the points of the data set and the fitted line), and the goal is to make this sum as small as possible. Other regression methods that can be used in place of ordinary least squares include least absolute deviations (minimizing the sum of absolute values of residuals) and the Theil–Sen estimator (which chooses a line whose slope is the median of the slopes determined by pairs of sample points). Deming regression (total least squares) also finds a line that fits a set of two-dimensional sample points, but (unlike ordinary least squares, least absolute deviations, and median slope regression) it is not really an instance of simple linear regression, because it does not separate the coordinates into one dependent and one independent variable and could potentially return a vertical line as its fit.
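To make the contrast between ordinary least squares and the Theil–Sen estimator concrete, the following is a minimal sketch that computes both slopes on a small data set containing one outlier. The data values and the function names `ols_slope` and `theil_sen_slope` are hypothetical, chosen only for illustration.

```python
from itertools import combinations
from statistics import median


def ols_slope(xs, ys):
    """Least-squares slope: minimizes the sum of squared residuals."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    den = sum((x - x_bar) ** 2 for x in xs)
    return num / den


def theil_sen_slope(xs, ys):
    """Median of the slopes of all lines through pairs of sample points."""
    points = list(zip(xs, ys))
    slopes = [
        (y2 - y1) / (x2 - x1)
        for (x1, y1), (x2, y2) in combinations(points, 2)
        if x2 != x1  # skip vertical pairs, whose slope is undefined
    ]
    return median(slopes)


# Hypothetical data: four points on y = 2x plus one outlier at x = 5.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.0, 4.0, 6.0, 8.0, 30.0]

slope_ols = ols_slope(xs, ys)       # pulled upward by the outlier
slope_ts = theil_sen_slope(xs, ys)  # stays at the slope of the collinear points
```

On this data the median-of-slopes estimate is unaffected by the single outlier, while the least-squares slope is not, which is the usual motivation for the robust alternative.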

The remainder of the article assumes an ordinary least squares regression. In this case, the slope of the fitted line is equal to the correlation between y and x corrected by the ratio of standard deviations of these variables. The intercept of the fitted line is such that the line passes through the center of mass $(\bar{x}, \bar{y})$ of the data points.

Fitting the regression line

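Suppose there are n data points $\{(x_i, y_i),\ i = 1, \ldots, n\}$. The function that describes x and y is:

$$y_i = \alpha + \beta x_i + \varepsilon_i.$$

The goal is to find the equation of the straight line

$$y = \alpha + \beta x,$$

which would provide a "best" fit for the data points. Here the "best" will be understood as in the least-squares approach: a line that minimizes the sum of squared residuals of the linear regression model. In other words, α (the y-intercept) and β (the slope) solve the following minimization problem:

$$\text{Find } \min_{\alpha,\,\beta} Q(\alpha, \beta), \quad \text{for } Q(\alpha, \beta) = \sum_{i=1}^{n} \varepsilon_i^2 = \sum_{i=1}^{n} (y_i - \alpha - \beta x_i)^2.$$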

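By expanding Q into a quadratic expression in α and β, it can be shown that the values of α and β that minimize the objective function Q are

$$\hat{\alpha} = \bar{y} - \hat{\beta}\,\bar{x}, \qquad \hat{\beta} = \frac{\sum_{i=1}^{n} (x_i - \bar{x})(y_i - \bar{y})}{\sum_{i=1}^{n} (x_i - \bar{x})^2} = r_{xy}\,\frac{s_y}{s_x},$$

where $r_{xy}$ is the sample correlation coefficient between x and y, and $s_x$ and $s_y$ are the sample standard deviations of x and y. A horizontal bar over a quantity indicates the average value of that quantity. For example:

$$\overline{xy} = \frac{1}{n} \sum_{i=1}^{n} x_i y_i.$$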

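Substituting the above expressions for $\hat{\alpha}$ and $\hat{\beta}$ into the fitted line $y = \hat{\alpha} + \hat{\beta} x$ yields

$$\frac{y - \bar{y}}{s_y} = r_{xy}\,\frac{x - \bar{x}}{s_x}.$$

This shows that $r_{xy}$ is the slope of the regression line of the standardized data points (and that this line passes through the origin).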

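It is sometimes useful to calculate $r_{xy}$ from the data independently using this equation:

$$r_{xy} = \frac{\overline{xy} - \bar{x}\,\bar{y}}{\sqrt{\left(\overline{x^2} - \bar{x}^2\right)\left(\overline{y^2} - \bar{y}^2\right)}}.$$

The coefficient of determination (R squared) is equal to $r_{xy}^2$ when the model is linear with a single independent variable.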
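The closed-form estimators in this section can be checked numerically. The sketch below computes the intercept, slope and correlation from centered sums, then confirms that the slope equals the correlation times the ratio of sample standard deviations and that R squared matches the squared correlation. The data and the function name `fit_simple_ols` are hypothetical.

```python
import math
from statistics import stdev


def fit_simple_ols(xs, ys):
    """Return (alpha_hat, beta_hat, r_xy) for the least-squares line."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    # Centered sums of squares and cross-products.
    s_xx = sum((x - x_bar) ** 2 for x in xs)
    s_yy = sum((y - y_bar) ** 2 for y in ys)
    s_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    beta_hat = s_xy / s_xx
    alpha_hat = y_bar - beta_hat * x_bar
    r_xy = s_xy / math.sqrt(s_xx * s_yy)
    return alpha_hat, beta_hat, r_xy


# Hypothetical data, not taken from the article.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]
alpha_hat, beta_hat, r_xy = fit_simple_ols(xs, ys)

# Slope written as correlation times the ratio of sample standard deviations.
slope_from_r = r_xy * stdev(ys) / stdev(xs)

# R squared computed from residuals, for comparison with r_xy squared.
y_bar = sum(ys) / len(ys)
ss_res = sum((y - alpha_hat - beta_hat * x) ** 2 for x, y in zip(xs, ys))
ss_tot = sum((y - y_bar) ** 2 for y in ys)
r_squared = 1.0 - ss_res / ss_tot
```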

Linear regression without the intercept term

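Sometimes it is appropriate to force the regression line to pass through the origin, because x and y are assumed to be proportional. For the model without the intercept term, y = βx, the OLS estimator for β simplifies to

$$\hat{\beta} = \frac{\sum_{i=1}^{n} x_i y_i}{\sum_{i=1}^{n} x_i^2} = \frac{\overline{xy}}{\overline{x^2}}.$$

Substituting (x − h, y − k) in place of (x, y) gives the regression through (h, k):

$$\begin{aligned}
\hat{\beta} &= \frac{\overline{(x - h)(y - k)}}{\overline{(x - h)^2}} \\
&= \frac{\overline{xy} - k\bar{x} - h\bar{y} + hk}{\overline{x^2} - 2h\bar{x} + h^2} \\
&= \frac{\overline{xy} - \bar{x}\,\bar{y} + (\bar{x} - h)(\bar{y} - k)}{\overline{x^2} - \bar{x}^2 + (\bar{x} - h)^2} \\
&= \frac{\operatorname{Cov}(x, y) + (\bar{x} - h)(\bar{y} - k)}{\operatorname{Var}(x) + (\bar{x} - h)^2},
\end{aligned}$$

where Cov and Var refer to the covariance and variance of the sample data (uncorrected for bias).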

The last form above demonstrates how moving the line away from the center of mass of the data points affects the slope.
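As a rough illustration of regression through a fixed point, the sketch below computes the slope of the line forced through (h, k), with the origin as the default, and compares it with the covariance-and-variance form of the same estimator. The data and the names `slope_through_point` and `slope_from_moments` are hypothetical.

```python
def slope_through_point(xs, ys, h=0.0, k=0.0):
    """Least-squares slope of the line constrained to pass through (h, k)."""
    num = sum((x - h) * (y - k) for x, y in zip(xs, ys))
    den = sum((x - h) ** 2 for x in xs)
    return num / den


# Hypothetical data, not taken from the article.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]

n = len(xs)
x_bar = sum(xs) / n
y_bar = sum(ys) / n
# Covariance and variance with divisor n (uncorrected for bias).
cov_xy = sum(x * y for x, y in zip(xs, ys)) / n - x_bar * y_bar
var_x = sum(x * x for x in xs) / n - x_bar ** 2


def slope_from_moments(h, k):
    """Same slope, written in terms of Cov, Var and the offset from the centroid."""
    return (cov_xy + (x_bar - h) * (y_bar - k)) / (var_x + (x_bar - h) ** 2)


beta_origin = slope_through_point(xs, ys)                  # line through (0, 0)
beta_centroid = slope_through_point(xs, ys, x_bar, y_bar)  # ordinary OLS slope
```

Choosing (h, k) equal to the center of mass makes the correction terms vanish, so the constrained slope coincides with the ordinary least-squares slope.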

Model-based properties

Description of the statistical properties of estimators from the simple linear regression estimates requires the use of a statistical model. The following is based on assuming the validity of a model under which the estimates are optimal. It is also possible to evaluate the properties under other assumptions, such as inhomogeneity, but this is discussed elsewhere.

Unbiasedness

The estimators $\hat{\alpha}$ and $\hat{\beta}$ are unbiased.
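Unbiasedness means that, under a model with zero-mean errors, the least-squares estimates average out to the true coefficients over repeated samples. The following is a minimal simulation sketch of that property. The true coefficients, the noise level, the fixed seed and the number of trials are all assumptions made for illustration.

```python
import random


def ols(xs, ys):
    """Return (alpha_hat, beta_hat) for the least-squares line."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    beta_hat = (
        sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
        / sum((x - x_bar) ** 2 for x in xs)
    )
    return y_bar - beta_hat * x_bar, beta_hat


rng = random.Random(0)  # fixed seed so the run is repeatable
true_alpha, true_beta, sigma = 1.0, 2.0, 1.0  # assumed model parameters
xs = [float(i) for i in range(10)]            # fixed design points
trials = 2000

alpha_sum = 0.0
beta_sum = 0.0
for _ in range(trials):
    # Draw a fresh sample with independent zero-mean normal errors.
    ys = [true_alpha + true_beta * x + rng.gauss(0.0, sigma) for x in xs]
    a_hat, b_hat = ols(xs, ys)
    alpha_sum += a_hat
    beta_sum += b_hat

mean_alpha = alpha_sum / trials  # should be close to true_alpha
mean_beta = beta_sum / trials    # should be close to true_beta
```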

Confidence intervals

The formulas given in the previous section allow one to calculate the point estimates of α and β, that is, the coefficients of the regression line for the given set of data. However, those formulas do not tell us how precise the estimates are, i.e., how much the estimators $\hat{\alpha}$ and $\hat{\beta}$ vary from sample to sample for a given sample size.

The standard method of constructing confidence intervals for linear regression coefficients relies on the normality assumption, which is justified if either:

- the errors in the regression are normally distributed (the so-called classic regression assumption), or

- the number of observations n is sufficiently large, in which case the estimator is approximately normally distributed.

The latter case is justified by the central limit theorem.

Normality assumption

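Under the first assumption above, that of the normality of the error terms, the estimator of the slope coefficient will itself be normally distributed with mean β and variance $\sigma^2 / \sum_{i=1}^{n} (x_i - \bar{x})^2$, where $\sigma^2$ is the variance of the error terms. Since $\sigma^2$ is unknown in practice, it is estimated from the residuals $\hat{\varepsilon}_i = y_i - \hat{\alpha} - \hat{\beta} x_i$, which gives the t-statistic

$$t = \frac{\hat{\beta} - \beta}{s_{\hat{\beta}}},$$

where

$$s_{\hat{\beta}} = \sqrt{\frac{\frac{1}{n-2} \sum_{i=1}^{n} \hat{\varepsilon}_i^2}{\sum_{i=1}^{n} (x_i - \bar{x})^2}}$$

is the standard error of the estimator $\hat{\beta}$.

This t-statistic has a Student's t-distribution with n − 2 degrees of freedom. Using it we can construct a confidence interval for β:

$$\beta \in \left[\hat{\beta} - s_{\hat{\beta}}\, t^*_{n-2},\ \hat{\beta} + s_{\hat{\beta}}\, t^*_{n-2}\right]$$

at confidence level (1 − γ), where $t^*_{n-2}$ is the $(1 - \gamma/2)$ quantile of the $t_{n-2}$ distribution. For example, if γ = 0.05 then the confidence level is 95%.

Similarly, the confidence interval for the intercept coefficient α is given by

$$\alpha \in \left[\hat{\alpha} - s_{\hat{\alpha}}\, t^*_{n-2},\ \hat{\alpha} + s_{\hat{\alpha}}\, t^*_{n-2}\right]$$

at confidence level (1 − γ), where

$$s_{\hat{\alpha}} = s_{\hat{\beta}} \sqrt{\frac{1}{n} \sum_{i=1}^{n} x_i^2} = \sqrt{\frac{1}{n(n-2)} \left(\sum_{i=1}^{n} \hat{\varepsilon}_i^2\right) \frac{\sum_{i=1}^{n} x_i^2}{\sum_{i=1}^{n} (x_i - \bar{x})^2}}.$$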
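The interval construction can be sketched in a few lines. The standard library has no Student's t quantile function, so the critical value is passed in as a parameter. The example uses n = 15 hypothetical points so that the 0.975 quantile for 13 degrees of freedom, 2.1604, applies. The function name `coefficient_intervals` and the data are assumptions for illustration.

```python
import math


def coefficient_intervals(xs, ys, t_star):
    """Return ((alpha_lo, alpha_hi), (beta_lo, beta_hi)) for the given critical value."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    s_xx = sum((x - x_bar) ** 2 for x in xs)
    beta_hat = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / s_xx
    alpha_hat = y_bar - beta_hat * x_bar
    # Residual sum of squares, divided by n - 2 to estimate the error variance.
    rss = sum((y - alpha_hat - beta_hat * x) ** 2 for x, y in zip(xs, ys))
    s_beta = math.sqrt(rss / (n - 2) / s_xx)
    s_alpha = s_beta * math.sqrt(sum(x * x for x in xs) / n)
    alpha_ci = (alpha_hat - t_star * s_alpha, alpha_hat + t_star * s_alpha)
    beta_ci = (beta_hat - t_star * s_beta, beta_hat + t_star * s_beta)
    return alpha_ci, beta_ci


# Hypothetical data: y = 1 + 2x plus a small repeating disturbance, n = 15.
xs = [float(i) for i in range(1, 16)]
noise = [0.5, -0.5, 0.0] * 5
ys = [1.0 + 2.0 * x + e for x, e in zip(xs, noise)]

T_STAR_13 = 2.1604  # 0.975 quantile of Student's t with 13 degrees of freedom
alpha_ci, beta_ci = coefficient_intervals(xs, ys, T_STAR_13)
```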

The confidence intervals for α and β give a general idea of where these regression coefficients are most likely to be. For example, in the Okun's law regression shown at the beginning of the article the point estimates are

The 95% confidence intervals for these estimates are

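In order to represent this information graphically, in the form of the confidence bands around the regression line, one has to proceed carefully and account for the joint distribution of the estimators. It can be shown that at confidence level (1 − γ) the confidence band has hyperbolic form given by the equation

$$\hat{y}\big|_{x=\xi} \in \left[\hat{\alpha} + \hat{\beta}\xi \pm t^*_{n-2} \sqrt{\left(\frac{1}{n-2} \sum_{i=1}^{n} \hat{\varepsilon}_i^2\right) \left(\frac{1}{n} + \frac{(\xi - \bar{x})^2}{\sum_{i=1}^{n} (x_i - \bar{x})^2}\right)}\,\right],$$

where ξ is the value of x at which the band is evaluated, $\hat{\varepsilon}_i = y_i - \hat{\alpha} - \hat{\beta} x_i$ are the residuals, and $t^*_{n-2}$ is the $(1 - \gamma/2)$ quantile of Student's t-distribution with n − 2 degrees of freedom.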
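The hyperbolic shape of the band can be seen by evaluating its width at several points. The sketch below computes the band around the fitted line at a chosen x value. The critical value is supplied by the caller, and the data and the function name `confidence_band` are hypothetical.

```python
import math


def confidence_band(xs, ys, xi, t_star):
    """Return (lower, upper) limits of the band around the fitted line at x = xi."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    s_xx = sum((x - x_bar) ** 2 for x in xs)
    beta_hat = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / s_xx
    alpha_hat = y_bar - beta_hat * x_bar
    rss = sum((y - alpha_hat - beta_hat * x) ** 2 for x, y in zip(xs, ys))
    # Half-width grows with the squared distance of xi from the mean of x.
    half_width = t_star * math.sqrt(
        rss / (n - 2) * (1.0 / n + (xi - x_bar) ** 2 / s_xx)
    )
    center = alpha_hat + beta_hat * xi
    return center - half_width, center + half_width


# Hypothetical data: y = 1 + 2x plus a small repeating disturbance, n = 15.
xs = [float(i) for i in range(1, 16)]
noise = [0.5, -0.5, 0.0] * 5
ys = [1.0 + 2.0 * x + e for x, e in zip(xs, noise)]
T_STAR_13 = 2.1604  # 0.975 quantile of Student's t with 13 degrees of freedom

x_mean = sum(xs) / len(xs)
lo_mid, hi_mid = confidence_band(xs, ys, x_mean, T_STAR_13)
lo_edge, hi_edge = confidence_band(xs, ys, x_mean + 7.0, T_STAR_13)
width_mid = hi_mid - lo_mid     # narrowest point of the band
width_edge = hi_edge - lo_edge  # wider away from the mean of x
```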

Asymptotic assumption

The alternative second assumption states that when the number of points in the dataset is "large enough", the law of large numbers and the central limit theorem become applicable, and then the distribution of the estimators is approximately normal. Under this assumption all formulas derived in the previous section remain valid, with the only exception that the quantile $t^*_{n-2}$ of Student's t-distribution is replaced with the quantile $q^*$ of the standard normal distribution. Occasionally the fraction 1/(n − 2) is replaced with 1/n. When n is large such a change does not alter the results appreciably.
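To give a feel for the size of this substitution, the sketch below obtains the standard normal quantile from Python's `statistics.NormalDist` and compares it with the Student's t quantile for 13 degrees of freedom (2.1604, the value used in the numerical example). The choice γ = 0.05 and n = 15 is an assumption for illustration.

```python
from statistics import NormalDist

gamma = 0.05  # corresponds to a 95% confidence level
n = 15

# Quantile of the standard normal distribution at 1 - gamma / 2.
q_star = NormalDist().inv_cdf(1.0 - gamma / 2.0)

# Student's t quantile for n - 2 = 13 degrees of freedom.
t_star_13 = 2.1604

# Relative widening of the interval when the t quantile is used instead.
quantile_ratio = t_star_13 / q_star

# Relative effect of dividing the residual sum of squares by n instead of n - 2.
variance_ratio = (1.0 / n) / (1.0 / (n - 2))
```

For a sample this small the two quantiles differ by roughly ten percent, so the normal approximation is better suited to larger n.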

Numerical example

This example concerns the data set from the ordinary least squares article. This data set gives average masses for women as a function of their height in a sample of American women of age 30–39. Although the OLS article argues that it would be more appropriate to run a quadratic regression for this data, the simple linear regression model is applied here instead.

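There are n = 15 points in this data set. Hand calculations would be started by finding the following five sums:

$$S_x = \sum x_i, \quad S_y = \sum y_i, \quad S_{xx} = \sum x_i^2, \quad S_{xy} = \sum x_i y_i, \quad S_{yy} = \sum y_i^2.$$

These quantities would be used to calculate the estimates of the regression coefficients and their standard errors:

$$\hat{\beta} = \frac{n S_{xy} - S_x S_y}{n S_{xx} - S_x^2}, \qquad \hat{\alpha} = \frac{1}{n} S_y - \hat{\beta}\,\frac{1}{n} S_x,$$

$$s_\varepsilon^2 = \frac{1}{n(n-2)} \left[n S_{yy} - S_y^2 - \hat{\beta}^2 \left(n S_{xx} - S_x^2\right)\right],$$

$$s_{\hat{\beta}}^2 = \frac{n\, s_\varepsilon^2}{n S_{xx} - S_x^2}, \qquad s_{\hat{\alpha}}^2 = s_{\hat{\beta}}^2\,\frac{1}{n} S_{xx}.$$

The 0.975 quantile of Student's t-distribution with 13 degrees of freedom is $t^*_{13} = 2.1604$, and thus the 95% confidence intervals for α and β are

$$\alpha \in \left[\hat{\alpha} \mp t^*_{13}\, s_{\hat{\alpha}}\right], \qquad \beta \in \left[\hat{\beta} \mp t^*_{13}\, s_{\hat{\beta}}\right].$$

The product-moment correlation coefficient might also be calculated:

$$r = \frac{n S_{xy} - S_x S_y}{\sqrt{\left(n S_{xx} - S_x^2\right)\left(n S_{yy} - S_y^2\right)}}.$$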
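The hand calculation from five sums translates directly into code. The sketch below is an illustration only: it uses a small hypothetical data set rather than the height and mass data discussed here, and the function name `estimates_from_sums` is an assumption.

```python
import math


def estimates_from_sums(n, s_x, s_y, s_xx, s_xy, s_yy):
    """Regression estimates computed only from n and the five raw sums."""
    beta_hat = (n * s_xy - s_x * s_y) / (n * s_xx - s_x ** 2)
    alpha_hat = s_y / n - beta_hat * s_x / n
    # Estimated error variance, using n - 2 degrees of freedom.
    s_eps2 = (n * s_yy - s_y ** 2 - beta_hat ** 2 * (n * s_xx - s_x ** 2)) / (n * (n - 2))
    s_beta2 = n * s_eps2 / (n * s_xx - s_x ** 2)
    s_alpha2 = s_beta2 * s_xx / n
    r = (n * s_xy - s_x * s_y) / math.sqrt(
        (n * s_xx - s_x ** 2) * (n * s_yy - s_y ** 2)
    )
    return {
        "alpha": alpha_hat,
        "beta": beta_hat,
        "se_alpha": math.sqrt(s_alpha2),
        "se_beta": math.sqrt(s_beta2),
        "r": r,
    }


# Hypothetical data, not the data set from the article.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]

result = estimates_from_sums(
    n=len(xs),
    s_x=sum(xs),
    s_y=sum(ys),
    s_xx=sum(x * x for x in xs),
    s_xy=sum(x * y for x, y in zip(xs, ys)),
    s_yy=sum(y * y for y in ys),
)
```

Working from raw sums is convenient by hand, but it subtracts large, nearly equal numbers, so the centered formulas are usually the safer choice in floating-point code.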

This example also demonstrates that sophisticated calculations will not overcome the use of badly prepared data. The heights were originally given in inches, and have been converted to the nearest centimetre. Since one inch is 2.54 cm, rounding to the nearest centimetre is not an exact conversion. The original inches can be recovered by Round(x/0.0254) and then re-converted to metric: if this is done, the results become

Thus a seemingly small variation in the data has a real effect.
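The recovery step can be sketched as follows, assuming heights are stored in metres as the expression Round(x/0.0254) implies. The sample height of 1.47 m and the function names are hypothetical.

```python
METRES_PER_INCH = 0.0254


def recover_inches(height_m):
    """Recover the whole number of inches from a height rounded to the nearest cm."""
    return round(height_m / METRES_PER_INCH)


def inches_to_metres(inches):
    """Convert whole inches back to metres without further rounding."""
    return inches * METRES_PER_INCH


rounded_height_m = 1.47                    # hypothetical value, rounded to the nearest cm
inches = recover_inches(rounded_height_m)  # whole inches of the original measurement
exact_height_m = inches_to_metres(inches)  # unrounded metric height
rounding_error_m = exact_height_m - rounded_height_m
```

Each recovered height differs from its rounded counterpart by at most half a centimetre, yet the differences are not uniform across observations, which is why refitting on the recovered values changes the estimates.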

Derivation of simple regression estimators

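We look for $\hat{\alpha}$ and $\hat{\beta}$ that minimize the sum of squared errors (SSE):

$$\min_{\hat{\alpha},\,\hat{\beta}} \operatorname{SSE}(\hat{\alpha}, \hat{\beta}) \equiv \min_{\hat{\alpha},\,\hat{\beta}} \sum_{i=1}^{n} (y_i - \hat{\alpha} - \hat{\beta} x_i)^2.$$

To find a minimum take partial derivatives with respect to $\hat{\alpha}$ and $\hat{\beta}$:

$$\begin{aligned}
\frac{\partial}{\partial \hat{\alpha}} \operatorname{SSE}(\hat{\alpha}, \hat{\beta}) &= -2 \sum_{i=1}^{n} (y_i - \hat{\alpha} - \hat{\beta} x_i) = 0 \\
\Rightarrow\ \sum_{i=1}^{n} y_i &= n\hat{\alpha} + \hat{\beta} \sum_{i=1}^{n} x_i \\
\Rightarrow\ \bar{y} &= \hat{\alpha} + \hat{\beta}\,\bar{x}.
\end{aligned}$$

Before taking the partial derivative with respect to $\hat{\beta}$, substitute the previous result for $\hat{\alpha}$:

$$\min_{\hat{\beta}} \sum_{i=1}^{n} \left[y_i - (\bar{y} - \hat{\beta}\,\bar{x}) - \hat{\beta} x_i\right]^2 = \min_{\hat{\beta}} \sum_{i=1}^{n} \left[(y_i - \bar{y}) - \hat{\beta}(x_i - \bar{x})\right]^2.$$

Now, take the derivative with respect to $\hat{\beta}$:

$$\begin{aligned}
\frac{\partial}{\partial \hat{\beta}} \operatorname{SSE}(\hat{\alpha}, \hat{\beta}) &= -2 \sum_{i=1}^{n} \left[(y_i - \bar{y}) - \hat{\beta}(x_i - \bar{x})\right](x_i - \bar{x}) = 0 \\
\Rightarrow\ \sum_{i=1}^{n} (y_i - \bar{y})(x_i - \bar{x}) &- \hat{\beta} \sum_{i=1}^{n} (x_i - \bar{x})^2 = 0 \\
\Rightarrow\ \hat{\beta} &= \frac{\sum_{i=1}^{n} (y_i - \bar{y})(x_i - \bar{x})}{\sum_{i=1}^{n} (x_i - \bar{x})^2}.
\end{aligned}$$

And finally substitute $\hat{\beta}$ to determine $\hat{\alpha}$:

$$\hat{\alpha} = \bar{y} - \hat{\beta}\,\bar{x}.$$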
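The first-order conditions of this derivation can be verified numerically: at the least-squares estimates both partial derivatives of the sum of squared errors should vanish, and moving away from the estimates should increase the sum. The sketch below is a check on hypothetical data, with function names chosen for illustration.

```python
def sse(alpha, beta, xs, ys):
    """Sum of squared errors of the line y = alpha + beta * x."""
    return sum((y - alpha - beta * x) ** 2 for x, y in zip(xs, ys))


def sse_gradient(alpha, beta, xs, ys):
    """Partial derivatives of the SSE with respect to alpha and beta."""
    d_alpha = -2.0 * sum(y - alpha - beta * x for x, y in zip(xs, ys))
    d_beta = -2.0 * sum((y - alpha - beta * x) * x for x, y in zip(xs, ys))
    return d_alpha, d_beta


# Hypothetical data, not taken from the article.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]

n = len(xs)
x_bar = sum(xs) / n
y_bar = sum(ys) / n
beta_hat = (
    sum((y - y_bar) * (x - x_bar) for x, y in zip(xs, ys))
    / sum((x - x_bar) ** 2 for x in xs)
)
alpha_hat = y_bar - beta_hat * x_bar

grad_alpha, grad_beta = sse_gradient(alpha_hat, beta_hat, xs, ys)
sse_at_estimate = sse(alpha_hat, beta_hat, xs, ys)
sse_perturbed = sse(alpha_hat + 0.1, beta_hat - 0.1, xs, ys)
```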