
Quantum mechanics (QM; also known as quantum physics or quantum theory), including quantum field theory, is the fundamental theory in physics that describes nature at the small scales and low energies of atoms and subatomic particles. Classical physics, the physics that existed before quantum mechanics, can be derived from quantum mechanics as an approximation valid only at large (macroscopic) scales. Quantum mechanics differs from classical physics in three ways. Energy, momentum and other quantities are often restricted to discrete values (quantization). Objects have characteristics of both particles and waves (wave–particle duality). There are limits to the precision with which quantities can be known (the uncertainty principle).

Contents

- History

- Mathematical formulations

- Mathematically equivalent formulations of quantum mechanics

- Interactions with other scientific theories

- Quantum mechanics and classical physics

- Copenhagen interpretation of quantum versus classical kinematics

- General relativity and quantum mechanics

- Attempts at a unified field theory

- Philosophical implications

- Applications

- Electronics

- Cryptography

- Quantum computing

- Macroscale quantum effects

- Quantum theory

- Free particle

- Step potential

- Rectangular potential barrier

- Particle in a box

- Finite potential well

- Harmonic oscillator

- References

Quantum mechanics gradually arose from two developments. The first was Max Planck's 1900 solution to the black-body radiation problem (first posed in 1859). The second was Albert Einstein's 1905 paper, which offered a quantum-based theory to explain the photoelectric effect (first reported in 1887). Early quantum theory was profoundly reconceived in the mid-1920s.

The reconceived theory is formulated in various specially developed mathematical formalisms. In one of them, a mathematical function, the wave function, provides information about the probability amplitude of position, momentum, and other physical properties of a particle.

Important applications of quantum theory include:
- quantum chemistry
- superconducting magnets
- light-emitting diodes and lasers
- transistors and other semiconductor devices such as the microprocessor
- medical and research imaging such as magnetic resonance imaging and electron microscopy

It also explains many biological and physical phenomena.

History

Scientific inquiry into the wave nature of light began in the 17th and 18th centuries, when scientists such as Robert Hooke, Christiaan Huygens and Leonhard Euler proposed a wave theory of light based on experimental observations. In 1803, Thomas Young, an English polymath, performed the famous double-slit experiment that he later described in a paper titled On the nature of light and colours. This experiment played a major role in the general acceptance of the wave theory of light.

In 1838, Michael Faraday discovered cathode rays. These studies were followed by the 1859 statement of the black-body radiation problem by Gustav Kirchhoff, the 1877 suggestion by Ludwig Boltzmann that the energy states of a physical system can be discrete, and the 1900 quantum hypothesis of Max Planck. Planck's hypothesis that energy is radiated and absorbed in discrete "quanta" (or energy packets) precisely matched the observed patterns of black-body radiation.

In 1896, Wilhelm Wien empirically determined a distribution law of black-body radiation, known as Wien's law in his honor. Ludwig Boltzmann independently arrived at this result by considerations of Maxwell's equations. However, Wien's law was valid only at high frequencies and underestimated the radiance at low frequencies. Planck later corrected this model using Boltzmann's statistical interpretation of thermodynamics. The result, now called Planck's law, led to the development of quantum mechanics.
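The high- and low-frequency behaviour described above can be checked numerically. The following minimal Python sketch evaluates Planck's law and Wien's approximation for the spectral radiance of a black body. The function names are illustrative, and the 5000 K temperature is an arbitrary example value. The ratio of Wien's value to Planck's is 1 − e^(−hν/kT). That ratio tends to 1 when hν ≫ kT and falls toward 0 when hν ≪ kT.

```python
import math

# Exact SI values of the constants (2019 redefinition)
H = 6.62607015e-34      # Planck constant, J*s
K_B = 1.380649e-23      # Boltzmann constant, J/K
C = 299_792_458.0       # speed of light, m/s


def planck_radiance(nu: float, temp: float) -> float:
    """Spectral radiance B_nu(T) from Planck's law, W sr^-1 m^-2 Hz^-1."""
    x = H * nu / (K_B * temp)
    return 2 * H * nu**3 / C**2 / math.expm1(x)   # expm1(x) = e^x - 1


def wien_radiance(nu: float, temp: float) -> float:
    """Wien's approximation: 1/(e^x - 1) in Planck's law replaced by e^-x."""
    x = H * nu / (K_B * temp)
    return 2 * H * nu**3 / C**2 * math.exp(-x)


if __name__ == "__main__":
    temp = 5000.0  # kelvin, arbitrary example temperature
    for nu in (1e12, 1e13, 1e14, 1e15):
        ratio = wien_radiance(nu, temp) / planck_radiance(nu, temp)
        print(f"nu = {nu:.0e} Hz   Wien/Planck = {ratio:.4f}")
```

At 5000 K the ratio is below 0.01 at 10¹² Hz and within 10⁻⁴ of 1 at 10¹⁵ Hz. Wien's law therefore always underestimates the radiance, and it fails badly at low frequencies.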

Following Max Planck's solution in 1900 to the black-body radiation problem (first posed in 1859), Albert Einstein offered a quantum-based theory to explain the photoelectric effect (1905; the effect was first reported in 1887). Around 1900–1910, the atomic theory and the corpuscular theory of light first came to be widely accepted as scientific fact. These theories can be viewed as quantum theories of matter and of electromagnetic radiation, respectively.

Among the first to study quantum phenomena in nature were Arthur Compton, C. V. Raman, and Pieter Zeeman, each of whom has a quantum effect named after him. Robert Andrews Millikan studied the photoelectric effect experimentally, and Albert Einstein developed a theory for it. At the same time, Ernest Rutherford experimentally discovered the nuclear model of the atom, for which Niels Bohr developed his theory of the atomic structure, which was later confirmed by the experiments of Henry Moseley. In 1913, Peter Debye extended Niels Bohr's theory of atomic structure, introducing elliptical orbits, a concept also introduced by Arnold Sommerfeld. This phase is known as old quantum theory.

According to Planck, each energy element (E) is proportional to its frequency (ν):

$$E = h\nu$$

where h is Planck's constant.
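As a worked example of Planck's relation E = hν, the sketch below computes the energy of a single quantum of light from its wavelength, using ν = c/λ. The 500 nm wavelength is an arbitrary example of visible (green) light. The helper names are illustrative.

```python
# Exact SI values (2019 redefinition)
H = 6.62607015e-34          # Planck constant, J*s
C = 299_792_458.0           # speed of light, m/s
EV = 1.602176634e-19        # joules per electronvolt


def quantum_energy_joules(frequency_hz: float) -> float:
    """Energy of one quantum, E = h * nu."""
    return H * frequency_hz


def frequency_from_wavelength(wavelength_m: float) -> float:
    """nu = c / lambda for light in vacuum."""
    return C / wavelength_m


if __name__ == "__main__":
    nu = frequency_from_wavelength(500e-9)   # 500 nm, example value
    e = quantum_energy_joules(nu)
    print(f"nu = {nu:.3e} Hz, E = {e:.3e} J = {e / EV:.3f} eV")
```

For 500 nm light this gives about 4.0 × 10⁻¹⁹ J, or roughly 2.5 eV per quantum. A single quantum is therefore tiny on everyday energy scales.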

Planck cautiously insisted that this was simply an aspect of the processes of absorption and emission of radiation and had nothing to do with the physical reality of the radiation itself. In fact, he considered his quantum hypothesis a mathematical trick to get the right answer rather than a significant discovery. However, in 1905 Albert Einstein interpreted Planck's quantum hypothesis realistically. He used it to explain the photoelectric effect, in which shining light on certain materials can eject electrons from the material. He won the 1921 Nobel Prize in Physics for this work.
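Einstein's explanation can be summarized by his photoelectric equation. Each quantum of energy hν is absorbed by a single electron. The electron escapes only if hν exceeds the material's work function φ, and it then leaves with a maximum kinetic energy of hν − φ. The sketch below applies this relation. The 2.3 eV work function is an assumed illustrative value, of the order found for alkali metals, and is not taken from the text.

```python
from typing import Optional

H_EV = 4.135667696e-15   # Planck constant in eV*s
C = 299_792_458.0        # speed of light, m/s


def photon_energy_ev(wavelength_m: float) -> float:
    """E = h*nu = h*c/lambda, in electronvolts."""
    return H_EV * C / wavelength_m


def max_kinetic_energy_ev(wavelength_m: float, work_function_ev: float) -> Optional[float]:
    """Einstein's relation K_max = h*nu - phi; None means no electrons are ejected."""
    k_max = photon_energy_ev(wavelength_m) - work_function_ev
    return k_max if k_max > 0 else None


def threshold_wavelength_m(work_function_ev: float) -> float:
    """Longest wavelength that can still eject electrons: lambda = h*c/phi."""
    return H_EV * C / work_function_ev


if __name__ == "__main__":
    phi = 2.3  # assumed work function in eV (illustrative value)
    for wl in (400e-9, 500e-9, 700e-9):
        print(f"{wl * 1e9:.0f} nm -> K_max = {max_kinetic_energy_ev(wl, phi)}")
    print(f"threshold wavelength = {threshold_wavelength_m(phi) * 1e9:.1f} nm")
```

Below the threshold frequency no electrons are emitted, however intense the light. Raising the intensity increases the number of quanta, not the energy of each one.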

Einstein further developed this idea to show that an electromagnetic wave such as light could also be described as a particle (later called the photon), with a discrete quantum of energy that was dependent on its frequency.

The foundations of quantum mechanics were established during the first half of the 20th century by Max Planck, Niels Bohr, Werner Heisenberg, Louis de Broglie, Arthur Compton, Albert Einstein, Erwin Schrödinger, Max Born, John von Neumann, Paul Dirac, Enrico Fermi, Wolfgang Pauli, Max von Laue, Freeman Dyson, David Hilbert, Wilhelm Wien, Satyendra Nath Bose, Arnold Sommerfeld, and others. The Copenhagen interpretation of Niels Bohr became widely accepted.

In the mid-1920s, developments in quantum mechanics led to its becoming the standard formulation for atomic physics. In the summer of 1925, Bohr and Heisenberg published results that closed the old quantum theory. Because of their particle-like behavior in certain processes and measurements, light quanta came to be called photons (1926). Einstein's simple postulate gave rise to a flurry of debate, theorizing, and testing. Out of this the field of quantum physics emerged, and it gained wider acceptance at the Fifth Solvay Conference in 1927.

It was found that subatomic particles and electromagnetic waves are neither simply particle nor wave but have certain properties of each. This gave rise to the concept of wave–particle duality.

By 1930, quantum mechanics had been further unified and formalized by the work of David Hilbert, Paul Dirac and John von Neumann with greater emphasis on measurement, the statistical nature of our knowledge of reality, and philosophical speculation about the 'observer'. It has since permeated many disciplines including quantum chemistry, quantum electronics, quantum optics, and quantum information science. Its speculative modern developments include string theory and quantum gravity theories. It also provides a useful framework for many features of the modern periodic table of elements, and describes the behaviors of atoms during chemical bonding and the flow of electrons in computer semiconductors, and therefore plays a crucial role in many modern technologies.

While quantum mechanics was constructed to describe the world of the very small, it is also needed to explain some macroscopic phenomena such as superconductors and superfluids.

The word quantum derives from the Latin, meaning "how great" or "how much". In quantum mechanics, it refers to a discrete unit assigned to certain physical quantities such as the energy of an atom at rest (see Figure 1). The discovery that particles are discrete packets of energy with wave-like properties led to the branch of physics dealing with atomic and subatomic systems which is today called quantum mechanics. It underlies the mathematical framework of many fields of physics and chemistry, including condensed matter physics, solid-state physics, atomic physics, molecular physics, computational physics, computational chemistry, quantum chemistry, particle physics, nuclear chemistry, and nuclear physics. Some fundamental aspects of the theory are still actively studied.

Quantum mechanics is essential to understanding the behavior of systems at atomic length scales and smaller. Suppose the physical nature of an atom were described solely by classical mechanics. Then electrons could not orbit the nucleus stably. An orbiting electron is continuously accelerating, so it would emit electromagnetic radiation, lose energy, and eventually collide with the nucleus. This framework was therefore unable to explain the stability of atoms. Instead, electrons occupy probabilistic orbitals about the nucleus, described by wave functions rather than definite trajectories. This defies the traditional assumptions of classical mechanics and electromagnetism.
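The classical instability can be quantified. Assume a non-relativistic electron on a circular orbit that radiates according to the Larmor formula. The standard textbook estimate for the time it takes to spiral from the Bohr radius a₀ into the nucleus is then t = a₀³ / (4 r_e² c), where r_e is the classical electron radius. The sketch below evaluates this estimate with CODATA constants. It neglects relativistic corrections near the end of the spiral.

```python
# CODATA 2018 values
A0 = 5.29177210903e-11    # Bohr radius, m
R_E = 2.8179403262e-15    # classical electron radius, m
C = 299_792_458.0         # speed of light, m/s


def classical_infall_time(r0: float = A0) -> float:
    """Time for a classical radiating electron to spiral from radius r0 to r = 0.

    Integrates dr/dt = -(4/3) * r_e**2 * c / r**2, which follows from the
    Larmor power radiated by an electron on a circular Coulomb orbit.
    """
    return r0**3 / (4 * R_E**2 * C)


if __name__ == "__main__":
    print(f"classical collapse time from a0: {classical_infall_time():.2e} s")
```

The result is about 1.6 × 10⁻¹¹ s. On the classical picture, atoms would therefore collapse almost instantly.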

Quantum mechanics was initially developed to provide a better explanation and description of the atom, especially the differences in the spectra of light emitted by different isotopes of the same chemical element, and of subatomic particles. In short, the quantum-mechanical atomic model has succeeded spectacularly in the realm where classical mechanics and electromagnetism falter.
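The sketch below shows how quantized energy levels produce discrete spectral lines. It assumes the standard hydrogen-like levels E_n = −Ry·(μ/m_e)/n², where Ry ≈ 13.606 eV is the Rydberg energy and μ is the reduced mass of the electron–nucleus pair. A level difference ΔE is converted to a vacuum wavelength with λ = hc/ΔE. The reduced-mass factor makes the same transition fall at slightly different wavelengths for hydrogen and deuterium. Constant names are illustrative.

```python
RYDBERG_EV = 13.605693122994                 # Rydberg energy (infinite nuclear mass), eV
HC_EV_NM = 1239.841984                       # h*c in eV*nm
ELECTRON_PROTON_RATIO = 1 / 1836.15267343    # m_e / m_p
ELECTRON_DEUTERON_RATIO = 1 / 3670.48296788  # m_e / m_d


def level_energy_ev(n: int, mass_ratio: float = 0.0) -> float:
    """E_n = -Ry * (mu / m_e) / n^2, with mu / m_e = 1 / (1 + m_e / M)."""
    return -RYDBERG_EV / (1 + mass_ratio) / n**2


def transition_wavelength_nm(n_upper: int, n_lower: int, mass_ratio: float = 0.0) -> float:
    """Vacuum wavelength of the photon emitted in the jump n_upper -> n_lower."""
    delta_e = level_energy_ev(n_upper, mass_ratio) - level_energy_ev(n_lower, mass_ratio)
    return HC_EV_NM / delta_e


if __name__ == "__main__":
    # First three Balmer lines (jumps ending on n = 2) for both isotopes
    for name, ratio in (("H", ELECTRON_PROTON_RATIO), ("D", ELECTRON_DEUTERON_RATIO)):
        lines = [transition_wavelength_nm(n, 2, ratio) for n in (3, 4, 5)]
        print(name, [f"{wl:.2f} nm" for wl in lines])
```

For the 3 → 2 line, the hydrogen and deuterium values differ by roughly 0.18 nm. This is a small isotope shift of exactly the kind classical physics could not explain.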

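Broadly speaking, quantum mechanics incorporates four classes of phenomena for which classical physics cannot account:

- quantization of certain physical properties

- quantum entanglement

- the uncertainty principle

- wave–particle duality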

Mathematical formulations

In the mathematically rigorous formulation of quantum mechanics developed by Paul Dirac, David Hilbert, John von Neumann, and Hermann Weyl, the possible states of a quantum mechanical system are symbolized as unit vectors (called state vectors). Formally, these reside in a complex separable Hilbert space, variously called the state space or the associated Hilbert space of the system. Each state vector is well defined only up to a complex number of norm 1 (the phase factor). In other words, the possible states are points in the projective space of a Hilbert space, usually called the complex projective space. The exact nature of this Hilbert space depends on the system. For example, the state space for position and momentum states is the space of square-integrable functions. By contrast, the state space for the spin of a single proton is just the two-dimensional complex vector space ℂ², the product of two complex planes. Each observable is represented by a self-adjoint (Hermitian) linear operator acting on the state space. Each eigenstate of an observable corresponds to an eigenvector of the operator, and the associated eigenvalue corresponds to the value of the observable in that eigenstate. If the operator's spectrum is discrete, the observable can attain only those discrete eigenvalues.
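A minimal pure-Python sketch of these ideas for a single spin-1/2, whose state space is ℂ², follows. It shows three things. A state is a normalized pair of complex amplitudes. Multiplying it by a phase factor leaves the measurement probabilities unchanged. A self-adjoint 2×2 matrix has real eigenvalues. The Pauli x matrix, taken in units of ħ/2, serves as an example observable. The helper names are illustrative.

```python
import cmath
import math

# Pauli x matrix: an example spin observable (in units of hbar/2)
SIGMA_X = [[0j, 1 + 0j], [1 + 0j, 0j]]


def normalize(state):
    """Scale a list of complex amplitudes to a unit vector."""
    norm = math.sqrt(sum(abs(a) ** 2 for a in state))
    return [a / norm for a in state]


def z_basis_probabilities(state):
    """Probability of each basis outcome: squared modulus of each amplitude."""
    return [abs(a) ** 2 for a in state]


def is_self_adjoint(m, tol=1e-12):
    """True if m equals its conjugate transpose."""
    n = len(m)
    return all(abs(m[i][j] - m[j][i].conjugate()) < tol for i in range(n) for j in range(n))


def eigenvalues_2x2_hermitian(m):
    """Closed form for [[a, b], [conj(b), d]] with a, d real; both roots are real."""
    a, d, b = m[0][0].real, m[1][1].real, m[0][1]
    mean = (a + d) / 2
    radius = math.sqrt(((a - d) / 2) ** 2 + abs(b) ** 2)
    return mean - radius, mean + radius


if __name__ == "__main__":
    psi = normalize([1 + 0j, 1j])
    psi_phase = [cmath.exp(0.7j) * a for a in psi]  # same ray, different phase factor
    print(z_basis_probabilities(psi), z_basis_probabilities(psi_phase))
    print(is_self_adjoint(SIGMA_X), eigenvalues_2x2_hermitian(SIGMA_X))
```

The sketch checks phase invariance only for a z-basis measurement. The same cancellation of |e^{iθ}|² = 1 happens for any observable.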

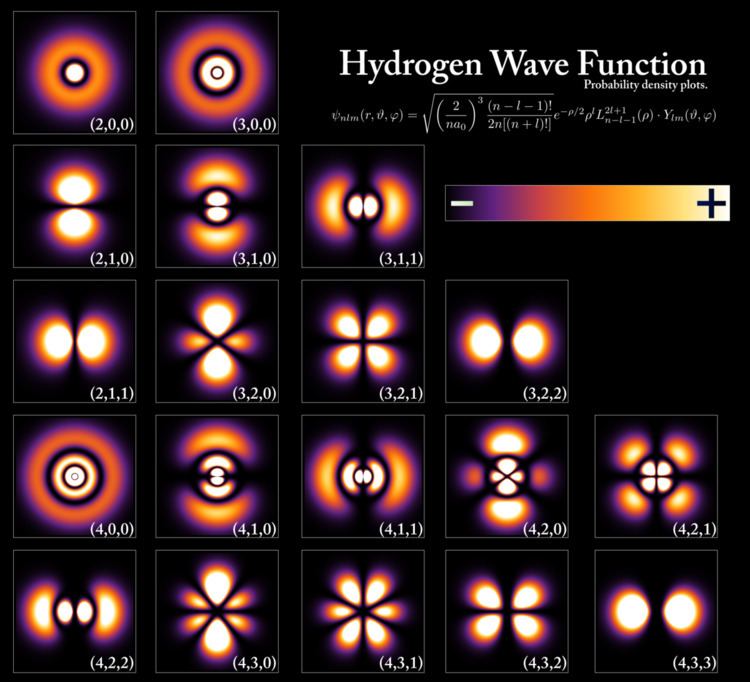

In the formalism of quantum mechanics, the state of a system at a given time is described by a complex wave function, also referred to as a state vector in a complex vector space. This abstract mathematical object allows for the calculation of probabilities of outcomes of concrete experiments. For example, it allows one to compute the probability of finding an electron in a particular region around the nucleus at a particular time. Contrary to classical mechanics, one can never make simultaneous predictions of conjugate variables, such as position and momentum, to arbitrary precision. For instance, electrons may be considered (to a certain probability) to be located somewhere within a given region of space, but with their exact positions unknown. Contours of constant probability, often referred to as "clouds", may be drawn around the nucleus of an atom to show where the electron is most likely to be found. Heisenberg's uncertainty principle quantifies this limitation. The product of the uncertainties in a particle's position and momentum can be no smaller than ħ/2, where ħ = h/2π.
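The uncertainty bound can be checked numerically for a Gaussian wave packet, which is known to reach the minimum Δx·Δp = ħ/2. The sketch below makes several assumptions for brevity. It works in units with ħ = 1 and samples a real Gaussian ψ(x) ∝ exp(−x²/(4σ²)) on a uniform grid. It computes Δx from |ψ|². It computes Δp from ⟨p²⟩ = ħ²∫|ψ′|² dx, since ⟨p⟩ = 0 for a real wave function. The grid size and extent are arbitrary choices.

```python
import math


def gaussian_uncertainty_product(sigma=1.0, half_width=12.0, n=4001, hbar=1.0):
    """Numerically estimate (Delta x)(Delta p) for psi(x) ~ exp(-x^2 / (4 sigma^2))."""
    dx = 2 * half_width / (n - 1)
    xs = [-half_width + i * dx for i in range(n)]
    psi = [math.exp(-x * x / (4 * sigma * sigma)) for x in xs]

    # Normalize so that sum |psi|^2 dx = 1
    norm = math.sqrt(sum(p * p for p in psi) * dx)
    psi = [p / norm for p in psi]

    # <x> = 0 by symmetry, so (Delta x)^2 = <x^2>
    var_x = sum(x * x * p * p for x, p in zip(xs, psi)) * dx

    # Real psi gives <p> = 0, and <p^2> = hbar^2 * integral |dpsi/dx|^2 dx
    dpsi = [(psi[i + 1] - psi[i - 1]) / (2 * dx) for i in range(1, n - 1)]
    var_p = hbar**2 * sum(d * d for d in dpsi) * dx

    return math.sqrt(var_x * var_p)


if __name__ == "__main__":
    for s in (0.5, 1.0):
        print(s, gaussian_uncertainty_product(sigma=s))  # close to hbar/2 = 0.5
```

Narrowing the packet (smaller σ) reduces Δx but increases Δp by the same factor, so the product stays at ħ/2.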

According to one interpretation, as the result of a measurement the wave function containing the probability information for a system collapses from a given initial state to a particular eigenstate. The possible results of a measurement are the eigenvalues of the operator representing the observable—which explains the choice of Hermitian operators, for which all the eigenvalues are real. The probability distribution of an observable in a given state can be found by computing the spectral decomposition of the corresponding operator. Heisenberg's uncertainty principle is represented by the statement that the operators corresponding to certain observables do not commute.
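For a two-level system, the recipe in this paragraph can be carried out by hand. The sketch below measures the Pauli x observable on the spin-up state |0⟩. It uses the spectral decomposition of σx to obtain the outcome probabilities |⟨e_k|ψ⟩|². It then confirms that σx and σz do not commute: σxσz − σzσx = −2iσy. The observable and state are illustrative choices. The matrix helpers are written out only to keep the example dependency-free.

```python
import math

# Pauli matrices (observables for a spin-1/2, in units of hbar/2)
SIGMA_X = [[0, 1], [1, 0]]
SIGMA_Y = [[0, -1j], [1j, 0]]
SIGMA_Z = [[1, 0], [0, -1]]

# Spectral decomposition of sigma_x: eigenvalue -> normalized eigenvector
S = 1 / math.sqrt(2)
SIGMA_X_EIGEN = {+1: [S, S], -1: [S, -S]}


def inner(u, v):
    """<u|v>, conjugating the first argument."""
    return sum(complex(a).conjugate() * b for a, b in zip(u, v))


def outcome_probabilities(eigen, state):
    """Born rule: P(lambda) = |<e_lambda|psi>|^2 for each eigenvalue lambda."""
    return {value: abs(inner(vec, state)) ** 2 for value, vec in eigen.items()}


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)] for i in range(2)]


def commutator(a, b):
    """[A, B] = AB - BA; nonzero means A and B share no common eigenbasis."""
    ab, ba = matmul(a, b), matmul(b, a)
    return [[ab[i][j] - ba[i][j] for j in range(2)] for i in range(2)]


if __name__ == "__main__":
    up = [1, 0]  # spin-up along z
    print(outcome_probabilities(SIGMA_X_EIGEN, up))   # both outcomes about 0.5
    print(commutator(SIGMA_X, SIGMA_Z))               # equals -2i * SIGMA_Y
```

Both possible results of the measurement, ±1, are real eigenvalues, as the paragraph requires of a Hermitian observable.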

The probabilistic nature of quantum mechanics thus stems from the act of measurement. This is one of the most difficult aspects of quantum systems to understand. It was the central topic in the famous Bohr–Einstein debates, in which the two scientists attempted to clarify these fundamental principles by way of thought experiments. Since the formulation of quantum mechanics, the question of what constitutes a "measurement" has been extensively studied. Newer interpretations of quantum mechanics have been formulated that do away with the concept of "wave function collapse" (see, for example, the relative state interpretation). The basic idea is that when a quantum system interacts with a measuring apparatus, their respective wave functions become entangled. As a result, the original quantum system ceases to exist as an independent entity. For details, see the article on measurement in quantum mechanics.
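The entanglement picture can be illustrated with a toy model of two qubits. The simplifying assumption is that the "apparatus" is a single pointer qubit. The pointer starts in |0⟩ and flips if and only if the system is in |1⟩, which is a controlled-NOT interaction. Start the system in (|0⟩ + |1⟩)/√2. The joint state then becomes (|00⟩ + |11⟩)/√2. Tracing out the pointer leaves the system with a diagonal reduced density matrix, so the system no longer has a pure state of its own. The function names are illustrative, and this is a sketch, not a full model of measurement.

```python
import math


def cnot(state):
    """CNOT with the system as control and the pointer as target.

    Amplitudes are indexed as state[2*s + p] for the basis state |s p>.
    """
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


def reduced_density_matrix_of_system(state):
    """rho_S[s][s2] = sum over pointer values p of psi(s, p) * conj(psi(s2, p))."""
    rho = [[0j, 0j], [0j, 0j]]
    for s in range(2):
        for s2 in range(2):
            rho[s][s2] = sum(state[2 * s + p] * state[2 * s2 + p].conjugate() for p in range(2))
    return rho


def purity(rho):
    """Tr(rho^2): 1 for a pure state, 1/2 for a maximally mixed qubit."""
    return sum((rho[i][k] * rho[k][i]).real for i in range(2) for k in range(2))


if __name__ == "__main__":
    amp = 1 / math.sqrt(2)
    before = [amp + 0j, 0j, amp + 0j, 0j]   # system (|0> + |1>)/sqrt(2), pointer |0>
    after = cnot(before)                     # (|00> + |11>)/sqrt(2)
    print("purity before:", purity(reduced_density_matrix_of_system(before)))
    print("purity after: ", purity(reduced_density_matrix_of_system(after)))
```

Before the interaction the system's purity is 1. Afterwards it is 1/2, because the interference (off-diagonal) terms have moved into correlations with the pointer.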

Generally, quantum mechanics does not assign definite values. Instead, it makes a prediction using a probability distribution; that is, it describes the probability of obtaining each possible outcome from measuring an observable. Probability clouds are approximate descriptions, though better than the Bohr model. In them, the electron's location is given by a probability function derived from the wave function: the probability density is the squared modulus of the complex amplitude. Naturally, these probabilities depend on the quantum state at the "instant" of the measurement. Hence, uncertainty is involved in the value. There are, however, certain states that are associated with a definite value of a particular observable. These are known as eigenstates of the observable ("eigen" can be translated from German as meaning "inherent" or "characteristic").

In the everyday world, it is natural and intuitive to think of everything (every observable) as being in an eigenstate. Everything appears to have a definite position, a definite momentum, a definite energy, and a definite time of occurrence. However, quantum mechanics does not pinpoint the exact values of a particle's position and momentum (since they are conjugate pairs) or its energy and time (since they too are conjugate pairs). Rather, it provides only a range of probabilities for where the particle might be found and what momentum it might have. Therefore, it is helpful to use different words to describe states having uncertain values and states having definite values (eigenstates). Usually, a system will not be in an eigenstate of the observable we are interested in. However, if one measures the observable, the wave function instantaneously becomes an eigenstate (or "generalized" eigenstate) of that observable. This process is known as wave function collapse. It is controversial and much debated, and it involves expanding the system under study to include the measurement device. If one knows the wave function at the instant before the measurement, one can compute the probability of its collapsing into each of the possible eigenstates.

For example, a free particle will usually have a wave function that is a wave packet centered around some mean position x0 (neither an eigenstate of position nor of momentum). When one measures the position of the particle, it is impossible to predict the result with certainty. It is probable, but not certain, that it will be near x0, where the amplitude of the wave function is large. After the measurement is performed, having obtained some result x, the wave function collapses into a position eigenstate centered at x.

The time evolution of a quantum state is described by the Schrödinger equation, in which the Hamiltonian (the operator corresponding to the total energy of the system) generates the time evolution. The time evolution of wave functions is deterministic in the sense that, given a wave function at an initial time, it makes a definite prediction of what the wave function will be at any later time.
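In standard notation, with ħ = h/2π and Ĥ the Hamiltonian, the Schrödinger equation reads as follows. For a time-independent Hamiltonian its formal solution is also given:

$$
i\hbar \frac{\partial}{\partial t} |\psi(t)\rangle = \hat{H} |\psi(t)\rangle,
\qquad
|\psi(t)\rangle = e^{-i\hat{H}t/\hbar}\, |\psi(0)\rangle .
$$

The operator e^{−iĤt/ħ} is unitary. It therefore preserves the norm of the state (the total probability) and maps a given initial state to exactly one later state. This is the deterministic character of the evolution described here.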

During a measurement, on the other hand, the change of the initial wave function into another, later wave function is not deterministic; it is unpredictable (i.e., random).

Wave functions change as time progresses. The Schrödinger equation describes how wave functions change in time, playing a role similar to Newton's second law in classical mechanics. The Schrödinger equation, applied to the aforementioned example of the free particle, predicts that the center of a wave packet will move through space at a constant velocity (like a classical particle with no forces acting on it). However, the wave packet will also spread out as time progresses, which means that the position becomes more uncertain with time. This also has the effect of turning a position eigenstate (which can be thought of as an infinitely sharp wave packet) into a broadened wave packet that no longer represents a (definite, certain) position eigenstate.
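The behaviour of a free wave packet can be made quantitative under an idealizing assumption: the packet starts as a minimum-uncertainty Gaussian with position spread σ₀. For such a packet the exact free-particle solution gives a width σ(t) = σ₀·√(1 + (ħt/(2mσ₀²))²). The centre moves at the constant velocity p₀/m. The sketch below evaluates these formulas and the time for the width to double. The function names and example masses are illustrative.

```python
import math

HBAR = 1.054571817e-34     # reduced Planck constant, J*s
M_E = 9.1093837015e-31     # electron mass, kg


def packet_width(t, sigma0, mass, hbar=HBAR):
    """Position spread of a free Gaussian wave packet at time t."""
    return sigma0 * math.sqrt(1 + (hbar * t / (2 * mass * sigma0**2)) ** 2)


def packet_center(t, x0, p0, mass):
    """The centre moves like a free classical particle: x0 + (p0 / m) * t."""
    return x0 + p0 / mass * t


def doubling_time(sigma0, mass, hbar=HBAR):
    """Time for the width to double: sqrt(3) * 2 * m * sigma0^2 / hbar."""
    return math.sqrt(3) * 2 * mass * sigma0**2 / hbar


if __name__ == "__main__":
    print(f"electron, 0.1 nm: width doubles after {doubling_time(1e-10, M_E):.1e} s")
    print(f"1 microgram, 1 um: width doubles after {doubling_time(1e-6, 1e-9):.1e} s")
```

An electron localized to 0.1 nm doubles its spread in about 3 × 10⁻¹⁶ s. A one-microgram grain localized to a micrometre takes about 3 × 10¹³ s, roughly a million years. This is one reason the spreading goes unnoticed for everyday objects.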

Some wave functions produce probability distributions that are constant, or independent of time. For example, in a stationary state of definite energy, the time dependence cancels out of the absolute square of the wave function. Many systems that are treated dynamically in classical mechanics are described by such "static" wave functions. For example, a single electron in an unexcited atom is pictured classically as a particle moving in a circular trajectory around the atomic nucleus. In quantum mechanics it is instead described by a static, spherically symmetric wave function surrounding the nucleus (Fig. 1). Note, however, that only the lowest angular momentum states, labeled s, are spherically symmetric.
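Stationary states have a time-independent probability density, while superpositions of different energies do not. The sketch below shows this with the particle in a box, a standard exactly solvable model. It assumes units with ħ = m = L = 1, so φ_n(x) = √2·sin(nπx) and E_n = (nπ)²/2. Each energy eigenfunction evolves as φ_n(x)·e^{−iE_n t}. The helper names are illustrative.

```python
import cmath
import math


def phi(n, x):
    """Particle-in-a-box eigenfunction on [0, 1]."""
    return math.sqrt(2) * math.sin(n * math.pi * x)


def energy(n):
    """E_n = (n * pi)^2 / 2 in units hbar = m = L = 1."""
    return (n * math.pi) ** 2 / 2


def density(coeffs, x, t):
    """|psi(x, t)|^2 for psi = sum_n c_n phi_n(x) exp(-i E_n t)."""
    amp = sum(c * phi(n, x) * cmath.exp(-1j * energy(n) * t) for n, c in coeffs.items())
    return abs(amp) ** 2


if __name__ == "__main__":
    stationary = {1: 1.0}
    mixed = {1: 1 / math.sqrt(2), 2: 1 / math.sqrt(2)}
    for t in (0.0, 0.1, 0.2):
        print(t, density(stationary, 0.3, t), density(mixed, 0.3, t))
```

For the single eigenstate, the phase factor has modulus 1 and drops out of the density. For the superposition, the cross term oscillates at the angular frequency (E₂ − E₁)/ħ.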

The Schrödinger equation acts on the entire probability amplitude, not merely its absolute value. Whereas the absolute value of the probability amplitude encodes information about probabilities, its phase encodes information about the interference between quantum states. This gives rise to the "wave-like" behavior of quantum states. As it turns out, analytic solutions of the Schrödinger equation are available for only a very small number of relatively simple model Hamiltonians, of which the quantum harmonic oscillator, the particle in a box, the dihydrogen cation, and the hydrogen atom are the most important representatives. Even the helium atom—which contains just one more electron than does the hydrogen atom—has defied all attempts at a fully analytic treatment.
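The role of the phase can be illustrated with two paths leading to the same detector. Quantum mechanically, the complex amplitudes are added first and the result is then squared. If only the magnitudes (intensities) were added, no interference would appear. The sketch below assumes two equal-magnitude amplitudes with a relative phase Δφ, scaled so that the no-interference result is 1. The quantum result is then 1 + cos Δφ. Function names are illustrative.

```python
import cmath
import math


def quantum_intensity(a1, a2):
    """Amplitudes add first: |a1 + a2|^2 (includes the interference term)."""
    return abs(a1 + a2) ** 2


def classical_intensity(a1, a2):
    """Intensities add: |a1|^2 + |a2|^2 (no interference term)."""
    return abs(a1) ** 2 + abs(a2) ** 2


def two_path_amplitudes(phase_difference):
    """Two equal-magnitude paths, normalized so the classical sum is 1."""
    m = 1 / math.sqrt(2)
    return m, m * cmath.exp(1j * phase_difference)


if __name__ == "__main__":
    for dphi in (0.0, math.pi / 2, math.pi):
        a1, a2 = two_path_amplitudes(dphi)
        print(f"dphi = {dphi:.2f}: quantum {quantum_intensity(a1, a2):.3f}, "
              f"classical {classical_intensity(a1, a2):.3f}")
```

The magnitudes of the amplitudes are identical in every case. Only the phase changes, yet it moves the quantum result between 2 (constructive) and 0 (destructive).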

There exist several techniques for generating approximate solutions, however. In the important method known as perturbation theory, one uses the analytic result for a simple quantum mechanical model to generate a result for a more complicated model that is related to the simpler model by (for one example) the addition of a weak potential energy. Another method is the "semi-classical equation of motion" approach, which applies to systems for which quantum mechanics produces only weak (small) deviations from classical behavior. These deviations can then be computed based on the classical motion. This approach is particularly important in the field of quantum chaos.
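Perturbation theory can be tried on a case where the exact answer is also available: a two-level Hamiltonian H = H₀ + λV with H₀ = diag(E₀, E₁). The standard (Rayleigh–Schrödinger) series for the lower level is E₀ + λV₀₀ + λ²|V₀₁|²/(E₀ − E₁) + … . The sketch below compares its first- and second-order truncations with the exact eigenvalue. It assumes E₀ < E₁ with no degeneracy. The matrix entries and λ = 0.1 are arbitrary illustrative values of a "weak" perturbation.

```python
import math


def exact_lower_eigenvalue(h):
    """Lower eigenvalue of a Hermitian 2x2 matrix [[a, b], [conj(b), d]]."""
    a, d, b = h[0][0].real, h[1][1].real, h[0][1]
    return (a + d) / 2 - math.sqrt(((a - d) / 2) ** 2 + abs(b) ** 2)


def perturbed_lower_energy(e0, e1, v, lam, order=2):
    """Rayleigh-Schrodinger series for the level starting at e0 (assumes e0 < e1)."""
    energy = e0
    if order >= 1:
        energy += lam * v[0][0].real                        # first order: <0|V|0>
    if order >= 2:
        energy += lam**2 * abs(v[0][1]) ** 2 / (e0 - e1)    # second order
    return energy


if __name__ == "__main__":
    e0, e1, lam = 0.0, 1.0, 0.1
    v = [[0.5, 0.4], [0.4, -0.3]]   # illustrative weak perturbation
    h = [[e0 + lam * v[0][0], lam * v[0][1]], [lam * v[1][0], e1 + lam * v[1][1]]]
    print("exact  ", exact_lower_eigenvalue(h))
    for k in (1, 2):
        print(f"order {k}", perturbed_lower_energy(e0, e1, v, lam, order=k))
```

Each added order uses only quantities from the simple model (its energies and the matrix elements of V), which is the point of the method.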

Mathematically equivalent formulations of quantum mechanics

There are numerous mathematically equivalent formulations of quantum mechanics. One of the oldest and most commonly used formulations is the "transformation theory" proposed by Paul Dirac. It unifies and generalizes the two earliest formulations of quantum mechanics: matrix mechanics (invented by Werner Heisenberg) and wave mechanics (invented by Erwin Schrödinger).

Especially since Werner Heisenberg was awarded the Nobel Prize in Physics in 1932 for the creation of quantum mechanics, the role of Max Born in the development of QM was overlooked until the 1954 Nobel award. The role is noted in a 2005 biography of Born, which recounts his role in the matrix formulation of quantum mechanics, and the use of probability amplitudes. Heisenberg himself acknowledges having learned matrices from Born, as published in a 1940 festschrift honoring Max Planck. In the matrix formulation, the instantaneous state of a quantum system encodes the probabilities of its measurable properties, or "observables". Examples of observables include energy, position, momentum, and angular momentum. Observables can be either continuous (e.g., the position of a particle) or discrete (e.g., the energy of an electron bound to a hydrogen atom). An alternative formulation of quantum mechanics is Feynman's path integral formulation, in which a quantum-mechanical amplitude is considered as a sum over all possible classical and non-classical paths between the initial and final states. This is the quantum-mechanical counterpart of the action principle in classical mechanics.
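In the spirit of the matrix formulation, observables can be represented directly as matrices. The sketch below does this for the harmonic oscillator, in units ħ = m = ω = 1 (an assumption for brevity). It builds the lowering operator a as an N×N matrix in the energy basis. From it, it forms X = (a + a†)/√2 and P = i(a† − a)/√2, and evaluates H = (P² + X²)/2. The diagonal of H reproduces E_n = n + 1/2, and XP − PX = i on the diagonal. The last row is the exception, an artifact of truncating infinite matrices to N×N. Helper names are illustrative.

```python
import math


def lowering_operator(n_levels):
    """Matrix of a in the energy basis: a|n> = sqrt(n)|n-1>, so a[n-1][n] = sqrt(n)."""
    a = [[0j] * n_levels for _ in range(n_levels)]
    for n in range(1, n_levels):
        a[n - 1][n] = complex(math.sqrt(n))
    return a


def dagger(m):
    """Conjugate transpose."""
    size = len(m)
    return [[m[j][i].conjugate() for j in range(size)] for i in range(size)]


def matmul(a, b):
    size = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(size)) for j in range(size)] for i in range(size)]


def combine(a, b, ca, cb):
    """Elementwise ca*A + cb*B."""
    size = len(a)
    return [[ca * a[i][j] + cb * b[i][j] for j in range(size)] for i in range(size)]


def oscillator_matrices(n_levels):
    """Return (H, [X, P]) in units hbar = m = omega = 1, truncated to n_levels states."""
    a = lowering_operator(n_levels)
    ad = dagger(a)
    s = 1 / math.sqrt(2)
    x = combine(a, ad, s, s)              # X = (a + a^dagger) / sqrt(2)
    p = combine(ad, a, 1j * s, -1j * s)   # P = i (a^dagger - a) / sqrt(2)
    h = combine(matmul(p, p), matmul(x, x), 0.5, 0.5)
    comm = combine(matmul(x, p), matmul(p, x), 1, -1)
    return h, comm


if __name__ == "__main__":
    h, comm = oscillator_matrices(6)
    print([round(h[n][n].real, 6) for n in range(6)])     # 0.5, 1.5, ..., 4.5, then 2.5
    print([round(comm[n][n].imag, 6) for n in range(6)])  # 1.0 except the last entry
```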

Interactions with other scientific theories

The rules of quantum mechanics are fundamental. They assert that the state space of a system is a Hilbert space and that observables of that system are Hermitian operators acting on that space. However, they do not tell us which Hilbert space or which operators. These can be chosen appropriately in order to obtain a quantitative description of a quantum system. An important guide for making these choices is the correspondence principle. It states that the predictions of quantum mechanics reduce to those of classical mechanics when a system moves to higher energies or, equivalently, larger quantum numbers. Whereas a single particle exhibits a degree of randomness, in systems of millions of particles averaging takes over. At the high energy limit, the statistical probability of random behaviour approaches zero. In other words, classical mechanics is simply a quantum mechanics of large systems. This "high energy" limit is known as the classical or correspondence limit. One can even start from an established classical model of a particular system, then attempt to guess the underlying quantum model that would give rise to the classical model in the correspondence limit.
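One way to see the correspondence limit is that discrete energy levels become relatively closer together as the quantum number grows. The sketch below uses two textbook spectra: the particle in a box, E_n = n²h²/(8mL²), and the harmonic oscillator, E_n = ħω(n + 1/2). For each it computes the relative spacing (E_{n+1} − E_n)/E_n. It also estimates the box quantum number n = 2Lmv/h of an everyday object. The 1 g mass moving at 1 cm/s in a 1 cm box is an assumed example.

```python
H = 6.62607015e-34  # Planck constant, J*s


def box_relative_spacing(n: int) -> float:
    """(E_{n+1} - E_n) / E_n for a particle in a box, where E_n is proportional to n^2."""
    return (2 * n + 1) / n**2


def oscillator_relative_spacing(n: int) -> float:
    """(E_{n+1} - E_n) / E_n for a harmonic oscillator, E_n = hbar*omega*(n + 1/2)."""
    return 1 / (n + 0.5)


def box_quantum_number(mass: float, speed: float, length: float) -> int:
    """Box level whose energy matches a classical particle: n = 2 L m v / h."""
    return round(2 * length * mass * speed / H)


if __name__ == "__main__":
    n = box_quantum_number(1e-3, 1e-2, 1e-2)   # 1 g, 1 cm/s, 1 cm box (example)
    print(f"n = {n:.3e}, relative level spacing = {box_relative_spacing(n):.1e}")
```

For the example object, n is about 3 × 10²⁶ and adjacent levels differ by a fraction of order 10⁻²⁶. The spectrum is effectively continuous, as classical mechanics assumes.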

When quantum mechanics was originally formulated, it was applied to models whose correspondence limit was non-relativistic classical mechanics. For instance, the well-known model of the quantum harmonic oscillator uses an explicitly non-relativistic expression for the kinetic energy of the oscillator, and is thus a quantum version of the classical harmonic oscillator.

Early attempts to merge quantum mechanics with special relativity replaced the Schrödinger equation with a covariant equation such as the Klein–Gordon equation or the Dirac equation. These theories explained many experimental results. However, they had certain unsatisfactory qualities stemming from their neglect of the relativistic creation and annihilation of particles. A fully relativistic quantum theory required the development of quantum field theory, which applies quantization to a field (rather than a fixed set of particles). The first complete quantum field theory, quantum electrodynamics, provides a fully quantum description of the electromagnetic interaction. The full apparatus of quantum field theory is often unnecessary for describing electrodynamic systems. A simpler approach, employed since the inception of quantum mechanics, is to treat charged particles as quantum mechanical objects acted on by a classical electromagnetic field. For example, the elementary quantum model of the hydrogen atom describes the electric field of the hydrogen atom using a classical Coulomb potential, $-e^2/(4\pi\varepsilon_0 r)$.
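As a sketch of what the elementary hydrogen model yields, solving the Schrödinger equation with the classical Coulomb potential −e²/(4πε₀r) gives the bound-state energies E_n = −m_e e⁴ / (8 ε₀² h² n²). This assumes an infinitely heavy nucleus. The snippet evaluates this formula with CODATA constants and recovers the familiar ground-state energy of about −13.6 eV.

```python
# CODATA 2018 values
M_E = 9.1093837015e-31      # electron mass, kg
E_CHARGE = 1.602176634e-19  # elementary charge, C
EPS0 = 8.8541878128e-12     # vacuum permittivity, F/m
H = 6.62607015e-34          # Planck constant, J*s


def hydrogen_level_ev(n: int) -> float:
    """E_n = -m_e e^4 / (8 eps0^2 h^2 n^2), converted from joules to eV."""
    energy_j = -M_E * E_CHARGE**4 / (8 * EPS0**2 * H**2 * n**2)
    return energy_j / E_CHARGE


if __name__ == "__main__":
    for n in (1, 2, 3):
        print(n, f"{hydrogen_level_ev(n):.4f} eV")
```

No quantized electromagnetic field enters this calculation. Only the electron is treated quantum mechanically, which is why the approach is much simpler than full quantum electrodynamics.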

Quantum field theories for the strong nuclear force and the weak nuclear force have also been developed. The quantum field theory of the strong nuclear force is called quantum chromodynamics, and describes the interactions of subnuclear particles such as quarks and gluons. The weak nuclear force and the electromagnetic force were unified, in their quantized forms, into a single quantum field theory (known as electroweak theory), by the physicists Abdus Salam, Sheldon Glashow and Steven Weinberg. These three men shared the Nobel Prize in Physics in 1979 for this work.

It has proven difficult to construct quantum models of gravity, the remaining fundamental force. Semi-classical approximations are workable, and have led to predictions such as Hawking radiation. However, the formulation of a complete theory of quantum gravity is hindered by apparent incompatibilities between general relativity (the most accurate theory of gravity currently known) and some of the fundamental assumptions of quantum theory. The resolution of these incompatibilities is an area of active research, and theories such as string theory are among the possible candidates for a future theory of quantum gravity.

Classical mechanics has also been extended into the complex domain, with complex classical mechanics exhibiting behaviors similar to quantum mechanics.

Quantum mechanics and classical physics

Predictions of quantum mechanics have been verified experimentally to an extremely high degree of accuracy. According to the correspondence principle between classical and quantum mechanics, all objects obey the laws of quantum mechanics, and classical mechanics is just an approximation for large systems of objects (or a statistical quantum mechanics of a large collection of particles). The laws of classical mechanics thus follow from the laws of quantum mechanics as a statistical average at the limit of large systems or large quantum numbers. However, chaotic systems do not have good quantum numbers, and quantum chaos studies the relationship between classical and quantum descriptions in these systems.

Quantum coherence is an essential difference between classical and quantum theories. It is illustrated by the Einstein–Podolsky–Rosen (EPR) paradox, an attack on a certain philosophical interpretation of quantum mechanics by an appeal to local realism. Quantum interference involves adding together probability amplitudes, whereas in the classical description it is intensities that are added together. For microscopic bodies, the extension of the system is much smaller than the coherence length. This gives rise to long-range entanglement and other nonlocal phenomena characteristic of quantum systems. Quantum coherence is not typically evident at macroscopic scales. An exception may occur at extremely low temperatures (i.e. approaching absolute zero), at which quantum behavior may manifest itself macroscopically. This is in accordance with the following observations:

Copenhagen interpretation of quantum versus classical kinematics

A major difference between classical and quantum mechanics is that they use very different kinematic descriptions.

In Niels Bohr's mature view, quantum mechanical phenomena are required to be experiments, with complete descriptions of all the devices for the system, preparative, intermediary, and finally measuring. The descriptions are in macroscopic terms, expressed in ordinary language, supplemented with the concepts of classical mechanics. The initial condition and the final condition of the system are respectively described by values in a configuration space, for example a position space, or some equivalent space such as a momentum space. Quantum mechanics does not admit a completely precise description, in terms of both position and momentum, of an initial condition or "state" (in the classical sense of the word) that would support a precisely deterministic and causal prediction of a final condition. In this sense, advocated by Bohr in his mature writings, a quantum phenomenon is a process, a passage from initial to final condition, not an instantaneous "state" in the classical sense of that word. Thus there are two kinds of processes in quantum mechanics: stationary and transitional. For a stationary process, the initial and final condition are the same. For a transition, they are different. Obviously by definition, if only the initial condition is given, the process is not determined. Given its initial condition, prediction of its final condition is possible, causally but only probabilistically, because the Schrödinger equation is deterministic for wave function evolution, but the wave function describes the system only probabilistically.

For many experiments, it is possible to think of the initial and final conditions of the system as being a particle. In some cases it appears that there are potentially several spatially distinct pathways or trajectories by which a particle might pass from initial to final condition. It is an important feature of the quantum kinematic description that it does not permit a unique definite statement of which of those pathways is actually followed. Only the initial and final conditions are definite, and, as stated in the foregoing paragraph, they are defined only as precisely as allowed by the configuration space description or its equivalent. In every case for which a quantum kinematic description is needed, there is always a compelling reason for this restriction of kinematic precision. An example of such a reason is that for a particle to be experimentally found in a definite position, it must be held motionless; for it to be experimentally found to have a definite momentum, it must have free motion; these two are logically incompatible.

Classical kinematics does not primarily demand experimental description of its phenomena. It allows a completely precise description of an instantaneous state by a value in phase space, the Cartesian product of configuration and momentum spaces. This description simply assumes or imagines a state as a physically existing entity, without concern about its experimental measurability. Such a description of an initial condition, together with Newton's laws of motion, allows a precise deterministic and causal prediction of a final condition, with a definite trajectory of passage. Hamiltonian dynamics can be used for this. Classical kinematics also allows the description of a process analogous to the initial and final condition description used by quantum mechanics. Lagrangian mechanics applies to this. Classical kinematics is not adequate for processes whose action amounts to only a small number of Planck constants; quantum mechanics is needed.

General relativity and quantum mechanics

Even with the defining postulates of both Einstein's theory of general relativity and quantum theory being indisputably supported by rigorous and repeated empirical evidence, and while they do not directly contradict each other theoretically (at least with regard to their primary claims), they have proven extremely difficult to incorporate into one consistent, cohesive model.

Gravity is negligible in many areas of particle physics, so unification between general relativity and quantum mechanics is not an urgent issue in those particular applications. However, the lack of a correct theory of quantum gravity is an important issue in physical cosmology and in the search by physicists for an elegant "Theory of Everything" (TOE). Consequently, resolving the inconsistencies between both theories has been a major goal of 20th- and 21st-century physics. Many prominent physicists, including Stephen Hawking, have labored for many years in the attempt to discover a theory underlying everything. This TOE would combine not only the different models of subatomic physics. It would also derive the four fundamental forces of nature (the strong force, electromagnetism, the weak force, and gravity) from a single force or phenomenon. Stephen Hawking was initially a believer in the Theory of Everything. After considering Gödel's incompleteness theorem, however, he concluded that one is not obtainable, and he stated so publicly in his lecture "Gödel and the End of Physics" (2002).

Attempts at a unified field theory

The quest to unify the fundamental forces through quantum mechanics is still ongoing. Quantum electrodynamics (or "quantum electromagnetism"), which is currently (at least in the perturbative regime) the most accurately tested physical theory in competition with general relativity, has been successfully merged with the weak nuclear force into the electroweak force, and work is currently being done to merge the electroweak and strong forces into the electrostrong force. Current predictions state that at around 10¹⁴ GeV the three aforementioned forces are fused into a single unified field. Beyond this "grand unification", it is speculated that it may be possible to merge gravity with the other three gauge symmetries, which is expected to occur at roughly 10¹⁹ GeV. However, although special relativity is parsimoniously incorporated into quantum electrodynamics, general relativity, currently the best theory describing the gravitational force, has not been fully incorporated into quantum theory.

One of those searching for a coherent TOE is Edward Witten, a theoretical physicist who formulated M-theory, an attempt at describing supersymmetry-based string theory. M-theory posits that our apparent 4-dimensional spacetime is, in reality, an 11-dimensional spacetime containing 10 spatial dimensions and 1 time dimension, although at lower energies 7 of the spatial dimensions are completely "compactified" (or infinitely curved) and not readily amenable to measurement or probing.

Another popular theory is loop quantum gravity (LQG), a theory developed by Carlo Rovelli, Lee Smolin and others that describes the quantum properties of gravity. It is also a theory of quantum space and quantum time, because in general relativity the geometry of spacetime is a manifestation of gravity. LQG is an attempt to merge and adapt standard quantum mechanics and standard general relativity. The main output of the theory is a physical picture in which space is granular. The granularity is a direct consequence of the quantization. It has the same nature as the granularity of photons in the quantum theory of electromagnetism, or the discrete energy levels of atoms, but here it is space itself that is discrete. More precisely, space can be viewed as an extremely fine fabric or network "woven" of finite loops. These networks of loops are called spin networks. The evolution of a spin network over time is called a spin foam. The predicted size of this structure is the Planck length, which is approximately 1.616×10⁻³⁵ m. According to the theory, there is no meaning to a length shorter than this (cf. Planck scale energy). Therefore, LQG predicts that not just matter, but also space itself, has an atomic structure.
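The Planck length quoted above, and the Planck energy of roughly 1.2×10¹⁹ GeV that sets the scale at which gravity is expected to join the other forces, both follow from combining ħ, G and c. A minimal Python sketch, assuming CODATA 2018 values for the constants (hard-coded here rather than read from any library):

```python
import math

# CODATA 2018 values (assumed; hard-coded rather than taken from a library)
hbar = 1.054571817e-34   # reduced Planck constant, J s
G = 6.67430e-11          # Newtonian constant of gravitation, m^3 kg^-1 s^-2
c = 2.99792458e8         # speed of light in vacuum, m/s
eV = 1.602176634e-19     # joules per electronvolt

# Planck length l_P = sqrt(hbar G / c^3), in metres
planck_length = math.sqrt(hbar * G / c**3)
# Planck energy E_P = sqrt(hbar c^5 / G), converted from joules to GeV
planck_energy_GeV = math.sqrt(hbar * c**5 / G) / eV / 1e9

print(f"Planck length = {planck_length:.4e} m")
print(f"Planck energy = {planck_energy_GeV:.3e} GeV")
```

The same three constants fix both numbers, which is why the length scale of spacetime granularity and the energy scale of quantum-gravity effects are usually discussed together.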

Philosophical implications

Since its inception, the many counter-intuitive aspects and results of quantum mechanics have provoked strong philosophical debates and many interpretations. Even fundamental issues, such as Max Born's basic rules concerning probability amplitudes and probability distributions, took decades to be appreciated by society and many leading scientists. Richard Feynman once said, "I think I can safely say that nobody understands quantum mechanics." According to Steven Weinberg, "There is now in my opinion no entirely satisfactory interpretation of quantum mechanics."

The Copenhagen interpretation, due largely to Niels Bohr and Werner Heisenberg, remains the most widely accepted interpretation amongst physicists, decades after its enunciation in the 1920s. According to this interpretation, the probabilistic nature of quantum mechanics is not a temporary feature which will eventually be replaced by a deterministic theory, but instead must be considered a final renunciation of the classical idea of "causality." It is also believed therein that any well-defined application of the quantum mechanical formalism must always make reference to the experimental arrangement, due to the conjugate nature of evidence obtained under different experimental situations.

Albert Einstein, himself one of the founders of quantum theory, did not accept some of the more philosophical or metaphysical interpretations of quantum mechanics, such as the rejection of determinism and of causality. He is famously quoted as saying, in response to this aspect, "God does not play with dice". He rejected the concept that the state of a physical system depends on the experimental arrangement for its measurement. He held that a state of nature occurs in its own right, regardless of whether or how it might be observed. In that view, he is supported by the currently accepted definition of a quantum state, which remains invariant under arbitrary choice of configuration space for its representation, that is to say, manner of observation. He also held that underlying quantum mechanics there should be a theory that thoroughly and directly expresses the rule against action at a distance; in other words, he insisted on the principle of locality. He considered, but rejected on theoretical grounds, a particular proposal for hidden variables to obviate the indeterminism or acausality of quantum mechanical measurement. He considered quantum mechanics to be a currently valid but not a permanently definitive theory for quantum phenomena. He thought its future replacement would require profound conceptual advances and would not come quickly or easily. The Bohr–Einstein debates provide a vibrant critique of the Copenhagen interpretation from an epistemological point of view. In arguing for his views, Einstein produced a series of objections, the most famous of which has become known as the Einstein–Podolsky–Rosen paradox.

John Bell showed that this "EPR" paradox led to experimentally testable differences between quantum mechanics and theories that rely on added local hidden variables. Experiments have been performed confirming the accuracy of quantum mechanics, thereby demonstrating that quantum mechanics cannot be completed by the addition of local hidden variables. Alain Aspect's initial experiments in 1982, and many subsequent experiments since, have definitively verified quantum entanglement.
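The testable difference can be made concrete with the CHSH form of Bell's inequality (Clauser, Horne, Shimony and Holt, 1969; a later variant rather than Bell's original inequality). Any local hidden-variable model gives |S| ≤ 2, while quantum mechanics predicts |S| = 2√2 for a spin singlet measured at suitably chosen angles. A minimal Python sketch, assuming ideal detectors and the textbook singlet correlation E(a, b) = −cos(a − b); the function names are illustrative:

```python
import itertools
import math


def singlet_correlation(a, b):
    """Quantum prediction E(a, b) = -cos(a - b) for spin measurements on a singlet pair."""
    return -math.cos(a - b)


def chsh(E, a1, a2, b1, b2):
    """CHSH combination S = E(a1,b1) - E(a1,b2) + E(a2,b1) + E(a2,b2)."""
    return E(a1, b1) - E(a1, b2) + E(a2, b1) + E(a2, b2)


# Quantum mechanics at the angles that maximize |S|
S_quantum = abs(chsh(singlet_correlation, 0.0, math.pi / 2, math.pi / 4, 3 * math.pi / 4))

# Local hidden variables: each outcome (+1 or -1) is fixed in advance for every setting.
# Any local model is a probabilistic mixture of these 16 deterministic strategies,
# so the largest |S| among them bounds every local model.
S_local = max(
    abs(A1 * B1 - A1 * B2 + A2 * B1 + A2 * B2)
    for A1, A2, B1, B2 in itertools.product((-1, 1), repeat=4)
)

print(S_quantum, S_local)   # about 2.83 versus 2
```

Bell-test experiments measure S from coincidence counts; a value significantly above 2 is what rules out the local models enumerated in `S_local`.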

Entanglement, as demonstrated in Bell-type experiments, does not, however, violate causality, since it cannot be used to transfer information. Quantum entanglement underlies some quantum cryptography schemes, which have been proposed for use in high-security commercial applications in banking and government.
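Why entanglement cannot carry a message can be checked directly. For a spin singlet, the probability of Bob's outcome, summed over Alice's outcomes (which Bob cannot see), does not depend on which axis Alice chooses to measure, even though the joint statistics do. A minimal NumPy sketch, assuming ideal projective spin measurements along axes in the x–z plane; the angles and function names are illustrative:

```python
import numpy as np

I2 = np.eye(2)
X = np.array([[0.0, 1.0], [1.0, 0.0]])
Z = np.array([[1.0, 0.0], [0.0, -1.0]])
singlet = np.array([0.0, 1.0, -1.0, 0.0]) / np.sqrt(2)   # (|01> - |10>)/sqrt(2), Alice's qubit first


def projector(angle, outcome):
    """Projector for spin outcome +1/-1 along an axis at `angle` in the x-z plane."""
    sigma = np.cos(angle) * Z + np.sin(angle) * X
    return (I2 + outcome * sigma) / 2


def joint(alice_angle, bob_angle):
    """P(Alice gets +1 and Bob gets +1): depends on the relative angle."""
    op = np.kron(projector(alice_angle, +1), projector(bob_angle, +1))
    return singlet @ op @ singlet


def bob_marginal(alice_angle, bob_angle):
    """P(Bob gets +1), summed over Alice's outcomes, which Bob cannot see."""
    return sum(
        singlet @ np.kron(projector(alice_angle, s), projector(bob_angle, +1)) @ singlet
        for s in (+1, -1)
    )


for a in (0.0, np.pi / 4, np.pi / 2):
    print(f"Alice angle {a:.2f}: joint {joint(a, 0.0):.3f}, Bob marginal {bob_marginal(a, 0.0):.3f}")
```

Because Alice's two projectors always sum to the identity, Bob's local statistics stay at 50/50 whatever she does; the correlations only become visible when the two records are compared over an ordinary channel.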

The Everett many-worlds interpretation, formulated in 1956, holds that all the possibilities described by quantum theory simultaneously occur in a multiverse composed of mostly independent parallel universes. This is not accomplished by introducing some "new axiom" to quantum mechanics, but on the contrary, by removing the axiom of the collapse of the wave packet. All of the possible consistent states of the measured system and the measuring apparatus (including the observer) are present in a real physical (not just formally mathematical, as in other interpretations) quantum superposition. Such a superposition of consistent state combinations of different systems is called an entangled state. While the multiverse is deterministic, we perceive non-deterministic behavior governed by probabilities, because we can only observe the universe (i.e., the consistent state contribution to the aforementioned superposition) that we, as observers, inhabit. Everett's interpretation is perfectly consistent with John Bell's experiments and makes them intuitively understandable. However, according to the theory of quantum decoherence, these "parallel universes" will never be accessible to us. The inaccessibility can be understood as follows: once a measurement is done, the measured system becomes entangled with both the physicist who measured it and a huge number of other particles, some of which are photons flying away at the speed of light towards the other end of the universe. In order to prove that the wave function did not collapse, one would have to bring all these particles back and measure them again, together with the system that was originally measured. Not only is this completely impractical, but even if one could theoretically do this, doing so would destroy any evidence that the original measurement took place (including the physicist's memory).

In light of these Bell tests, Cramer (1986) formulated his transactional interpretation. Relational quantum mechanics appeared in the late 1990s as the modern derivative of the Copenhagen interpretation.

Applications

Quantum mechanics has had enormous success in explaining many of the features of our universe. Quantum mechanics is often the only tool available that can reveal the individual behaviors of the subatomic particles that make up all forms of matter (electrons, protons, neutrons, photons, and others). Quantum mechanics has strongly influenced string theories, candidates for a Theory of Everything.

Quantum mechanics is also critically important for understanding how individual atoms combine covalently to form molecules. The application of quantum mechanics to chemistry is known as quantum chemistry. Relativistic quantum mechanics can, in principle, mathematically describe most of chemistry. Quantum mechanics can also provide quantitative insight into ionic and covalent bonding processes by showing explicitly which molecules are energetically favorable relative to others, and the magnitudes of the energies involved. Furthermore, most of the calculations performed in modern computational chemistry rely on quantum mechanics.
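As a small illustration of such a calculation, the sketch below uses the Hückel approximation (a deliberately simple quantum-chemical model, not one named in the text above) to estimate the π-electron energies of ethylene and benzene. It assumes a Coulomb integral α = 0 and a resonance integral β = −1 (so energies are in units of |β|) and fills the lowest orbitals with two electrons each. The extra stabilization of benzene relative to three isolated double bonds, the delocalization energy 2β, comes straight out of the diagonalization:

```python
import numpy as np


def huckel_energies(n_atoms, ring, alpha=0.0, beta=-1.0):
    """Orbital energies for a chain or ring of n_atoms sp2 carbons (simple Hückel model)."""
    adjacency = np.zeros((n_atoms, n_atoms))
    for i in range(n_atoms - 1):
        adjacency[i, i + 1] = adjacency[i + 1, i] = 1.0   # bonded neighbours
    if ring:
        adjacency[0, -1] = adjacency[-1, 0] = 1.0         # close the ring
    hamiltonian = alpha * np.eye(n_atoms) + beta * adjacency
    return np.linalg.eigvalsh(hamiltonian)                # ascending order


def pi_energy(energies, n_electrons):
    """Total pi energy, filling the lowest orbitals with two electrons each."""
    total, remaining = 0.0, n_electrons
    for e in energies:
        occupancy = min(2, remaining)
        total += occupancy * e
        remaining -= occupancy
        if remaining == 0:
            break
    return total


ethylene = pi_energy(huckel_energies(2, ring=False), 2)   # 2 alpha + 2 beta
benzene = pi_energy(huckel_energies(6, ring=True), 6)     # 6 alpha + 8 beta
delocalization = benzene - 3 * ethylene                   # 2 beta
print(huckel_energies(6, ring=True), delocalization)
```

Production computational chemistry uses far more complete methods (Hartree–Fock, density functional theory and beyond); the Hückel model is chosen here only because it fits in a few lines.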

In many respects, modern technology operates at a scale where quantum effects are significant.

Electronics

Many modern electronic devices are designed using quantum mechanics. Examples include the laser, the transistor (and thus the microchip), the electron microscope, and magnetic resonance imaging (MRI). The study of semiconductors led to the invention of the diode and the transistor, which are indispensable parts of modern electronic systems and of computer and telecommunication devices. Another application is the light-emitting diode, a high-efficiency source of light.

Many electronic devices rely on quantum tunneling. It even occurs in the simple light switch: the switch would not work if electrons could not quantum tunnel through the layer of oxidation on the metal contact surfaces. Flash memory chips found in USB drives use quantum tunneling to erase their memory cells. Some negative differential resistance devices, such as the resonant tunneling diode, also utilize the quantum tunneling effect. Unlike in a classical diode, the current is carried by resonant tunneling through two potential barriers. Its negative resistance behavior can only be understood with quantum mechanics: as the confined state moves close to the Fermi level, the tunnel current increases; as it moves away, the current decreases. Quantum mechanics is vital to understanding and designing such electronic devices.
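The resonant-tunneling behavior can be illustrated with a transfer-matrix calculation: the potential is split into regions of constant height, ψ and ψ′ are matched at each interface, and the transmission probability is read off the product matrix. The NumPy sketch below assumes units in which ħ²/2m = 1 and a hypothetical symmetric double barrier; it computes the transmission coefficient only, not the current–voltage curve of a real device. Transmission is tiny at most energies but rises to nearly 1 in a narrow peak where the energy matches a quasi-bound state of the well, which is the mechanism behind the current peak and the subsequent negative resistance:

```python
import numpy as np


def transmission(E, edges, potentials):
    """Transmission probability for a piecewise-constant 1D potential.

    Assumed units: hbar**2 / (2 m) = 1, so k = sqrt(E - V) in each region.
    edges      -- interface positions x_1 < x_2 < ... < x_N
    potentials -- N + 1 region potentials; the first and last are the leads
    """
    k = [np.sqrt(complex(E - V)) for V in potentials]   # imaginary inside a barrier

    def waves(kj, x):
        # values (row 0) and derivatives (row 1) of exp(+ikx) and exp(-ikx) at x
        plus, minus = np.exp(1j * kj * x), np.exp(-1j * kj * x)
        return np.array([[plus, minus], [1j * kj * plus, -1j * kj * minus]])

    M = np.eye(2, dtype=complex)
    for j, x in enumerate(edges):
        # continuity of psi and psi' at x maps region-j amplitudes to region j+1
        M = np.linalg.solve(waves(k[j + 1], x), waves(k[j], x)) @ M
    # incident amplitude 1 from the left, nothing incoming from the right
    t = np.linalg.det(M) / M[1, 1]
    return (k[-1].real / k[0].real) * abs(t) ** 2


# Hypothetical double barrier: height 10, barrier width 0.5, well width 3
edges = [0.0, 0.5, 3.5, 4.0]
potentials = [0.0, 10.0, 0.0, 10.0, 0.0]
energies = np.linspace(0.01, 3.0, 10000)
T = np.array([transmission(E, edges, potentials) for E in energies])
print(f"peak transmission {T.max():.3f} at E = {energies[T.argmax()]:.3f}")
```

For a single barrier the same routine reproduces the textbook rectangular-barrier formula; with two identical barriers the transmission at resonance approaches 1 even though each barrier alone transmits only a few percent.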

Cryptography

Researchers are currently seeking robust methods of directly manipulating quantum states. Efforts are being made to more fully develop quantum cryptography, which would in principle allow guaranteed secure transmission of information.
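One widely studied quantum key distribution protocol is BB84 (Bennett and Brassard, 1984), a prepare-and-measure scheme that does not itself require entanglement. The sketch below simulates it classically in Python under idealized assumptions (single photons, no channel noise, an eavesdropper limited to an intercept-and-resend attack). Measuring in the wrong basis yields a random bit, so an eavesdropper raises the error rate of the sifted key to about 25%, which Alice and Bob can detect by publicly comparing a sample of their bits. It illustrates the principle only and is not a secure implementation; the function name `bb84_qber` is hypothetical:

```python
import random


def bb84_qber(n_photons, eavesdrop=False, seed=0):
    """Return (sifted key length, quantum bit error rate) for an idealized BB84 run."""
    rng = random.Random(seed)
    sifted_alice, sifted_bob = [], []
    for _ in range(n_photons):
        bit = rng.randint(0, 1)
        basis_a = rng.randint(0, 1)            # 0 = rectilinear, 1 = diagonal
        photon_bit, photon_basis = bit, basis_a
        if eavesdrop:
            basis_e = rng.randint(0, 1)
            # wrong basis -> random result; Eve then resends what she measured
            seen = photon_bit if basis_e == photon_basis else rng.randint(0, 1)
            photon_bit, photon_basis = seen, basis_e
        basis_b = rng.randint(0, 1)
        bob_bit = photon_bit if basis_b == photon_basis else rng.randint(0, 1)
        if basis_b == basis_a:                 # sifting: keep rounds with matching bases
            sifted_alice.append(bit)
            sifted_bob.append(bob_bit)
    errors = sum(a != b for a, b in zip(sifted_alice, sifted_bob))
    return len(sifted_alice), errors / len(sifted_alice)


print(bb84_qber(20000))                  # no eavesdropper: error rate 0
print(bb84_qber(20000, eavesdrop=True))  # intercept-resend: error rate near 0.25
```

About half of the transmitted bits survive sifting; in practice the protocol continues with error correction and privacy amplification, which are omitted here.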

Quantum computing

A more distant goal is the development of quantum computers, which are expected to perform certain computational tasks exponentially faster than classical computers. Instead of using classical bits, quantum computers use qubits, which can be in superpositions of states. Another active research topic is quantum teleportation, which deals with techniques to transmit quantum information over arbitrary distances.
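Both qubits and teleportation can be sketched with a small state-vector simulation. The example below (Python with NumPy) follows the standard three-qubit protocol: Alice holds an unknown qubit and half of a Bell pair, applies a CNOT and a Hadamard gate, measures her two qubits, and sends the two classical bits to Bob, who applies X and/or Z corrections. The gate matrices are standard; the function name `teleport` and the test state are illustrative assumptions:

```python
import numpy as np

H = np.array([[1, 1], [1, -1]]) / np.sqrt(2)   # Hadamard gate
X = np.array([[0, 1], [1, 0]])                 # bit flip
Z = np.array([[1, 0], [0, -1]])                # phase flip


def teleport(psi):
    """Return {(m0, m1): (probability, Bob's corrected state)} for each of Alice's outcomes."""
    bell = np.array([1, 0, 0, 1]) / np.sqrt(2)            # (|00> + |11>)/sqrt(2) on qubits 1, 2
    state = np.kron(psi, bell).reshape(2, 2, 2)           # axes: (qubit 0, qubit 1, qubit 2)
    state = state.astype(complex)                         # copy, safe to modify in place
    # Alice: CNOT with qubit 0 as control, qubit 1 as target
    state[1] = state[1, ::-1, :].copy()
    # Alice: Hadamard on qubit 0
    state = np.einsum("ab,bcd->acd", H, state)
    outcomes = {}
    for m0 in (0, 1):
        for m1 in (0, 1):
            bob = state[m0, m1, :]                        # Bob's unnormalized qubit
            prob = np.vdot(bob, bob).real
            bob = bob / np.sqrt(prob)
            # Bob applies X^m1, then Z^m0, using the two classical bits from Alice
            bob = np.linalg.matrix_power(Z, m0) @ np.linalg.matrix_power(X, m1) @ bob
            outcomes[(m0, m1)] = (prob, bob)
    return outcomes


psi = np.array([0.6, 0.8j])                               # arbitrary normalized qubit
for (m0, m1), (p, out) in teleport(psi).items():
    print(m0, m1, round(p, 3), np.allclose(out, psi))
```

Each of the four outcomes occurs with probability 1/4 and, after correction, Bob holds exactly the original state. Because the two classical bits must travel by ordinary means, teleportation does not transmit information faster than light.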

Macroscale quantum effects

While quantum mechanics primarily applies to the smaller atomic regimes of matter and energy, some systems exhibit quantum mechanical effects on a large scale. Superfluidity, the frictionless flow of a liquid at temperatures near absolute zero, is one well-known example. So is the closely related phenomenon of superconductivity, the frictionless flow of an electron gas in a conducting material (an electric current) at sufficiently low temperatures. The fractional quantum Hall effect is a topologically ordered state, which corresponds to a pattern of long-range quantum entanglement. States with different topological orders (or different patterns of long-range entanglement) cannot change into each other without a phase transition.

Quantum theory

Quantum theory also provides accurate descriptions for many previously unexplained phenomena, such as black-body radiation and the stability of the orbitals of electrons in atoms. It has also given insight into the workings of many different biological systems, including smell receptors and protein structures. Recent work on photosynthesis has provided evidence that quantum correlations play an essential role in this fundamental process of plants and many other organisms. Even so, classical physics can often provide good approximations to results otherwise obtained by quantum physics, typically in circumstances with large numbers of particles or large quantum numbers. Since classical formulas are much simpler and easier to compute than quantum formulas, classical approximations are used and preferred when the system is large enough to render the effects of quantum mechanics insignificant.
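Black-body radiation also shows when the classical approximation is adequate. Planck's law and the classical Rayleigh–Jeans law agree when hν ≪ k_BT (many quanta per mode, i.e., large quantum numbers) and diverge sharply when hν ≫ k_BT, where the classical law predicts the unphysical "ultraviolet catastrophe". A minimal Python sketch, assuming exact SI values for h, c and k_B and expressing both laws as spectral radiance per unit frequency:

```python
import math

h = 6.62607015e-34    # Planck constant, J s (exact SI value)
c = 2.99792458e8      # speed of light, m/s (exact)
k_B = 1.380649e-23    # Boltzmann constant, J/K (exact)


def planck(nu, T):
    """Planck spectral radiance B_nu(T), W sr^-1 m^-2 Hz^-1."""
    x = h * nu / (k_B * T)
    return 2 * h * nu**3 / c**2 / math.expm1(x)   # expm1 stays accurate for small x


def rayleigh_jeans(nu, T):
    """Classical (equipartition) spectral radiance, same units."""
    return 2 * nu**2 * k_B * T / c**2


T = 5000.0   # kelvin, an arbitrary illustrative temperature
for nu in (1e9, 1e13, 1e15):
    print(f"nu = {nu:.0e} Hz: Planck / Rayleigh-Jeans = {planck(nu, T) / rayleigh_jeans(nu, T):.3g}")
```

The ratio equals x/(eˣ − 1) with x = hν/k_BT: essentially 1 at microwave frequencies, where the cheaper classical formula is perfectly adequate, and vanishingly small in the ultraviolet.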

Free particle

For example, consider a free particle. In quantum mechanics, there is wave–particle duality, so the properties of the particle can be described as the properties of a wave. Therefore, its quantum state can be represented as a wave of arbitrary shape and extending over space as a wave function. The position and momentum of the particle are observables. The uncertainty principle states that the position and the momentum cannot both be measured simultaneously with complete precision. However, one can measure the position (alone) of a moving free particle, creating an eigenstate of position with a wave function that is very large (a Dirac delta) at a particular position x, and zero everywhere else. If one performs a position measurement on such a wave function, the resultant x will be obtained with 100% probability (i.e., with full certainty, or complete precision). Such a state is called an eigenstate of position or, stated in mathematical terms, a generalized position eigenstate (eigendistribution). If the particle is in an eigenstate of position, then its momentum is completely unknown. On the other hand, if the particle is in an eigenstate of momentum, then its position is completely unknown. In an eigenstate of momentum having a plane wave form, it can be shown that the wavelength is λ = h/p (the de Broglie relation), where h is Planck's constant and p is the momentum of the eigenstate.
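Between these two extremes lies a Gaussian wave packet, which has finite spreads in both position and momentum and attains the minimum product Δx·Δp = ħ/2 allowed by the uncertainty principle. The following is a minimal numerical sketch (Python with NumPy), assuming units with ħ = 1 so that momentum equals the wavenumber k; the grid size, box length and packet parameters are arbitrary illustrative choices, and the momentum distribution is obtained with a discrete Fourier transform:

```python
import numpy as np


def uncertainty_product(sigma, k0=5.0, n=4096, box=200.0):
    """Return (delta_x, delta_p, product) for a Gaussian packet, with hbar = 1 so p = k."""
    x = np.linspace(-box / 2, box / 2, n, endpoint=False)
    dx = x[1] - x[0]
    # Gaussian envelope of width sigma times a plane wave of mean wavenumber k0
    psi = np.exp(-x**2 / (4 * sigma**2) + 1j * k0 * x)
    psi /= np.sqrt(np.sum(np.abs(psi) ** 2) * dx)

    prob_x = np.abs(psi) ** 2 * dx
    mean_x = np.sum(x * prob_x)
    delta_x = np.sqrt(np.sum((x - mean_x) ** 2 * prob_x))

    # momentum-space distribution via the discrete Fourier transform
    k = 2 * np.pi * np.fft.fftfreq(n, d=dx)
    prob_k = np.abs(np.fft.fft(psi)) ** 2
    prob_k /= prob_k.sum()
    mean_k = np.sum(k * prob_k)
    delta_k = np.sqrt(np.sum((k - mean_k) ** 2 * prob_k))

    return delta_x, delta_k, delta_x * delta_k


for sigma in (0.5, 2.0, 8.0):
    dx_, dp_, prod = uncertainty_product(sigma)
    print(f"sigma={sigma}: dx={dx_:.4f}, dp={dp_:.4f}, dx*dp={prod:.4f}")
```

Shrinking sigma narrows the position distribution and broadens the momentum distribution in proportion, so the product stays at 1/2; the position eigenstate described above is the limiting case sigma → 0, with unbounded momentum spread.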

Step potential

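The potential in this case is given by:

$$ V(x) = \begin{cases} 0, & x < 0, \\ V_0, & x \ge 0. \end{cases} $$

The solutions are superpositions of left- and right-moving waves:

$$ \psi_1(x) = A_{\rightarrow} e^{i k_1 x} + A_{\leftarrow} e^{-i k_1 x}, \qquad x < 0, $$
$$ \psi_2(x) = B_{\rightarrow} e^{i k_2 x} + B_{\leftarrow} e^{-i k_2 x}, \qquad x > 0, $$

where the wave vectors are related to the energy via

$$ k_1 = \frac{\sqrt{2mE}}{\hbar}, \qquad k_2 = \frac{\sqrt{2m(E - V_0)}}{\hbar}, $$

with coefficients A and B determined from the boundary conditions, namely that the solution and its first derivative be continuous at x = 0 (for a wave incident from the left, one also sets B_← = 0).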

Each term of the solution can be interpreted as an incident, reflected, or transmitted component of the wave, allowing the calculation of transmission and reflection coefficients. Notably, in contrast to classical mechanics, incident particles with energies greater than the potential step are partially reflected.
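For a wave of unit amplitude incident from the left, matching ψ and dψ/dx at x = 0 gives the reflected amplitude r = (k₁ − k₂)/(k₁ + k₂) and the transmitted amplitude t = 2k₁/(k₁ + k₂), hence R = |r|² and T = (k₂/k₁)|t|², with R + T = 1. A minimal Python sketch, assuming units ħ = m = 1 by default (the function name is illustrative); complex square roots let the same formulas cover E < V₀, where k₂ becomes imaginary and no flux is transmitted:

```python
import cmath


def step_coefficients(E, V0, m=1.0, hbar=1.0):
    """Reflection and transmission probabilities for V(x) = 0 (x < 0), V0 (x >= 0); needs E > 0."""
    k1 = cmath.sqrt(2 * m * E) / hbar
    k2 = cmath.sqrt(2 * m * (E - V0)) / hbar   # imaginary when E < V0 (evanescent wave)
    r = (k1 - k2) / (k1 + k2)                  # from continuity of psi and psi' at x = 0
    t = 2 * k1 / (k1 + k2)
    R = abs(r) ** 2
    T = (k2.real / k1.real) * abs(t) ** 2      # ratio of probability fluxes; 0 if evanescent
    return R, T


for E in (0.5, 1.5, 2.0, 10.0):
    R, T = step_coefficients(E, V0=1.0)
    print(f"E = {E}: R = {R:.4f}, T = {T:.4f}")
```

For E > V₀ the reflection probability is nonzero and falls off only gradually as E grows, which is the partial reflection with no classical counterpart.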

Rectangular potential barrier

This is a model for the quantum tunneling effect which plays an important role in the performance of modern technologies such as flash memory and scanning tunneling microscopy. Quantum tunneling is central to physical phenomena involved in superlattices.
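For a barrier of height V₀ occupying 0 < x < a, the standard result for E < V₀ is T = [1 + V₀² sinh²(κa)/(4E(V₀ − E))]⁻¹ with κ = √(2m(V₀ − E))/ħ, so T is small but nonzero, whereas a classical particle would always be reflected. A minimal Python sketch (function name hypothetical, units ħ = m = 1 by default), including the E > V₀ case, where sinh becomes sin with the wavenumber k inside the barrier and T = 1 whenever ka is a multiple of π:

```python
import math


def barrier_transmission(E, V0, a, m=1.0, hbar=1.0):
    """Transmission probability through V(x) = V0 for 0 < x < a, zero elsewhere."""
    if E <= 0:
        raise ValueError("energy must be positive")
    if E < V0:    # tunneling regime: decaying wave inside the barrier
        kappa = math.sqrt(2 * m * (V0 - E)) / hbar
        return 1.0 / (1.0 + V0**2 * math.sinh(kappa * a) ** 2 / (4 * E * (V0 - E)))
    if E > V0:    # above the barrier: oscillating wave inside
        k = math.sqrt(2 * m * (E - V0)) / hbar
        return 1.0 / (1.0 + V0**2 * math.sin(k * a) ** 2 / (4 * E * (E - V0)))
    # E == V0: limit of either expression
    return 1.0 / (1.0 + m * a**2 * V0 / (2 * hbar**2))


for width in (0.5, 1.0, 2.0, 4.0):
    print(f"a = {width}: T = {barrier_transmission(0.5, 1.0, width):.3e}")
```

Deep in the tunneling regime T falls off roughly as e^(−2κa), which is why tunneling currents are so sensitive to barrier thickness, the property exploited in scanning tunneling microscopy.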

Particle in a box

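The particle in a one-dimensional potential energy box is the mathematically simplest example in which confinement leads to the quantization of energy levels. The box is defined as having zero potential energy everywhere inside a certain region, and infinite potential energy everywhere outside that region. For the one-dimensional case in the x direction, the time-independent Schrödinger equation inside the box may be written

$$ -\frac{\hbar^2}{2m}\frac{d^2\psi}{dx^2} = E\psi. $$

With the differential operator defined by

$$ \hat{p}_x = -i\hbar\frac{d}{dx}, $$

the previous equation is evocative of the classical kinetic energy analogue,

$$ \frac{1}{2m}\hat{p}_x^2 = E, $$

with state ψ in this case having energy E coincident with the kinetic energy of the particle.

The general solutions of the Schrödinger equation for the particle in a box are

$$ \psi(x) = A e^{ikx} + B e^{-ikx}, \qquad E = \frac{\hbar^2 k^2}{2m}, $$

or, from Euler's formula,

$$ \psi(x) = C\sin(kx) + D\cos(kx). $$

The infinite potential walls of the box determine the values of C, D, and k at x = 0 and x = L, where ψ must be zero. Thus, at x = 0,

$$ \psi(0) = 0 = C\sin(0) + D\cos(0) = D, $$

and D = 0. At x = L,

$$ \psi(L) = 0 = C\sin(kL), $$

in which C cannot be zero, as ψ would then vanish everywhere, conflicting with the Born interpretation (the particle must be found somewhere). Therefore sin(kL) = 0, so kL must be an integer multiple of π:

$$ k = \frac{n\pi}{L}, \qquad n = 1, 2, 3, \ldots $$

(n = 0 would again give ψ = 0, and negative n reproduces the same states up to sign.)

The quantization of energy levels follows from this constraint on k, since

$$ E = \frac{\hbar^2 \pi^2 n^2}{2mL^2} = \frac{n^2 h^2}{8mL^2}. $$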
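The quantized levels can be checked numerically by discretizing the Schrödinger equation on a grid with ψ = 0 at both walls and diagonalizing the resulting matrix. A minimal Python/NumPy sketch, assuming units ħ = m = 1, L = 1 by default, and a second-order finite-difference approximation for d²ψ/dx² (a standard numerical technique, not one described above); the function names are illustrative:

```python
import numpy as np


def box_levels_fd(L=1.0, n_points=1000, n_levels=3, hbar=1.0, m=1.0):
    """Lowest energies from a finite-difference Hamiltonian on the interior grid points."""
    h = L / (n_points + 1)              # grid spacing; psi = 0 at x = 0 and x = L (not stored)
    main = np.full(n_points, 2.0)
    off = np.full(n_points - 1, -1.0)
    # -d^2/dx^2 approximated by (-psi[i-1] + 2 psi[i] - psi[i+1]) / h^2
    laplacian = (np.diag(main) + np.diag(off, 1) + np.diag(off, -1)) / h**2
    hamiltonian = hbar**2 / (2 * m) * laplacian
    return np.linalg.eigvalsh(hamiltonian)[:n_levels]


def box_levels_exact(L=1.0, n_levels=3, hbar=1.0, m=1.0):
    """E_n = hbar^2 pi^2 n^2 / (2 m L^2) for n = 1, 2, ..."""
    n = np.arange(1, n_levels + 1)
    return (hbar * np.pi * n / L) ** 2 / (2 * m)


print(box_levels_fd())      # numerical
print(box_levels_exact())   # analytic: about 4.93, 19.74, 44.41
```

The finite-difference energies approach the analytic values as the grid is refined; for the lowest levels the relative error scales roughly as (nπh/L)²/12, where h is the grid spacing.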

Finite potential well

A finite potential well is the generalization of the infinite potential well problem to potential wells having finite depth.

The finite potential well problem is mathematically more complicated than the infinite particle-in-a-box problem, as the wave function is not pinned to zero at the walls of the well. Instead, the wave function and its first derivative must be continuous at the walls, and outside the well the wave function decays exponentially rather than vanishing.
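Matching the oscillating solution inside the well to the decaying solution outside reduces the bound-state problem to transcendental equations. For a well of width 2a centered on the origin (V = 0 inside, V₀ outside), write z = ka and z₀ = a√(2mV₀)/ħ; the even states satisfy z tan z = √(z₀² − z²) and the odd states −z cot z = √(z₀² − z²). A minimal Python sketch, assuming that convention, finds the roots by bisection, one in each interval of width π/2 that starts below z₀; the function names are illustrative:

```python
import math


def bisect(f, lo, hi, tol=1e-12):
    """Root of f on [lo, hi], assuming f(lo) < 0 < f(hi)."""
    flo = f(lo)
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        fmid = f(mid)
        if (fmid < 0) == (flo < 0):
            lo, flo = mid, fmid
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)


def finite_well_levels(z0):
    """Dimensionless bound-state roots z = k*a, alternating even and odd states."""
    eps = 1e-12
    roots = []
    j = 0
    while j * math.pi / 2 < z0:
        lo = j * math.pi / 2
        hi = min(lo + math.pi / 2, z0)
        if j % 2 == 0:   # even states: z tan z = sqrt(z0^2 - z^2)
            f = lambda z: z * math.tan(z) - math.sqrt(max(z0**2 - z**2, 0.0))
        else:            # odd states: -z cot z = sqrt(z0^2 - z^2)
            f = lambda z: -z / math.tan(z) - math.sqrt(max(z0**2 - z**2, 0.0))
        roots.append(bisect(f, lo + eps, hi - eps))
        j += 1
    return roots


print(finite_well_levels(4.0))   # three bound states
print(finite_well_levels(0.5))   # a shallow well still binds one state
```

Each root gives E = ħ²z²/(2ma²), which lies below the corresponding infinite-well level z = nπ/2. The number of bound states is finite but never zero: in one dimension even a shallow well has one.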

Harmonic oscillator

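As in the classical case, the potential for the quantum harmonic oscillator is given by

$$ V(x) = \frac{1}{2} m\omega^2 x^2. $$

This problem can be treated either by directly solving the Schrödinger equation, which is not trivial, or by using the more elegant "ladder method" first proposed by Paul Dirac. The eigenstates are given by

$$ \psi_n(x) = \frac{1}{\sqrt{2^n\, n!}} \left(\frac{m\omega}{\pi\hbar}\right)^{1/4} e^{-\frac{m\omega x^2}{2\hbar}} H_n\!\left(\sqrt{\frac{m\omega}{\hbar}}\, x\right), \qquad n = 0, 1, 2, \ldots $$

where H_n are the Hermite polynomials

$$ H_n(x) = (-1)^n e^{x^2} \frac{d^n}{dx^n}\left(e^{-x^2}\right), $$

and the corresponding energy levels are

$$ E_n = \hbar\omega\left(n + \frac{1}{2}\right). $$

This is another example illustrating the quantization of energy for bound states.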
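The energy formula can be checked independently of the ladder method by discretizing the Schrödinger equation on a grid. A minimal Python/NumPy sketch, assuming units ħ = m = ω = 1 (so that E_n = n + 1/2), a finite interval of half-width 8 standing in for the infinite line, and a second-order finite-difference kinetic term; the function name is illustrative:

```python
import numpy as np


def oscillator_levels(n_levels=4, x_max=8.0, n_points=1200):
    """Lowest eigenvalues of H = -1/2 d^2/dx^2 + x^2/2 (units hbar = m = omega = 1)."""
    x = np.linspace(-x_max, x_max, n_points)
    h = x[1] - x[0]
    # second-order central difference for the kinetic term; psi taken as 0 just outside the grid
    kinetic = (np.diag(np.full(n_points, 2.0))
               - np.diag(np.ones(n_points - 1), 1)
               - np.diag(np.ones(n_points - 1), -1)) / (2 * h**2)
    potential = np.diag(0.5 * x**2)
    return np.linalg.eigvalsh(kinetic + potential)[:n_levels]


print(oscillator_levels())   # close to 0.5, 1.5, 2.5, 3.5
```

The numerically obtained levels are evenly spaced by ħω and start at the zero-point energy ħω/2, in agreement with E_n above; unlike the particle in a box, the spacing does not grow with n.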