In probability theory and statistics, the Dirichlet process (DP) is one of the most popular Bayesian nonparametric models. It was introduced by Thomas Ferguson as a prior over probability distributions.

A Dirichlet process D P ( s , G 0 ) is completely defined by its parameters: G 0 (the base distribution or base measure) is an arbitrary distribution and s (the concentration parameter) is a positive real number (it is often denoted as α ). According to the Bayesian paradigma these parameters should be chosen based on the available prior information on the domain.

The question is: how should we choose the prior parameters ( s , G 0 ) of the DP, in particular the infinite dimensional one G 0 , in case of lack of prior information?

To address this issue, the only prior that has been proposed so far is the limiting DP obtained for s → 0 , which has been introduced under the name of Bayesian bootstrap by Rubin; in fact it can be proven that the Bayesian bootstrap is asymptotically equivalent to the frequentist bootstrap introduced by Bradley Efron. The limiting Dirichlet process s → 0 has been criticized on diverse grounds. From an a-priori point of view, the main criticism is that taking s → 0 is far from leading to a noninformative prior. Moreover, a-posteriori, it assigns zero probability to any set that does not include the observations.

The imprecise Dirichlet process has been proposed to overcome these issues. The basic idea is to fix s > 0 but do not choose any precise base measure G 0 .

More precisely, the imprecise Dirichlet process (IDP) is defined as follows:

I D P : { D P ( s , G 0 ) : G 0 ∈ P } where P is the set of all probability measures. In other words, the IDP is the set of all Dirichlet processes (with a fixed s > 0 ) obtained by letting the base measure G 0 to span the set of all probability measures.

Let P a probability distribution on ( X , B ) (here X is a standard Borel space with Borel σ -field B ) and assume that P ∼ D P ( s , G 0 ) . Then consider a real-valued bounded function f defined on ( X , B ) . It is well known that the expectation of E [ f ] with respect to the Dirichlet process is

E [ E ( f ) ] = E [ ∫ f d P ] = ∫ f d E [ P ] = ∫ f d G 0 . One of the most remarkable properties of the DP priors is that the posterior distribution of P is again a DP. Let X 1 , … , X n be an independent and identically distributed sample from P and P ∼ D p ( s , G 0 ) , then the posterior distribution of P given the observations is

P ∣ X 1 , … , X n ∼ D p ( s + n , G n ) , with G n = s s + n G 0 + 1 s + n ∑ i = 1 n δ X i , where δ X i is an atomic probability measure (Dirac's delta) centered at X i . Hence, it follows that E [ E ( f ) ∣ X 1 , … , X n ] = ∫ f d G n . Therefore, for any fixed G 0 , we can exploit the previous equations to derive prior and posterior expectations.

In the IDP G 0 can span the set of all distributions P . This implies that we will get a different prior and posterior expectation of E ( f ) for any choice of G 0 . A way to characterize inferences for the IDP is by computing lower and upper bounds for the expectation of E ( f ) w.r.t. G 0 ∈ P . A-priori these bounds are:

E _ [ E ( f ) ] = inf G 0 ∈ P ∫ f d G 0 = inf f , E ¯ [ E ( f ) ] = sup G 0 ∈ P ∫ f d G 0 = sup f , the lower (upper) bound is obtained by a probability measure that puts all the mass on the infimum (supremum) of f , i.e., G 0 = δ X 0 with X 0 = arg inf f (or respectively with X 0 = arg sup f ). From the above expressions of the lower and upper bounds, it can be observed that the range of E [ E ( f ) ] under the IDP is the same as the original range of f . In other words, by specifying the IDP, we are not giving any prior information on the value of the expectation of f . A-priori, IDP is therefore a model of prior (near)-ignorance for E ( f ) .

A-posteriori, IDP can learn from data. The posterior lower and upper bounds for the expectation of E ( f ) are in fact given by:

E _ [ E ( f ) ∣ X 1 , … , X n ] = inf G 0 ∈ P ∫ f d G n = s s + n inf f + ∫ f ( X ) 1 s + n ∑ i = 1 n δ X i ( d X ) = s s + n inf f + n s + n ∑ i = 1 n f ( X i ) n , E ¯ [ E ( f ) ∣ X 1 , … , X n ] = sup G 0 ∈ P ∫ f d G n = s s + n sup f + ∫ f ( X ) 1 s + n ∑ i = 1 n δ X i ( d X ) = s s + n sup f + n s + n ∑ i = 1 n f ( X i ) n . It can be observed that the posterior inferences do not depend on G 0 . To define the IDP, the modeler has only to choose s (the concentration parameter). This explains the meaning of the adjective near in prior near-ignorance, because the IDP requires by the modeller the elicitation of a parameter. However, this is a simple elicitation problem for a nonparametric prior, since we only have to choose the value of a positive scalar (there are not infinitely many parameters left in the IDP model).

Finally, observe that for n → ∞ , IDP satisfies

E _ [ E ( f ) ∣ X 1 , … , X n ] , E ¯ [ E ( f ) ∣ X 1 , … , X n ] → S ( f ) , where S ( f ) = lim n → ∞ 1 n ∑ i = 1 n f ( X i ) . In other words, the IDP is consistent.

The IDP is completely specified by s , which is the only parameter left in the prior model. Since the value of s determines how quickly lower and upper posterior expectations converge at the increase of the number of observations, s can be chosen so to match a certain convergence rate. The parameter s can also be chosen to have some desirable frequentist properties (e.g., credible intervals to be calibrated frequentist intervals, hypothesis tests to be calibrated for the Type I error, etc.), see Example: median test

Let X 1 , … , X n be i.i.d. real random variables with cumulative distribution function F ( x ) .

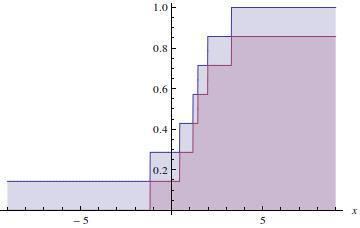

Since F ( x ) = E [ I ( ∞ , x ] ] , where I ( ∞ , x ] is the indicator function, we can use IDP to derive inferences about F ( x ) . The lower and upper posterior mean of F ( x ) are

E _ [ F ( x ) ∣ X 1 , … , X n ] = E _ [ E ( I ( ∞ , x ] ) ∣ X 1 , … , X n ] = n s + n ∑ i = 1 n I ( ∞ , x ] ( X i ) n = n s + n F ^ ( x ) , E ¯ [ F ( x ) ∣ X 1 , … , X n ] = E ¯ [ E ( I ( ∞ , x ] ) ∣ X 1 , … , X n ] = s s + n + n s + n ∑ i = 1 n I ( ∞ , x ] ( X i ) n = s s + n + n s + n F ^ ( x ) . where F ^ ( x ) is the empirical distribution function. Here, to obtain the lower we have exploited the fact that inf I ( ∞ , x ] = 0 and for the upper that sup I ( ∞ , x ] = 1 .

Note that, for any precise choice of G 0 (e.g., normal distribution N ( x ; 0 , 1 ) ), the posterior expectation of F ( x ) will be included between the lower and upper bound.

IDP can also be used for hypothesis testing, for instance to test the hypothesis F ( 0 ) < 0.5 , i.e., the median of F is greater than zero. By considering the partition ( − ∞ , 0 ] , ( 0 , ∞ ) and the property of the Dirichlet process, it can be shown that the posterior distribution of F ( 0 ) is

F ( 0 ) ∼ B e t a ( α 0 + n < 0 , β 0 + n − n < 0 ) where n < 0 is the number of observations that are less than zero,

α 0 = s ∫ − ∞ 0 d G 0 and

β 0 = s ∫ 0 ∞ d G 0 . By exploiting this property, it follows that

P _ [ F ( 0 ) < 0.5 ∣ X 1 , … , X n ] = ∫ 0 0.5 B e t a ( θ ; s + n < 0 , n − n < 0 ) d θ = I 1 / 2 ( s + n < 0 , n − n < 0 ) , P ¯ [ F ( 0 ) < 0.5 ∣ X 1 , … , X n ] = ∫ 0 0.5 B e t a ( θ ; n < 0 , s + n − n < 0 ) d θ = I 1 / 2 ( s + n < 0 , n − n < 0 ) . where I x ( α , β ) is the regularized incomplete beta function. We can thus perform the hypothesis test

P _ [ F ( 0 ) < 0.5 ∣ X 1 , … , X n ] > 1 − γ , P ¯ [ F ( 0 ) < 0.5 ∣ X 1 , … , X n ] > 1 − γ , (with 1 − γ = 0.95 for instance) and then

- if both the inequalities are satisfied we can declare that F ( 0 ) < 0.5 with probability larger than 1 − γ ;

- if only one of the inequality is satisfied (which has necessarily to be the one for the upper), we are in an indeterminate situation, i.e., we cannot decide;

- if both are not satisfied, we can declare that the probability that F ( 0 ) < 0.5 is lower than the desired probability of 1 − γ .

IDP returns an indeterminate decision when the decision is prior dependent (that is when it would depend on the choice of G 0 ).

By exploting the relationship between the cumulative distribution function of the Beta distribution, and the cumulative distribution function of a random variable Z from a binomial distribution, where the "probability of success" is p and the sample size is n:

F ( k ; n , p ) = Pr ( Z ≤ k ) = I 1 − p ( n − k , k + 1 ) = 1 − I p ( k + 1 , n − k ) , we can show that the median test derived with th IDP for any choice of s ≥ 1 encompasses the one-sided frequentist sign test as a test for the median. It can in fact be verified that for s = 1 the p -value of the sign test is equal to 1 − P _ [ F ( 0 ) < 0.5 ∣ X 1 , … , X n ] . Thus, if P _ [ F ( 0 ) < 0.5 ∣ X 1 , … , X n ] > 0.95 then the p -value is less than 0.05 and, thus, they two tests have the same power.

Dirichlet processes are frequently used in Bayesian nonparametric statistics. The Imprecise Dirichlet Process can be employed instead of the Dirichlet processes in any application in which prior information is lacking (it is therefore important to model this state of prior ignorance).

In this respect, the Imprecise Dirichlet Process has been used for nonparametric hypothesis testing, see the Imprecise Dirichlet Process statistical package. Based on the Imprecise Dirichlet Process, Bayesian nonparametric near-ignorance versions of the following classical nonparametric estimators have been derived: the Wilcoxon rank sum test and the Wilcoxon signed-rank test.

A Bayesian nonparametric near-ignorance model presents several advantages with respect to a traditional approach to hypothesis testing.

- The Bayesian approach allows us to formulate the hypothesis test as a decision problem. This means that we can verify the evidence in favor of the null hypothesis and not only rejecting it and take decisions which minimize the expected loss.

- Because of the nonparametric prior near-ignorance, IDP based tests allows us to start the hypothesis test with very weak prior assumptions, much in the direction of letting data speak for themselves.

- Although the IDP test shares several similarities with a standard Bayesian approach, at the same time it embodies a significant change of paradigm when it comes to take decisions. In fact the IDP based tests have the advantage of producing an indeterminate outcome when the decision is prior-dependent. In other words, the IDP test suspends the judgment when the option which minimizes the expected loss changes depending on the Dirichlet Process base measure we focus on.

- It has been empirically verified that when the IDP test is indeterminate, the frequentist tests are virtually behaving as random guessers. This surprising result has practical consequences in hypothesis testing. Assume that we are trying to compare the effects of two medical treatments (Y is better than X) and that, given the available data, the IDP test is indeterminate. In such a situation the frequentist test always issues a determinate response (for instance I can tell that Y is better than X), but it turns out that its response is completely random, like if we were tossing of a coin. On the other side, the IDP test acknowledges the impossibility of making a decision in these cases. Thus, by saying "I do not know", the IDP test provides a richer information to the analyst. The analyst could for instance use this information to collect more data.

For categorical variables, i.e., when X has a finite number of elements, it is known that the Dirichlet process reduces to a Dirichlet distribution. In this case, the Imprecise Dirichlet Process reduces to the Imprecise Dirichlet model proposed by Walley as a model for prior (near)-ignorance for chances.