
In probability theory, Dirichlet processes (after Peter Gustav Lejeune Dirichlet) are a family of stochastic processes whose realizations are probability distributions. In other words, a Dirichlet process is a probability distribution whose range is itself a set of probability distributions. It is often used in Bayesian inference to describe the prior knowledge about the distribution of random variables—how likely it is that the random variables are distributed according to one or another particular distribution.

Contents

- Introduction

- Formal definition

- Alternative views

- Use in Dirichlet mixture models

- Example 1

- Example 2

- The Chinese restaurant process

- The stick-breaking process

- The Pólya urn scheme

- Applications of the Dirichlet process

- Related distributions

- References

The Dirichlet process is specified by a base distribution $H$ and a positive real number $\alpha$ called the concentration parameter.

The Dirichlet process can also be seen as the infinite-dimensional generalization of the Dirichlet distribution. In the same way as the Dirichlet distribution is the conjugate prior for the categorical distribution, the Dirichlet process is the conjugate prior for infinite, nonparametric discrete distributions. A particularly important application of Dirichlet processes is as a prior probability distribution in infinite mixture models.

The Dirichlet process was formally introduced by Thomas Ferguson in 1973 and has since been applied in data mining and machine learning, with applications including natural language processing, computer vision and bioinformatics.

Introduction

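Dirichlet processes are usually used when modeling data that tends to repeat previous values in a "rich get richer" fashion. Specifically, suppose that the generation of values $X_1, X_2, \dots$ can be simulated by the following algorithm, where $H$ is the base distribution and $\alpha$ is a positive real number.

- Draw $X_1$ from the base distribution $H$.

- For $n > 1$:

a) With probability $\frac{\alpha}{\alpha + n - 1}$, draw $X_n$ from $H$.

b) With probability $\frac{n_x}{\alpha + n - 1}$, set $X_n = x$, where $n_x$ is the number of previous observations $X_j$, $j < n$, such that $X_j = x$.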
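The sequential scheme can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: it assumes the new-value probability is $\alpha/(\alpha + n - 1)$ and that a previous value $x$ is repeated with probability $n_x/(\alpha + n - 1)$. The names `sample_sequence` and `base_draw` are hypothetical, and a standard normal stands in for the base distribution $H$.

```python
import random


def sample_sequence(n, alpha, base_draw, rng):
    """Generate X_1..X_n with the sequential 'rich get richer' scheme."""
    xs = []
    for i in range(n):  # i = number of previous observations (i = n - 1 in the text)
        # Fresh draw from H with probability alpha / (alpha + i); for i == 0 this is 1.
        if rng.random() < alpha / (alpha + i):
            xs.append(base_draw(rng))
        else:
            # Choosing a previous observation uniformly repeats a value x with
            # conditional probability n_x / i, i.e. n_x / (alpha + i) overall.
            xs.append(rng.choice(xs))
    return xs


rng = random.Random(0)
# Assumption: H is a standard normal distribution, chosen only for illustration.
xs = sample_sequence(1000, alpha=2.0, base_draw=lambda r: r.gauss(0.0, 1.0), rng=rng)
print(len(xs), "draws,", len(set(xs)), "distinct values")
```

Although the base distribution here is continuous, the sequence contains far fewer distinct values than draws, which is the repetition behavior described above.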

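At the same time, another common model for data is that the observations $X_1, X_2, \dots$ are assumed to be independent and identically distributed (i.i.d.) according to some distribution $P$. The goal of introducing Dirichlet processes is to describe the procedure outlined above within this i.i.d. model.

The observations $X_1, X_2, \dots$ produced by the algorithm are not independent, since each new value depends on the previous ones. They are, however, exchangeable, so by de Finetti's theorem they are conditionally independent given a latent random distribution $P$. The distribution of this $P$, a distribution over distributions, is called the Dirichlet process $\mathrm{DP}(H, \alpha)$. This yields a procedure equivalent to the algorithm above:

- Draw a distribution $P$ from $\mathrm{DP}(H, \alpha)$.

- Draw observations $X_1, X_2, \dots$ independently from $P$.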

In practice, however, drawing a concrete distribution $P$ is impossible, since its full specification requires an infinite amount of information.

Formal definition

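Given a measurable set $S$, a base probability distribution $H$ and a positive real number $\alpha$, the Dirichlet process $\mathrm{DP}(H, \alpha)$ is a stochastic process whose realizations are probability distributions over $S$, such that the following holds. For any finite measurable partition $\{B_i\}_{i=1}^{n}$ of $S$, if $X \sim \mathrm{DP}(H, \alpha)$ then

$$(X(B_1), \dots, X(B_n)) \sim \operatorname{Dir}(\alpha H(B_1), \dots, \alpha H(B_n)),$$

where $\operatorname{Dir}$ denotes the Dirichlet distribution and the notation $X \sim D$ means that the random variable $X$ has the distribution $D$.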
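As a worked special case, consider a partition into just two sets. The block below assumes the defining property that, for $X \sim \mathrm{DP}(H, \alpha)$, the masses assigned to a finite measurable partition are jointly Dirichlet distributed with parameters $\alpha H(B_i)$. The set $B$ is an arbitrary measurable subset with $0 < H(B) < 1$, introduced here only for illustration.

```latex
\begin{aligned}
\bigl(X(B),\, X(S \setminus B)\bigr) &\sim \operatorname{Dir}\bigl(\alpha H(B),\, \alpha\,(1 - H(B))\bigr), \\
X(B) &\sim \operatorname{Beta}\bigl(\alpha H(B),\, \alpha\,(1 - H(B))\bigr), \\
\mathbb{E}[X(B)] &= \frac{\alpha H(B)}{\alpha} = H(B), \\
\operatorname{Var}[X(B)] &= \frac{H(B)\,\bigl(1 - H(B)\bigr)}{\alpha + 1}.
\end{aligned}
```

Under these assumptions the base distribution acts as the mean of the process, and a larger $\alpha$ concentrates the random mass $X(B)$ more tightly around $H(B)$.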

Alternative views

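There are several equivalent views of the Dirichlet process. Besides the definition above, the Dirichlet process can be defined implicitly through de Finetti's theorem as described in the first section; this is often called the Chinese restaurant process. A third alternative is the stick-breaking process, which defines the Dirichlet process constructively by writing a distribution sampled from the process as $f(\theta) = \sum_{k=1}^{\infty} \beta_k \delta_{\theta_k}(\theta)$, where the $\theta_k$ are samples from the base distribution $H$, $\delta_{\theta_k}$ is an indicator function centered on $\theta_k$ (zero everywhere except $\delta_{\theta_k}(\theta_k) = 1$), and the $\beta_k$ are defined by a recursive scheme that repeatedly samples from the beta distribution $\operatorname{Beta}(1, \alpha)$.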

Use in Dirichlet mixture models

To understand what Dirichlet processes are and the problem they solve we consider the example of data clustering. It is a common situation that data points are assumed to be distributed in a hierarchical fashion where each data point belongs to a (randomly chosen) cluster and the members of a cluster are further distributed randomly within that cluster.

Example 1

For example, we might be interested in how people will vote on a number of questions in an upcoming election. A reasonable model for this situation might be to classify each voter as a liberal, a conservative or a moderate and then model the event that a voter says “Yes” to any particular question as a Bernoulli random variable with probability dependent on which political cluster they belong to. By looking at how votes were cast in previous years on similar pieces of legislation one could fit a predictive model using a simple clustering algorithm such as k-means. That algorithm, however, requires knowing in advance the number of clusters that generated the data. In many situations it is not possible to determine this ahead of time, and even when we can reasonably assume a number of clusters we would still like to be able to check this assumption. For example, in the voting example above the division into liberal, conservative and moderate might not be fine-grained enough; attributes such as religion, class or race could also be critical for modeling voter behavior.

Example 2

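As another example, we might be interested in modeling the velocities of galaxies using a simple model assuming that the velocities are clustered, for instance by assuming each velocity is distributed according to the normal distribution $v_i \sim N(\mu_k, \sigma^2)$, where the $i$-th observation belongs to the $k$-th cluster of galaxies with common expected velocity $\mu_k$.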

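We consider this example in more detail. A first naive model is to presuppose that there are $K$ clusters of normally distributed velocities with common known fixed variance $\sigma^2$. Denoting the event that the $i$-th observation is in the $k$-th cluster as $z_i = k$, we can write this model as:

$$\begin{aligned}
(v_i \mid z_i = k, \mu_k) &\sim N(\mu_k, \sigma^2) \\
\mathrm{P}(z_i = k) &= \pi_k \\
(\boldsymbol{\pi} \mid \alpha) &\sim \operatorname{Dir}\left(\tfrac{\alpha}{K} \cdot \mathbf{1}_K\right) \\
\mu_k &\sim H(\lambda)
\end{aligned}$$

That is, we assume that the data belongs to $K$ distinct clusters with means $\mu_k$ and that $\pi_k$ is the (unknown) prior probability of a data point belonging to the $k$-th cluster. The symmetric prior $\operatorname{Dir}\left(\tfrac{\alpha}{K} \cdot \mathbf{1}_K\right)$, where $\mathbf{1}_K$ is a vector of $K$ ones, expresses that we have no initial information distinguishing the clusters. Each cluster mean is given the same independent prior $H(\lambda)$, a parametric distribution with parameters $\lambda$. The hyperparameters $\alpha$ and $\lambda$ are taken to be known fixed constants. To see the connection to Dirichlet process priors, we rewrite the model in an equivalent form:

$$\begin{aligned}
(v_i \mid \tilde{\mu}_i) &\sim N(\tilde{\mu}_i, \sigma^2) \\
\tilde{\mu}_i &\sim G = \sum_{k=1}^{K} \pi_k \delta_{\mu_k} \\
(\boldsymbol{\pi} \mid \alpha) &\sim \operatorname{Dir}\left(\tfrac{\alpha}{K} \cdot \mathbf{1}_K\right) \\
\mu_k &\sim H(\lambda)
\end{aligned}$$

Instead of imagining that each data point is first assigned a cluster and then drawn from the distribution associated to that cluster we now think of each observation being associated with parameter $\tilde{\mu}_i$ drawn from some discrete distribution $G$ with support on the $K$ means. Our prior information enters the model through the distribution over distributions $G$.

We would now like to extend this model to work without pre-specifying a fixed number of clusters $K$. Mathematically, this means selecting a random prior distribution $G = \sum_{k=1}^{\infty} \pi_k \delta_{\mu_k}$, where the cluster means $\mu_k$ are again independently distributed according to $H(\lambda)$ and the distribution over the $\pi_k$ is symmetric over the infinite set of clusters. This is exactly what is accomplished by the model:

$$\begin{aligned}
(v_i \mid \tilde{\mu}_i) &\sim N(\tilde{\mu}_i, \sigma^2) \\
\tilde{\mu}_i &\sim G \\
G &\sim \mathrm{DP}(H(\lambda), \alpha)
\end{aligned}$$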

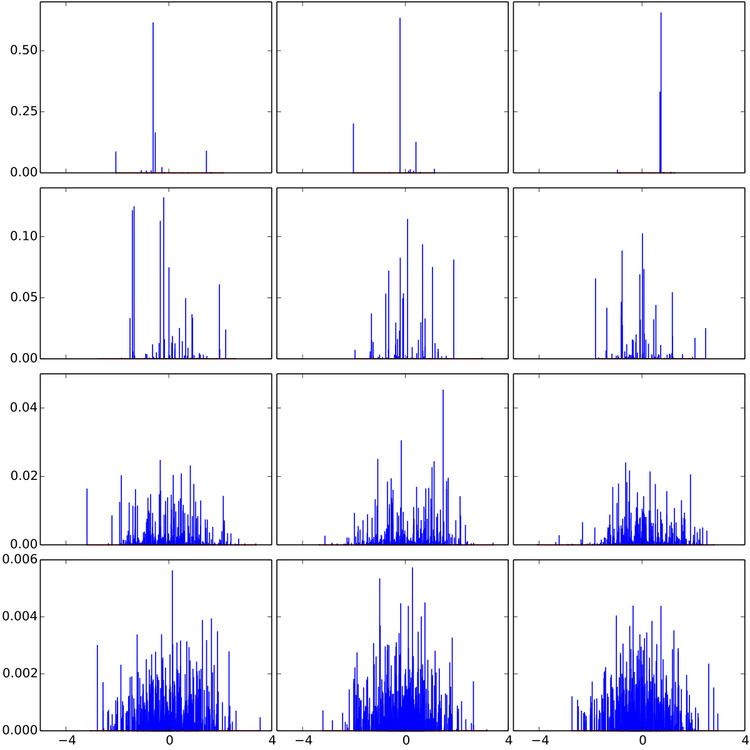

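With this in hand we can better understand the computational merits of the Dirichlet process. Suppose that we wanted to draw $n$ observations from the naive model with exactly $K$ clusters. A simple algorithm is to draw the $K$ cluster means from their prior, draw the vector of cluster probabilities from its symmetric Dirichlet prior, and then, for each observation independently, sample a cluster and then a value from that cluster's normal distribution. This approach does not carry over to infinitely many clusters, since it would require sampling an infinite-dimensional probability vector. It is nevertheless possible to sample observations, for example by using the Chinese restaurant representation described below, which never needs the full vector of cluster probabilities.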

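Fitting the model described above based on observed data $D$ means finding the posterior distribution over the cluster probabilities and their associated means. In the infinite-dimensional case it is impossible to write down the posterior explicitly, but it is possible to draw samples from it using a modified Gibbs sampler. This is the critical fact that makes the Dirichlet process prior useful for inference.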
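The following Python sketch shows one way to sample observations from such an infinite mixture without ever representing the full vector of cluster probabilities. It only covers forward sampling, not posterior inference. It assumes the Chinese restaurant rule (an existing cluster is chosen with probability proportional to its size, a new one with probability proportional to $\alpha$) and normal clusters with a common standard deviation `sigma`. The names `sample_dp_mixture` and `base_draw` are hypothetical, as is the wide normal used as the prior over cluster means.

```python
import random


def sample_dp_mixture(n, alpha, sigma, base_draw, rng):
    """Draw n observations from a DP mixture of normals without fixing K."""
    means, counts, data, labels = [], [], [], []
    for i in range(n):  # i observations have been generated so far
        # Existing cluster k carries weight counts[k]; a new cluster carries alpha.
        r = rng.random() * (alpha + i)
        k = 0
        while k < len(counts) and r >= counts[k]:
            r -= counts[k]
            k += 1
        if k == len(counts):  # r fell into the 'new cluster' mass
            means.append(base_draw(rng))  # cluster mean drawn from the base distribution
            counts.append(0)
        counts[k] += 1
        labels.append(k)
        data.append(rng.gauss(means[k], sigma))  # observation ~ N(mean_k, sigma^2)
    return data, labels, means


rng = random.Random(1)
# Assumption: cluster means come from N(0, 10^2); any other prior could be used.
data, labels, means = sample_dp_mixture(
    500, alpha=1.0, sigma=0.5, base_draw=lambda r: r.gauss(0.0, 10.0), rng=rng
)
print("clusters used:", len(means))
```

Only the clusters that are actually occupied are ever instantiated, so the number of clusters is an outcome of the simulation rather than an input.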

The Chinese restaurant process

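As shown above, a simple distribution, the so-called Chinese restaurant process, results from considering the conditional distribution of one component assignment given all previous ones in a Dirichlet distribution mixture model with $K$ components, and then taking the limit as $K$ goes to infinity.

Suppose that $J$ samples $\{\theta_j\}_{j=1}^{J}$ have already been obtained. According to the Chinese restaurant process, the $(J+1)$-th sample should be drawn from

$$\theta_{J+1} \sim \frac{1}{\alpha + J} \left( \alpha H + \sum_{j=1}^{J} \delta_{\theta_j} \right),$$

where $\delta_{\theta}$ is an atomic distribution centered on $\theta$. Interpreting this, two properties are clear:

- Even if $S$ is an uncountable set, there is a nonzero probability that two samples will have exactly the same value. Samples from a Dirichlet process are discrete.

- The Dirichlet process exhibits a self-reinforcing property; the more often a given value has been sampled in the past, the more likely it is to be sampled again.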

The name "Chinese restaurant process" is derived from the following analogy: imagine an infinitely large restaurant containing an infinite number of tables, and able to serve an infinite number of dishes. The restaurant in question operates a somewhat unusual seating policy whereby new diners are seated either at a currently occupied table with probability proportional to the number of guests already seated there, or at an empty table with probability proportional to a constant. Guests who sit at an occupied table must order the same dish as those currently seated, whereas guests allocated a new table are served a new dish at random. The distribution of dishes after $J$ customers are served is a sample drawn as described above.
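The seating policy is easy to simulate. The sketch below tracks only table sizes, not dishes, and assumes that the constant governing new tables is the concentration parameter $\alpha$. The helper names `chinese_restaurant` and `expected_tables` are hypothetical. The second function evaluates $\sum_{i=0}^{n-1} \alpha/(\alpha + i)$, the expected number of occupied tables under this seating rule, since customer $i+1$ opens a new table with probability $\alpha/(\alpha + i)$.

```python
import random


def chinese_restaurant(n, alpha, rng):
    """Seat n customers one by one and return the list of table sizes."""
    tables = []
    for _ in range(n):
        # Occupied tables are weighted by their size, a new table by alpha.
        weights = tables + [alpha]
        k = rng.choices(range(len(weights)), weights=weights)[0]
        if k == len(tables):
            tables.append(1)  # open a new table
        else:
            tables[k] += 1  # join an occupied table
    return tables


def expected_tables(n, alpha):
    """Expected number of occupied tables after n customers."""
    return sum(alpha / (alpha + i) for i in range(n))


rng = random.Random(3)
tables = chinese_restaurant(200, alpha=1.5, rng=rng)
print("tables:", len(tables), "expected about", round(expected_tables(200, 1.5), 2))
print("largest tables:", sorted(tables, reverse=True)[:5])
```

A few large tables and many small ones is the typical outcome, and the number of tables grows only slowly with the number of customers.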

The stick-breaking process

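A third approach to the Dirichlet process is the so-called stick-breaking process view. Remember that draws from a Dirichlet process are distributions over a set $S$. As noted previously, the distribution drawn is discrete with probability 1. In the stick-breaking process view, we explicitly use the discreteness and give the probability mass function of this (random) discrete distribution as:

$$f(\theta) = \sum_{k=1}^{\infty} \beta_k \, \delta_{\theta_k}(\theta),$$

where $\delta_{\theta_k}$ is the indicator function which evaluates to zero everywhere, except for $\delta_{\theta_k}(\theta_k) = 1$. Since this distribution is itself random, its mass function is parameterized by two sets of random variables: the locations $\{\theta_k\}_{k=1}^{\infty}$ and the corresponding probabilities $\{\beta_k\}_{k=1}^{\infty}$.

The locations $\theta_k$ are independent and identically distributed according to $H$, the base distribution of the Dirichlet process. The probabilities $\beta_k$ are given by a procedure resembling the breaking of a unit-length stick (hence the name):

$$\beta_k = \beta'_k \cdot \prod_{i=1}^{k-1} \left(1 - \beta'_i\right),$$

where the $\beta'_k$ are independent random variables with the beta distribution $\operatorname{Beta}(1, \alpha)$. We start with a unit-length stick and in each step break off a fraction $\beta'_k$ of the remaining stick, whose length is $\prod_{i=1}^{k-1} (1 - \beta'_i)$, and assign the broken-off piece to $\beta_k$.

The smaller $\alpha$ is, the less of the stick will be left for subsequent values (on average), yielding more concentrated distributions.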
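A truncated version of the stick-breaking construction can be sketched as follows. This is an approximation under stated assumptions: the break fractions are drawn from $\operatorname{Beta}(1, \alpha)$, the locations come from a base distribution supplied as the hypothetical callable `base_draw`, and the infinite sum is cut off once the unassigned stick length falls below a tolerance `tol`. The names `stick_breaking`, `tol` and `max_atoms` are illustrative.

```python
import random


def stick_breaking(alpha, base_draw, rng, tol=1e-8, max_atoms=10_000):
    """Return (weights, locations, leftover) of a truncated stick-breaking draw."""
    weights, locations = [], []
    remaining = 1.0  # length of the stick that has not been broken off yet
    while remaining > tol and len(weights) < max_atoms:
        frac = rng.betavariate(1.0, alpha)  # break fraction ~ Beta(1, alpha)
        weights.append(remaining * frac)  # piece broken off the remaining stick
        locations.append(base_draw(rng))  # location drawn from the base distribution
        remaining *= 1.0 - frac
    return weights, locations, remaining


rng = random.Random(0)
# Assumption: a standard normal base distribution, used only for illustration.
weights, locations, leftover = stick_breaking(
    alpha=2.0, base_draw=lambda r: r.gauss(0.0, 1.0), rng=rng
)
print(len(weights), "atoms, largest weight", round(max(weights), 3))
```

The returned weights and the leftover stick length sum to one up to rounding. Rerunning with a smaller `alpha` tends to put most of the mass on the first few atoms.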

The Pólya urn scheme

Yet another way to visualize the Dirichlet process and Chinese restaurant process is as a modified Pólya urn scheme. Imagine that we start with an urn filled with $\alpha$ black balls. Then we proceed as follows:

- Each time we need an observation, we draw a ball from the urn.

- If the ball is black, we generate a new (non-black) color uniformly, label a new ball this color, drop the new ball into the urn along with the ball we drew, and return the color we generated.

- Otherwise, label a new ball with the color of the ball we drew, drop the new ball into the urn along with the ball we drew, and return the color we observed.

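The resulting distribution over colors is the same as the distribution over tables in the Chinese restaurant process. Furthermore, when we draw a black ball, if rather than generating a new color, we instead pick a random value from a base distribution $H$ and use that value to label the new ball, the resulting distribution over labels will be the same as the distribution over values in a Dirichlet process.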

Applications of the Dirichlet process

Dirichlet processes are frequently used in Bayesian nonparametric statistics. "Nonparametric" here does not mean a parameter-less model, but rather a model in which representations grow as more data are observed. Bayesian nonparametric models have gained considerable popularity in the field of machine learning because of this flexibility, especially in unsupervised learning. In a Bayesian nonparametric model, the prior and posterior distributions are not parametric distributions, but stochastic processes. The fact that the Dirichlet distribution is a probability distribution on the simplex of sets of non-negative numbers that sum to one makes it a good candidate to model distributions over distributions or distributions over functions. Additionally, the nonparametric nature of this model makes it well suited to clustering problems where the number of distinct clusters is unknown beforehand.

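As draws from a Dirichlet process are discrete, an important use is as a prior probability in infinite mixture models. In this case, $S$ is the parametric set of component distributions. The generative process is therefore that a sample is drawn from a Dirichlet process and, for each data point in turn, a value is drawn from this sample distribution and used as the component distribution for that data point.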

The infinite nature of these models also lends them to natural language processing applications, where it is often desirable to treat the vocabulary as an infinite, discrete set.

The Dirichlet process can also be used for nonparametric hypothesis testing, that is, to develop Bayesian nonparametric versions of classical nonparametric hypothesis tests such as the sign test, the Wilcoxon rank sum test and the Wilcoxon signed-rank test. For instance, Bayesian nonparametric versions of the Wilcoxon rank sum test and the Wilcoxon signed-rank test have been developed by using the imprecise Dirichlet process, a prior ignorance Dirichlet process.