
In statistics, a probit model is a type of regression where the dependent variable can take only two values, for example married or not married. The word is a portmanteau of probability and unit. The purpose of the model is to estimate the probability that an observation with particular characteristics will fall into a specific one of the categories. Moreover, if estimated probabilities greater than 1/2 are treated as classifying an observation into a predicted category, the probit model is a type of binary classification model.

Contents

- Conceptual framework

- Maximum likelihood estimation

- Berkson's minimum chi-square method

- Gibbs sampling

- Model evaluation

- References

A probit model is a popular specification for a binary or ordinal response model. As such, it addresses the same set of problems as logistic regression, using similar techniques. The probit model, which employs a probit link function, is most often estimated using the standard maximum likelihood procedure; such an estimation is called a probit regression.

Probit models were introduced by Chester Bliss in 1934; a fast method for computing maximum likelihood estimates for them was proposed by Ronald Fisher as an appendix to Bliss' work in 1935.

Conceptual framework

Suppose a response variable Y is binary, that is, it can have only two possible outcomes, which we will denote as 1 and 0. For example, Y may represent the presence or absence of a certain condition, the success or failure of some device, or a yes/no answer on a survey. We also have a vector of regressors X, which are assumed to influence the outcome Y. Specifically, we assume that the model takes the form

$$\Pr(Y = 1 \mid X) = \Phi(X^{T}\beta),$$

where Pr denotes probability, and Φ is the cumulative distribution function (CDF) of the standard normal distribution. The parameters β are typically estimated by maximum likelihood.
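As a concrete illustration of the model equation, the sketch below evaluates Φ(Xᵀβ) row by row. It assumes NumPy and SciPy (`scipy.stats.norm.cdf` is the standard normal CDF); the function name `probit_probability` and the coefficient values are hypothetical, chosen only for the example.

```python
import numpy as np
from scipy.stats import norm

def probit_probability(X, beta):
    """Pr(Y = 1 | X) = Phi(X^T beta) for each row of the design matrix X."""
    X = np.atleast_2d(np.asarray(X, dtype=float))
    return norm.cdf(X @ np.asarray(beta, dtype=float))

# Hypothetical example: an intercept plus one regressor, with made-up coefficients.
beta = np.array([-0.5, 1.0])
X = np.array([[1.0, 0.5],    # linear index -0.5 + 0.5 = 0.0
              [1.0, 2.0]])   # linear index -0.5 + 2.0 = 1.5
p = probit_probability(X, beta)
print(p)  # first entry 0.5, second about 0.933
```

A linear index of zero always maps to probability 1/2, which is why the 1/2 classification threshold corresponds to the sign of Xᵀβ.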

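It is possible to motivate the probit model as a latent variable model. Suppose there exists an auxiliary random variable

$$Y^{*} = X^{T}\beta + \varepsilon,$$

where ε ~ N(0, 1). Then Y can be viewed as an indicator for whether this latent variable is positive:

$$Y = \begin{cases} 1 & \text{if } Y^{*} > 0, \\ 0 & \text{otherwise.} \end{cases}$$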

Using the standard normal distribution causes no loss of generality compared with an arbitrary mean and standard deviation: adding a fixed amount to the mean can be compensated by subtracting the same amount from the intercept, and multiplying the standard deviation by a fixed amount can be compensated by multiplying the weights by the same amount.
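The no-loss-of-generality argument can be checked numerically. This is a sketch assuming NumPy and SciPy. The weights, `mu` and `sigma` are arbitrary illustrative values. It compares the standard probit probabilities with those of a latent error ε ~ N(μ, σ²) after all weights are multiplied by σ and μ is subtracted from the intercept.

```python
import numpy as np
from scipy.stats import norm

rng = np.random.default_rng(0)
X = np.column_stack([np.ones(5), rng.normal(size=5)])  # intercept + one regressor
beta = np.array([0.3, -0.8])                            # hypothetical weights

# Standard probit: eps ~ N(0, 1), so Pr(Y = 1) = Pr(X beta + eps > 0) = Phi(X beta).
p_standard = norm.cdf(X @ beta)

# Same model with eps ~ N(mu, sigma^2): multiply all weights (intercept included)
# by sigma, then subtract mu from the intercept.
mu, sigma = 2.0, 3.0
beta_alt = sigma * beta
beta_alt[0] -= mu
# Pr(X beta_alt + eps > 0) with eps ~ N(mu, sigma^2) is the normal survival function at 0.
p_alt = norm.sf(0.0, loc=X @ beta_alt + mu, scale=sigma)

print(np.allclose(p_standard, p_alt))  # True
```

The adjusted linear index is σXᵀβ, and dividing it by σ recovers Φ(Xᵀβ). The intercept and scale of the latent error are therefore not separately identified.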

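To see that the two models are equivalent, note that

$$\Pr(Y = 1 \mid X) = \Pr(Y^{*} > 0) = \Pr(X^{T}\beta + \varepsilon > 0) = \Pr(\varepsilon > -X^{T}\beta) = \Pr(\varepsilon < X^{T}\beta) = \Phi(X^{T}\beta),$$

where the second-to-last step uses the symmetry of the standard normal distribution about zero.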
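The equivalence can also be checked by simulation. The sketch below draws many latent values Y* = xᵀβ + ε for one fixed regressor vector and compares the share of positive draws with Φ(xᵀβ). It assumes NumPy and SciPy, and the coefficients and regressor values are made up for the example.

```python
import numpy as np
from scipy.stats import norm

rng = np.random.default_rng(42)
n = 200_000
beta = np.array([0.2, 0.7])          # hypothetical coefficients (intercept first)
x = np.array([1.0, 0.5])             # one fixed regressor vector

eps = rng.standard_normal(n)          # eps ~ N(0, 1)
y_star = x @ beta + eps               # latent variable Y* = x^T beta + eps
y = (y_star > 0).astype(int)          # observed indicator Y = 1{Y* > 0}

empirical = y.mean()                  # Monte Carlo estimate of Pr(Y = 1 | x)
theoretical = norm.cdf(x @ beta)      # Phi(x^T beta)
print(empirical, theoretical)
```

With this many draws the two numbers typically agree to about two decimal places. The remaining gap is Monte Carlo noise.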

Maximum likelihood estimation

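Suppose the data set $\{y_i, x_i\}_{i=1}^{n}$ contains n independent statistical units corresponding to the model above. For a single observation, conditional on its vector of inputs,

$$\Pr(y_i = 1 \mid x_i) = \Phi(x_i^{T}\beta), \qquad \Pr(y_i = 0 \mid x_i) = 1 - \Phi(x_i^{T}\beta),$$

so the log-likelihood function is

$$\ln \mathcal{L}(\beta; Y, X) = \sum_{i=1}^{n} \Big( y_i \ln \Phi(x_i^{T}\beta) + (1 - y_i) \ln\big(1 - \Phi(x_i^{T}\beta)\big) \Big).$$

The estimator $\hat\beta$ that maximizes this function is consistent, asymptotically normal and efficient, provided that $\operatorname{E}[XX^{T}]$ exists and is nonsingular.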
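A minimal sketch of maximizing this log-likelihood numerically follows. It assumes NumPy and SciPy and uses `scipy.optimize.minimize` with BFGS as a general-purpose optimizer, which is not Fisher's original method. The names `probit_negloglik`, `probit_negloglik_grad` and `fit_probit` are hypothetical. The term ln(1 − Φ(z)) is computed as ln Φ(−z) for numerical stability. The objective is averaged over observations, which does not change the maximizer.

```python
import numpy as np
from scipy.optimize import minimize
from scipy.stats import norm

def probit_negloglik(beta, X, y):
    """Average negative probit log-likelihood; ln(1 - Phi(z)) is written as ln Phi(-z)."""
    z = X @ beta
    return -np.mean(y * norm.logcdf(z) + (1 - y) * norm.logcdf(-z))

def probit_negloglik_grad(beta, X, y):
    """Gradient of the average negative log-likelihood with respect to beta."""
    z = X @ beta
    # d/dz ln Phi(z) = phi(z)/Phi(z);  d/dz ln Phi(-z) = -phi(z)/Phi(-z)
    w = (y * np.exp(norm.logpdf(z) - norm.logcdf(z))
         - (1 - y) * np.exp(norm.logpdf(z) - norm.logcdf(-z)))
    return -(X.T @ w) / X.shape[0]

def fit_probit(X, y):
    beta0 = np.zeros(X.shape[1])
    res = minimize(probit_negloglik, beta0, args=(X, y),
                   jac=probit_negloglik_grad, method="BFGS")
    return res.x

# Simulated data from the latent-variable model with hypothetical true coefficients.
rng = np.random.default_rng(1)
n = 20_000
X = np.column_stack([np.ones(n), rng.normal(size=n)])
beta_true = np.array([0.5, -1.0])
y = (X @ beta_true + rng.standard_normal(n) > 0).astype(float)

beta_hat = fit_probit(X, y)
print(beta_hat)  # close to [0.5, -1.0]
```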

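The asymptotic distribution of $\hat\beta$ is

$$\sqrt{n}\,(\hat\beta - \beta) \xrightarrow{d} \mathcal{N}(0, \Omega^{-1}),$$

where

$$\Omega = \operatorname{E}\left[ \frac{\varphi^{2}(X^{T}\beta)}{\Phi(X^{T}\beta)\big(1 - \Phi(X^{T}\beta)\big)} X X^{T} \right], \qquad \hat\Omega = \frac{1}{n} \sum_{i=1}^{n} \frac{\varphi^{2}(x_i^{T}\hat\beta)}{\Phi(x_i^{T}\hat\beta)\big(1 - \Phi(x_i^{T}\hat\beta)\big)} x_i x_i^{T},$$

and φ = Φ' is the probability density function (PDF) of the standard normal distribution.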
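Because √n(β̂ − β) is approximately N(0, Ω⁻¹), the covariance of β̂ itself is approximately Ω̂⁻¹/n. The sketch below computes the sample analogue Ω̂ and the resulting standard errors. It assumes NumPy and SciPy. The function name `probit_asymptotic_cov` is hypothetical. To keep the block self-contained, it is evaluated at a made-up coefficient vector rather than at a fitted estimate.

```python
import numpy as np
from scipy.stats import norm

def probit_asymptotic_cov(X, beta_hat):
    """Estimated covariance of beta_hat: inverse of Omega_hat divided by n,
    where Omega_hat is the sample analogue of Omega evaluated at beta_hat."""
    n = X.shape[0]
    z = X @ beta_hat
    Phi = norm.cdf(z)
    phi = norm.pdf(z)
    weights = phi**2 / (Phi * (1.0 - Phi))            # one weight per observation
    omega_hat = (X * weights[:, None]).T @ X / n       # (1/n) sum_i w_i x_i x_i^T
    return np.linalg.inv(omega_hat) / n

rng = np.random.default_rng(2)
n = 5_000
X = np.column_stack([np.ones(n), rng.normal(size=n)])
beta_hat = np.array([0.5, -1.0])  # hypothetical estimate; in practice use the MLE
cov = probit_asymptotic_cov(X, beta_hat)
std_errors = np.sqrt(np.diag(cov))
print(std_errors)
```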

Berkson's minimum chi-square method

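This method can be applied only when there are many observations of the response variable $y_i$ sharing the same value of the regressor vector $x_i$ (a situation sometimes described as "many observations per cell").

Suppose that among the n observations $\{y_i, x_i\}_{i=1}^{n}$ there are only T distinct values of the regressors, denoted $\{x_{(1)}, \ldots, x_{(T)}\}$. Let $n_t$ be the number of observations with $x_i = x_{(t)}$, and $r_t$ the number of those observations with $y_i = 1$. We assume that every cell does contain many observations: for each t, $\lim_{n \to \infty} n_t / n = c_t > 0$.

Denote

$$\hat p_t = \frac{r_t}{n_t}, \qquad \hat\sigma_t^{2} = \frac{\hat p_t (1 - \hat p_t)}{n_t \, \varphi^{2}\big(\Phi^{-1}(\hat p_t)\big)}.$$

Then Berkson's minimum chi-square estimator is a generalized least squares estimator in a regression of $\Phi^{-1}(\hat p_t)$ on $x_{(t)}$ with weights $\hat\sigma_t^{-2}$:

$$\hat\beta = \Big( \sum_{t=1}^{T} \hat\sigma_t^{-2} x_{(t)} x_{(t)}^{T} \Big)^{-1} \sum_{t=1}^{T} \hat\sigma_t^{-2} x_{(t)} \, \Phi^{-1}(\hat p_t).$$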

It can be shown that this estimator is consistent (as n → ∞ with T fixed), asymptotically normal and efficient. Its advantage is that it has a closed-form formula. However, it is only meaningful to carry out this analysis when individual observations are not available, only their aggregated counts (the number of observations and the number of successes for each distinct value of the regressors).
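The closed-form weighted least squares computation can be sketched as follows, assuming NumPy and SciPy (`norm.ppf` is Φ⁻¹). The function name `berkson_min_chi2` is hypothetical. The sketch assumes 0 < r_t < n_t in every cell so that Φ⁻¹(p̂_t) is finite. The grouped data are simulated from made-up coefficients.

```python
import numpy as np
from scipy.stats import norm

def berkson_min_chi2(x_cells, n_t, r_t):
    """Berkson's minimum chi-square estimator for grouped probit data.

    x_cells: (T, k) array of distinct regressor vectors x_(t)
    n_t:     (T,) number of observations in each cell
    r_t:     (T,) number of those observations with y = 1 (needs 0 < r_t < n_t)
    """
    p_hat = r_t / n_t
    z = norm.ppf(p_hat)                                      # Phi^{-1}(p_hat_t)
    sigma2 = p_hat * (1 - p_hat) / (n_t * norm.pdf(z) ** 2)  # sigma_hat_t^2
    w = 1.0 / sigma2                                         # GLS weights sigma_hat_t^{-2}
    A = (x_cells * w[:, None]).T @ x_cells                   # sum_t w_t x_(t) x_(t)^T
    b = (x_cells * w[:, None]).T @ z                         # sum_t w_t x_(t) Phi^{-1}(p_hat_t)
    return np.linalg.solve(A, b)

# Hypothetical grouped data: 5 cells, 2000 observations each.
rng = np.random.default_rng(3)
x_cells = np.column_stack([np.ones(5), np.linspace(-1.0, 1.0, 5)])
beta_true = np.array([0.2, 0.8])
n_t = np.full(5, 2_000)
r_t = rng.binomial(n_t, norm.cdf(x_cells @ beta_true))

beta_hat = berkson_min_chi2(x_cells, n_t, r_t)
print(beta_hat)  # close to [0.2, 0.8]
```

Solving the normal equations with `np.linalg.solve` avoids forming the explicit inverse that appears in the formula.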

Gibbs sampling

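Gibbs sampling of a probit model is possible because regression models typically use normal prior distributions over the weights, and this distribution is conjugate with the normal distribution of the errors (and hence of the latent variables Y*). The model can be described as

$$\begin{aligned}
\beta &\sim \mathcal{N}(b_0, B_0), \\
y_i^{*} \mid x_i, \beta &\sim \mathcal{N}(x_i^{T}\beta, 1), \\
y_i &= \begin{cases} 1 & \text{if } y_i^{*} > 0, \\ 0 & \text{otherwise.} \end{cases}
\end{aligned}$$

From this, we can determine the full conditional densities needed:

$$\begin{aligned}
B &= (B_0^{-1} + X^{T}X)^{-1}, \\
\beta \mid y^{*} &\sim \mathcal{N}\big(B(B_0^{-1} b_0 + X^{T} y^{*}), B\big), \\
y_i^{*} \mid y_i = 0, x_i, \beta &\sim \mathcal{N}(x_i^{T}\beta, 1) \text{ truncated to } (-\infty, 0], \\
y_i^{*} \mid y_i = 1, x_i, \beta &\sim \mathcal{N}(x_i^{T}\beta, 1) \text{ truncated to } (0, \infty).
\end{aligned}$$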

The result for β is given in the article on Bayesian linear regression, although specified with different notation.

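The only subtlety is in the last two equations: the observed outcome of each observation restricts the support of its latent variable, so each latent variable must be drawn from a truncated normal distribution. The notation rtnorm() denotes a routine for generating such truncated-normal samples.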
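A minimal sketch of the resulting Gibbs sampler follows, assuming NumPy and SciPy. It alternates two kinds of draws. The first is $\beta \mid y^{*} \sim \mathcal{N}\big(B(B_0^{-1}b_0 + X^{T}y^{*}), B\big)$ with $B = (B_0^{-1} + X^{T}X)^{-1}$. The second draws each latent value from a unit-variance normal centred at $x_i^{T}\beta$, truncated to the side of zero consistent with $y_i$. In place of an rtnorm() routine, the truncated draws use inverse-CDF sampling on the lower tail, a swapped-in technique. The function names, the vague prior b₀ = 0 and B₀ = 100·I, the burn-in length and the simulated data are all illustrative assumptions.

```python
import numpy as np
from scipy.stats import norm

def draw_latent(mean, y, rng):
    """Draw y*_i ~ N(mean_i, 1) truncated to (0, inf) if y_i = 1, or (-inf, 0] if y_i = 0.
    Hypothetical helper using inverse-CDF sampling on the lower tail."""
    upper = np.where(y == 1, mean, -mean)   # s = -e (y = 1) or s = e (y = 0) must satisfy s <= upper
    u = 1.0 - rng.random(mean.shape)        # uniform on (0, 1], avoids ppf(0) = -inf
    s = norm.ppf(u * norm.cdf(upper))       # standard normal truncated to (-inf, upper]
    e = np.where(y == 1, -s, s)
    return mean + e

def probit_gibbs(X, y, b0, B0, n_iter=1500, seed=0):
    """Hypothetical Gibbs sampler alternating y* | y, beta and beta | y*."""
    rng = np.random.default_rng(seed)
    k = X.shape[1]
    B0_inv = np.linalg.inv(B0)
    B = np.linalg.inv(B0_inv + X.T @ X)      # posterior covariance of beta given y*
    chol_B = np.linalg.cholesky(B)
    beta = np.zeros(k)
    draws = np.empty((n_iter, k))
    for t in range(n_iter):
        y_star = draw_latent(X @ beta, y, rng)            # y* | y, beta
        mean_beta = B @ (B0_inv @ b0 + X.T @ y_star)      # mean of beta | y*
        beta = mean_beta + chol_B @ rng.standard_normal(k)
        draws[t] = beta
    return draws

# Simulated data with hypothetical true coefficients.
rng = np.random.default_rng(5)
n = 2_000
X = np.column_stack([np.ones(n), rng.normal(size=n)])
beta_true = np.array([0.5, -1.0])
y = (X @ beta_true + rng.standard_normal(n) > 0).astype(int)

draws = probit_gibbs(X, y, b0=np.zeros(2), B0=100.0 * np.eye(2))
posterior_mean = draws[500:].mean(axis=0)   # discard the first 500 draws as burn-in
print(posterior_mean)
```

Since the latent variance is fixed at 1, B does not change between iterations. It and its Cholesky factor are therefore computed once, outside the loop.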

Model evaluation

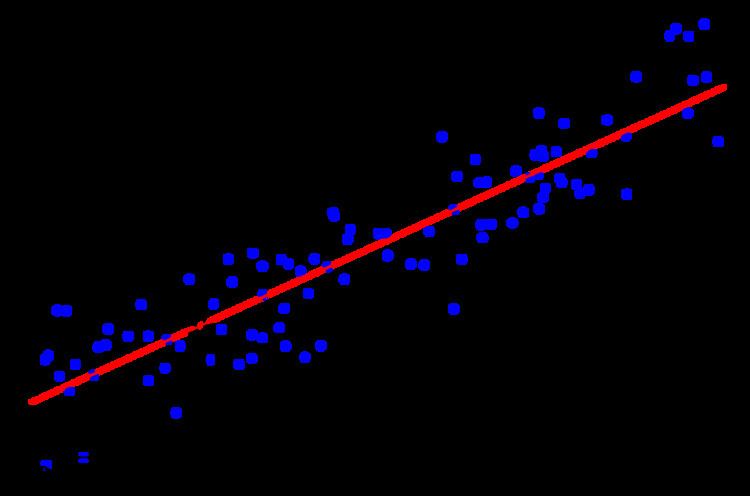

The suitability of an estimated binary model can be evaluated by counting how many of the observations whose true value is 1, and how many of those whose true value is 0, the model classifies correctly. Any estimated probability above 1/2 (or below 1/2) is treated as a prediction of 1 (or of 0).
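The counting rule can be sketched as follows, assuming NumPy. The function name `classification_counts` and the toy data are hypothetical. The text does not say how an estimated probability of exactly 1/2 is handled. This sketch assumes it is classified as 0.

```python
import numpy as np

def classification_counts(y_true, p_hat, threshold=0.5):
    """Return (correct ones, total ones, correct zeros, total zeros), predicting 1
    when the estimated probability exceeds the threshold."""
    y_true = np.asarray(y_true)
    y_pred = (np.asarray(p_hat) > threshold).astype(int)
    correct_ones = int(np.sum((y_true == 1) & (y_pred == 1)))
    correct_zeros = int(np.sum((y_true == 0) & (y_pred == 0)))
    return correct_ones, int(np.sum(y_true == 1)), correct_zeros, int(np.sum(y_true == 0))

y_true = [1, 1, 0, 0, 1]
p_hat = [0.9, 0.4, 0.2, 0.7, 0.6]
print(classification_counts(y_true, p_hat))  # (2, 3, 1, 2)
```

Reporting the two groups separately shows whether the model does well on both outcomes or only on the more common one.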