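In statistical decision theory, where we are faced with the problem of estimating a deterministic parameter (vector) $\theta \in \Theta$ from observations $x \in \mathcal{X}$, an estimator (estimation rule) $\delta^M$ is called minimax if its maximal risk is minimal among all estimators of $\theta$. In a sense, this means that $\delta^M$ performs best in the worst possible case allowed in the problem.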

Problem setup

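Consider the problem of estimating a deterministic (not Bayesian) parameter $\theta \in \Theta$ from noisy or corrupted data $x \in \mathcal{X}$, related to $\theta$ through the conditional probability distribution $P(x \mid \theta)$. The goal is to find a "good" estimator $\delta(x)$ of $\theta$ that minimizes a given risk function $R(\theta,\delta)$. Here the risk function is the expectation of a loss function $L(\theta,\delta)$ with respect to $P(x \mid \theta)$. A popular loss function is the squared error loss $L(\theta,\delta) = \|\theta - \delta\|^2$, whose risk function is the mean squared error (MSE).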

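Unfortunately, the risk cannot in general be minimized, since it depends on the unknown parameter $\theta$ itself (if the true value of $\theta$ were known, there would be no need to estimate it). Additional criteria are therefore needed to single out an estimator that is optimal in some sense. One such criterion is the minimax criterion.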

Definition

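Definition: An estimator $\delta^M : \mathcal{X} \to \Theta$ is called minimax with respect to a risk function $R(\theta,\delta)$ if it achieves the smallest maximum risk among all estimators, that is, if it satisfies $\sup_{\theta \in \Theta} R(\theta,\delta^M) = \inf_{\delta} \sup_{\theta \in \Theta} R(\theta,\delta)$.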

Least favorable distribution

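Logically, an estimator is minimax when it is the best in the worst case. Continuing this logic, a minimax estimator should be a Bayes estimator with respect to a least favorable prior distribution of $\theta$. To make this precise, denote the average risk of the Bayes estimator $\delta_\pi$ with respect to a prior distribution $\pi$ by $r_\pi = \int R(\theta,\delta_\pi)\,d\pi(\theta)$.

Definition: A prior distribution $\pi$ is called least favorable if, for every other prior distribution $\pi'$, the average risk satisfies $r_\pi \ge r_{\pi'}$.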

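Theorem 1: If $r_\pi = \sup_\theta R(\theta,\delta_\pi)$, where $\delta_\pi$ is the Bayes estimator for the prior $\pi$ and $r_\pi$ is its Bayes risk, then:

- $\delta_\pi$ is minimax.
- If $\delta_\pi$ is a unique Bayes estimator, it is also the unique minimax estimator.
- $\pi$ is least favorable.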
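
The first claim follows from a short chain of inequalities. The sketch below assumes the theorem's hypothesis is that the Bayes risk $r_\pi$ equals the maximal risk of the Bayes estimator $\delta_\pi$, and lets $\delta$ denote an arbitrary competing estimator:

$$
\sup_\theta R(\theta,\delta) \;\ge\; \int R(\theta,\delta)\,d\pi(\theta) \;\ge\; \int R(\theta,\delta_\pi)\,d\pi(\theta) \;=\; r_\pi \;=\; \sup_\theta R(\theta,\delta_\pi).
$$

The first inequality holds because a supremum is never smaller than an average, the second because $\delta_\pi$ minimizes the average risk under $\pi$, and the last equality is the hypothesis. Hence no estimator has a smaller maximal risk than $\delta_\pi$.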

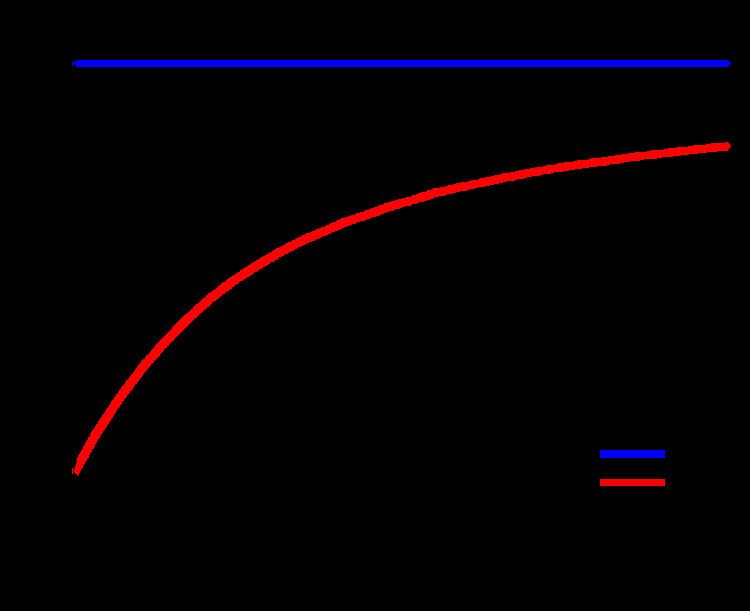

Corollary: If a Bayes estimator has constant risk, it is minimax. Note that this is not a necessary condition.

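Example 1, Unfair coin: Consider the problem of estimating the "success" rate of a Binomial variable, $x \sim B(n,\theta)$. This may be viewed as estimating the rate at which an unfair coin lands on heads. Under squared error loss, the Bayes estimator with respect to the Beta-distributed prior $\theta \sim \mathrm{Beta}(\sqrt{n}/2, \sqrt{n}/2)$ is $\delta^M = \frac{x + 0.5\sqrt{n}}{n + \sqrt{n}}$, with constant Bayes risk $r = \frac{1}{4(1+\sqrt{n})^2}$ and, according to the Corollary, is minimax.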
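
The constant-risk property can be checked numerically. The following is a minimal sketch, assuming squared error loss, $x \sim B(n,\theta)$, and the estimator $(x + 0.5\sqrt{n})/(n + \sqrt{n})$ obtained from a $\mathrm{Beta}(\sqrt{n}/2, \sqrt{n}/2)$ prior; the function names and the choice $n = 25$ are illustrative only. It computes the exact risk on a grid of $\theta$ values and compares it with the maximum likelihood estimator $x/n$.

```python
from math import comb, sqrt

def risk(estimator, n, theta):
    """Exact MSE E[(estimator(x) - theta)^2] for x ~ Binomial(n, theta)."""
    return sum(
        comb(n, x) * theta**x * (1 - theta) ** (n - x) * (estimator(x) - theta) ** 2
        for x in range(n + 1)
    )

def make_minimax(n):
    # Bayes estimator under the Beta(sqrt(n)/2, sqrt(n)/2) prior (assumed setup)
    return lambda x: (x + 0.5 * sqrt(n)) / (n + sqrt(n))

def make_mle(n):
    # Maximum likelihood estimator of the success rate
    return lambda x: x / n

n = 25  # illustrative sample size
thetas = [i / 100 for i in range(101)]  # grid over [0, 1]

minimax_risks = [risk(make_minimax(n), n, t) for t in thetas]
mle_risks = [risk(make_mle(n), n, t) for t in thetas]
closed_form = 1 / (4 * (1 + sqrt(n)) ** 2)

# The minimax estimator's risk is flat in theta and matches the closed form;
# the MLE's risk theta(1 - theta)/n peaks at theta = 1/2.
print("minimax risk range:", min(minimax_risks), max(minimax_risks))
print("closed form:       ", closed_form)
print("MLE maximal risk:  ", max(mle_risks))
```

On this grid the maximum likelihood estimator has lower risk near $\theta = 0$ and $\theta = 1$ but a higher worst-case risk, which is the trade-off the minimax criterion resolves in favor of the worst case.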

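Definition: A sequence of prior distributions $\pi_n$ is called least favorable if, for any other prior distribution $\pi'$, $\lim_{n\to\infty} r_{\pi_n} \ge r_{\pi'}$.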

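Theorem 2: If there exist a sequence of priors $\pi_n$ and an estimator $\delta$ such that $\sup_\theta R(\theta,\delta) = \lim_{n\to\infty} r_{\pi_n}$, then:

- $\delta$ is minimax.
- The sequence $\pi_n$ is least favorable.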

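Notice that no uniqueness is guaranteed here. For example, the ML estimator of Example 2 may be attained as the limit of Bayes estimators with respect to a uniform prior, $\pi_n \sim U[-n,n]$, with increasing support, and also with respect to a zero-mean normal prior, $\pi_n \sim N(0, n\sigma^2)$, with increasing variance. So neither the resulting ML estimator is the unique minimax estimator, nor is the least favorable prior unique.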

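Example 2: Consider the problem of estimating the mean of a $p$-dimensional Gaussian random vector, $x \sim N(\theta, I_p\sigma^2)$. The maximum likelihood (ML) estimator of $\theta$ in this case is simply $\delta_{ML} = x$, and its risk is $R(\theta,\delta_{ML}) = E\|\delta_{ML} - \theta\|^2 = \sum_{i=1}^{p} E(x_i - \theta_i)^2 = p\sigma^2$.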

The risk is constant, but the ML estimator is actually not a Bayes estimator, so the Corollary of Theorem 1 does not apply. However, the ML estimator is the limit of the Bayes estimators with respect to the prior sequence $\pi_n \sim N(0, n\sigma^2)$, and is hence minimax according to Theorem 2.
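
The limiting argument can be made explicit. The derivation below is a sketch under stated assumptions: squared error loss, $x \sim N(\theta, \sigma^2 I_p)$, and the prior sequence $\pi_n$ taken to be $\theta \sim N(0, n\sigma^2 I_p)$. The posterior mean, which is the Bayes estimator under squared error loss, and its Bayes risk are then

$$
\delta_{\pi_n}(x) = \frac{n\sigma^2}{n\sigma^2 + \sigma^2}\,x = \frac{n}{n+1}\,x,
\qquad
r_{\pi_n} = p\,\frac{\sigma^2 \cdot n\sigma^2}{\sigma^2 + n\sigma^2} = \frac{n}{n+1}\,p\sigma^2 .
$$

As $n \to \infty$, $\delta_{\pi_n}(x) \to x$ and $r_{\pi_n} \to p\sigma^2$, which equals the constant risk of the ML estimator. This is the equality between maximal risk and limiting Bayes risk that a limiting-prior argument needs.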

Some examples

In general, it is difficult, and often even impossible, to determine the minimax estimator. Nonetheless, in many cases a minimax estimator has been determined.

Example 3, Bounded Normal Mean: When estimating the mean of a normal vector

where

Asymptotic minimax estimator

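The difficulty of determining the exact minimax estimator has motivated the study of asymptotically minimax estimators: an estimator $\delta'$ is called $c$-asymptotic (or approximate) minimax if $\sup_\theta R(\theta,\delta') \le c \inf_\delta \sup_\theta R(\theta,\delta)$.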
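
As an illustration, consider estimating a Binomial success rate under squared error loss. The sketch below takes "approximately minimax" to mean that an estimator's maximal risk is within a factor $c$ of the minimax risk, which is an assumption about the intended definition. It uses two known closed forms for $x \sim B(n,\theta)$: the maximal risk $1/(4n)$ of the maximum likelihood estimator $x/n$, and the minimax risk $1/(4(1+\sqrt{n})^2)$. The function names are illustrative.

```python
from math import sqrt

def mle_max_risk(n):
    # sup over theta of theta(1 - theta)/n, attained at theta = 1/2
    return 1 / (4 * n)

def minimax_risk(n):
    # Minimax risk for the Binomial rate under squared error loss
    return 1 / (4 * (1 + sqrt(n)) ** 2)

def approximation_factor(n):
    # Smallest c with mle_max_risk(n) <= c * minimax_risk(n);
    # algebraically this equals (1 + 1/sqrt(n))**2
    return mle_max_risk(n) / minimax_risk(n)

for n in (10, 100, 10_000, 1_000_000):
    print(n, approximation_factor(n))
```

The factor exceeds 1 for every finite $n$ but decreases toward 1 as $n$ grows, so in this example the maximum likelihood estimator is not minimax yet becomes approximately minimax for large samples.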

For many estimation problems, especially in the non-parametric estimation setting, various approximate minimax estimators have been established. The design of approximate minimax estimators is intimately related to the geometry of $\Theta$, such as its metric entropy number.

Relationship to Robust Optimization

Robust optimization is an approach to solving optimization problems under uncertainty in the knowledge of underlying parameters. For instance, MMSE Bayesian estimation of a parameter requires knowledge of the parameter's correlation function. If this correlation function is not perfectly known, a popular minimax robust optimization approach is to define a set characterizing the uncertainty about the correlation function, and then to pursue a minimax optimization over the uncertainty set and the estimator respectively. Similar minimax optimizations can be pursued to make estimators robust to certain imprecisely known parameters. Such techniques have been studied, for instance, in the area of signal processing.

R. Fandom Noubiap and W. Seidel (2001) developed an algorithm for calculating a Gamma-minimax decision rule when Gamma is given by a finite number of generalized moment conditions. Such a decision rule minimizes the maximum of the integrals of the risk function with respect to all distributions in Gamma. Gamma-minimax decision rules are of interest in robustness studies in Bayesian statistics.