| ||

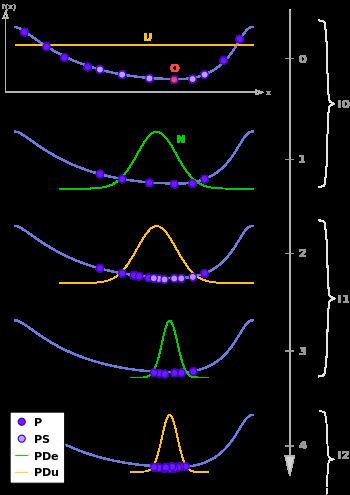

Estimation of distribution algorithms (EDAs), sometimes called probabilistic model-building genetic algorithms (PMBGAs), are stochastic optimization methods that guide the search for the optimum by building and sampling explicit probabilistic models of promising candidate solutions. Optimization is viewed as a series of incremental updates of a probabilistic model, starting with the model encoding the uniform distribution over admissible solutions and ending with the model that generates only the global optima.

Contents

- Estimation of distribution algorithms EDAs

- Univariate factorizations

- Univariate marginal distribution algorithm UMDA

- Population based incremental learning PBIL

- Compact genetic algorithm cGA

- Bivariate factorizations

- Mutual information maximizing input clustering MIMIC

- Bivariate marginal distribution algorithm BMDA

- Multivariate factorizations

- Extended compact genetic algorithm eCGA

- Bayesian optimization algorithm BOA

- Linkage tree Genetic Algorithm LTGA

- Other

- References

EDAs belong to the class of evolutionary algorithms. The main difference between EDAs and most conventional evolutionary algorithms is that evolutionary algorithms generate new candidate solutions using an implicit distribution defined by one or more variation operators, whereas EDAs use an explicit probability distribution encoded by a Bayesian network, a multivariate normal distribution, or another model class. Similarly as other evolutionary algorithms, EDAs can be used to solve optimization problems defined over a number of representations from vectors to LISP style S expressions, and the quality of candidate solutions is often evaluated using one or more objective functions.

The general procedure of an EDA is outlined in the following:

Using explicit probabilistic models in optimization allowed EDAs to feasibly solve optimization problems that were notoriously difficult for most conventional evolutionary algorithms and traditional optimization techniques, such as problems with high levels of epistasis. Nonetheless, the advantage of EDAs is also that these algorithms provide an optimization practitioner with a series of probabilistic models that reveal a lot of information about the problem being solved. This information can in turn be used to design problem-specific neighborhood operators for local search, to bias future runs of EDAs on a similar problem, or to create an efficient computational model of the problem.

For example, if the population is represented by bit strings of length 4, the EDA can represent the population of promising solution using a single vector of four probabilities (p1, p2, p3, p4) where each component of p defines the probability of that position being a 1. Using this probability vector it is possible to create an arbitrary number of candidate solutions.

Estimation of distribution algorithms (EDAs)

This section describes the models built by some well known EDAs of different levels of complexity. It is always assumed a population

Univariate factorizations

The most simple EDAs assume that decision variables are independent, i.e.

Such factorizations are used in many different EDAs, next we describe some of them.

Univariate marginal distribution algorithm (UMDA)

The UMDA is a simple EDA that uses an operator

Every UMDA step can be described as follows

Population-based incremental learning (PBIL)

The PBIL, represents the population implicitly by its model, from which it samples new solutions and updates the model. At each generation,

where

Compact genetic algorithm (cGA)

The CGA, also relies on the implicit populations defined by univariate distributions. At each generation

where,

Bivariate factorizations

Although univariate models can be computed efficiently, in many cases they are not representative enough to provide better performance than GAs. In order to overcome such a drawback, the use of bivariate factorizations was proposed in the EDA community, in which dependencies between pairs of variables could be modeled. A bivariate factorization can be defined as follows, where

Bivariate and multivariate distributions are usually represented as Probabilistic Graphical Models (graphs), in which edges denote statistical dependencies (or conditional probabilities) and vertices denote variables. To learn the structure of a PGM from data linkage-learning is employed.

Mutual information maximizing input clustering (MIMIC)

The MIMIC factorizes the joint probability distribution in a chain-like model representing successive dependencies between variables. It finds a permutation of the decision variables,

New solutions are sampled from the leftmost to the rightmost variable, the first is generated independently and the others according to conditional probabilities. Since the estimated distribution must be recomputed each generation, MIMIC uses concrete populations in the following way

Bivariate marginal distribution algorithm (BMDA)

The BMDA factorizes the joint probability distribution in bivariate distributions. First, a randomly chosen variable is added as a node in a graph, the most dependent variable to one of those in the graph is chosen among those not yet in the graph, this procedure is repeated until no remaining variable depends on any variable in the graph (verified according to a threshold value).

The resulting model is a forest with multiple trees rooted at nodes

Each step of BMDA is defined as follows

Multivariate factorizations

The next stage of EDAs development was the use of multivariate factorizations. In this case, the joint probability distribution is usually factorized in a number of components of limited size

The learning of PGMs encoding multivariate distributions is a computationally expensive task, therefore, it is usual for EDAs to estimate multivariate statistics from bivariate statistics. Such relaxation allows PGM to be built in polynomial time in

Extended compact genetic algorithm (eCGA)

The ECGA was one of the first EDA to employ multivariate factorizations, in which high-order dependencies among decision variables can be modeled. Its approach factorizes the joint probability distribution in the product of multivariate marginal distributions. Assume

The ECGA popularized the term linkage-learning as denoting procedures that identify linkage sets. Its linkage-learning procedure relies on two measures: (1) the Model Complexity (MC) and (2) the Compressed Population Complexity (CPC). The MC quantifies the model representation size in terms of number of bits required to store all the marginal probabilities

The CPC, on the other hand, quantifies the data compression in terms of entropy of the marginal distribution over all partitions, where

The linkage-learning in ECGA works as follows: (1) Insert each variable in a cluster, (2) compute CCC = MC + CPC of the current linkage sets, (3) verify the increase on CCC provided by joining pairs of clusters, (4) effectively joins those clusters with highest CCC improvement. This procedure is repeated until no CCC improvements are possible and produces a linkage model

Bayesian optimization algorithm (BOA)

The BOA uses Bayesian networks to model and sample promising solutions. Bayesian networks are directed acyclic graphs, with nodes representing variables and edges representing conditional probabilities between pair of variables. The value of a variable

The Bayesian network structure, on the other hand, must be built iteratively (linkage-learning). It starts with a network without edges and, at each step, adds the edge which better improves some scoring metric (e.g. Bayesian information criterion (BIC) or Bayesian-Dirichlet metric with likelihood equivalence (BDe)). The scoring metric evaluates the network structure according to its accuracy in modeling the selected population. From the built network, BOA samples new promising solutions as follows: (1) it computes the ancestral ordering for each variable, each node being preceded by its parents; (2) each variable is sampled conditionally to its parents. Given such scenario, every BOA step can be defined as

Linkage-tree Genetic Algorithm (LTGA)

The LTGA differs from most EDA in the sense it does not explicitly model a probabilisty distribution but only a linkage model, called linkage-tree. A linkage

The linkage-tree learning procedure is a hierarchical clustering algorithm, which work as follows. At each step the two closest clusters

The LTGA uses

The LTGA does not implement typical selection operators, instead, selection is performed during recombination. Similar ideas have been usually applied into local-search heuristics and, in this sense, the LTGA can be seen as an hybrid method. In summary, one step of the LTGA is defined as