| ||

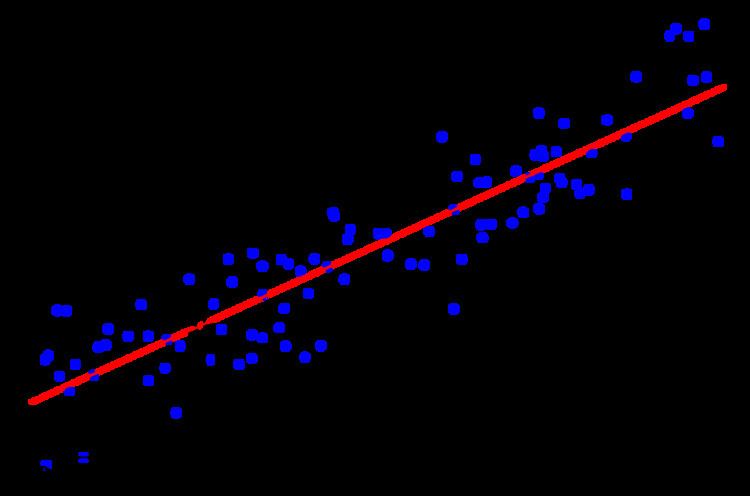

Quantile regression is a type of regression analysis used in statistics and econometrics. Whereas the method of least squares results in estimates that approximate the conditional mean of the response variable given certain values of the predictor variables, quantile regression aims at estimating either the conditional median or other quantiles of the response variable.

Contents

- Advantages and applications

- Mathematics

- History

- Quantiles

- Example

- Intuition

- Sample quantile

- Conditional quantile and quantile regression

- Computation

- Asymptotic properties

- Equivariance

- Scale equivariance

- Shift equivariance

- Equivariance to reparameterization of design

- Invariance to monotone transformations

- Bayesian methods for Quantile Regression

- Censored Quantile Regression

- Implementations

- References

Advantages and applications

Quantile regression is desired if conditional quantile functions are of interest. One advantage of quantile regression, relative to the ordinary least squares regression, is that the quantile regression estimates are more robust against outliers in the response measurements. However, the main attraction of quantile regression goes beyond that. Different measures of central tendency and statistical dispersion can be useful to obtain a more comprehensive analysis of the relationship between variables.

In ecology, quantile regression has been proposed and used as a way to discover more useful predictive relationships between variables in cases where there is no relationship or only a weak relationship between the means of such variables. The need for and success of quantile regression in ecology has been attributed to the complexity of interactions between different factors leading to data with unequal variation of one variable for different ranges of another variable.

Another application of quantile regression is in the areas of growth charts, where percentile curves are commonly used to screen for abnormal growth.

Quantile regression has also been applied to analyze hospital wait-times, where an analysis of extreme quantiles is of more interest than an analysis of the mean wait-time.

Mathematics

The mathematical forms arising from quantile regression are distinct from those arising in the method of least squares. The method of least squares leads to a consideration of problems in an inner product space, involving projection onto subspaces, and thus the problem of minimizing the squared errors can be reduced to a problem in numerical linear algebra. Quantile regression does not have this structure, and instead leads to problems in linear programming that can be solved by the simplex method.

History

The idea of estimating a median regression slope, a major theorem about minimizing sum of the absolute deviances and a geometrical algorithm for constructing median regression was proposed in 1760 by Ruđer Josip Bošković, a Jesuit Catholic priest from Dubrovnik. Median regression computations for larger data sets are quite tedious compared to the least squares method, for which reason it has historically generated a lack of popularity among statisticians, until the widespread adoption of computers in the latter part of the 20th century.

Quantiles

Let

where

Define the loss function as

This can be shown by setting the derivative of the expected loss function to 0 and letting

This equation reduces to

and then to

Hence

Example

Let

Since

Suppose that u is increased by 1 unit. Then the expected loss will be changed by

and any change in u will increase the expected loss. Thus u=5 is the median. The Table below shows the expected loss (divided by

Intuition

Consider

In order to minimize the expected loss, we move the value of q a little bit to see whether the expect loss will rise or fall. Suppose we increase q by 1 unit. Then the change of expected loss would be

The first term of the equation is

In order to minimize the expected loss function, we would increase (decrease) L(q) if q is smaller (larger) than the median, until q reaches the median. The idea behind the minimization is to count the number of points (weighted with the density) that are larger or smaller than q and then move q to a point where q is larger than

Sample quantile

The

Conditional quantile and quantile regression

Suppose the

Solving the sample analog gives the estimator of

Computation

The minimization problem can be reformulated as a linear programming problem

where

Simplex methods or interior point methods can be applied to solve the linear programming problem.

Asymptotic properties

For

where

Direct estimation of the asymptotic variance-covariance matrix is not always satisfactory. Inference for quantile regression parameters can be made with the regression rank-score tests or with the bootstrap methods.

Equivariance

See invariant estimator for background on invariance or see equivariance.

Scale equivariance

For any

Shift equivariance

For any

Equivariance to reparameterization of design

Let

Invariance to monotone transformations

If

Example (1):

Let

Bayesian methods for Quantile Regression

Because quantile regression does not normally assume a parametric likelihood for the conditional distributions of Y|X, the Bayesian methods work with a working likelihood. A convenient choice is the asymmetric Laplacian likelihood, because the mode of the resulting posterior under a flat prior is the usual quantile regression estimates. The posterior inference, however, must be interpreted with care. Yang and He showed that one can have asymptotically valid posterior inference if the working likelihood is chosen to be the empirical likelihood.

Censored Quantile Regression

If the response variable is subject to censoring, the conditional mean is not identifiable without additional distributional assumptions, but the conditional quantile is often identifiable. For recent work on censored quantile regression, see: Portnoy and Wang and Wang

Example (2):

Let

For random censoring on the response variables, the censored quantile regression of Portnoy (2003) provides consistent estimates of all identifiable quantile functions based on reweighting each censored point appropriately.

Implementations

Numerous statistical software packages include implementations of quantile regression:

quantregquantreg by Roger Koenker, but also gbm, quantregForest and qrnnproc quantreg (ver. 9.2) and proc quantselect (ver. 9.3).qreg command.--loss_function quantile.--QuantRegQuantileRegression.m[1] hosted at the MathematicaForPrediction project at GitHub.