| Property | Value |
|---|---|
| Notation | $P(\mathcal{N}(\mu,\sigma^{2}))$ |
| Parameters | $\sigma^{2} > 0$: squared scale (real); $\mu \in \mathbb{R}$: location |
| Support | $x \in (0, 1)$ |
| PDF | $\frac{1}{\sigma\sqrt{2\pi}}\,e^{-\frac{(\operatorname{logit}(x)-\mu)^{2}}{2\sigma^{2}}}\,\frac{1}{x(1-x)}$ |
| CDF | $\frac{1}{2}\Big[1+\operatorname{erf}\Big(\frac{\operatorname{logit}(x)-\mu}{\sqrt{2\sigma^{2}}}\Big)\Big]$ |
| Mean | no analytical solution |

In probability theory, a logit-normal distribution is a probability distribution of a random variable whose logit has a normal distribution. If Y is a random variable with a normal distribution, and P is the standard logistic function, then X = P(Y) has a logit-normal distribution; likewise, if X is logit-normally distributed, then Y = logit(X) = log(X/(1−X)) is normally distributed. It is also known as the logistic normal distribution, although that name often refers to a multinomial logit version (see the multivariate generalization).
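The definition translates directly into a sampling recipe: draw Y from a normal distribution and apply the logistic function. The following is a minimal sketch using only the Python standard library; the function names and the parameter values (μ = 0.5, σ = 1) are illustrative assumptions, not part of the original text.

```python
import math
import random

def logistic(y):
    """Standard logistic function P(y) = 1 / (1 + exp(-y))."""
    return 1.0 / (1.0 + math.exp(-y))

def logit(x):
    """Inverse of the logistic function, log(x / (1 - x)), for 0 < x < 1."""
    return math.log(x / (1.0 - x))

def sample_logit_normal(mu, sigma, n, seed=0):
    """Draw n logit-normal samples by pushing normal draws through the logistic function."""
    rng = random.Random(seed)
    return [logistic(rng.gauss(mu, sigma)) for _ in range(n)]

# Illustrative parameters (assumed for this example only).
xs = sample_logit_normal(mu=0.5, sigma=1.0, n=10_000)

# Taking the logit of the samples should recover approximately normal data.
ys = [logit(x) for x in xs]
mean_y = sum(ys) / len(ys)
std_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys) / (len(ys) - 1))
```

With these settings, `mean_y` and `std_y` should land close to the chosen μ and σ, up to sampling error.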


A variable might be modeled as logit-normal if it is a proportion that is bounded by zero and one and never takes the values zero or one exactly.

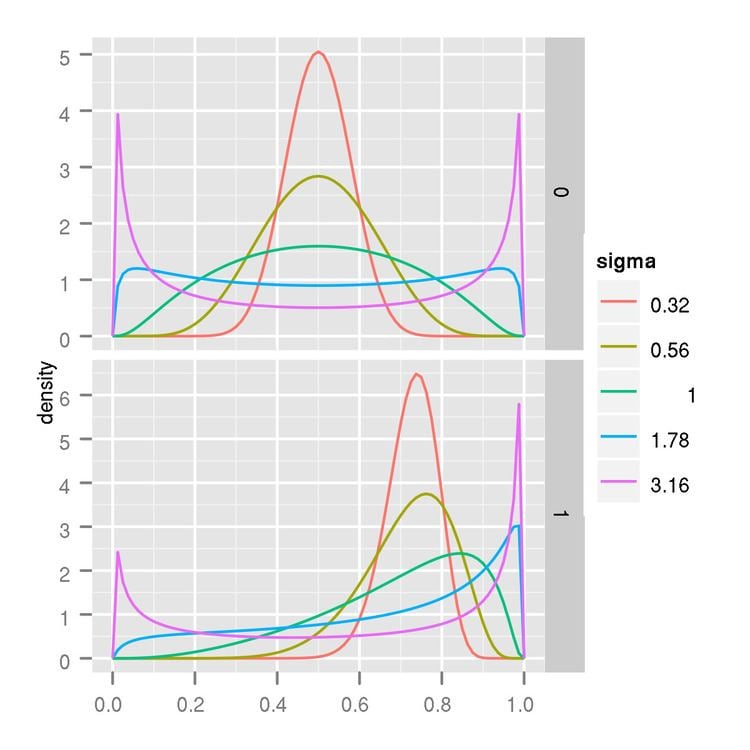

Probability density function

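The probability density function (PDF) of a logit-normal distribution, for 0 < x < 1, is:

$$f_X(x;\mu,\sigma) = \frac{1}{\sigma\sqrt{2\pi}}\,\frac{1}{x(1-x)}\,e^{-\frac{(\operatorname{logit}(x)-\mu)^{2}}{2\sigma^{2}}}$$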

where μ and σ are the mean and standard deviation of the variable’s logit (by definition, the variable’s logit is normally distributed).

Changing the sign of μ reflects the density about x = 0.5: f(x; μ, σ) = f(1−x; −μ, σ). The mode therefore moves to the other side of 0.5 (the midpoint of the (0, 1) interval).
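As a quick sanity check, the density can be evaluated in a few lines of Python. This sketch is standard library only, with illustrative function names and assumed parameter values. It verifies numerically that the density integrates to approximately one over (0, 1) and that it satisfies the reflection identity f(x; μ, σ) = f(1−x; −μ, σ).

```python
import math

def logit_normal_pdf(x, mu, sigma):
    """Density of the logit-normal distribution; zero outside (0, 1)."""
    if not 0.0 < x < 1.0:
        return 0.0
    z = (math.log(x / (1.0 - x)) - mu) / sigma
    norm = sigma * math.sqrt(2.0 * math.pi) * x * (1.0 - x)
    return math.exp(-0.5 * z * z) / norm

def integrate_unit_interval(f, n=20_000):
    """Midpoint rule on (0, 1); never evaluates f at the endpoints."""
    h = 1.0 / n
    return h * sum(f((k + 0.5) * h) for k in range(n))

# Illustrative parameters (assumed for this example only).
mu, sigma = 0.7, 1.2

# Total probability mass, expected to be close to 1.
total = integrate_unit_interval(lambda x: logit_normal_pdf(x, mu, sigma))

# Largest deviation from the reflection identity at a few test points.
sym_gap = max(
    abs(logit_normal_pdf(x, mu, sigma) - logit_normal_pdf(1.0 - x, -mu, sigma))
    for x in (0.1, 0.25, 0.5, 0.8)
)
```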

Moments

The moments of the logit-normal distribution have no analytic solution. However, they can be estimated by numerical integration.
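One way to carry out the numerical integration is to work in the logit domain: since X = P(Y) with Y normal, the k-th moment is E[X^k] = ∫ P(y)^k φ(y; μ, σ) dy, where φ is the normal density. The sketch below applies the trapezoidal rule to this integral. It is one possible approach under assumed settings (integration range of μ ± 10σ, 4001 grid points), not a prescribed method, and the function name is illustrative.

```python
import math

def logit_normal_moment(k, mu, sigma, n=4001, width=10.0):
    """Approximate E[X^k] for X = logistic(Y), Y ~ N(mu, sigma^2).

    Uses the trapezoidal rule over y in [mu - width*sigma, mu + width*sigma].
    """
    lo = mu - width * sigma
    h = 2.0 * width * sigma / (n - 1)
    total = 0.0
    for i in range(n):
        y = lo + i * h
        weight = 0.5 if i in (0, n - 1) else 1.0  # trapezoid end weights
        phi = math.exp(-0.5 * ((y - mu) / sigma) ** 2) / (sigma * math.sqrt(2.0 * math.pi))
        total += weight * (1.0 / (1.0 + math.exp(-y))) ** k * phi
    return total * h

# Illustrative parameters (assumed for this example only).
mean = logit_normal_moment(1, mu=0.0, sigma=1.0)
second = logit_normal_moment(2, mu=0.0, sigma=1.0)
variance = second - mean ** 2
```

For μ = 0 the density is symmetric about 0.5, so the computed mean should be very close to 0.5, which gives a simple check on the integration.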

Mode

Setting the derivative of the density to zero shows that the location of the mode x satisfies the following equation:

$$\operatorname{logit}(x) = \sigma^{2}(2x-1) + \mu$$
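The stationarity condition logit(x) = σ²(2x − 1) + μ has no closed-form solution, but it can be solved numerically. The sketch below uses bisection on (0, 1). It assumes σ² < 2, in which case the function logit(x) − σ²(2x − 1) − μ is strictly increasing and the root is unique; for larger σ the equation can have several solutions and this simple approach returns only one of them. The function name and tolerance are illustrative.

```python
import math

def logit_normal_mode(mu, sigma, tol=1e-12):
    """Solve logit(x) = sigma^2 * (2x - 1) + mu for x by bisection on (0, 1)."""
    def g(x):
        return math.log(x / (1.0 - x)) - sigma ** 2 * (2.0 * x - 1.0) - mu

    # g tends to -inf as x -> 0 and +inf as x -> 1, so a sign change is bracketed
    # for moderate parameter values.
    lo, hi = 1e-12, 1.0 - 1e-12
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if g(mid) < 0.0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

# Illustrative parameters (assumed for this example only).
mode_symmetric = logit_normal_mode(mu=0.0, sigma=1.0)
mode_shifted = logit_normal_mode(mu=0.5, sigma=1.0)
```

For μ = 0 the mode sits at the midpoint 0.5, and a positive μ pushes it above 0.5.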

Multivariate generalization

The logistic normal distribution is a generalization of the logit–normal distribution to D-dimensional probability vectors by taking a logistic transformation of a multivariate normal distribution.

Probability density function

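The probability density function is:

$$f_X(\mathbf{x};\boldsymbol{\mu},\boldsymbol{\Sigma}) = \frac{1}{\left|(2\pi)^{D-1}\boldsymbol{\Sigma}\right|^{1/2}}\,\frac{1}{\prod_{i=1}^{D}x_i}\,\exp\left(-\frac{1}{2}\left\{\log\left(\frac{\mathbf{x}_{-D}}{x_D}\right)-\boldsymbol{\mu}\right\}^{\top}\boldsymbol{\Sigma}^{-1}\left\{\log\left(\frac{\mathbf{x}_{-D}}{x_D}\right)-\boldsymbol{\mu}\right\}\right),\quad \mathbf{x}\in\mathcal{S}^{D}$$

where $\mathbf{x}_{-D}$ denotes the vector of the first D − 1 components of $\mathbf{x}$ and $\mathcal{S}^{D}$ denotes the simplex of D-dimensional probability vectors. This follows from applying the additive logistic transformation to map a multivariate normal random variable $\mathbf{y}\sim\mathcal{N}(\boldsymbol{\mu},\boldsymbol{\Sigma})$, $\mathbf{y}\in\mathbb{R}^{D-1}$, to the simplex:

$$\mathbf{x} = \left[\frac{e^{y_1}}{1+\sum_{i=1}^{D-1}e^{y_i}},\dots,\frac{e^{y_{D-1}}}{1+\sum_{i=1}^{D-1}e^{y_i}},\frac{1}{1+\sum_{i=1}^{D-1}e^{y_i}}\right]^{\top}$$

The unique inverse mapping is given by:

$$\mathbf{y} = \left[\log\left(\frac{x_1}{x_D}\right),\dots,\log\left(\frac{x_{D-1}}{x_D}\right)\right]^{\top}$$

This is the case of a vector x whose components sum to one. In the case of x with sigmoidal elements, that is, when

$$\mathbf{y} = \left[\log\left(\frac{x_1}{1-x_1}\right),\dots,\log\left(\frac{x_D}{1-x_D}\right)\right]^{\top}$$

we have

$$f_X(\mathbf{x};\boldsymbol{\mu},\boldsymbol{\Sigma}) = \frac{1}{\left|(2\pi)^{D}\boldsymbol{\Sigma}\right|^{1/2}}\,\frac{1}{\prod_{i=1}^{D}x_i(1-x_i)}\,\exp\left(-\frac{1}{2}\left\{\log\left(\frac{\mathbf{x}}{1-\mathbf{x}}\right)-\boldsymbol{\mu}\right\}^{\top}\boldsymbol{\Sigma}^{-1}\left\{\log\left(\frac{\mathbf{x}}{1-\mathbf{x}}\right)-\boldsymbol{\mu}\right\}\right)$$

where the log and the division in the argument are taken element-wise. This is because the Jacobian matrix of the transformation is diagonal with elements $\frac{1}{x_i(1-x_i)}$.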
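For the simplex case, the forward map from a normal vector y in R^(D−1) to a probability vector x is the additive logistic transformation, x_i = e^{y_i} / (1 + Σ_j e^{y_j}) for i < D and x_D = 1 / (1 + Σ_j e^{y_j}), and its inverse takes log-ratios against the last component. The following standard-library sketch implements both maps and a simple sampler. The function names are illustrative, and the sampler assumes the covariance is supplied as a lower-triangular Cholesky factor (a hypothetical input convention chosen to avoid a linear-algebra dependency).

```python
import math
import random

def additive_logistic(y):
    """Map y in R^(D-1) to a probability vector x of length D."""
    exps = [math.exp(v) for v in y]
    denom = 1.0 + sum(exps)
    return [e / denom for e in exps] + [1.0 / denom]

def additive_log_ratio(x):
    """Inverse map: y_i = log(x_i / x_D) for i = 1, ..., D-1."""
    return [math.log(xi / x[-1]) for xi in x[:-1]]

def sample_logistic_normal(mu, chol, rng):
    """Draw one logistic normal vector.

    chol is assumed to be the lower-triangular Cholesky factor L of the
    covariance (Sigma = L L^T), given as a list of rows.
    """
    z = [rng.gauss(0.0, 1.0) for _ in mu]
    y = [m + sum(chol[i][j] * z[j] for j in range(i + 1)) for i, m in enumerate(mu)]
    return additive_logistic(y)

# Illustrative values (assumed for this example only): D = 3.
y0 = [0.2, -1.0]
x0 = additive_logistic(y0)          # point on the simplex
y_back = additive_log_ratio(x0)     # round trip recovers y0

rng = random.Random(1)
x_sample = sample_logistic_normal([0.0, 0.5], [[1.0, 0.0], [0.5, 0.8]], rng)
```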

Use in statistical analysis

The logistic normal distribution is a more flexible alternative to the Dirichlet distribution in that it can capture correlations between components of probability vectors. It also has the potential to simplify statistical analyses of compositional data by allowing one to answer questions about log-ratios of the components of the data vectors. One is often interested in ratios rather than absolute component values.

The probability simplex is a bounded space, making standard techniques that are typically applied to vectors in $\mathbb{R}^{n}$ less meaningful.

Relationship with the Dirichlet distribution

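The Dirichlet and logistic normal distributions are never exactly equal for any choice of parameters. However, Aitchison described a method for approximating a Dirichlet distribution with a logistic normal such that their Kullback–Leibler divergence (KL) is minimized:

$$K(p,q) = \int_{\mathcal{S}^{D}} p(\mathbf{x}\mid\boldsymbol{\alpha})\,\log\left(\frac{p(\mathbf{x}\mid\boldsymbol{\alpha})}{q(\mathbf{x}\mid\boldsymbol{\mu},\boldsymbol{\Sigma})}\right)d\mathbf{x}$$

where p is the Dirichlet density with parameter vector α and q is the logistic normal density. This is minimized by:

$$\mu_i^{*} = \mathbf{E}_p\left[\log\left(\frac{x_i}{x_D}\right)\right],\qquad \Sigma_{ij}^{*} = \operatorname{Cov}_p\left[\log\left(\frac{x_i}{x_D}\right),\,\log\left(\frac{x_j}{x_D}\right)\right],\qquad i,j=1,\dots,D-1$$

Using moment properties of the Dirichlet distribution, the solution can be written in terms of the digamma function $\psi$ and the trigamma function $\psi'$:

$$\mu_i^{*} = \psi(\alpha_i)-\psi(\alpha_D),\qquad i=1,\dots,D-1$$

$$\Sigma_{ii}^{*} = \psi'(\alpha_i)+\psi'(\alpha_D),\qquad i=1,\dots,D-1$$

$$\Sigma_{ij}^{*} = \psi'(\alpha_D),\qquad i\neq j$$

This approximation is particularly accurate for large α.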
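The moment-matching solution is μ_i = ψ(α_i) − ψ(α_D), Σ_ii = ψ′(α_i) + ψ′(α_D) and Σ_ij = ψ′(α_D) for i ≠ j, where ψ is the digamma function and ψ′ the trigamma function. The sketch below computes these parameters with the standard library only. The `digamma` and `trigamma` helpers are simple approximations written for this example (upward recurrence followed by a truncated asymptotic series, good to roughly 1e-8 for positive arguments); in practice a library routine such as `scipy.special.digamma` and `scipy.special.polygamma(1, x)` would normally be preferred. All function names here are illustrative.

```python
import math

def digamma(x):
    """Approximate psi(x) for x > 0: recurrence up to x >= 6, then asymptotic series."""
    acc = 0.0
    while x < 6.0:
        acc -= 1.0 / x
        x += 1.0
    inv2 = 1.0 / (x * x)
    series = inv2 * (1.0 / 12.0 - inv2 * (1.0 / 120.0 - inv2 / 252.0))
    return acc + math.log(x) - 0.5 / x - series

def trigamma(x):
    """Approximate psi'(x) for x > 0: recurrence up to x >= 6, then asymptotic series."""
    acc = 0.0
    while x < 6.0:
        acc += 1.0 / (x * x)
        x += 1.0
    inv2 = 1.0 / (x * x)
    series = (inv2 / x) * (1.0 / 6.0 - inv2 * (1.0 / 30.0 - inv2 / 42.0))
    return acc + 1.0 / x + 0.5 * inv2 + series

def dirichlet_to_logistic_normal(alpha):
    """Return (mu, Sigma) of the moment-matched logistic normal for Dirichlet(alpha)."""
    d = len(alpha) - 1
    psi_last = digamma(alpha[-1])
    tri_last = trigamma(alpha[-1])
    mu = [digamma(a) - psi_last for a in alpha[:-1]]
    Sigma = [
        [tri_last + (trigamma(alpha[i]) if i == j else 0.0) for j in range(d)]
        for i in range(d)
    ]
    return mu, Sigma

# Illustrative concentration parameters (assumed for this example only).
mu, Sigma = dirichlet_to_logistic_normal([2.0, 3.0, 4.0])
```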