Learning to rank or machine-learned ranking (MLR) is the application of machine learning, typically supervised, semi-supervised or reinforcement learning, in the construction of ranking models for information retrieval systems. Training data consists of lists of items with some partial order specified between items in each list. This order is typically induced by giving a numerical or ordinal score or a binary judgment (e.g. "relevant" or "not relevant") for each item. The ranking model's purpose is to rank, i.e. produce a permutation of items in new, unseen lists in a way which is "similar" to rankings in the training data in some sense.

In information retrieval

Ranking is a central part of many information retrieval problems, such as document retrieval, collaborative filtering, sentiment analysis, and online advertising.

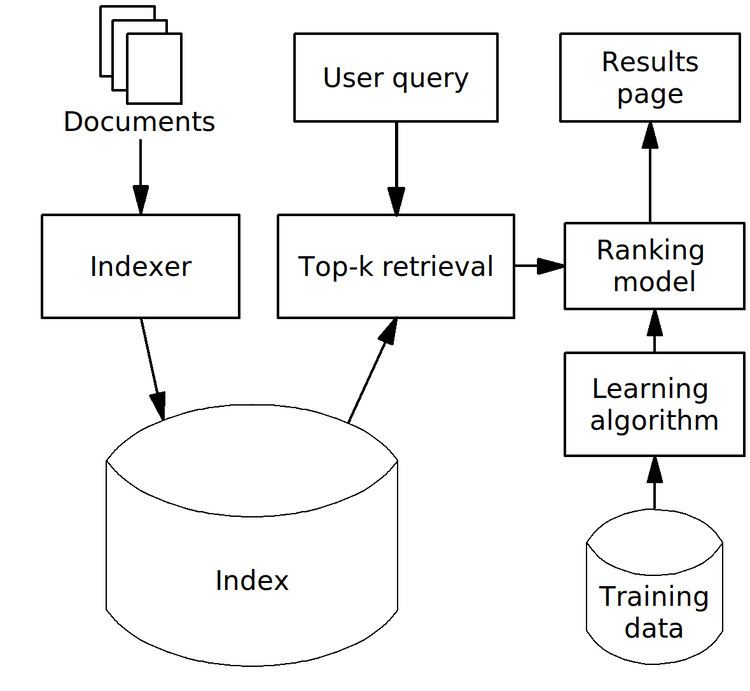

A possible architecture of a machine-learned search engine is shown in the figure above.

Training data consists of queries and the documents matching them, together with the relevance degree of each match. It may be prepared manually by human assessors (or raters, as Google calls them), who check results for some queries and determine the relevance of each result. It is not feasible to check the relevance of all documents, so typically a technique called pooling is used: only the top few documents retrieved by some existing ranking models are checked. Alternatively, training data may be derived automatically by analyzing clickthrough logs (i.e. search results which got clicks from users), query chains, or search engine features such as Google's SearchWiki.

Training data is used by a learning algorithm to produce a ranking model which computes relevance of documents for actual queries.

Typically, users expect a search query to complete in a short time (such as a few hundred milliseconds for web search), which makes it impossible to evaluate a complex ranking model on each document in the corpus, and so a two-phase scheme is used. First, a small number of potentially relevant documents are identified using simpler retrieval models which permit fast query evaluation, such as the vector space model, boolean model, weighted AND, or BM25. This phase is called top-

In other areas

Learning to rank algorithms have been applied in areas other than information retrieval:

Feature vectors

For convenience of MLR algorithms, query-document pairs are usually represented by numerical vectors, which are called feature vectors. Such an approach is sometimes called bag of features and is analogous to the bag of words model and vector space model used in information retrieval for representation of documents.
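As a rough illustration of this representation, the sketch below maps a query-document pair to a short numeric vector. The function name `feature_vector` and the three features it computes are assumptions chosen for this example, not a standard feature set.

```python
import math
from collections import Counter


def feature_vector(query, document):
    """Map one query-document pair to a fixed-length list of floats.

    The three features are illustrative assumptions, not a standard set.
    """
    q_terms = query.lower().split()
    d_terms = document.lower().split()
    d_counts = Counter(d_terms)

    # Total number of occurrences of query terms in the document.
    term_freq = float(sum(d_counts[t] for t in q_terms))

    # Fraction of distinct query terms that appear in the document.
    distinct = set(q_terms)
    coverage = sum(1 for t in distinct if t in d_counts) / len(distinct) if distinct else 0.0

    # Log-scaled document length, which does not depend on the query.
    log_len = math.log1p(len(d_terms))

    return [term_freq, coverage, log_len]
```

Every pair is mapped to a vector of the same length, so a list of candidate documents for one query becomes a list of such vectors that a learning algorithm can consume directly.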

Components of such vectors are called features, factors or ranking signals. They may be divided into three groups (features from document retrieval are shown as examples):

Some examples of features that were used in the well-known LETOR dataset:

Selecting and designing good features is an important area in machine learning, which is called feature engineering.

Evaluation measures

There are several measures (metrics) which are commonly used to judge how well an algorithm is doing on training data and to compare performance of different MLR algorithms. Often a learning-to-rank problem is reformulated as an optimization problem with respect to one of these metrics.

Examples of ranking quality measures:

DCG and its normalized variant NDCG are usually preferred in academic research when multiple levels of relevance are used. Other metrics, such as MAP, MRR and precision, are defined only for binary judgements.
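The following sketch shows how these measures can be computed for a single ranked list. It assumes the common exponential-gain form of DCG, (2^rel - 1) / log2(rank + 1); other gain and discount choices exist. The average-precision helper further assumes that every relevant document for the query appears somewhere in the list. MAP and MRR are the means of `average_precision` and `reciprocal_rank` over all queries.

```python
import math


def dcg(relevances, k=None):
    """Discounted cumulative gain of graded relevances given in ranked order."""
    return sum((2 ** rel - 1) / math.log2(rank + 1)
               for rank, rel in enumerate(relevances[:k], start=1))


def ndcg(relevances, k=None):
    """DCG divided by the DCG of the ideal (descending) ordering."""
    ideal = dcg(sorted(relevances, reverse=True), k)
    return dcg(relevances, k) / ideal if ideal > 0 else 0.0


def reciprocal_rank(binary):
    """1 / rank of the first relevant item, or 0.0 if there is none."""
    for rank, rel in enumerate(binary, start=1):
        if rel:
            return 1.0 / rank
    return 0.0


def average_precision(binary):
    """Mean of precision@rank taken at the rank of each relevant item."""
    hits, total = 0, 0.0
    for rank, rel in enumerate(binary, start=1):
        if rel:
            hits += 1
            total += hits / rank
    return total / hits if hits else 0.0
```

For example, `ndcg([3, 2, 1])` is 1.0 because the list is already in ideal order, while `ndcg([1, 2, 3])` is lower because the most relevant item is ranked last.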

Several newer evaluation metrics have been proposed which claim to model users' satisfaction with search results better than the DCG metric:

Both of these metrics are based on the assumption that the user is more likely to stop looking at search results after examining a more relevant document, than after a less relevant document.
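To make the stopping assumption concrete, the sketch below computes expected reciprocal rank (ERR), used here as an illustration of a metric built on this kind of user model. The choice of ERR, the function name, and the mapping from grade to stopping probability, (2^g - 1) / 2^max_grade, are assumptions of this example.

```python
def expected_reciprocal_rank(grades, max_grade):
    """Expected value of 1 / (rank at which the user stops).

    The user scans the list top-down and stops at each result with a
    probability that grows with that result's relevance grade.
    """
    p_reach = 1.0   # probability that the user reaches the current rank
    err = 0.0
    for rank, grade in enumerate(grades, start=1):
        p_stop = (2 ** grade - 1) / (2 ** max_grade)
        err += p_reach * p_stop / rank
        p_reach *= 1.0 - p_stop
    return err
```

With grades on a 0 to 3 scale, `expected_reciprocal_rank([3, 0], 3)` is larger than `expected_reciprocal_rank([0, 3], 3)`, since a highly relevant first result makes it likely that the user stops immediately.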

Approaches

Tie-Yan Liu of Microsoft Research Asia has analyzed existing algorithms for learning to rank problems in his paper "Learning to Rank for Information Retrieval". He categorized them into three groups by their input representation and loss function:

Pointwise approach

In this case it is assumed that each query-document pair in the training data has a numerical or ordinal score. The learning-to-rank problem can then be approximated by a regression problem: given a single query-document pair, predict its score.

A number of existing supervised machine learning algorithms can be readily used for this purpose. Ordinal regression and classification algorithms can also be used in the pointwise approach when the score of a single query-document pair takes a small, finite number of values.
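A minimal sketch of the pointwise idea, assuming a linear model fitted by batch gradient descent on squared error: each feature vector is regressed onto its score independently, and documents are then sorted by predicted score. The helper names and hyperparameters are hypothetical; any regression method could take the place of the training routine.

```python
def predict(w, b, x):
    """Linear score for a single feature vector."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def train_pointwise(features, scores, lr=0.01, epochs=500):
    """Fit weights w and bias b by minimizing mean squared error."""
    n = len(features)
    w = [0.0] * len(features[0])
    b = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * len(w)
        grad_b = 0.0
        for x, y in zip(features, scores):
            err = predict(w, b, x) - y
            for j, xj in enumerate(x):
                grad_w[j] += 2 * err * xj / n
            grad_b += 2 * err / n
        w = [wi - lr * g for wi, g in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


def rank(w, b, docs):
    """Indices of docs sorted by predicted score, best first."""
    return sorted(range(len(docs)), key=lambda i: predict(w, b, docs[i]), reverse=True)
```

Note that the loss never compares two documents with each other; the ordering only emerges when the predicted scores are sorted.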

Pairwise approach

In this case the learning-to-rank problem is approximated by a classification problem: learning a binary classifier that can tell which document is better in a given pair of documents. The goal is to minimize the average number of inversions in the ranking.
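The pairwise reduction can be sketched as follows, assuming a linear scoring function trained with a logistic loss on the score difference of each (better, worse) pair. This is one possible choice of classifier and not a specific published algorithm; the helper names are hypothetical.

```python
import math


def score(w, x):
    return sum(wi * xi for wi, xi in zip(w, x))


def make_pairs(features, labels):
    """All (better, worse) feature pairs among the documents of one query."""
    pairs = []
    for i in range(len(features)):
        for j in range(i + 1, len(features)):
            if labels[i] > labels[j]:
                pairs.append((features[i], features[j]))
            elif labels[j] > labels[i]:
                pairs.append((features[j], features[i]))
    return pairs


def train_pairwise(pairs, n_features, lr=0.1, epochs=200):
    """Stochastic gradient descent on log(1 + exp(-(s_better - s_worse)))."""
    w = [0.0] * n_features
    for _ in range(epochs):
        for better, worse in pairs:
            diff = [a - b for a, b in zip(better, worse)]
            margin = score(w, diff)
            # Sigmoid of -margin: large when the pair is misordered.
            scale = 1.0 / (1.0 + math.exp(margin))
            w = [wi + lr * scale * di for wi, di in zip(w, diff)]
    return w


def count_inversions(w, pairs):
    """Number of pairs in which the worse document scores at least as high."""
    return sum(1 for better, worse in pairs if score(w, better) <= score(w, worse))
```

Pairs should be built only among documents retrieved for the same query, since relevance labels are not comparable across queries.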

Listwise approach

These algorithms try to directly optimize the value of one of the above evaluation measures, averaged over all queries in the training data. This is difficult because most evaluation measures are not continuous functions of the ranking model's parameters, and so continuous approximations of, or bounds on, evaluation measures have to be used.
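One example of a continuous surrogate, offered as an assumption rather than as the method of any particular algorithm, is a cross-entropy between the softmax of the relevance labels and the softmax of the model scores over a whole list. Unlike a rank-based metric, it changes smoothly as the scores change, so gradient-based optimization can be applied.

```python
import math


def log_softmax(values):
    """Numerically stable log of the softmax distribution over a list."""
    m = max(values)
    log_total = m + math.log(sum(math.exp(v - m) for v in values))
    return [v - log_total for v in values]


def listwise_loss(scores, labels):
    """Cross-entropy between softmax(labels) and softmax(scores) for one query."""
    target = [math.exp(lp) for lp in log_softmax(labels)]
    log_pred = log_softmax(scores)
    return -sum(t * lp for t, lp in zip(target, log_pred))
```

The loss is smallest when the score distribution matches the label distribution, and it grows as highly relevant documents receive relatively lower scores.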

List of methods

A partial list of published learning-to-rank algorithms is shown below with years of first publication of each method:

Note: as most supervised learning algorithms can be applied to the pointwise case, only those methods which are specifically designed with ranking in mind are shown above.

History

Norbert Fuhr introduced the general idea of MLR in 1992, describing learning approaches in information retrieval as a generalization of parameter estimation; a specific variant of this approach (using polynomial regression) had been published by him three years earlier. Bill Cooper proposed logistic regression for the same purpose in 1992 and used it with his Berkeley research group to train a successful ranking function for TREC. Manning et al. suggest that these early works achieved limited results in their time due to little available training data and poor machine learning techniques.

Several conferences, such as NIPS, SIGIR and ICML, have held workshops devoted to the learning-to-rank problem since the mid-2000s.

Practical usage by search engines

Commercial web search engines began using machine-learned ranking systems in the 2000s. One of the first search engines to start using it was AltaVista (later its technology was acquired by Overture, and then Yahoo), which launched a gradient boosting-trained ranking function in April 2003.

Bing's search is said to be powered by the RankNet algorithm, which was invented at Microsoft Research in 2005.

In November 2009, the Russian search engine Yandex announced that it had significantly increased its search quality by deploying a new proprietary MatrixNet algorithm, a variant of the gradient boosting method which uses oblivious decision trees. It has also sponsored a machine-learned ranking competition, "Internet Mathematics 2009", based on its own search engine's production data. Yahoo announced a similar competition in 2010.

As of 2008, Google's Peter Norvig denied that their search engine relies exclusively on machine-learned ranking. Cuil's CEO, Tom Costello, suggests that they prefer hand-built models because these can outperform machine-learned models when measured against metrics like click-through rate or time on landing page; in his words, machine-learned models "learn what people say they like, not what people actually like".

In January 2017, the technology was included in the open source search engine Apache Solr™, making machine-learned search ranking widely accessible for enterprise search as well.