| ||

Id3 algorithm how it works

In decision tree learning, ID3 (Iterative Dichotomiser 3) is an algorithm invented by Ross Quinlan used to generate a decision tree from a dataset. ID3 is the precursor to the C4.5 algorithm, and is typically used in the machine learning and natural language processing domains.

Contents

- Id3 algorithm how it works

- Id3 algorithm

- Algorithm

- Summary

- Pseudocode

- Properties

- Usage

- Entropy

- Information gain

- References

Id3 algorithm

Algorithm

The ID3 algorithm begins with the original set

Recursion on a subset may stop in one of these cases:

Throughout the algorithm, the decision tree is constructed with each non-terminal node representing the selected attribute on which the data was split, and terminal nodes representing the class label of the final subset of this branch.

Summary

- Calculate the entropy of every attribute using the data set

S - Split the set

S into subsets using the attribute for which the resulting entropy (after splitting) is minimum (or, equivalently, information gain is maximum) - Make a decision tree node containing that attribute

- Recurse on subsets using remaining attributes.

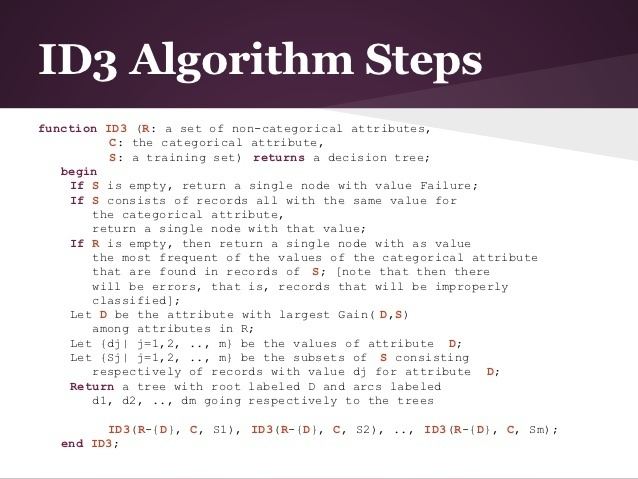

Pseudocode

ID3 (Examples, Target_Attribute, Attributes) Create a root node for the tree If all examples are positive, Return the single-node tree Root, with label = +. If all examples are negative, Return the single-node tree Root, with label = -. If number of predicting attributes is empty, then Return the single node tree Root, with label = most common value of the target attribute in the examples. Otherwise Begin A ← The Attribute that best classifies examples. Decision Tree attribute for Root = A. For each possible value, vi, of A, Add a new tree branch below Root, corresponding to the test A = vi. Let Examples(vi) be the subset of examples that have the value vi for A If Examples(vi) is empty Then below this new branch add a leaf node with label = most common target value in the examples Else below this new branch add the subtree ID3 (Examples(vi), Target_Attribute, Attributes – {A}) End Return RootProperties

ID3 does not guarantee an optimal solution; it can get stuck in local optima. It uses a greedy approach by selecting the best attribute to split the dataset on each iteration. One improvement that can be made on the algorithm can be to use backtracking during the search for the optimal decision tree.

ID3 can overfit to the training data. To avoid overfitting, smaller decision trees should be preferred over larger ones. This algorithm usually produces small trees, but it does not always produce the smallest possible tree.

ID3 is harder to use on continuous data. If the values of any given attribute is continuous, then there are many more places to split the data on this attribute, and searching for the best value to split by can be time consuming.

Usage

The ID3 algorithm is used by training on a dataset

Entropy

Entropy

Where,

When

In ID3, entropy is calculated for each remaining attribute. The attribute with the smallest entropy is used to split the set

Information gain

Information gain

Where,

In ID3, information gain can be calculated (instead of entropy) for each remaining attribute. The attribute with the largest information gain is used to split the set