| ||

Empirical risk minimization (ERM) is a principle in statistical learning theory which defines a family of learning algorithms and is used to give theoretical bounds on the performance of learning algorithms.

Contents

Background

Consider the following situation, which is a general setting of many supervised learning problems. We have two spaces of objects

To put it more formally, we assume that there is a joint probability distribution

We also assume that we are given a non-negative real-valued loss function

A loss function commonly used in theory is the 0-1 loss function:

The ultimate goal of a learning algorithm is to find a hypothesis

Empirical risk minimization

In general, the risk

Empirical risk minimization principle states that the learning algorithm should choose a hypothesis

Thus the learning algorithm defined by the ERM principle consists in solving the above optimization problem.

Computational complexity

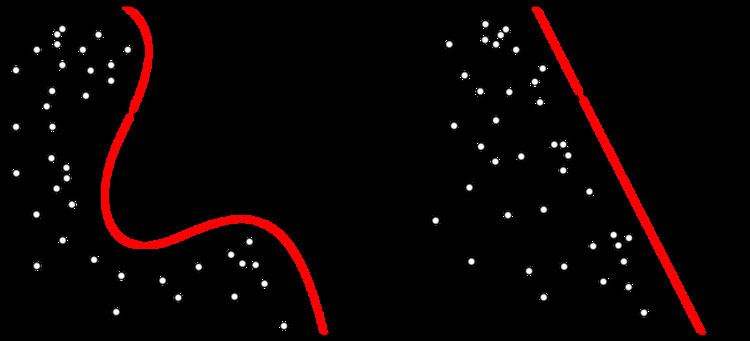

Empirical risk minimization for a classification problem with 0-1 loss function is known to be an NP-hard problem even for such relatively simple class of functions as linear classifiers. Though, it can be solved efficiently when minimal empirical risk is zero, i.e. data is linearly separable.

In practice, machine learning algorithms cope with that either by employing a convex approximation to 0-1 loss function (like hinge loss for SVM), which is easier to optimize, or by posing assumptions on the distribution