
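Additive white Gaussian noise (AWGN) is a basic noise model used in information theory to mimic the effect of many random processes that occur in nature. The modifiers denote specific characteristics:

- Additive: the noise is added linearly to the signal.

- White: the noise has a constant spectral density across the frequency band of interest.

- Gaussian: the noise amplitude follows a Gaussian (normal) distribution.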


Wideband noise comes from many natural sources, such as the thermal vibrations of atoms in conductors (referred to as thermal noise or Johnson-Nyquist noise), shot noise, black-body radiation from the Earth and other warm objects, and celestial sources such as the Sun. The central limit theorem of probability theory indicates that the sum of many independent random processes tends to have a distribution called Gaussian or normal.
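The central limit theorem argument can be illustrated numerically. The following is a minimal sketch, not a model of any physical noise source: it sums 48 independent uniform variables (an arbitrary choice, as are the names `sum_of_uniforms` and `within_one_sigma`) and checks that the result behaves like a Gaussian, for which about 68% of samples fall within one standard deviation of the mean.

```python
import random
import statistics


def sum_of_uniforms(count, rng):
    """Sum `count` independent samples drawn uniformly from [-0.5, 0.5]."""
    return sum(rng.uniform(-0.5, 0.5) for _ in range(count))


rng = random.Random(0)  # fixed seed so the sketch is repeatable
samples = [sum_of_uniforms(48, rng) for _ in range(20_000)]

# Each uniform term has variance 1/12, so the sum of 48 terms has variance 4.
sigma = statistics.pstdev(samples)

# For a Gaussian, roughly 68% of the samples lie within one sigma of the mean.
within_one_sigma = sum(abs(s) < sigma for s in samples) / len(samples)

print(f"sigma ~ {sigma:.3f}, fraction within one sigma ~ {within_one_sigma:.3f}")
```

Increasing the number of summed terms brings the histogram of `samples` closer to the normal curve, regardless of the distribution of the individual terms.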

AWGN is often used as a channel model in which the only impairment to communication is a linear addition of wideband or white noise with a constant spectral density (expressed as watts per hertz of bandwidth) and a Gaussian distribution of amplitude. The model does not account for fading, frequency selectivity, interference, nonlinearity or dispersion. However, it produces simple and tractable mathematical models which are useful for gaining insight into the underlying behavior of a system before these other phenomena are considered.
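As an illustration of this channel model, the sketch below adds independent zero-mean Gaussian samples to a signal so that a requested signal-to-noise ratio is met. The function name `add_awgn`, its parameters, and the test sine wave are assumptions made for this example; independent samples give a flat spectrum only in the discrete-time sense.

```python
import math
import random


def add_awgn(signal, snr_db, rng=None):
    """Return `signal` plus zero-mean Gaussian noise at the requested SNR (in dB)."""
    rng = rng or random.Random()
    # Average signal power: mean square of the samples.
    signal_power = sum(s * s for s in signal) / len(signal)
    # Noise variance that yields the requested signal-to-noise ratio.
    noise_power = signal_power / (10 ** (snr_db / 10))
    sigma = math.sqrt(noise_power)
    # Additive: each output sample is the input sample plus an independent draw.
    return [s + rng.gauss(0.0, sigma) for s in signal]


# Example: five cycles of a unit-amplitude sine wave observed at 10 dB SNR.
clean = [math.sin(2 * math.pi * 5 * t / 1000) for t in range(1000)]
noisy = add_awgn(clean, snr_db=10, rng=random.Random(0))
```

The sketch models none of the other impairments listed above (fading, frequency selectivity, interference, nonlinearity, dispersion); those would have to be applied to `clean` separately.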

The AWGN channel is a good model for many satellite and deep space communication links. It is not a good model for most terrestrial links because of multipath, terrain blocking, interference, etc. However, for terrestrial path modeling, AWGN is commonly used to simulate the background noise of the channel under study, in addition to the multipath, terrain blocking, interference, ground clutter and self-interference that modern radio systems encounter in terrestrial operation.

Channel capacity

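The AWGN channel is represented by a series of outputs $Y_i$ at discrete-time index $i$. Each $Y_i$ is the sum of the input $X_i$ and noise $Z_i$, where the $Z_i$ are independent and identically distributed, drawn from a zero-mean normal distribution with variance $N$ (the noise power). The $Z_i$ are further assumed to be uncorrelated with the $X_i$:

$$Z_i \sim \mathcal{N}(0, N), \qquad Y_i = X_i + Z_i.$$

The capacity of the channel is infinite unless the noise variance $N$ is nonzero and the $X_i$ are sufficiently constrained. The most common constraint on the input is the so-called power constraint, which requires that a codeword $(x_1, x_2, \dots, x_k)$ transmitted through the channel satisfies

$$\frac{1}{k} \sum_{i=1}^{k} x_i^2 \leq P,$$

where $P$ represents the maximum channel power. The channel capacity of the power-constrained channel is therefore

$$C = \max_{f(x) \,:\, E(X^2) \leq P} I(X;Y),$$

where $f(x)$ is the distribution of $X$. Expanding $I(X;Y)$ in terms of the differential entropy gives

$$I(X;Y) = h(Y) - h(Y \mid X) = h(Y) - h(X + Z \mid X) = h(Y) - h(Z \mid X).$$

But $X$ and $Z$ are independent, therefore

$$I(X;Y) = h(Y) - h(Z).$$

Evaluating the differential entropy of a Gaussian gives:

$$h(Z) = \frac{1}{2} \log_2 (2 \pi e N).$$

Because $X$ and $Z$ are independent, $Z$ has zero mean, and their sum gives $Y$:

$$E(Y^2) = E\big((X + Z)^2\big) = E(X^2) + 2 E(X) E(Z) + E(Z^2) \leq P + N.$$

From this bound, we infer from a property of the differential entropy (for a given second moment it is maximized by a Gaussian) that

$$h(Y) \leq \frac{1}{2} \log_2 \big(2 \pi e (P + N)\big).$$

Therefore, the channel capacity is given by the highest achievable bound on the mutual information:

$$I(X;Y) \leq \frac{1}{2} \log_2 \big(2 \pi e (P + N)\big) - \frac{1}{2} \log_2 (2 \pi e N) = \frac{1}{2} \log_2 \left(1 + \frac{P}{N}\right),$$

where $I(X;Y)$ is maximized when $X \sim \mathcal{N}(0, P)$.

Thus the channel capacity $C$ for the AWGN channel is given by

$$C = \frac{1}{2} \log_2 \left(1 + \frac{P}{N}\right).$$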
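The capacity of the power-constrained AWGN channel is commonly written as $C = \frac{1}{2} \log_2 (1 + P/N)$ bits per channel use, where $P$ is the signal power limit and $N$ the noise variance. A minimal sketch for evaluating it follows; the function name `awgn_capacity` and the example values are illustrative.

```python
import math


def awgn_capacity(signal_power, noise_power):
    """Capacity in bits per channel use: C = 0.5 * log2(1 + P / N)."""
    if signal_power < 0 or noise_power <= 0:
        raise ValueError("need signal_power >= 0 and noise_power > 0")
    return 0.5 * math.log2(1 + signal_power / noise_power)


# Capacity for a few linear signal-to-noise ratios (noise power fixed at 1).
for snr in (1, 3, 15):
    print(snr, awgn_capacity(snr, 1.0))
```

With unit noise power, signal powers of 1, 3 and 15 give 0.5, 1 and 2 bits per channel use: each additional bit requires roughly a fourfold increase in signal power.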

Channel capacity and sphere packing

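Suppose that we are sending messages through the channel with index ranging from $1$ to $M$, the number of distinct possible messages. If we encode the $M$ messages into codewords of length $n$, then we define the rate $R$ as:

$$R = \frac{\log_2 M}{n}.$$

A rate is said to be achievable if there is a sequence of codes so that the maximum probability of error tends to zero as $n$ approaches infinity. The capacity $C$ is the highest achievable rate.

Consider a codeword of length $n$ sent through the AWGN channel with noise level $N$. When received, the codeword vector has per-component variance $N$ and its mean is the codeword sent. The received vector is very likely to be contained in a sphere of radius $\sqrt{n(N + \epsilon)}$ around the codeword sent. If we decode by mapping every received vector onto the codeword at the center of this sphere, then an error occurs only when the received vector falls outside the sphere, which is very unlikely.

Each codeword vector has an associated sphere of received codeword vectors which are decoded to it, and each such sphere must map uniquely onto a codeword. Because these spheres therefore must not intersect, we are faced with the problem of sphere packing. How many distinct codewords can we pack into our $n$-dimensional space? The received vectors have a maximum energy of $n(P + N)$ and therefore must occupy a sphere of radius $\sqrt{n(P + N)}$. Each codeword sphere has radius $\sqrt{nN}$. The volume of an $n$-dimensional sphere is directly proportional to $r^n$, so the maximum number of uniquely decodable spheres that can be packed into the sphere of received vectors at transmission power $P$ is:

$$\frac{\big(n(P + N)\big)^{n/2}}{(nN)^{n/2}} = 2^{\frac{n}{2} \log_2 \left(1 + \frac{P}{N}\right)}.$$

By this argument, the rate $R$ can be no more than $\frac{1}{2} \log_2 \left(1 + \frac{P}{N}\right)$.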
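The counting step in the sphere-packing argument can be checked numerically. The sketch below assumes the standard radii for this argument, $\sqrt{n(P+N)}$ for the sphere containing all received vectors and $\sqrt{nN}$ for each noise sphere, and works with logarithms because the volumes themselves overflow for large $n$. The function name and the example values of $n$, $P$ and $N$ are illustrative.

```python
import math


def log2_sphere_count(n, signal_power, noise_power):
    """log2 of (volume of the received-vector sphere) / (volume of one noise sphere)."""
    big_radius = math.sqrt(n * (signal_power + noise_power))
    small_radius = math.sqrt(n * noise_power)
    # Volumes scale as r**n; the dimension-dependent constant cancels in the ratio.
    return n * (math.log2(big_radius) - math.log2(small_radius))


n, P, N = 100, 3.0, 1.0
rate_bound = log2_sphere_count(n, P, N) / n      # bits per channel use
closed_form = 0.5 * math.log2(1 + P / N)         # the bound stated in the text

print(rate_bound, closed_form)
```

Dividing the log-count by $n$ removes the dependence on the block length, so the two printed values agree (up to floating-point rounding) for any $n$.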

Achievability

In this section, we show achievability of the upper bound on the rate from the last section.

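A codebook, known to both encoder and decoder, is generated by selecting codewords of length $n$ whose components are i.i.d. Gaussian with variance $P - \epsilon$ and mean zero. For large $n$, the empirical variance of each codeword will be very close to the variance of its distribution, so the power constraint is violated only with small probability.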

Received messages are decoded to a message in the codebook which is uniquely jointly typical. If there is no such message or if the power constraint is violated, a decoding error is declared.

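Let $X^n(i)$ denote the codeword for message $i$, while $Y^n$ is the received vector. Define the following three events:

- Event $U$: the power of the transmitted codeword is larger than $P$, so the power constraint is violated.

- Event $V$: the transmitted and received codewords are not jointly typical.

- Event $E_j$: $(X^n(j), Y^n)$ is in the jointly typical set $A_\epsilon^{(n)}$ for some $j \neq i$, which is to say that an incorrect codeword is jointly typical with the received vector.

An error therefore occurs if $U$, $V$ or any of the $E_j$ occur. By the law of large numbers, $P(U)$ goes to zero as $n$ approaches infinity, and by the joint asymptotic equipartition property (AEP) the same applies to $P(V)$. Therefore, for sufficiently large $n$, both $P(U)$ and $P(V)$ are less than $\epsilon$. Since $X^n(i)$ and $X^n(j)$ are independent for $i \neq j$, $X^n(j)$ and $Y^n$ are also independent. Therefore, by the joint AEP, $P(E_j) \leq 2^{-n(I(X;Y) - 3\epsilon)}$. This allows us to bound the probability of error $P_e^{(n)}$ as follows:

$$P_e^{(n)} \leq P(U) + P(V) + \sum_{j \neq i} P(E_j) \leq 2\epsilon + (2^{nR} - 1)\, 2^{-n(I(X;Y) - 3\epsilon)} \leq 2\epsilon + 2^{-n(I(X;Y) - 3\epsilon - R)} \leq 3\epsilon,$$

where the last step holds for sufficiently large $n$ provided that $R < I(X;Y) - 3\epsilon$.

Therefore, as $n$ approaches infinity, $P_e^{(n)}$ goes to zero whenever $R < I(X;Y) - 3\epsilon$. Since $\epsilon$ can be chosen arbitrarily small, there is a code of rate $R$ arbitrarily close to the capacity derived earlier.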
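One ingredient of the achievability argument is that randomly drawn Gaussian codewords rarely violate the power constraint once the block length is large. The following is a small Monte Carlo sketch of that single step, not of the full jointly typical decoder; the helper names, the codebook size of 200, and the values $P = 1$ and $\epsilon = 0.2$ are assumptions chosen for illustration.

```python
import random


def random_codebook(num_codewords, n, variance, rng):
    """Draw codewords of length n with i.i.d. zero-mean Gaussian components."""
    sigma = variance ** 0.5
    return [[rng.gauss(0.0, sigma) for _ in range(n)] for _ in range(num_codewords)]


def fraction_violating(codebook, power_limit):
    """Fraction of codewords whose average power exceeds the power limit."""
    violations = sum(
        1 for codeword in codebook
        if sum(x * x for x in codeword) / len(codeword) > power_limit
    )
    return violations / len(codebook)


rng = random.Random(1)  # fixed seed so the sketch is repeatable
P, eps = 1.0, 0.2       # illustrative power limit and back-off
for n in (10, 100, 1000):
    book = random_codebook(200, n, P - eps, rng)
    print(n, fraction_violating(book, P))
```

The printed fraction shrinks as $n$ grows, which is the law-of-large-numbers behaviour the proof relies on for the power-constraint event.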

Coding theorem converse

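Here we show that rates above the capacity $C = \frac{1}{2} \log_2 \left(1 + \frac{P}{N}\right)$ are not achievable.

Suppose that the power constraint is satisfied for a codebook, and further suppose that the messages follow a uniform distribution. Let $W$ be the input message and $\hat{W}$ the decoded output message. Thus the information flows as:

$$W \longrightarrow X^{(n)}(W) \longrightarrow Y^{(n)} \longrightarrow \hat{W}.$$

Making use of Fano's inequality gives:

$$H(W \mid \hat{W}) \leq 1 + nR P_e^{(n)} = n \epsilon_n,$$

where $\epsilon_n \to 0$ as $P_e^{(n)} \to 0$ and $n \to \infty$.

Let $X_i$ be the $i$-th symbol of the transmitted codeword, with $Y_i$ and $Z_i$ the corresponding output and noise. Then:

$$\begin{aligned}
nR = H(W) &= I(W; \hat{W}) + H(W \mid \hat{W}) \\
&\leq I(W; \hat{W}) + n \epsilon_n \\
&\leq I(X^{(n)}; Y^{(n)}) + n \epsilon_n \\
&= h(Y^{(n)}) - h(Y^{(n)} \mid X^{(n)}) + n \epsilon_n \\
&= h(Y^{(n)}) - h(Z^{(n)}) + n \epsilon_n \\
&\leq \sum_{i=1}^{n} \big( h(Y_i) - h(Z_i) \big) + n \epsilon_n.
\end{aligned}$$

Let $P_i$ be the average power of the $i$-th symbol over the codebook:

$$P_i = \frac{1}{2^{nR}} \sum_{w} x_i^2(w),$$

where the sum is over all input messages $w$. $X_i$ and $Z_i$ are independent, thus the expected power of $Y_i$ is, for noise level $N$:

$$E(Y_i^2) = P_i + N.$$

And, because the normal distribution maximizes the differential entropy for a given second moment, we have that

$$h(Y_i) \leq \frac{1}{2} \log_2 \big(2 \pi e (P_i + N)\big).$$

Therefore,

$$\begin{aligned}
nR &\leq \sum_{i=1}^{n} \big( h(Y_i) - h(Z_i) \big) + n \epsilon_n \\
&\leq \sum_{i=1}^{n} \left( \frac{1}{2} \log_2 \big(2 \pi e (P_i + N)\big) - \frac{1}{2} \log_2 (2 \pi e N) \right) + n \epsilon_n \\
&= \sum_{i=1}^{n} \frac{1}{2} \log_2 \left(1 + \frac{P_i}{N}\right) + n \epsilon_n.
\end{aligned}$$

We may apply Jensen's inequality to $\log_2(1 + x)$, a concave function of $x$, to get:

$$\frac{1}{n} \sum_{i=1}^{n} \frac{1}{2} \log_2 \left(1 + \frac{P_i}{N}\right) \leq \frac{1}{2} \log_2 \left(1 + \frac{1}{n} \sum_{i=1}^{n} \frac{P_i}{N}\right).$$

Because each codeword individually satisfies the power constraint, the average also satisfies the power constraint. Therefore,

$$\frac{1}{n} \sum_{i=1}^{n} P_i \leq P,$$

which we may apply to simplify the inequality above and get:

$$\frac{1}{2} \log_2 \left(1 + \frac{1}{n} \sum_{i=1}^{n} \frac{P_i}{N}\right) \leq \frac{1}{2} \log_2 \left(1 + \frac{P}{N}\right).$$

Therefore, it must be that $R \leq \frac{1}{2} \log_2 \left(1 + \frac{P}{N}\right) + \epsilon_n$. Since $\epsilon_n \to 0$, $R$ cannot exceed a value arbitrarily close to the capacity derived earlier.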
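The Jensen step of the converse says that spreading power unevenly across symbol positions cannot beat using the average power in every position, because $\frac{1}{2}\log_2(1 + x)$ is concave. A minimal numerical check follows; the per-position powers in `powers` are made-up values whose mean is 1, and the function names are illustrative.

```python
import math


def half_log(x):
    """The concave function 0.5 * log2(1 + x) that appears in the converse."""
    return 0.5 * math.log2(1 + x)


def jensen_sides(powers, noise_power):
    """Return (average of the per-position terms, term evaluated at the average power)."""
    n = len(powers)
    lhs = sum(half_log(p / noise_power) for p in powers) / n
    rhs = half_log(sum(powers) / n / noise_power)
    return lhs, rhs


# Hypothetical per-position average powers with mean 1.0, and unit noise power.
lhs, rhs = jensen_sides([0.2, 0.5, 1.0, 2.3], noise_power=1.0)
print(lhs, rhs)  # lhs is the smaller of the two
```

The two sides coincide only when all the powers are equal, which matches the intuition that a constant power allocation is optimal for this channel.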

Effects in time domain

In serial data communications, the AWGN mathematical model is used to model the timing error caused by random jitter (RJ).

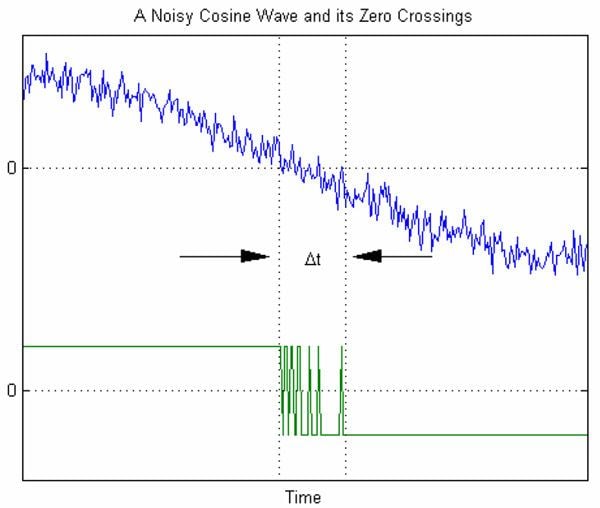

The graph to the right shows an example of timing errors associated with AWGN. The variable Δt represents the uncertainty in the zero crossing. As the amplitude of the AWGN is increased, the signal-to-noise ratio decreases. This results in increased uncertainty Δt.

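When affected by AWGN, the average number of either positive-going or negative-going zero crossings per second at the output of a narrow bandpass filter when the input is a sine wave is:

$$\frac{\text{positive zero crossings}}{\text{second}} = \frac{\text{negative zero crossings}}{\text{second}} = f_0 \sqrt{\frac{\text{SNR} + 1 + \frac{B^2}{12 f_0^2}}{\text{SNR} + 1}},$$

where $f_0$ is the center frequency of the filter, $B$ is the filter bandwidth, and SNR is the signal-to-noise power ratio in linear terms.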

Effects in phasor domain

In modern communication systems, bandlimited AWGN cannot be ignored. When modeling bandlimited AWGN in the phasor domain, statistical analysis reveals that the amplitudes of the real and imaginary contributions are independent variables which follow the Gaussian distribution model. When combined, the resultant phasor's magnitude is a Rayleigh distributed random variable while the phase is uniformly distributed from 0 to 2π.

The graph to the right shows an example of how bandlimited AWGN can affect a coherent carrier signal. The instantaneous response of the noise vector cannot be precisely predicted, but its time-averaged response can be predicted statistically. Because the magnitude is Rayleigh distributed, the probability that the noise phasor lies within $k\sigma$ of the origin is $1 - e^{-k^2/2}$. As shown in the graph, the noise phasor will reside inside the 1σ circle about 39% of the time, inside the 2σ circle about 86% of the time, and inside the 3σ circle about 99% of the time.
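These percentages follow from the Rayleigh distribution of the phasor magnitude, for which the probability of lying within $k\sigma$ of the origin is $1 - e^{-k^2/2}$. The sketch below evaluates that closed form and compares it with a simple simulation that draws independent Gaussian real and imaginary parts; the function names, trial count, and seed are illustrative choices.

```python
import math
import random


def prob_inside(k):
    """P(|noise phasor| < k * sigma) for a Rayleigh-distributed magnitude."""
    return 1.0 - math.exp(-k * k / 2.0)


def simulate_inside(k, sigma=1.0, trials=50_000, seed=0):
    """Estimate the same probability from independent Gaussian I and Q components."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        re = rng.gauss(0.0, sigma)  # real (in-phase) component
        im = rng.gauss(0.0, sigma)  # imaginary (quadrature) component
        if math.hypot(re, im) < k * sigma:
            hits += 1
    return hits / trials


for k in (1, 2, 3):
    print(k, round(prob_inside(k), 4), round(simulate_inside(k), 4))
```

The closed form gives roughly 0.393, 0.865 and 0.989 for the 1σ, 2σ and 3σ circles. These are lower than the familiar 68%, 95% and 99.7% figures for a one-dimensional Gaussian because both components must be small at the same time.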