
What are 100 year floods

A one-hundred-year flood is a flood event that has a 1% probability of being equaled or exceeded in any given year. The 100-year flood is also referred to as the 1% flood, since its annual exceedance probability is 1%. For river systems, the 100-year flood is generally expressed as a flow rate. Based on the expected 100-year flood flow rate, the flood water level can be mapped as an area of inundation. The resulting floodplain map is referred to as the 100-year floodplain. Estimates of the 100-year flood flow rate and other streamflow statistics for any stream in the United States are available. In the UK, the Environment Agency publishes a comprehensive map of all areas at risk of a 1-in-100-year flood (Flood Zone 3). Areas near the coast of an ocean or large lake can also be flooded by combinations of tide, storm surge, and waves. Maps of the riverine or coastal 100-year floodplain may figure importantly in building permits, environmental regulations, and flood insurance.

Contents

- What are 100 year floods

- Probability

- Statistical assumptions

- Probability uncertainty

- Observed intervals between floods

- Regulatory use

- References

Probability

A common misunderstanding is that a 100-year flood is likely to occur only once in a 100-year period. In fact, there is approximately a 63.4% chance of one or more 100-year floods occurring in any 100-year period. On the Danube River at Passau, Germany, the actual intervals between 100-year floods during 1501 to 2013 ranged from 37 to 192 years. The probability Pe that one or more floods occurring during any period will exceed a given flood threshold can be expressed, using the binomial distribution, as

$$P_e = 1 - \left(1 - \frac{1}{T}\right)^n$$

where T is the threshold return period (e.g. 100-yr, 50-yr, 25-yr, and so forth), and n is the number of years in the period. The probability of exceedance Pe is also described as the natural, inherent, or hydrologic risk of failure. However, the expected value of the number of 100-year floods occurring in any 100-year period is 1.
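The relationship between return period and multi-year risk is easy to check numerically. The following is a minimal Python sketch, assuming the standard binomial form Pe = 1 - (1 - 1/T)^n; the function names are illustrative and not taken from any flood-analysis library.

```python
def exceedance_probability(return_period_years: float, n_years: int) -> float:
    """Chance of at least one exceedance of the T-year flood in n years.

    Assumes independent years, each with annual probability 1/T,
    i.e. Pe = 1 - (1 - 1/T) ** n.
    """
    annual_probability = 1.0 / return_period_years
    return 1.0 - (1.0 - annual_probability) ** n_years


def expected_exceedances(return_period_years: float, n_years: int) -> float:
    """Expected number of exceedances in n years (mean of the binomial count)."""
    return n_years / return_period_years


if __name__ == "__main__":
    # Risk of at least one 100-year flood over periods of different length.
    for period in (1, 10, 30, 100):
        chance = exceedance_probability(100, period)
        print(f"{period:>3} years: {chance:.1%}")
    print("expected count in 100 years:", expected_exceedances(100, 100))
```

For T = 100 and n = 100 the first function returns about 0.634, while the expected count is exactly 1. The two figures differ because some 100-year periods contain several such floods and others contain none.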

Ten-year floods have a 10% chance of occurring in any given year (Pe = 0.10); 500-year floods have a 0.2% chance of occurring in any given year (Pe = 0.002); and so on. The percent chance of an X-year flood occurring in a single year can be calculated by dividing 100 by X. A similar analysis is commonly applied to coastal flooding or rainfall data. The recurrence interval of a storm is rarely identical to that of an associated riverine flood, because of rainfall timing and location variations among different drainage basins.

The field of extreme value theory was created to model rare events such as 100-year floods for the purposes of civil engineering. This theory is most commonly applied to the maximum or minimum observed stream flows of a given river. In desert areas where there are only ephemeral washes, this method is applied to the maximum observed rainfall over a given period of time (24 hours, 6 hours, or 3 hours). The extreme value analysis considers only the most extreme event observed in a given year. So, between a large spring runoff and a heavy summer rainstorm, whichever resulted in more runoff would be considered the extreme event, while the smaller event would be ignored in the analysis (even though both may have been capable of causing terrible flooding in their own right).
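Keeping only the largest event of each year produces what is often called an annual maximum series. A minimal Python sketch of that reduction follows; the records and their flow values are hypothetical and chosen only to show a smaller event in the same year being discarded.

```python
def annual_maximum_series(records):
    """Reduce (year, flow) observations to {year: largest flow in that year}."""
    maxima = {}
    for year, flow in records:
        # Keep the flow only if it beats the current maximum for that year.
        if year not in maxima or flow > maxima[year]:
            maxima[year] = flow
    return dict(sorted(maxima.items()))


# Hypothetical peak flows (arbitrary units), two events in some years.
records = [
    (2001, 410.0),  # spring runoff
    (2001, 365.0),  # summer rainstorm, dropped because it is smaller
    (2002, 290.0),
    (2002, 520.0),
    (2003, 180.0),
]

if __name__ == "__main__":
    print(annual_maximum_series(records))
```

The 2001 summer rainstorm disappears from the series even though it may have caused serious flooding, which is exactly the limitation noted above.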

Statistical assumptions

A number of assumptions are made to complete the analysis that determines the 100-year flood. First, the extreme events observed in each year must be independent from year to year. In other words, the maximum river flow rate from 1984 cannot be significantly correlated with the observed flow rate in 1985, the 1985 value cannot be correlated with that of 1986, and so forth. The second assumption is that the observed extreme events must come from the same probability distribution function. The third assumption is that the probability distribution relates to the largest storm (rainfall or river flow rate measurement) that occurs in any one year. The fourth assumption is that the probability distribution function is stationary, meaning that the mean (average), standard deviation, and maximum and minimum values are not increasing or decreasing over time. This concept is referred to as stationarity.

The first assumption is often but not always valid and should be tested on a case-by-case basis. The second assumption is often valid if the extreme events are observed under similar climate conditions. For example, if the extreme events on record all come from late summer thunderstorms (as is the case in the southwest U.S.), or from snowpack melting (as is the case in the north-central U.S.), then this assumption should be valid. If, however, some extreme events come from thunderstorms, others from snowpack melting, and others from hurricanes, then this assumption is most likely not valid. The third assumption is a problem only when trying to forecast a low but maximum-flow event (for example, an event smaller than a 2-year flood). Since this is not typically a goal in extreme analysis or in civil engineering design, the situation rarely presents itself. The final assumption about stationarity is difficult to test from data for a single site because of the large uncertainties in even the longest flood records (see next section). More broadly, substantial evidence of climate change strongly suggests that the probability distribution is also changing and that managing flood risks in the future will become even more difficult. The simplest implication of this is that not all of the historical data are, or can be, considered valid as input into the extreme event analysis.
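One simple way to screen the first assumption is the lag-1 autocorrelation of the annual maxima, which measures how strongly each year's peak tracks the previous year's. The Python sketch below is only an illustrative check, not a method prescribed by the text; the ±1.96/√n bound is a common rule of thumb for an uncorrelated series and is used here as an assumption.

```python
import math
from statistics import mean


def lag1_autocorrelation(series):
    """Sample lag-1 autocorrelation coefficient of a sequence of annual maxima."""
    if len(series) < 3:
        raise ValueError("need at least three values")
    centre = mean(series)
    deviations = [value - centre for value in series]
    denominator = sum(d * d for d in deviations)
    if denominator == 0:
        raise ValueError("series is constant")
    # Products of each year's deviation with the following year's deviation.
    numerator = sum(a * b for a, b in zip(deviations, deviations[1:]))
    return numerator / denominator


def looks_independent(series):
    """Rule-of-thumb screen: |r1| within about 1.96 / sqrt(n) of zero."""
    bound = 1.96 / math.sqrt(len(series))
    return abs(lag1_autocorrelation(series)) <= bound
```

A coefficient near zero is consistent with year-to-year independence. A value well outside the bound suggests the record deserves closer examination before a frequency analysis is trusted.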

Probability uncertainty

When these assumptions are violated, an unknown amount of uncertainty is introduced into the reported value of what the 100-year flood means in terms of rainfall intensity or flood depth. When all of the inputs are known, the uncertainty can be measured in the form of a confidence interval. For example, one might say there is a 95% chance that the 100-year flood is greater than X, but less than Y.
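To make the idea of a confidence interval concrete, the sketch below fits a Gumbel distribution to a series of annual maxima by the method of moments and bootstraps the 100-year estimate. Both choices are assumptions made for illustration: the text does not name a distribution or an interval method, and the peak flows are hypothetical. The interval reflects sampling uncertainty only, not violations of the assumptions above.

```python
import math
import random
from statistics import mean, stdev

EULER_GAMMA = 0.5772156649  # Euler-Mascheroni constant


def gumbel_quantile(annual_maxima, return_period_years):
    """T-year value from a Gumbel fit by the method of moments (assumed model)."""
    scale = stdev(annual_maxima) * math.sqrt(6) / math.pi
    location = mean(annual_maxima) - EULER_GAMMA * scale
    non_exceedance = 1.0 - 1.0 / return_period_years
    return location - scale * math.log(-math.log(non_exceedance))


def bootstrap_interval(annual_maxima, return_period_years=100,
                       n_resamples=2000, level=0.95, seed=0):
    """Percentile bootstrap interval for the T-year estimate."""
    rng = random.Random(seed)
    size = len(annual_maxima)
    # Refit the model to each resample drawn with replacement.
    estimates = sorted(
        gumbel_quantile(rng.choices(annual_maxima, k=size), return_period_years)
        for _ in range(n_resamples)
    )
    tail = (1.0 - level) / 2.0
    lower = estimates[int(tail * n_resamples)]
    upper = estimates[int((1.0 - tail) * n_resamples) - 1]
    return lower, upper


# Hypothetical annual peak flows (arbitrary units), 30 years of record.
hypothetical_peaks = [
    312, 280, 455, 390, 510, 298, 367, 420, 610, 335,
    289, 402, 478, 350, 530, 305, 445, 380, 720, 360,
    410, 295, 480, 340, 560, 325, 430, 395, 650, 370,
]

if __name__ == "__main__":
    estimate = gumbel_quantile(hypothetical_peaks, 100)
    low, high = bootstrap_interval(hypothetical_peaks)
    print(f"100-year estimate: {estimate:.0f} (95% interval {low:.0f} to {high:.0f})")
```

With only a few decades of record the interval is typically wide relative to the estimate itself, which is the practical meaning of the X and Y in the statement above.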

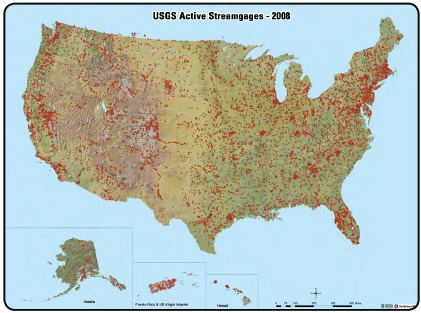

Direct statistical analysis to estimate the 100-year riverine flood is possible only at the relatively few locations where an annual series of maximum instantaneous flood discharges has been recorded. In the United States as of 2014, taxpayers have supported such records for at least 60 years at fewer than 2,600 locations, for at least 90 years at fewer than 500, and for at least 120 years at only 11. For comparison, the total area of the nation is about 3,800,000 square miles (9,800,000 km²), so there are perhaps 3,000 stream reaches that drain watersheds of 1,000 square miles (2,600 km²) and 300,000 reaches that drain 10 square miles (26 km²). In urban areas, 100-year flood estimates are needed for watersheds as small as 1 square mile (2.6 km²). For reaches without sufficient data for direct analysis, 100-year flood estimates are derived from indirect statistical analysis of flood records at other locations in a hydrologically similar region or from other hydrologic models. Similarly for coastal floods, tide gauge data exist for only about 1,450 sites worldwide, of which only about 950 have added information to the global data center since January 2010.

Much longer records of flood elevations exist at a few locations around the world, such as the Danube River at Passau, Germany, but they must be evaluated carefully for accuracy and completeness before any statistical interpretation.

For an individual stream reach, the uncertainties in any analysis can be large, so 100-year flood estimates have large individual uncertainties for most stream reaches. For the largest recorded flood at any specific location, or any potentially larger event, the recurrence interval always is poorly known. Spatial variability adds more uncertainty, because a flood peak observed at different locations on the same stream during the same event commonly represents a different recurrence interval at each location. If an extreme storm drops enough rain on one branch of a river to cause a 100-year flood, but no rain falls over another branch, the flood wave downstream from their junction might have a recurrence interval of only 10 years. Conversely, a storm that produces a 25-year flood simultaneously in each branch might form a 100-year flood downstream. During a time of flooding, news accounts necessarily simplify the story by reporting the greatest damage and largest recurrence interval estimated at any location. The public can easily and incorrectly conclude that the recurrence interval applies to all stream reaches in the flood area.

Observed intervals between floods

Peak elevations of 14 floods as early as 1501 on the Danube River at Passau, Germany, reveal great variability in the actual intervals between floods. Flood events greater than the 50-year flood occurred at intervals of 4 to 192 years since 1501, and the 50-year flood of 2002 was followed only 11 years later by a 500-year flood. Only half of the intervals between 50- and 100-year floods were within 50 percent of the nominal average interval. Similarly, the intervals between 5-year floods during 1955 to 2007 ranged from 5 months to 16 years, and only half were within 2.5 to 7.5 years.
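The comparison of observed gaps with the nominal average interval can be reproduced for any list of flood years. The Python sketch below uses hypothetical years, not the Passau record, and the helper names are illustrative.

```python
def intervals_between(flood_years):
    """Gaps, in years, between consecutive floods in a list of flood years."""
    ordered = sorted(flood_years)
    return [later - earlier for earlier, later in zip(ordered, ordered[1:])]


def share_near_nominal(intervals, nominal_years, tolerance=0.5):
    """Fraction of gaps within +/- tolerance of the nominal return period."""
    if not intervals:
        raise ValueError("need at least one interval")
    low = nominal_years * (1.0 - tolerance)
    high = nominal_years * (1.0 + tolerance)
    inside = sum(1 for gap in intervals if low <= gap <= high)
    return inside / len(intervals)


# Hypothetical years in which a 50-year flood threshold was exceeded.
hypothetical_flood_years = [1900, 1907, 1955, 1997, 2008]

if __name__ == "__main__":
    gaps = intervals_between(hypothetical_flood_years)
    print("intervals:", gaps)
    print("share within 50% of nominal:", share_near_nominal(gaps, 50))
```

In this made-up record, gaps of 7 and 11 years sit alongside gaps of more than 40 years, so only half of the intervals fall within 25 to 75 years of each other's nominal spacing.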

Regulatory use

In the United States, the 100-year flood provides the risk basis for flood insurance rates. Complete information on the National Flood Insurance Program is available from the Federal Emergency Management Agency (FEMA), which administers the program. A regulatory flood or base flood is routinely established for river reaches through a science-based rulemaking process targeted to a 100-year flood at the historical average recurrence interval. In addition to historical flood data, the process accounts for previously established regulatory values, the effects of flood-control reservoirs, and changes in land use in the watershed. Coastal flood hazards have been mapped by a similar approach that includes the relevant physical processes. Most areas where serious floods can occur in the United States have been mapped consistently in this manner. On average nationwide, those 100-year flood estimates are sufficient for the purposes of the National Flood Insurance Program and offer reasonable estimates of future flood risk, if the future is like the past. Approximately 3% of the U.S. population lives in areas subject to the 1% annual chance coastal flood hazard.