
Pregroup grammar (PG) is a grammar formalism intimately related to categorial grammars. Much like categorial grammar (CG), PG is a kind of type logical grammar. Unlike CG, however, PG does not have a distinguished function type. Rather, PG uses inverse types combined with its monoidal operation.


Definition of a pregroup

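A pregroup is a partially ordered algebra $(A, \cdot, 1, l, r, \leq)$ such that $(A, \cdot, 1, \leq)$ is a partially ordered monoid (multiplication preserves the order in each argument), and the maps $a \mapsto a^l$ and $a \mapsto a^r$, which give the left and right adjoints of $a$, satisfy the following relations:

(contraction) $a^l \cdot a \leq 1$ and $a \cdot a^r \leq 1$
(expansion) $1 \leq a \cdot a^l$ and $1 \leq a^r \cdot a$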

The contraction and expansion relations are sometimes called Ajdukiewicz laws.
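
The laws can be made concrete in the free pregroup over a set of atoms, which is the setting of the grammars below. The following is a minimal sketch. It assumes Lambek's usual encoding of a simple type as a pair (atom, z). Here z = 0 is the atom itself, z = -1 is its left adjoint $a^l$, z = 1 is its right adjoint $a^r$, and further values iterate (z = -2 is $a^{ll}$). It also assumes that distinct atoms are unordered. The function names `show`, `contracts` and `expands` are hypothetical.

```python
from typing import Tuple

# Assumed encoding: a simple type is (atom, z). z = 0 is the atom a,
# z = -1 is a^l, z = 1 is a^r, z = -2 is a^{ll}, z = 2 is a^{rr}, and so on.
Simple = Tuple[str, int]


def show(t: Simple) -> str:
    """Render a simple type in the a^l / a^r notation used in the text."""
    atom, z = t
    if z == 0:
        return atom
    return atom + "^" + ("l" * -z if z < 0 else "r" * z)


def contracts(x: Simple, y: Simple) -> bool:
    """True if x . y <= 1 is a contraction, e.g. a^l a <= 1 or a a^r <= 1.

    With unordered atoms this holds exactly when both share the atom and
    y's exponent is one more than x's (a^{ll} a^l <= 1 follows the same pattern).
    """
    return x[0] == y[0] and y[1] == x[1] + 1


def expands(x: Simple, y: Simple) -> bool:
    """True if 1 <= x . y is an expansion, e.g. 1 <= a a^l or 1 <= a^r a."""
    return x[0] == y[0] and y[1] == x[1] - 1


a, a_l, a_r = ("a", 0), ("a", -1), ("a", 1)
for x, y in [(a_l, a), (a, a_r), (a, a_l), (a_r, a)]:
    print(f"{show(x)} {show(y)}: contraction={contracts(x, y)}, expansion={expands(x, y)}")
```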

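From this, it can be proven that the following equations hold:

$a^{lr} = a = a^{rl}$
$1^l = 1 = 1^r$
$(a \cdot b)^l = b^l \cdot a^l$
$(a \cdot b)^r = b^r \cdot a^r$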
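
In the free pregroup over a set of atoms (the kind used by pregroup grammars), identities of this sort can be checked mechanically. The following is a minimal sketch that assumes the encoding of a simple type as (atom, z), with z = -1 for a left adjoint and z = 1 for a right adjoint. A general type is a list of simple types, and the adjoint of a product is computed by reversing the factors and shifting every exponent by one. The helpers `left`, `right` and `show` are hypothetical names. The assertions check $a^{lr} = a = a^{rl}$, $1^l = 1 = 1^r$, and the order-reversing behaviour of adjoints on products.

```python
from typing import List, Tuple

# Assumed encoding (as in Lambek's free pregroup): a simple type is (atom, z),
# with z = 0 the atom, z = -1 its left adjoint and z = 1 its right adjoint.
# A general type is a product of simple types; the empty list is the unit 1.
Simple = Tuple[str, int]
Product = List[Simple]


def left(p: Product) -> Product:
    """Left adjoint of a product: reverse the factors and lower each exponent."""
    return [(atom, z - 1) for atom, z in reversed(p)]


def right(p: Product) -> Product:
    """Right adjoint of a product: reverse the factors and raise each exponent."""
    return [(atom, z + 1) for atom, z in reversed(p)]


def show(p: Product) -> str:
    """Render a product in the notation of the text, e.g. 'N^r S N^l'."""
    if not p:
        return "1"
    parts = []
    for atom, z in p:
        parts.append(atom if z == 0 else atom + "^" + ("l" * -z if z < 0 else "r" * z))
    return " ".join(parts)


a: Product = [("a", 0)]
b: Product = [("b", 0)]
assert right(left(a)) == a == left(right(a))        # a^{lr} = a = a^{rl}
assert left([]) == [] == right([])                  # 1^l = 1 = 1^r
assert left(a + b) == left(b) + left(a)             # (a . b)^l = b^l . a^l
assert right(a + b) == right(b) + right(a)          # (a . b)^r = b^r . a^r
print(show(left([("N", 1), ("S", 0), ("N", -1)])))  # prints "N^ll S^l N"
```

Note that iterating the same adjoint does not return to the start: in this encoding $a^{ll}$ is (a, -2), not $a$.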

The symbol

Definition of a pregroup grammar

A pregroup grammar consists of a lexicon of words (and possibly morphemes) L, a set of atomic types T which freely generates a pregroup, and a typing relation between the words of L and the types of that pregroup.
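
Concretely, a lexicon with its typing relation can be stored as a map from each word to the list of types it may take. A sentence then has one candidate type for each choice of lexical types. The following sketch assumes the (atom, z) encoding of simple types (z = -1 for a left adjoint, z = 1 for a right adjoint). The lexical entries and the name `candidate_types` are hypothetical and are not taken from the source.

```python
from itertools import product
from typing import Dict, List, Tuple

# Assumed encoding: a simple type is (atom, z); z = 0 atom, -1 left adjoint, 1 right adjoint.
Simple = Tuple[str, int]
PType = Tuple[Simple, ...]  # a product of simple types

# Hypothetical lexicon. Because typing is a relation rather than a function,
# a word may be listed with several types. Here "met" is given a transitive
# type N^r S N^l and, purely for illustration, an intransitive type N^r S.
LEXICON: Dict[str, List[PType]] = {
    "John": [(("N", 0),)],
    "Mary": [(("N", 0),)],
    "met": [(("N", 1), ("S", 0), ("N", -1)), (("N", 1), ("S", 0))],
}


def candidate_types(words: List[str]) -> List[PType]:
    """Every product type obtained by choosing one lexical type per word."""
    choices = [LEXICON[w] for w in words]  # raises KeyError for unknown words
    return [tuple(s for t in combo for s in t) for combo in product(*choices)]


for t in candidate_types(["John", "met", "Mary"]):
    print(t)
```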

Examples

The following simple examples, which model a fragment of English, illustrate the core principles of pregroups and their use in linguistics.

Let L = {John, Mary, the, dog, cat, met, barked, at}, let $T = \{N, S, N_0\}$, and let the following typing relation hold:

A sentence that has type $T$ is said to be grammatical if $T \leq S$, that is, if its type reduces to the sentence type $S$.

by first using contraction on
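
Deciding whether a string of types reduces to $S$ can be automated. The following is a minimal sketch that assumes the (atom, z) encoding and unordered atoms. It relies on Lambek's switching lemma: when the target is a single simple type, no expansions are needed. It therefore suffices to search for a non-crossing way of contracting every factor except one occurrence of $S$. The memoised interval search below does this in time cubic in the number of simple types. The names and the typing used for "John met Mary" (John, Mary : $N$; met : $N^r S N^l$) are assumptions for illustration.

```python
from functools import lru_cache
from typing import List, Tuple

# Assumed encoding: a simple type is (atom, z); z = 0 atom, -1 left adjoint, 1 right adjoint.
Simple = Tuple[str, int]


def contracts(x: Simple, y: Simple) -> bool:
    """x y <= 1 for adjacent simple types (a^l a, a a^r, a^{ll} a^l, ...)."""
    return x[0] == y[0] and y[1] == x[1] + 1


def reduces_to(types: List[Simple], target: Simple) -> bool:
    """True if the product of `types` reduces to the simple type `target` by contractions."""
    seq = tuple(types)

    @lru_cache(maxsize=None)
    def to_unit(i: int, j: int) -> bool:
        # Can seq[i:j] be contracted away completely (reduced to 1)?
        if i == j:
            return True
        # seq[i] must contract with some seq[m]; the types strictly between them
        # and the types after m must each vanish on their own (contractions never cross).
        for m in range(i + 1, j, 2):
            if contracts(seq[i], seq[m]) and to_unit(i + 1, m) and to_unit(m + 1, j):
                return True
        return False

    # Exactly one factor survives: an occurrence of `target` at some position k.
    return any(seq[k] == target and to_unit(0, k) and to_unit(k + 1, len(seq))
               for k in range(len(seq)))


N, S = ("N", 0), ("S", 0)
john_met_mary = [N, ("N", 1), S, ("N", -1), N]  # assumed types: N, N^r S N^l, N
print(reduces_to(john_met_mary, S))
print(reduces_to(john_met_mary[1:], S))         # "met Mary": no subject
```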

A more complex example shows that "the dog barked at the cat" is grammatical:
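
Longer sentences show why a search is needed rather than contracting neighbours as soon as they match. Take the assumed typing the : $N N_0^l$, dog, cat : $N_0$, barked : $N^r S$ and at : $S^r N^{rr} N^r S N^l$. A left-to-right strategy that contracts eagerly pairs the subject $N$ with the $N^r$ of "barked". It then ends with $N^{rr} N^r S$, which cannot be reduced further. The sketch below uses the (atom, z) encoding and hypothetical function names. It contrasts that greedy strategy with a backtracking search that returns the surviving $S$ and the positions paired by each contraction.

```python
from typing import Dict, List, Optional, Tuple

# Assumed encoding: a simple type is (atom, z); z = 0 atom, -1 left adjoint, 1 right adjoint.
Simple = Tuple[str, int]
Pair = Tuple[int, int]

# Hypothetical typing for the example sentence ("N0" stands for N_0).
LEXICON: Dict[str, List[Simple]] = {
    "the": [("N", 0), ("N0", -1)],                              # N N_0^l
    "dog": [("N0", 0)],                                         # N_0
    "cat": [("N0", 0)],                                         # N_0
    "barked": [("N", 1), ("S", 0)],                             # N^r S
    "at": [("S", 1), ("N", 2), ("N", 1), ("S", 0), ("N", -1)],  # S^r N^rr N^r S N^l
}


def contracts(x: Simple, y: Simple) -> bool:
    return x[0] == y[0] and y[1] == x[1] + 1


def greedy(types: List[Simple]) -> List[Simple]:
    """Contract each new type with the top of a stack whenever possible (incomplete)."""
    stack: List[Simple] = []
    for t in types:
        if stack and contracts(stack[-1], t):
            stack.pop()
        else:
            stack.append(t)
    return stack


def pairs_to_unit(seq: List[Simple], i: int, j: int) -> Optional[List[Pair]]:
    """Contraction pairs that reduce seq[i:j] to 1, or None (plain backtracking)."""
    if i == j:
        return []
    for m in range(i + 1, j, 2):
        if contracts(seq[i], seq[m]):
            inner = pairs_to_unit(seq, i + 1, m)
            rest = pairs_to_unit(seq, m + 1, j) if inner is not None else None
            if rest is not None:
                return [(i, m)] + inner + rest
    return None


def derive(types: List[Simple], target: Simple) -> Optional[Tuple[int, List[Pair]]]:
    """Position of the surviving `target` and the contracted pairs, or None."""
    for k, t in enumerate(types):
        if t == target:
            left = pairs_to_unit(types, 0, k)
            right = pairs_to_unit(types, k + 1, len(types))
            if left is not None and right is not None:
                return k, left + right
    return None


types = [s for w in "the dog barked at the cat".split() for s in LEXICON[w]]
print(greedy(types))            # stuck with N^rr N^r S
print(derive(types, ("S", 0)))
```

The backtracking search is exponential in the worst case. Memoising `pairs_to_unit` on (i, j) makes it cubic, in the manner of a chart parser.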

Semantics of pregroup grammars

Because PG lacks function types, the usual ways of giving a semantics, via the λ-calculus or via function denotations, are not available in any obvious way. Instead, two different methods exist: a purely formal method that corresponds to the λ-calculus, and a denotational method analogous to (a fragment of) the tensor mathematics of quantum mechanics.
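
The following sketch illustrates the tensor-based method. It assumes a style common in compositional distributional models: each atomic type is read as a vector space, and each contraction as a sum over a shared index. Under that reading, a transitive verb of type $N^r S N^l$ becomes a three-index tensor. The reduction $N (N^r S N^l) N \leq S$ then becomes a contraction of that tensor with the subject and object vectors. The dimensions and numbers are made up, and the function name is hypothetical.

```python
from typing import List

# Hypothetical spaces: nouns live in a 2-dimensional space N, sentences in a
# 2-dimensional space S. Identifying N^r and N^l with N (as is usual for real
# finite-dimensional spaces), a transitive verb of type N^r S N^l is stored as
# verb[i][j][k], with i, k noun indices and j a sentence index.
Vector = List[int]
Tensor3 = List[List[List[int]]]


def transitive_meaning(subj: Vector, verb: Tensor3, obj: Vector) -> Vector:
    """Contract subject and object against the verb: s_j = sum_{i,k} subj_i verb_ijk obj_k.

    The sum over i mirrors the contraction N N^r <= 1; the sum over k mirrors N^l N <= 1.
    """
    return [
        sum(subj[i] * verb[i][j][k] * obj[k]
            for i in range(len(subj)) for k in range(len(obj)))
        for j in range(len(verb[0]))
    ]


john: Vector = [1, 0]
mary: Vector = [0, 1]
met: Tensor3 = [[[1, 2], [3, 4]], [[5, 6], [7, 8]]]
print(transitive_meaning(john, met, mary))  # [2, 4]
print(transitive_meaning(mary, met, john))  # [5, 7]: word order matters
```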

Purely formal semantics

The purely formal semantics for PG consists of a logical language defined according to the following rules:

Some examples of terms are f(x), g(a,h(x,y)),

The usual conventions regarding α-conversion apply.
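
The term syntax and α-conversion can be sketched as a small datatype. The formation rules are not reproduced here, so this is only a minimal sketch. It assumes terms built from variables, constants, applications of function symbols such as f(x) and g(a, h(x, y)), and a λ-style binder. The binder's exact form is an assumption, suggested only by the stated correspondence with the λ-calculus. Two terms count as α-equivalent when they differ only in the names of bound variables. A term is closed when it has no free variables. All class and function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Tuple, Union


@dataclass(frozen=True)
class Var:
    name: str


@dataclass(frozen=True)
class Const:
    name: str


@dataclass(frozen=True)
class App:
    fn: str                   # function symbol, e.g. "f" or "g"
    args: Tuple["Term", ...]


@dataclass(frozen=True)
class Lam:                    # assumed binder; the source's own rules are not shown
    var: str
    body: "Term"


Term = Union[Var, Const, App, Lam]


def free_vars(t: Term) -> FrozenSet[str]:
    if isinstance(t, Var):
        return frozenset({t.name})
    if isinstance(t, Const):
        return frozenset()
    if isinstance(t, App):
        return frozenset().union(*(free_vars(a) for a in t.args))
    return free_vars(t.body) - {t.var}


def is_closed(t: Term) -> bool:
    return not free_vars(t)


def alpha_equiv(s: Term, t: Term) -> bool:
    """Equal up to renaming of bound variables."""
    def go(s: Term, t: Term, bs: Dict[str, int], bt: Dict[str, int], depth: int) -> bool:
        # bs / bt map each bound variable name to the depth of its innermost binder.
        if isinstance(s, Var) and isinstance(t, Var):
            if s.name in bs or t.name in bt:
                return bs.get(s.name) == bt.get(t.name)
            return s.name == t.name
        if isinstance(s, Const) and isinstance(t, Const):
            return s.name == t.name
        if isinstance(s, App) and isinstance(t, App):
            return (s.fn == t.fn and len(s.args) == len(t.args)
                    and all(go(a, b, bs, bt, depth) for a, b in zip(s.args, t.args)))
        if isinstance(s, Lam) and isinstance(t, Lam):
            return go(s.body, t.body, {**bs, s.var: depth}, {**bt, t.var: depth}, depth + 1)
        return False
    return go(s, t, {}, {}, 0)


x, y, a = Var("x"), Var("y"), Const("a")
example = App("g", (a, App("h", (x, y))))  # g(a, h(x, y))
print(sorted(free_vars(example)))          # ['x', 'y']
print(alpha_equiv(Lam("x", App("f", (x,))), Lam("y", App("f", (y,)))))  # True
```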

For a given language, we give an assignment I that maps typed words to typed closed terms in a way that respects the pregroup structure of the types. For the English fragment above, we might therefore have the following assignment (with the obvious, implicit set of atomic terms and function symbols):

where E is the type of entities in the domain, and T is the type of truth values.

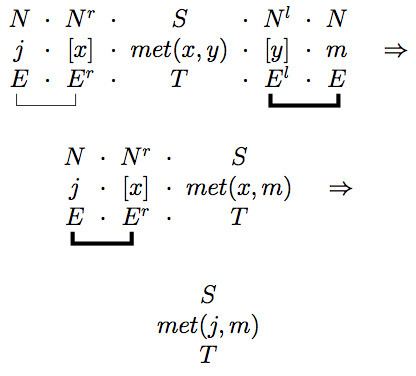

Together with this core definition of the semantics of PG, we also have reduction rules that are applied in parallel with the type reductions. Placing the syntactic types at the top and the semantics below, we have

For example, applying this to the types and semantics for the sentence

For the sentence