
Kernel regression is a non-parametric technique in statistics to estimate the conditional expectation of a random variable. The objective is to find a non-linear relation between a pair of random variables X and Y.

Contents

- Nadaraya–Watson kernel regression

- Derivation

- Priestley–Chao kernel estimator

- Gasser–Müller kernel estimator

- Example

- Script for example

- Related

- Statistical implementation

- References

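In any nonparametric regression, the conditional expectation of a variable $Y$ relative to a variable $X$ may be written:

$$\operatorname{E}(Y \mid X) = m(X)$$

where $m$ is an unknown function.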

Nadaraya–Watson kernel regression

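Nadaraya and Watson, both in 1964, proposed to estimate the regression function $m(x) = \operatorname{E}(Y \mid X = x)$ as a locally weighted average, using a kernel as a weighting function. The Nadaraya–Watson estimator is:

$$\widehat{m}_h(x) = \frac{\sum_{i=1}^n K_h(x - x_i)\, y_i}{\sum_{i=1}^n K_h(x - x_i)}$$

where $K_h(t) = \frac{1}{h} K\left(\frac{t}{h}\right)$ is a kernel with bandwidth $h$ such that $K(\cdot)$ is of order at least 1, that is, $\int_{-\infty}^{\infty} u\, K(u)\, du = 0$.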
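To make the weighted-average form concrete, here is a minimal sketch of the Nadaraya–Watson estimator in plain Python. The function names `gaussian_kernel` and `nadaraya_watson` and the sample data are illustrative assumptions, not taken from any library, and the Gaussian kernel is only one possible choice of $K$.

```python
import math

def gaussian_kernel(u):
    """Standard normal density, one common choice for the kernel K."""
    return math.exp(-0.5 * u * u) / math.sqrt(2.0 * math.pi)

def nadaraya_watson(x, xs, ys, h, kernel=gaussian_kernel):
    """Kernel-weighted average of ys at the point x, with bandwidth h > 0."""
    # K_h(x - x_i) = K((x - x_i) / h) / h; the 1/h cancels in the ratio
    # but is kept so the code mirrors the formula.
    weights = [kernel((x - xi) / h) / h for xi in xs]
    total = sum(weights)
    if total == 0.0:
        raise ValueError("all kernel weights are zero at x; try a larger h")
    return sum(w * yi for w, yi in zip(weights, ys)) / total

# Made-up illustrative data lying roughly along y = x**2.
xs = [0.0, 0.5, 1.0, 1.5, 2.0]
ys = [0.1, 0.2, 1.1, 2.2, 4.1]
print(nadaraya_watson(1.0, xs, ys, h=0.5))
```

Because the weights are non-negative and normalized by their sum, the estimate always lies between the smallest and largest observed $y_i$. A smaller bandwidth makes the fit follow nearby points more closely, while a larger one averages over a wider neighbourhood.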

Derivation

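By the definition of conditional expectation,

$$\operatorname{E}(Y \mid X = x) = \int y\, f(y \mid x)\, dy = \int y\, \frac{f(x, y)}{f(x)}\, dy.$$

Using kernel density estimation for the joint density $f(x, y)$ and the marginal density $f(x)$ with a kernel $K$, and writing $K_h(t) = \frac{1}{h} K\left(\frac{t}{h}\right)$,

$$\widehat{f}(x, y) = \frac{1}{n} \sum_{i=1}^n K_h(x - x_i)\, K_h(y - y_i), \qquad \widehat{f}(x) = \frac{1}{n} \sum_{i=1}^n K_h(x - x_i).$$

Substituting these estimates gives

$$\widehat{\operatorname{E}}(Y \mid X = x) = \frac{\sum_{i=1}^n K_h(x - x_i) \int y\, K_h(y - y_i)\, dy}{\sum_{j=1}^n K_h(x - x_j)} = \frac{\sum_{i=1}^n K_h(x - x_i)\, y_i}{\sum_{j=1}^n K_h(x - x_j)},$$

since $\int y\, K_h(y - y_i)\, dy = y_i$ for a kernel that integrates to one and has zero first moment. In this way we obtain the Nadaraya–Watson estimator.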
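The derivation can be checked numerically. The sketch below, which assumes a Gaussian kernel and made-up data, integrates $y\,\widehat{f}(x, y)/\widehat{f}(x)$ over $y$ with a simple midpoint rule and compares the result with the closed-form weighted average. The helper names (`K_h`, `nw`, `conditional_mean_via_kde`) are hypothetical.

```python
import math

def K(u):
    """Gaussian kernel (assumed here; it integrates to 1 and has zero mean)."""
    return math.exp(-0.5 * u * u) / math.sqrt(2.0 * math.pi)

def K_h(t, h):
    """Scaled kernel K_h(t) = K(t / h) / h."""
    return K(t / h) / h

def nw(x, xs, ys, h):
    """Closed-form Nadaraya-Watson estimate at x."""
    num = sum(K_h(x - xi, h) * yi for xi, yi in zip(xs, ys))
    den = sum(K_h(x - xi, h) for xi in xs)
    return num / den

def conditional_mean_via_kde(x, xs, ys, h, steps=4000):
    """Midpoint-rule integral of y * f_hat(x, y) / f_hat(x) over y."""
    n = len(xs)
    f_x = sum(K_h(x - xi, h) for xi in xs) / n
    # Integrate over a range wide enough that the Gaussian tails are negligible.
    lo, hi = min(ys) - 10.0 * h, max(ys) + 10.0 * h
    dy = (hi - lo) / steps
    integral = 0.0
    for k in range(steps):
        y = lo + (k + 0.5) * dy
        f_xy = sum(K_h(x - xi, h) * K_h(y - yi, h)
                   for xi, yi in zip(xs, ys)) / n
        integral += y * f_xy * dy
    return integral / f_x

# Made-up illustrative data.
xs = [0.0, 0.5, 1.0, 1.5, 2.0]
ys = [0.1, 0.2, 1.1, 2.2, 4.1]
direct = nw(1.0, xs, ys, 0.5)
integrated = conditional_mean_via_kde(1.0, xs, ys, 0.5)
print(direct, integrated)
```

The two printed values should agree up to the error of the numerical integration, reflecting the step in which the integral over $y$ collapses to $y_i$.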

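Priestley–Chao kernel estimator

$$\widehat{m}_{PC}(x) = h^{-1} \sum_{i=2}^n (x_i - x_{i-1})\, K\left(\frac{x - x_i}{h}\right) y_i$$

where $h$ is the bandwidth (or smoothing parameter).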
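The Priestley–Chao estimator weights each response by the spacing between consecutive design points times the kernel value, which amounts to a Riemann-sum approximation of a kernel-smoothing integral. The following is a minimal sketch, assuming sorted design points and a Gaussian kernel; the function name `priestley_chao` and the data are illustrative.

```python
import math

def gaussian_kernel(u):
    """Standard normal density, used here as the kernel K (an assumption)."""
    return math.exp(-0.5 * u * u) / math.sqrt(2.0 * math.pi)

def priestley_chao(x, xs, ys, h, kernel=gaussian_kernel):
    """Priestley-Chao estimate at x; xs must be sorted in increasing order."""
    total = 0.0
    # The sum starts at the second point because each term uses the
    # spacing x_i - x_{i-1} to the previous design point.
    for i in range(1, len(xs)):
        spacing = xs[i] - xs[i - 1]
        total += spacing * kernel((x - xs[i]) / h) * ys[i]
    return total / h

# Made-up data: a constant response on an evenly spaced grid over [0, 1].
grid = [i / 200 for i in range(201)]
constant = [1.0] * len(grid)
print(priestley_chao(0.5, grid, constant, h=0.05))
```

Unlike the Nadaraya–Watson estimator, the weights here are not normalized by their sum, so they add up to approximately one only where the design points are dense relative to $h$ and $x$ is away from the boundary of the data.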

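Gasser–Müller kernel estimator

$$\widehat{m}_{GM}(x) = h^{-1} \sum_{i=1}^n \left[\int_{s_{i-1}}^{s_i} K\left(\frac{x - u}{h}\right) du\right] y_i$$

where $s_i = \frac{x_{i-1} + x_i}{2}$.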
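The Gasser–Müller estimator replaces the point evaluation of the kernel by its integral over a cell around each design point. For a Gaussian kernel that integral has a closed form in terms of the normal distribution function, which the sketch below uses. The boundary handling is an assumption of this sketch: cell boundaries are the midpoints between consecutive sorted design points, the outer limits `a` and `b` are supplied by the caller, and the cell paired with $y_i$ is the one containing $x_i$. The function names are hypothetical.

```python
import math

def normal_cdf(z):
    """Standard normal distribution function, via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def gasser_mueller(x, xs, ys, h, a, b):
    """Gasser-Mueller estimate at x with a Gaussian kernel.

    xs must be sorted in increasing order and lie inside [a, b].
    """
    n = len(xs)
    # Cell boundaries: a, the midpoints between neighbours, then b.
    s = [a] + [(xs[i] + xs[i + 1]) / 2.0 for i in range(n - 1)] + [b]
    total = 0.0
    for i in range(n):
        # (1/h) * integral of K((x - u) / h) over [s[i], s[i+1]]
        # equals Phi((x - s[i]) / h) - Phi((x - s[i+1]) / h).
        weight = normal_cdf((x - s[i]) / h) - normal_cdf((x - s[i + 1]) / h)
        total += weight * ys[i]
    return total

# Made-up data: cell-centred grid on [0, 1] with a linear response y = x.
grid = [(i + 0.5) / 100 for i in range(100)]
print(gasser_mueller(0.5, grid, grid, h=0.05, a=0.0, b=1.0))
```

Because the cells tile the interval from `a` to `b`, the weights telescope and sum to the kernel mass inside that interval, which is close to one when $x$ is several bandwidths away from both ends.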

Example

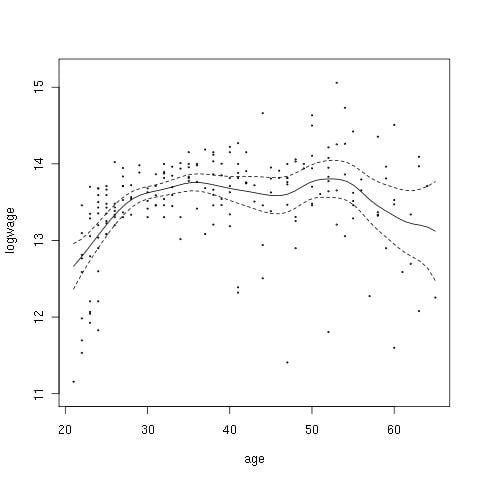

This example is based upon Canadian cross-section wage data consisting of a random sample taken from the 1971 Canadian Census Public Use Tapes for male individuals having common education (grade 13). There are 205 observations in total.

The figure above shows the estimated regression function using a second-order Gaussian kernel, along with asymptotic variability bounds.

Script for example

In the R programming language, the npreg() function can be used to deliver optimal smoothing and to create the figure given above.

Related

According to David Salsburg, the algorithms used in kernel regression were independently developed and used in fuzzy systems: "Coming up with almost exactly the same computer algorithm, fuzzy systems and kernel density-based regressions appear to have been developed completely independently of one another."

Statistical implementation

R: the function npreg() of the np package can perform kernel regression.