
In information theory, joint entropy is a measure of the uncertainty associated with a set of variables.


Definition

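The joint Shannon entropy (in bits) of two discrete random variables $X$ and $Y$ with images $\mathcal{X}$ and $\mathcal{Y}$ is defined as

$$H(X,Y) = -\sum_{x\in\mathcal{X}} \sum_{y\in\mathcal{Y}} P(x,y) \log_2 P(x,y)$$

where $x$ and $y$ are particular values of $X$ and $Y$, respectively, $P(x,y)$ is the joint probability of these values occurring together, and $P(x,y)\log_2 P(x,y)$ is defined to be 0 if $P(x,y)=0$.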
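As a minimal sketch, assuming the joint distribution is supplied as a small table of probabilities (rows indexed by values of X, columns by values of Y), the double sum in the definition can be computed directly in Python. The function name `joint_entropy` and the coin examples are illustrative, not taken from any library.

```python
import math

def joint_entropy(p_xy):
    """Joint Shannon entropy H(X,Y) in bits.

    p_xy is a 2-D table (list of rows): p_xy[i][j] = P(X = x_i, Y = y_j).
    Zero-probability cells are skipped, matching the convention
    that P(x,y) * log2 P(x,y) is taken to be 0 when P(x,y) = 0.
    """
    return -sum(p * math.log2(p) for row in p_xy for p in row if p > 0)

# Hypothetical example: two coins that always land the same way,
# so only (heads, heads) and (tails, tails) occur.
p_same = [[0.5, 0.0],
          [0.0, 0.5]]
print(joint_entropy(p_same))  # 1.0

# Hypothetical example: two independent fair coins, all four outcomes equally likely.
p_indep = [[0.25, 0.25],
           [0.25, 0.25]]
print(joint_entropy(p_indep))  # 2.0
```

The perfectly correlated coins carry only 1 bit of joint uncertainty, while the independent pair carries 2 bits.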

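For more than two variables $X_1,\ldots,X_n$ with images $\mathcal{X}_1,\ldots,\mathcal{X}_n$ this expands to

$$H(X_1,\ldots,X_n) = -\sum_{x_1\in\mathcal{X}_1} \cdots \sum_{x_n\in\mathcal{X}_n} P(x_1,\ldots,x_n) \log_2 P(x_1,\ldots,x_n)$$

where $x_1,\ldots,x_n$ are particular values of $X_1,\ldots,X_n$, respectively, $P(x_1,\ldots,x_n)$ is the probability of these values occurring together, and $P(x_1,\ldots,x_n)\log_2 P(x_1,\ldots,x_n)$ is defined to be 0 if $P(x_1,\ldots,x_n)=0$.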
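For n variables the same sum runs over every outcome tuple. A minimal sketch, assuming the joint distribution is stored as a Python dict mapping outcome tuples (x_1, ..., x_n) to probabilities; the helper name `joint_entropy` and the XOR example are illustrative choices, not part of the source.

```python
import math
from itertools import product

def joint_entropy(pmf):
    """H(X_1, ..., X_n) in bits.

    pmf maps each outcome tuple (x_1, ..., x_n) to its joint probability.
    Outcomes with probability 0 contribute nothing to the sum.
    """
    return -sum(p * math.log2(p) for p in pmf.values() if p > 0)

# Hypothetical example: X1 and X2 are independent fair bits and X3 = X1 XOR X2.
# Only the four tuples (x1, x2, x1 ^ x2) can occur, each with probability 1/4.
pmf = {(x1, x2, x1 ^ x2): 0.25 for x1, x2 in product((0, 1), repeat=2)}
print(joint_entropy(pmf))  # 2.0
```

Although three variables are involved, the joint entropy is only 2 bits, because X3 is fully determined by X1 and X2 and adds no uncertainty.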

Greater than or equal to individual entropies

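The joint entropy of a set of variables is greater than or equal to the maximum of all of the individual entropies of the variables in the set:

$$H(X,Y) \geq \max\left[H(X), H(Y)\right]$$

$$H(X_1,\ldots,X_n) \geq \max_{1\le i\le n} H(X_i)$$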
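The bound H(X,Y) ≥ max[H(X), H(Y)] can be checked numerically. The sketch below assumes a made-up correlated distribution over X ∈ {0, 1} and Y ∈ {0, 1, 2}; the helper names `entropy` and `marginals` are illustrative.

```python
import math

def entropy(probs):
    """Shannon entropy in bits of an iterable of probabilities (zeros skipped)."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def marginals(p_xy):
    """Return the marginal distributions (P(X), P(Y)) of a 2-D joint table."""
    p_x = [sum(row) for row in p_xy]          # sum over y for each x
    p_y = [sum(col) for col in zip(*p_xy)]    # sum over x for each y
    return p_x, p_y

# Hypothetical correlated joint distribution: rows are X, columns are Y.
p_xy = [[0.30, 0.10, 0.10],
        [0.05, 0.15, 0.30]]
p_x, p_y = marginals(p_xy)

h_xy = entropy(p for row in p_xy for p in row)
h_x, h_y = entropy(p_x), entropy(p_y)
print(f"H(X,Y)={h_xy:.3f}  H(X)={h_x:.3f}  H(Y)={h_y:.3f}")

# The joint entropy is never smaller than either individual entropy.
assert h_xy >= max(h_x, h_y)
```

Intuitively, observing the pair (X, Y) always reveals at least as much as observing either variable alone, so its uncertainty cannot be smaller.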

Less than or equal to the sum of individual entropies

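The joint entropy of a set of variables is less than or equal to the sum of the individual entropies of the variables in the set. This is an example of subadditivity:

$$H(X,Y) \leq H(X) + H(Y)$$

$$H(X_1,\ldots,X_n) \leq H(X_1) + \cdots + H(X_n)$$

This inequality is an equality if and only if $X$ and $Y$ are statistically independent (more generally, if and only if $X_1,\ldots,X_n$ are mutually independent).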
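A small Python sketch can illustrate both sides of the subadditivity statement: for an independent pair the joint entropy equals H(X) + H(Y) (up to floating-point rounding), and for a dependent pair it is strictly smaller. Both example distributions are made up for illustration, and `check_subadditivity` is a hypothetical helper.

```python
import math

def entropy(probs):
    """Shannon entropy in bits of an iterable of probabilities (zeros skipped)."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def check_subadditivity(p_xy):
    """Return (H(X,Y), H(X) + H(Y)) for a 2-D joint probability table."""
    p_x = [sum(row) for row in p_xy]
    p_y = [sum(col) for col in zip(*p_xy)]
    h_joint = entropy(p for row in p_xy for p in row)
    return h_joint, entropy(p_x) + entropy(p_y)

# Independent X and Y: the joint table is the outer product of the marginals.
p_x, p_y = [0.2, 0.8], [0.5, 0.3, 0.2]
independent = [[a * b for b in p_y] for a in p_x]

# Dependent X and Y: Y is a copy of a fair bit X, so off-diagonal cells are 0.
dependent = [[0.5, 0.0],
             [0.0, 0.5]]

for name, table in [("independent", independent), ("dependent", dependent)]:
    h_joint, h_sum = check_subadditivity(table)
    print(f"{name}: H(X,Y)={h_joint:.4f}  H(X)+H(Y)={h_sum:.4f}")
```

With Y a copy of X, the joint entropy is 1 bit while H(X) + H(Y) is 2 bits, so the dependence removes a full bit of uncertainty.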

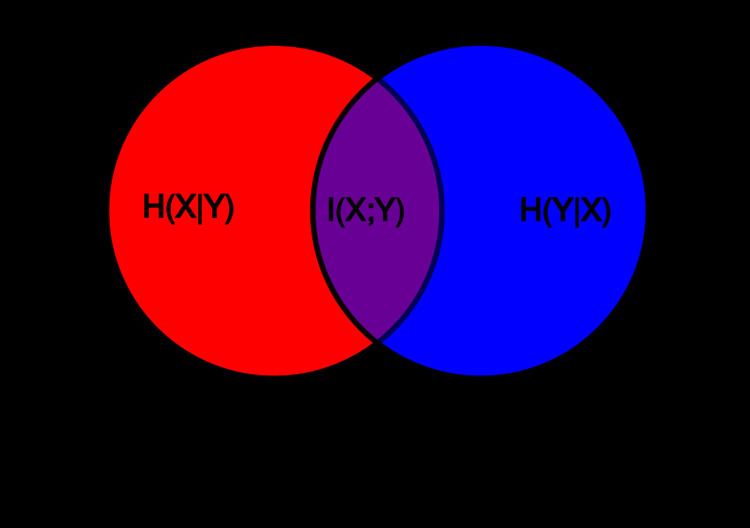

Relations to other entropy measures

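Joint entropy is used in the definition of conditional entropy:

$$H(X\mid Y) = H(X,Y) - H(Y)$$

and in the chain rule for entropy:

$$H(X_1,\ldots,X_n) = \sum_{k=1}^{n} H(X_k \mid X_{k-1},\ldots,X_1)$$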
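The identity H(X|Y) = H(X,Y) − H(Y) gives a direct way to compute conditional entropies from joint entropies, and summing such terms reproduces the chain rule H(X1, ..., Xn) = Σ_k H(Xk | Xk−1, ..., X1). The sketch below assumes a dict-based joint distribution and a hypothetical three-variable example; the function names `entropy`, `marginal` and `conditional_entropy` are illustrative.

```python
import math
from itertools import product

def entropy(pmf):
    """Joint entropy in bits of a dict {outcome_tuple: probability}."""
    return -sum(p * math.log2(p) for p in pmf.values() if p > 0)

def marginal(pmf, keep):
    """Marginalize a joint pmf onto the variable positions listed in `keep`."""
    out = {}
    for outcome, p in pmf.items():
        key = tuple(outcome[i] for i in keep)
        out[key] = out.get(key, 0.0) + p
    return out

def conditional_entropy(pmf, target, given):
    """H(X_target | X_given), computed as H(X_target, X_given) - H(X_given)."""
    h_given = entropy(marginal(pmf, given)) if given else 0.0
    return entropy(marginal(pmf, list(given) + [target])) - h_given

# Hypothetical example: X1 is a fair bit, X2 copies X1 with probability 0.9,
# and X3 = X1 OR X2 (a deterministic function of X1 and X2).
pmf = {}
for x1, x2 in product((0, 1), repeat=2):
    pmf[(x1, x2, x1 | x2)] = 0.5 * (0.9 if x2 == x1 else 0.1)

# Chain rule: H(X1,X2,X3) = H(X1) + H(X2 | X1) + H(X3 | X2, X1).
chain = sum(conditional_entropy(pmf, k, list(range(k))) for k in range(3))
print(entropy(pmf), chain)
```

Because X3 is a deterministic function of X1 and X2, the last term H(X3 | X2, X1) is 0, so all of the joint entropy comes from the first two variables.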

In quantum information theory, the joint entropy is generalized into the joint quantum entropy.