High-dynamic-range video (HDR video) describes video with a dynamic range greater than that of standard-dynamic-range (SDR) video, which uses a conventional gamma curve. SDR video, when using a conventional gamma curve and a bit depth of 8 bits per sample, has a dynamic range of about 6 stops (64:1). When HDR content is displayed on a 2,000 cd/m² display with a bit depth of 10 bits per sample, it has a dynamic range of 200,000:1, or 17.6 stops.
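The stop figures quoted above follow from the fact that one stop is a doubling of light, so the number of stops is the base-2 logarithm of the contrast ratio. A minimal sketch of that arithmetic (the function names are illustrative, not taken from any standard):

```python
import math


def contrast_to_stops(contrast_ratio: float) -> float:
    """Convert a contrast ratio (64 for 64:1) to stops, one stop per doubling."""
    if contrast_ratio <= 0:
        raise ValueError("contrast ratio must be positive")
    return math.log2(contrast_ratio)


def stops_to_contrast(stops: float) -> float:
    """Convert a number of stops back to a contrast ratio."""
    return 2.0 ** stops


print(contrast_to_stops(64))                  # 6.0
print(round(contrast_to_stops(200_000), 1))   # 17.6
```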

Technology

In February and April 1990, Georges Cornuéjols introduced the first real-time HDR camera combining two successively or simultaneously-captured images.

In 1991, Hymatom, a licensee of Cornuéjols, introduced the first commercial video camera that captured multiple images with different exposures in real time and produced an HDR video image, using consumer-grade sensors and cameras.

Also in 1991, Cornuéjols introduced the principle of non-linear image accumulation, HDR+, to increase camera sensitivity: in low-light environments, several successive images are accumulated, increasing the signal-to-noise ratio.

Later, in the early 2000s, several scholarly research efforts used consumer-grade sensors and cameras. A few companies such as RED and Arri have been developing digital sensors capable of a higher dynamic range. RED EPIC-X can capture time-sequential HDRx images with a user-selectable 1–3 stops of additional highlight latitude in the "x" channel. The "x" channel can be merged with the normal channel in post production software. The Arri Alexa camera uses a dual gain architecture to generate an HDR image from two exposures captured at the same time.
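The idea of merging a normally exposed channel with a shorter exposure that protects the highlights can be illustrated with a simple per-pixel blend. The sketch below is only an assumption-laden illustration and not the algorithm used by RED, Arri, or any particular post-production tool: it assumes linear pixel values in the range 0 to 1, a hypothetical `knee` threshold above which the normal exposure is treated as approaching clipping, and a linear cross-fade between the two sources.

```python
def merge_exposures(normal, short, stops_under, knee=0.8):
    """Blend a normal exposure with a shorter one (illustrative only).

    normal, short: sequences of linear pixel values in [0, 1].
    stops_under:   how many stops darker the short exposure was captured.
    knee:          hypothetical level above which the short exposure takes over.
    """
    if len(normal) != len(short):
        raise ValueError("both exposures must have the same number of pixels")
    if not 0.0 <= knee < 1.0:
        raise ValueError("knee must be in [0, 1)")

    gain = 2.0 ** stops_under  # brings the short exposure onto the same scale
    merged = []
    for n, s in zip(normal, short):
        # Weight rises from 0 at the knee to 1 where the normal exposure clips.
        w = min(max((n - knee) / (1.0 - knee), 0.0), 1.0)
        merged.append((1.0 - w) * n + w * s * gain)
    return merged


# The third pixel is clipped in the normal exposure, so its value is
# recovered from the short exposure (0.6 scaled up by two stops).
print(merge_exposures([0.1, 0.5, 1.0], [0.025, 0.125, 0.6], stops_under=2))
```

The merged values can exceed 1.0, which is the point: the result carries highlight detail that a single exposure could not hold.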

With the advent of low-cost consumer digital cameras, many amateurs began posting tone-mapped HDR time-lapse videos on the Internet, essentially a sequence of still photographs in quick succession. In 2010, the independent studio Soviet Montage produced an example of HDR video from disparately exposed video streams using a beam splitter and consumer-grade HD video cameras. Similar methods were described in the academic literature in 2001 and 2007.

Modern movies have often been filmed with cameras featuring a higher dynamic range, and legacy movies can be upgraded even if manual intervention would be needed for some frames (as when old black-and-white films are upgraded to color). Also, special effects, especially those in which real and synthetic footage are seamlessly mixed, require both HDR shooting and rendering. HDR video is also needed in applications that demand high accuracy in capturing temporal aspects of changes in the scene. This is important in the monitoring of some industrial processes such as welding, in predictive driver assistance systems in the automotive industry, in surveillance video systems, and in other applications. HDR video can also be considered as a way to speed up image acquisition in applications that need a large number of static HDR images, for example in image-based methods in computer graphics.

OpenEXR was created in 1999 by Industrial Light and Magic (ILM) and released in 2003 as an open source software library. OpenEXR is used for film and television production.

With the release in 2008 of some TV sets with enhanced dynamic range, broadcasting HDR video was expected to become important, depending on standardization. For this particular application, having intelligent TV sets enhance the existing SDR/LDR video signal to HDR was seen as a viable near-term solution. In 2016, TV sets were released that supported HDR conversion of SDR video under company-specific names such as Samsung's HDR+ and Technicolor SA's HDR Intelligent Tone Management.

Academy Color Encoding System (ACES) was created by the Academy of Motion Picture Arts and Sciences and released in December 2014. ACES is a complete workflow system that supports both HDR and wide color gamut (WCG).

Video interfaces that support at least one HDR format include HDMI 2.0a, which was released on April 8, 2015, and DisplayPort 1.4, which was released on March 1, 2016. On December 12, 2016, HDMI announced that Hybrid Log-Gamma (HLG) support had been added to the HDMI 2.0b standard. HDMI 2.1 was officially announced on January 4, 2017, and added support for Dynamic HDR, which is dynamic metadata that allows for changes on a scene-by-scene or frame-by-frame basis. The Society of Motion Picture and Television Engineers (SMPTE) has created a standard for dynamic metadata, SMPTE ST 2094 or Dynamic Metadata for Color Volume Transform (DMCVT). SMPTE ST 2094 was published in 2016 as six parts and includes four applications from Dolby, Philips, Samsung, and Technicolor.

HDR10

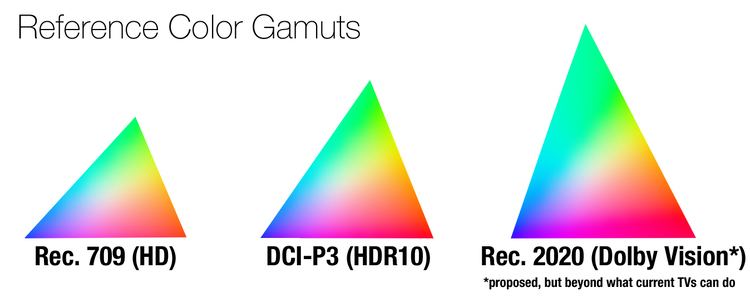

On August 27, 2015, the Consumer Technology Association announced the HDR10 Media Profile, more commonly known as HDR10, which uses the Rec. 2020 color space, a bit depth of 10 bits, and the SMPTE ST 2084 Perceptual Quantizer (PQ), a non-linear electro-optical transfer function (EOTF) developed by Dolby Laboratories. It uses SMPTE ST 2086 "Mastering Display Color Volume" static metadata to send color calibration data of the mastering display, as well as MaxFALL (Maximum Frame Average Light Level) and MaxCLL (Maximum Content Light Level) static values. HDR10 is an open standard supported by a wide variety of companies, including TV manufacturers such as LG, Samsung, Sharp, Sony, and Vizio, as well as Sony Interactive Entertainment and Microsoft, which support HDR10 on their PlayStation 4 and Xbox One video game console platforms respectively (the latter only on the Xbox One S hardware revision released in 2016).
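To make the role of the PQ transfer function concrete, the following sketch implements the EOTF and its inverse using the constants published in SMPTE ST 2084, with a peak luminance of 10,000 cd/m². The function names and the normalised signal range of 0 to 1 are choices made for this illustration; quantisation to 10-bit code values and the narrow-range scaling used in practice are deliberately left out.

```python
# Constants as defined in SMPTE ST 2084.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32
PEAK_LUMINANCE = 10000.0  # cd/m², the top of the PQ range


def pq_eotf(signal: float) -> float:
    """Map a normalised non-linear PQ signal in [0, 1] to luminance in cd/m²."""
    if not 0.0 <= signal <= 1.0:
        raise ValueError("signal must be in [0, 1]")
    p = signal ** (1.0 / M2)
    return PEAK_LUMINANCE * (max(p - C1, 0.0) / (C2 - C3 * p)) ** (1.0 / M1)


def pq_inverse_eotf(luminance: float) -> float:
    """Map luminance in cd/m² (0 to 10,000) to a normalised PQ signal."""
    if not 0.0 <= luminance <= PEAK_LUMINANCE:
        raise ValueError("luminance must be in [0, 10000]")
    y = (luminance / PEAK_LUMINANCE) ** M1
    return ((C1 + C2 * y) / (1.0 + C3 * y)) ** M2


print(pq_eotf(1.0))            # 10000.0, full signal maps to peak luminance
print(pq_inverse_eotf(100.0))  # about 0.508: 100 cd/m² sits near mid-signal
```

The second value shows why the curve is called perceptual: roughly half of the signal range is spent on luminance levels below 100 cd/m², where the eye is most sensitive to small differences.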

Dolby Vision

Dolby Vision is an HDR format from Dolby Laboratories that can be optionally supported by Ultra HD Blu-ray discs and streaming video services. Dolby Vision is a proprietary format, and Dolby SVP of Business Giles Baker has stated that the royalty cost for Dolby Vision is less than $3 per TV. Dolby Vision includes the Perceptual Quantizer (SMPTE ST 2084) electro-optical transfer function, up to 4K resolution, a color depth of up to 12 bits, up to 10,000-nit maximum brightness (mastered to 4,000 nits in practice), and a wide-gamut color space (ITU-R Rec. 2020 and 2100). It can encode mastering display colorimetry information using static metadata (SMPTE ST 2086) and provide dynamic metadata (SMPTE ST 2094) for each scene. Examples of Ultra HD (UHD) TVs that support Dolby Vision include models from LG, TCL, and Vizio, although their displays are only capable of 10-bit color and 800 to 1,000 nits of luminance.

Hybrid Log-Gamma

Hybrid Log-Gamma (HLG) is an HDR standard that was jointly developed by the BBC and NHK. The HLG standard is royalty-free and is compatible with SDR displays. HLG is supported by HDMI 2.0b, HEVC, and VP9, and by video services such as Freeview Play and YouTube.
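The "hybrid" in the name refers to the shape of the transfer curve, which can be sketched as follows. This is a minimal illustration of the HLG opto-electronic transfer function (OETF) using the constants given in ITU-R Rec. 2100, operating on a normalised linear scene value between 0 and 1; the function name is illustrative and display-side processing is not covered.

```python
import math

# Constants from the HLG definition in ITU-R Rec. 2100.
A = 0.17883277
B = 1.0 - 4.0 * A                   # 0.28466892
C = 0.5 - A * math.log(4.0 * A)     # 0.55991073


def hlg_oetf(scene_linear: float) -> float:
    """Map normalised linear scene light in [0, 1] to a non-linear HLG signal."""
    if not 0.0 <= scene_linear <= 1.0:
        raise ValueError("scene_linear must be in [0, 1]")
    if scene_linear <= 1.0 / 12.0:
        # Lower part of the range: a square-root (gamma-like) curve.
        return math.sqrt(3.0 * scene_linear)
    # Upper part of the range: a logarithmic curve for the highlights.
    return A * math.log(12.0 * scene_linear - B) + C


for value in (0.0, 1.0 / 12.0, 0.5, 1.0):
    print(f"{value:.4f} -> {hlg_oetf(value):.4f}")
```

The lower half of the signal range follows a square-root curve similar to a conventional gamma curve, which is the property behind the compatibility with SDR displays, while the logarithmic upper half carries the extra highlight range.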

SL-HDR1

SL-HDR1 is an HDR standard that was jointly developed by STMicroelectronics, Philips International B.V., CableLabs, and Technicolor R&D France. It was standardised as ETSI TS 103 433 in August 2016. SL-HDR1 provides direct backwards compatibility by using metadata to reconstruct an HDR signal from an SDR video stream, which can be delivered using SDR distribution networks and services already in place. SL-HDR1 allows for HDR rendering on HDR devices and SDR rendering on SDR devices using a single-layer video stream. The HDR reconstruction metadata can be added to either HEVC or AVC using a supplemental enhancement information (SEI) message.

ITU-R Rec. 2100

Rec. 2100 is a technical recommendation by ITU-R for production and distribution of HDR content using 1080p or UHD resolution, 10-bit or 12-bit color, HLG or PQ transfer functions, and the wide-gamut Rec. 2020 color space.

UHD Phase A

UHD Phase A is a set of guidelines from the Ultra HD Forum for distribution of SDR and HDR content using Full HD 1080p and 4K UHD resolutions. It requires a color depth of 10 bits per sample, a color gamut of Rec. 709 or Rec. 2020, a frame rate of up to 60 fps, a display resolution of 1080p or 2160p, and either standard dynamic range (SDR) or high dynamic range that uses Hybrid Log-Gamma (HLG) or Perceptual Quantizer (PQ) transfer functions. UHD Phase A defines HDR as having a dynamic range of at least 13 stops (2^13 = 8192:1) and WCG as a color gamut that is wider than Rec. 709. UHD Phase A consumer devices are compatible with HDR10 requirements and will be able to process the Rec. 2020 color space and HLG or PQ at 10 bits.

History

On April 8, 2015, the HDMI Forum released version 2.0a of the HDMI Specification to enable transmission of HDR. The specification references CEA-861.3, which in turn references the Perceptual Quantizer (PQ), which was standardized as SMPTE ST 2084. The previous HDMI 2.0 version already supported the Rec. 2020 color space.

On June 24, 2015, Amazon Video was the first streaming service to offer HDR video using HDR10 Media Profile video.

On November 17, 2015, Vudu announced that they had started offering titles in Dolby Vision.

On March 1, 2016, the Blu-ray Disc Association released Ultra HD Blu-ray with mandatory support for HDR10 Media Profile video and optional support for Dolby Vision.

On April 9, 2016, Netflix started offering both HDR10 Media Profile video and Dolby Vision.

On July 6, 2016, the International Telecommunication Union (ITU) announced Rec. 2100, which defines two HDR transfer functions, HLG and PQ.

On July 29, 2016, SKY Perfect JSAT Group announced that on October 4 they would start the world's first 4K HDR broadcasts using HLG.

On September 9, 2016, Google announced Android TV 7.0, which supports Dolby Vision, HDR10, and HLG.

On September 26, 2016, Roku announced that the Roku Premiere+ and Roku Ultra would support HDR using HDR10.

On November 7, 2016, Google announced that YouTube would start streaming HDR videos, which can be encoded with HLG or PQ.

On November 17, 2016, the Digital Video Broadcasting (DVB) Steering Board approved UHD-1 Phase 2 with an HDR solution that supports Hybrid Log-Gamma (HLG) and Perceptual Quantizer (PQ). The specification has been published as DVB Bluebook A157 and will be published by ETSI as TS 101 154 v2.3.1.

On January 2, 2017, LG Electronics USA announced that all of LG’s SUPER UHD TV models now support a variety of HDR technologies, including Dolby Vision, HDR10, and HLG (Hybrid Log Gamma), and are ready to support Advanced HDR by Technicolor.