
Graphics Core Next (GCN) is the codename for both a series of microarchitectures and an instruction set. GCN was developed by AMD for its GPUs as the successor to the TeraScale microarchitecture and instruction set. The first product featuring GCN was launched in 2011.

Contents

- Instruction set

- Microarchitectures

- Graphics Command Processor

- Asynchronous Compute Engine

- Scheduler

- Geometric processor

- Compute Units

- CU Scheduler

- SIMD Vector Unit

- Audio and video acceleration SIP blocks

- Unified virtual memory

- Heterogeneous System Architecture (HSA)

- Hardware Schedulers

- Primitive Discard Accelerator

- Graphics Core Next (Southern Islands, HD 7700+/HD 8000/Rx 200/Rx 300/Rx 400 Series)

- ZeroCore Power

- Chips

- GCN 2nd Generation (Sea Islands, HD 7790, HD 8770, R7 260/260X, R9 290/290X, R9 295X2, R7 360, R9 390/390X Series)

- GCN 3rd Generation (Volcanic Islands, R9 285, R9 380/380X and Fury/Nano Series)

- GCN 4th Generation (Arctic Islands, RX 400 Series)

- GCN 5th Generation (Vega)

- GCN 6th Generation (Navi)

- References

GCN is used in 28 nm and 14 nm graphics chips in the Radeon HD 7700–7900, HD 8000, R9 240–290, R9 300, and Radeon 400 series of AMD graphics cards. GCN is also used in the AMD Accelerated Processing Units code-named "Temash", "Kabini", "Kaveri", "Carrizo", "Beema" and "Mullins", as well as in Liverpool (PlayStation 4) and Durango (Xbox One).

GCN is a RISC SIMD (or rather SIMT) microarchitecture, in contrast to the VLIW SIMD architecture of TeraScale. GCN requires considerably more transistors than TeraScale but offers advantages for GPGPU computation: it makes the compiler simpler and should also lead to better utilization. GCN implements HyperZ.

Instruction set

The GCN instruction set is owned by AMD, as is the x86-64 instruction set. It was developed specifically for GPUs (and GPGPU) and, for example, has no micro-operation for division.

Documentation of the instruction set is available.

An LLVM code generator (i.e. a compiler back-end) is available for the GCN instruction set. It is used e.g. by Mesa 3D.

MIAOW is an open-source RTL implementation of the AMD Southern Islands GPGPU instruction set (aka Graphics Core Next).

In November 2015, AMD announced the "Boltzmann Initiative", which is intended to enable the porting of CUDA-based applications to a common C++ programming model.

At "Super Computing 15", AMD showed its Heterogeneous Compute Compiler (HCC), a headless Linux driver and HSA runtime infrastructure for cluster-class high-performance computing (HPC), and the Heterogeneous-compute Interface for Portability (HIP) tool for porting CUDA-based applications to a common C++ programming model.

Microarchitectures

As of February 2017, the family of microarchitectures implementing the identically named instruction set "Graphics Core Next" has seen four iterations. The differences in the instruction set are rather minimal, and the microarchitectures do not differ much from one another.

Graphics Command Processor

The "Graphics Command Processor" is a functional unit of the GCN microarchitecture. Among other tasks, it is responsible for Asynchronous Shaders. The short video "AMD Asynchronous Shaders" visualizes the differences between "multi thread", "preemption" and "Asynchronous Shaders".

Asynchronous Compute Engine

The Asynchronous Compute Engine (ACE) is a distinct functional block serving computing purposes. Its purpose is similar to that of the Graphics Command Processor.

Scheduler

Since the third iteration of GCN, the hardware contains two schedulers: one to schedule wavefronts during shader execution (the CU Scheduler, see below) and a new one to schedule execution of draw and compute queues. The latter helps performance by executing compute operations when the CUs are underutilized because graphics commands are limited by fixed-function pipeline speed or by bandwidth. This functionality is known as Async Compute.

For a given shader, the GPU driver also needs to select a good instruction order to minimize latency. This is done on the CPU and is sometimes referred to as "scheduling".

Geometric processor

The geometry processor contains the Geometry Assembler, the Tessellator and the Vertex Assembler.

The GCN Tessellator of the geometry processor is capable of doing tessellation in hardware as defined by Direct3D 11 and OpenGL 4.5 (see AMD, 21-01-2017).

The GCN Tessellator is AMD's most current tessellation SIP block; earlier units were ATI TruForm and hardware tessellation in TeraScale.

Compute Units

Each Compute Unit (CU) consists of a CU Scheduler, a Branch & Message Unit, 4 SIMD Vector Units (SIMD-VUs, each 16 lanes wide), 4 VGPR files of 64 KiB each, 1 scalar unit (SU), a 4 KiB GPR file, a local data share of 64 KiB, 4 Texture Filter Units, 16 Texture Fetch Load/Store Units and a 16 KiB L1 cache. Four Compute Units are wired to share a 16 KiB instruction cache and a 32 KiB scalar data cache. These are backed by the L2 cache. A SIMD-VU operates on 16 elements at a time (per cycle), while an SU operates on one element at a time (one per cycle). In addition, the SU handles some other operations such as branching.

Every SIMD-VU has some private memory where it stores its registers. There are two types of registers: scalar registers (s0, s1, etc.), which hold one 4-byte number each, and vector registers (v0, v1, etc.), which each represent a set of 64 4-byte numbers. An operation on vector registers is performed in parallel on all 64 numbers, so every such operation works with 64 inputs. For example, a shader works on 64 different pixels at a time; the inputs differ slightly for each pixel, and so does the resulting color.

Every SIMD-VU has room for 512 scalar registers and 256 vector registers.
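The register counts above can be checked against the 64 KiB VGPR file size with a little arithmetic. The following is a minimal sketch that uses only the figures stated in the text; the constant and function names are illustrative and not taken from any AMD tool.

```python
# Figures taken from the text above; names are illustrative only.
VALUES_PER_VECTOR_REGISTER = 64   # one value per thread of a wavefront
BYTES_PER_VALUE = 4               # each register value is a 4-byte number
VECTOR_REGISTERS_PER_SIMD = 256


def vector_register_bytes():
    """Size of one vector register (v0, v1, ...) in bytes."""
    return VALUES_PER_VECTOR_REGISTER * BYTES_PER_VALUE


def vgpr_file_bytes():
    """Storage needed for all vector registers of one SIMD-VU."""
    return VECTOR_REGISTERS_PER_SIMD * vector_register_bytes()


print(vector_register_bytes())       # 256 bytes per vector register
print(vgpr_file_bytes() // 1024)     # 64 (KiB), matching the VGPR file size
```

The result agrees with the 64 KiB VGPR file listed for each SIMD Vector Unit.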

CU Scheduler

The CU scheduler is the hardware functional block that chooses which wavefronts the SIMD-VUs execute. It picks one SIMD-VU per cycle for scheduling. It is not to be confused with other schedulers, in hardware or software.

In all GCN GPUs, a “wavefront” consists of 64 threads; in all Nvidia GPUs, a “warp” consists of 32 threads.

To hide memory latency, AMD assigns multiple wavefronts to each SIMD-VU. The hardware distributes the registers among the wavefronts, and when one wavefront is waiting on a result that lies in memory, the CU Scheduler makes the SIMD-VU work on another wavefront. Wavefronts are assigned per SIMD-VU, and SIMD-VUs do not exchange wavefronts. At most 10 wavefronts can be assigned to a SIMD-VU (thus 40 per CU).

AMD CodeXL shows tables relating the number of SGPRs and VGPRs to the number of wavefronts. Basically, the number of SGPRs available per wavefront is min(104, 512/numwavefronts) and the number of VGPRs is 256/numwavefronts.

Note that, in the context of SSE instructions, this most basic level of parallelism is often called the "vector width". The vector width is characterized by the total number of bits in the vector.
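The two formulas can be turned into a small occupancy calculator. This is a rough sketch under stated assumptions: it uses integer division and ignores any register allocation granularity that real hardware or CodeXL may apply, and the function names are hypothetical.

```python
MAX_WAVEFRONTS_PER_SIMD = 10
SGPRS_PER_SIMD = 512
VGPRS_PER_SIMD = 256
MAX_SGPRS_PER_WAVEFRONT = 104


def register_budget(num_wavefronts):
    """Return (SGPRs, VGPRs) available to each wavefront on one SIMD-VU."""
    if not 1 <= num_wavefronts <= MAX_WAVEFRONTS_PER_SIMD:
        raise ValueError("a SIMD-VU holds between 1 and 10 wavefronts")
    sgprs = min(MAX_SGPRS_PER_WAVEFRONT, SGPRS_PER_SIMD // num_wavefronts)
    vgprs = VGPRS_PER_SIMD // num_wavefronts
    return sgprs, vgprs


def max_wavefronts(sgprs_used, vgprs_used):
    """Largest wavefront count whose per-wavefront budget covers the shader."""
    for n in range(MAX_WAVEFRONTS_PER_SIMD, 0, -1):
        sgprs, vgprs = register_budget(n)
        if sgprs_used <= sgprs and vgprs_used <= vgprs:
            return n
    return 0  # the shader needs more registers than a single wavefront can get


print(register_budget(10))      # (51, 25)
print(max_wavefronts(40, 84))   # 3
```

In this model a shader that uses 84 VGPRs can keep only 3 wavefronts resident per SIMD-VU, which leaves the CU Scheduler fewer wavefronts to switch to while one is waiting on memory.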

SIMD Vector Unit

Each SIMD-VU has an instruction buffer for 10 wavefronts. Because a wavefront has 64 threads and a SIMD-VU is 16 lanes wide, it takes 4 cycles to execute one instruction for a whole wavefront.
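The 4-cycle figure follows from the wavefront size and the SIMD width, and it lines up with the CU scheduler picking one of the four SIMD-VUs per cycle. The sketch below illustrates this; the strict round-robin order is an assumption made for the example, and the names are hypothetical.

```python
WAVEFRONT_SIZE = 64   # threads per wavefront
SIMD_LANES = 16       # elements processed per cycle by one SIMD-VU
SIMDS_PER_CU = 4


def cycles_per_wavefront_instruction():
    """Cycles a 16-lane SIMD-VU needs to process all 64 threads once."""
    return WAVEFRONT_SIZE // SIMD_LANES


def issue_schedule(num_cycles):
    """SIMD-VU index picked in each cycle, assuming round-robin selection."""
    return [cycle % SIMDS_PER_CU for cycle in range(num_cycles)]


print(cycles_per_wavefront_instruction())  # 4
print(issue_schedule(8))                   # [0, 1, 2, 3, 0, 1, 2, 3]
```

Under this assumption each SIMD-VU is revisited every fourth cycle, which is exactly when it has finished the previous wavefront instruction.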

Audio and video acceleration SIP blocks

Many implementations of GCN are accompanied by several of AMD's other ASIC blocks, including but not limited to the Unified Video Decoder, the Video Coding Engine and AMD TrueAudio.

Unified virtual memory

In a preview in 2011, AnandTech wrote about the unified virtual memory, supported by Graphics Core Next.

Heterogeneous System Architecture (HSA)

Some of the specific HSA features implemented in the hardware need support from the operating system's kernel (its subsystems) and/or from specific device drivers. For example, in July 2014 AMD published a set of 83 patches to be merged into Linux kernel mainline 3.17 to support its Graphics Core Next-based Radeon graphics cards. The special driver, titled "HSA kernel driver", resides in the directory /drivers/gpu/hsa, while the DRM graphics device drivers reside in /drivers/gpu/drm; it augments the already existing DRM driver for Radeon cards. This very first implementation focuses on a single "Kaveri" APU and works alongside the existing Radeon kernel graphics driver (kgd).

Hardware Schedulers

Hardware schedulers (HWS) perform scheduling and offload the assignment of compute queues to the ACEs from the driver to hardware. They buffer these queues until there is at least one empty queue slot in at least one ACE; the HWS then immediately assigns buffered queues to the ACEs until all slots are full or there are no more queues that can safely be assigned. Part of the scheduling work is handling prioritized queues, which allow critical tasks to run at a higher priority than other tasks without requiring the lower-priority tasks to be preempted. The tasks run concurrently: the high-priority tasks are scheduled to occupy the GPU as much as possible, while other tasks use the resources that the high-priority tasks leave unused. Hardware schedulers are essentially Asynchronous Compute Engines that lack dispatch controllers. They were first introduced in the fourth-generation GCN microarchitecture but were already present in the third-generation GCN microarchitecture for internal testing purposes. A driver update has enabled the hardware schedulers in third-generation GCN parts for production use.
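The buffering behaviour described above can be modelled in a few lines. This is a toy sketch, not AMD's implementation: the class names, the number of ACEs and the number of queue slots per ACE are all hypothetical, and queue priorities are not modelled.

```python
from collections import deque


class Ace:
    """Toy Asynchronous Compute Engine with a fixed number of queue slots."""

    def __init__(self, slots):
        self.slots = slots
        self.queues = []

    def free_slots(self):
        return self.slots - len(self.queues)


class HardwareScheduler:
    """Buffers compute queues and hands them to ACEs as slots become free."""

    def __init__(self, aces):
        self.aces = aces
        self.pending = deque()

    def submit(self, queue_name):
        self.pending.append(queue_name)
        self.assign()

    def assign(self):
        # Assign buffered queues until every slot is full or nothing is pending.
        while self.pending:
            ace = next((a for a in self.aces if a.free_slots() > 0), None)
            if ace is None:
                break
            ace.queues.append(self.pending.popleft())

    def retire(self, queue_name):
        # A finished queue frees its slot, which may unblock a buffered queue.
        for ace in self.aces:
            if queue_name in ace.queues:
                ace.queues.remove(queue_name)
                break
        self.assign()


hws = HardwareScheduler([Ace(slots=2), Ace(slots=2)])
for name in ["q0", "q1", "q2", "q3", "q4"]:
    hws.submit(name)
print(list(hws.pending))        # ['q4'] is buffered because all slots are full
hws.retire("q1")
print(hws.aces[0].queues)       # ['q0', 'q4']
```

The driver in this model only calls `submit`; deciding when and where a queue lands is left to the scheduler object, which mirrors the offloading described in the text.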

Primitive Discard Accelerator

This unit discards degenerate triangles before they enter the vertex shader and triangles that do not cover any fragments before they enter the fragment shader. This unit was introduced with the fourth generation GCN microarchitecture.
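The two discard conditions can be illustrated with simple 2D geometry. The sketch below is only an illustration of the idea, not the hardware's actual logic: it assumes screen-space vertices, pixel centers at half-integer coordinates, and a conservative bounding-box test for the "covers no fragments" case. All function names are hypothetical.

```python
import math


def signed_area_2x(a, b, c):
    """Twice the signed area of triangle abc (2D cross product)."""
    return (b[0] - a[0]) * (c[1] - a[1]) - (c[0] - a[0]) * (b[1] - a[1])


def is_degenerate(a, b, c):
    """A triangle with zero area can never produce a fragment."""
    return signed_area_2x(a, b, c) == 0


def misses_all_pixel_centers(a, b, c):
    """True if the bounding box contains no pixel center (assumed at i + 0.5).

    This is conservative: a triangle may still miss every center even when
    its bounding box contains one, in which case it is simply not discarded.
    """
    for axis in (0, 1):
        lo = min(a[axis], b[axis], c[axis])
        hi = max(a[axis], b[axis], c[axis])
        first_center = math.ceil(lo - 0.5) + 0.5  # smallest center >= lo
        if first_center > hi:
            return True
    return False


def should_discard(a, b, c):
    return is_degenerate(a, b, c) or misses_all_pixel_centers(a, b, c)


print(should_discard((0, 0), (1, 1), (2, 2)))              # True: zero area
print(should_discard((0.6, 0.6), (0.9, 0.6), (0.6, 0.9)))  # True: between centers
print(should_discard((0, 0), (4, 0), (0, 4)))              # False
```

Discarding such triangles early saves work in the later pipeline stages, since they would not contribute to the final image.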

Graphics Core Next (Southern Islands, HD 7700+/HD 8000/Rx 200/Rx 300/Rx 400 Series)

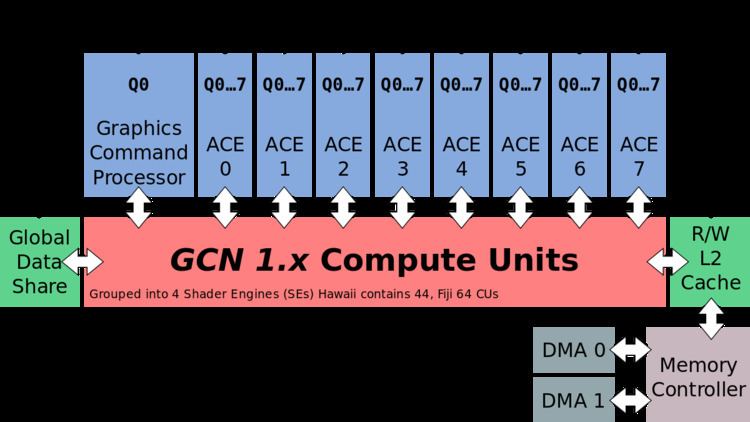

The Graphics Core Next (codenamed "Southern Islands") microarchitecture combines 64 shader processors with 4 TMUs and 1 ROP into a compute unit (CU). Asynchronous Compute Engines (ACEs) control computation and dispatching.

ZeroCore Power

ZeroCore Power is a long-idle power-saving technology that shuts off functional units of the GPU when they are not in use. AMD ZeroCore Power technology supplements AMD PowerTune.

Chips

Discrete GPUs (Southern Islands family):

GCN 2nd Generation (Sea Islands, HD 7790, HD 8770, R7 260/260X, R9 290/290X, R9 295X2, R7 360, R9 390/390X Series)

GCN 2nd generation was introduced with Radeon HD 7790 and is also found in Radeon HD 8770, R7 260/260X, R9 290/290X, R9 295X2, R7 360, R9 390/390X, as well as Steamroller-based Desktop Kaveri APUs and Mobile Kaveri APUs and in the Puma-based "Beema" and "Mullins" APUs. It has multiple advantages over the original GCN, including AMD TrueAudio and a revised version of AMD PowerTune technology.

GCN 2nd generation introduced an entity called "Shader Engine" (SE). A Shader Engine comprises one geometry processor, up to 11 CUs (Hawaii chip), rasterizers, ROPs, and L1 cache. Not part of a Shader Engine are the Graphics Command Processor, the 8 ACEs, the L2 cache and memory controllers, the audio and video accelerators, the display controllers, the 2 DMA controllers and the PCIe interface.

The A10-7850K "Kaveri" contains 8 CUs (compute units) and 8 Asynchronous Compute Engines (ACEs) for independent scheduling and work item dispatching.
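Since each compute unit combines 64 shader processors, the CU counts above translate directly into shader counts. The following worked example is a small sketch; the figure of four Shader Engines for a full Hawaii chip is not stated in the text above and is an assumption of this example.

```python
SHADER_PROCESSORS_PER_CU = 64   # stated for the GCN compute unit


def shader_processors(compute_units):
    """Total shader processors for a given number of compute units."""
    return compute_units * SHADER_PROCESSORS_PER_CU


# A10-7850K "Kaveri": 8 CUs.
kaveri = shader_processors(8)

# Hawaii: up to 11 CUs per Shader Engine; 4 Shader Engines is assumed here.
HAWAII_SHADER_ENGINES = 4
hawaii = shader_processors(HAWAII_SHADER_ENGINES * 11)

print(kaveri)   # 512
print(hawaii)   # 2816
```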

At AMD Developer Summit (APU) in November 2013 Michael Mantor presented the Radeon R9 290X.

Chips

Discrete GPUs (Sea Islands family):

Integrated into APUs:

GCN 3rd Generation (Volcanic Islands, R9 285, R9 380/380X and Fury/Nano Series)

GCN 3rd generation was introduced in 2014 with the Radeon R9 285 and R9 M295X, which have the "Tonga" GPU. It features improved tessellation performance, lossless delta color compression to reduce memory bandwidth usage, an updated and more efficient instruction set, a new high-quality scaler for video, and a new multimedia engine (video encoder/decoder). Delta color compression is supported in Mesa. However, double-precision performance is worse than in the previous generation.
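The general idea behind lossless delta compression can be shown with plain delta encoding: store one base value and then only the differences between neighbouring values, which are usually small for smooth image regions. This is a generic sketch of the technique, not AMD's hardware format; the function names are hypothetical.

```python
from itertools import accumulate


def delta_encode(values):
    """Keep the first value and replace the rest by differences to the previous one."""
    if not values:
        return []
    deltas = [values[0]]
    for previous, current in zip(values, values[1:]):
        deltas.append(current - previous)
    return deltas


def delta_decode(deltas):
    """Rebuild the original values with a running sum."""
    return list(accumulate(deltas))


pixels = [200, 201, 201, 203, 202]   # one color channel of neighbouring pixels
encoded = delta_encode(pixels)
print(encoded)                       # [200, 1, 0, 2, -1]
print(delta_decode(encoded) == pixels)  # True, so the encoding is lossless
```

The small deltas need fewer bits than the full values, which is where the bandwidth saving comes from when neighbouring pixels are similar.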

Chips

Discrete GPUs:

Integrated into APUs:

GCN 4th Generation (Arctic Islands, RX 400 Series)

GPUs of the Arctic Islands family were introduced in Q2 2016 with the AMD Radeon 400 series graphics cards, based on the Polaris architecture. All Polaris-based chips are produced on the 14 nm FinFET process. The fourth-generation GCN instruction set architecture is compatible with the third generation. The design is optimized for the 14 nm FinFET process, enabling higher GPU clock speeds than the third GCN generation.

Chips

Discrete GPUs:

Discrete (stand alone) GPU chips (dGPU)

Integrated into APUs:

GCN 5th Generation (Vega)

AMD began releasing details of its next generation of the GCN architecture in January 2017. The new design is expected to increase instructions per clock, reach higher clock speeds, support HBM2 and offer a larger memory address space. Additionally, the new chips are expected to include improvements in the rasterisation and render output units. The shaders are heavily modified from the previous generations to support packed math for 8-bit, 16-bit and 32-bit numbers. This gives a significant performance advantage when lower precision is acceptable (for example, processing two half-precision numbers at the same rate as one single-precision number).

It has been suspected that Nvidia introduced tile-based rasterization and binning with Maxwell, and that this was the biggest reason for Maxwell's efficiency increase. In January 2017, AnandTech assumed that Vega would finally catch up with Nvidia in energy-efficiency optimizations thanks to the new "Draw Stream Binning Rasterizer" to be introduced with Vega.
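The packed-math idea rests on two half-precision values fitting into the space of one single-precision value, so one 32-bit operation slot can carry two 16-bit operations. The sketch below demonstrates the storage side with Python's standard `struct` module; the helper names are hypothetical, and the element-wise addition is emulated in software rather than being a model of the Vega hardware.

```python
import struct


def pack_two_halves(a, b):
    """Store two half-precision floats in one 4-byte word."""
    return struct.pack("<2e", a, b)


def unpack_two_halves(word):
    return struct.unpack("<2e", word)


def packed_add(x, y):
    """Add two packed words element-wise, emulating one packed operation."""
    a0, a1 = unpack_two_halves(x)
    b0, b1 = unpack_two_halves(y)
    return pack_two_halves(a0 + b0, a1 + b1)


single = struct.pack("<f", 1.5)          # one single-precision number
packed = pack_two_halves(1.5, -2.0)      # two half-precision numbers
print(len(single), len(packed))          # 4 4

result = packed_add(packed, pack_two_halves(0.5, 4.0))
print(unpack_two_halves(result))         # (2.0, 2.0)
```

Both representations occupy 4 bytes, so a register that holds one single-precision value per thread can hold two half-precision values instead.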

GCN 6th Generation (Navi)

Navi is expected in 2018 and will offer "Next Generation Memory" as well as improved scalability.