| ||

In evolutionary computation, differential evolution (DE) is a method that optimizes a problem by iteratively trying to improve a candidate solution with regard to a given measure of quality. Such methods are commonly known as metaheuristics as they make few or no assumptions about the problem being optimized and can search very large spaces of candidate solutions. However, metaheuristics such as DE do not guarantee an optimal solution is ever found.

Contents

DE is used for multidimensional real-valued functions but does not use the gradient of the problem being optimized, which means DE does not require for the optimization problem to be differentiable as is required by classic optimization methods such as gradient descent and quasi-newton methods. DE can therefore also be used on optimization problems that are not even continuous, are noisy, change over time, etc.

DE optimizes a problem by maintaining a population of candidate solutions and creating new candidate solutions by combining existing ones according to its simple formulae, and then keeping whichever candidate solution has the best score or fitness on the optimization problem at hand. In this way the optimization problem is treated as a black box that merely provides a measure of quality given a candidate solution and the gradient is therefore not needed.

DE is originally due to Storn and Price. Books have been published on theoretical and practical aspects of using DE in parallel computing, multiobjective optimization, constrained optimization, and the books also contain surveys of application areas. Excellent surveys on the multi-faceted research aspects of DE can be found in journal articles like.

Algorithm

A basic variant of the DE algorithm works by having a population of candidate solutions (called agents). These agents are moved around in the search-space by using simple mathematical formulae to combine the positions of existing agents from the population. If the new position of an agent is an improvement it is accepted and forms part of the population, otherwise the new position is simply discarded. The process is repeated and by doing so it is hoped, but not guaranteed, that a satisfactory solution will eventually be discovered.

Formally, let

Let

Parameter selection

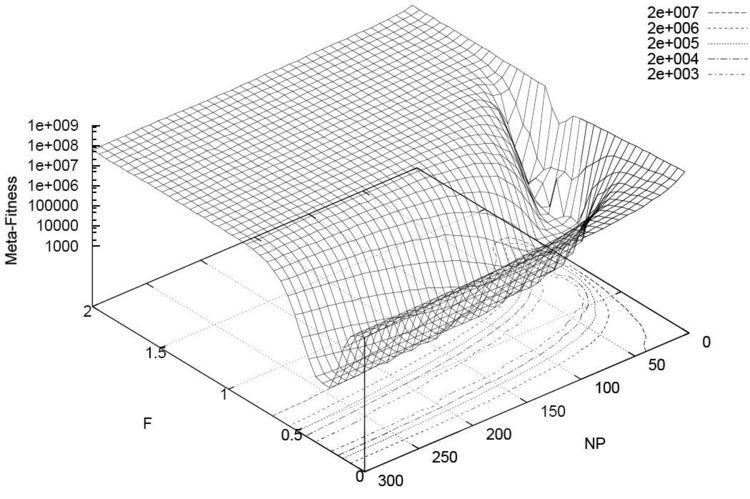

The choice of DE parameters

Variants

Variants of the DE algorithm are continually being developed in an effort to improve optimization performance. Many different schemes for performing crossover and mutation of agents are possible in the basic algorithm given above, see e.g. More advanced DE variants are also being developed with a popular research trend being to perturb or adapt the DE parameters during optimization, see e.g. Price et al., Liu and Lampinen, Qin and Suganthan, Civicioglu and Brest et al. There are also some work in making a hybrid optimization method using DE combined with other optimizers.

Sample code

The following is a specific pseudocode implementation of differential evolution, written similar to the Java language. For more generalized pseudocode, please see the listing in the Algorithm section above.