
Computational sociology

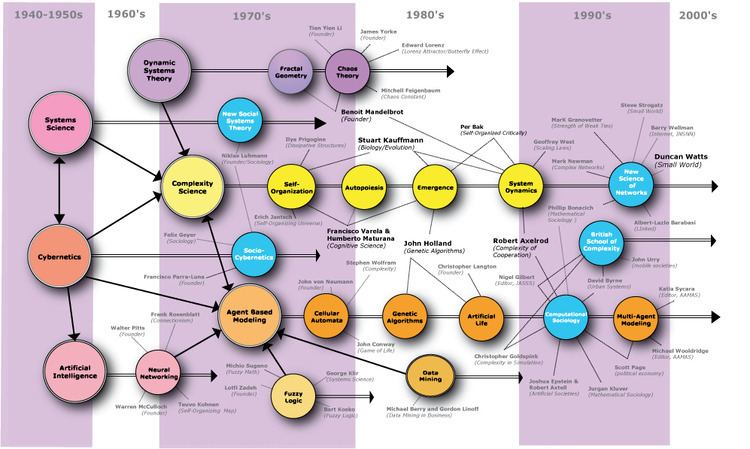

Computational sociology is a branch of sociology that uses computationally intensive methods to analyze and model social phenomena. Using computer simulations, artificial intelligence, complex statistical methods, and analytic approaches like social network analysis, computational sociology develops and tests theories of complex social processes through bottom-up modeling of social interactions.

Contents

- Computational sociology

- Systems theory and structural functionalism

- Macrosimulation and microsimulation

- Cellular automata and agent-based modeling

- Data mining and social network analysis

- Computational content analysis

- Journals and academic publications

- Associations conferences and workshops

- Academic programs departments and degrees

- USA

- Europe

- Asia

- References

Computational sociology involves the understanding of social agents, the interaction among these agents, and the effect of these interactions on the social aggregate. Although the subject matter and methodologies in social science differ from those in natural science or computer science, several of the approaches used in contemporary social simulation originated in fields such as physics and artificial intelligence. Conversely, some approaches that originated in the social sciences have been imported into the natural sciences, such as measures of network centrality from social network analysis and network science.

In the relevant literature, computational sociology is often related to the study of social complexity. Social complexity concepts such as complex systems, non-linear interconnection among macro and micro processes, and emergence have entered the vocabulary of computational sociology. A practical and well-known example is the construction of a computational model in the form of an "artificial society", by which researchers can analyze the structure of a social system.

Systems theory and structural functionalism

In the post-war era, Vannevar Bush's differential analyser, John von Neumann's cellular automata, Norbert Wiener's cybernetics, and Claude Shannon's information theory became influential paradigms for modeling and understanding complexity in technical systems. In response, scientists in disciplines such as physics, biology, electronics, and economics began to articulate a general theory of systems in which all natural and physical phenomena are manifestations of interrelated elements in a system that has common patterns and properties. Following Émile Durkheim's call to analyze complex modern society sui generis, post-war structural functionalist sociologists such as Talcott Parsons seized upon these theories of systematic and hierarchical interaction among constituent components to attempt to generate grand unified sociological theories, such as the AGIL paradigm. Sociologists such as George Homans argued that sociological theories should be formalized into hierarchical structures of propositions and precise terminology from which other propositions and hypotheses could be derived and operationalized into empirical studies. Because computer programs had been used to prove mathematical theorems as early as 1956, an approach that later settled the four color theorem in 1976, social scientists and systems dynamicists anticipated that similar computational approaches could "solve" and "prove" analogously formalized problems and theorems of social structures and dynamics.

Macrosimulation and microsimulation

By the late 1960s and early 1970s, social scientists used increasingly available computing technology to perform macro-simulations of control and feedback processes in organizations, industries, cities, and global populations. These models used differential equations to predict population distributions as holistic functions of other systematic factors such as inventory control, urban traffic, migration, and disease transmission. Simulations of social systems received substantial attention in the mid-1970s after the Club of Rome published reports predicting global environmental catastrophe on the basis of global economy simulations. The inflammatory conclusions also temporarily discredited the nascent field, however, by demonstrating how sensitive the models' results are to the specific quantitative assumptions (backed by little evidence, in the case of the Club of Rome) made about their parameters. As a result of increasing skepticism about employing computational tools to make predictions about macro-level social and economic behavior, social scientists turned their attention toward micro-simulation models, which make forecasts and study policy effects by modeling aggregate changes in the state of individual-level entities rather than changes in distribution at the population level. However, these micro-simulation models did not permit individuals to interact or adapt and were not intended for basic theoretical research.
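The equation-based style of macro-simulation, and its sensitivity to parameter assumptions, can be illustrated with a minimal sketch. The example below is an assumption chosen for illustration: it uses the textbook SIR disease-transmission equations integrated with forward Euler steps, not any model from the Club of Rome reports, and the function name `simulate_sir` and all parameter values are hypothetical.

```python
def simulate_sir(beta, gamma, s0=0.99, i0=0.01, days=160, dt=0.1):
    """Integrate the SIR equations with forward Euler steps.

    dS/dt = -beta * S * I
    dI/dt =  beta * S * I - gamma * I
    dR/dt =  gamma * I

    S, I and R are fractions of the whole population, so the model
    tracks aggregates only and never represents an individual.
    Returns the peak infected fraction seen during the run.
    """
    s, i, r = s0, i0, 0.0
    peak = i
    for _ in range(int(days / dt)):
        new_infections = beta * s * i * dt
        recoveries = gamma * i * dt
        s -= new_infections
        i += new_infections - recoveries
        r += recoveries
        peak = max(peak, i)
    return peak


# Illustrative (made-up) parameter values: a modest change in the assumed
# transmission rate changes the predicted epidemic peak substantially.
for beta in (0.2, 0.3):
    print(f"beta={beta}: peak infected fraction = {simulate_sir(beta, 0.1):.3f}")
```

Running the loop with two nearby values of `beta` shows how strongly the headline prediction depends on a single assumed parameter, which is the kind of sensitivity that drew criticism of early macro-simulations.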

Cellular automata and agent-based modeling

The 1970s and 1980s were also a time when physicists and mathematicians were attempting to model and analyze how simple component units, such as atoms, give rise to global properties, such as complex material properties at low temperatures, in magnetic materials, and within turbulent flows. Using cellular automata, scientists were able to specify systems consisting of a grid of cells in which each cell occupied one of a finite number of states and changes between states were governed solely by the states of immediate neighbors. Along with advances in artificial intelligence and microcomputer power, these methods contributed to the development of "chaos theory" and "complexity theory" which, in turn, renewed interest in understanding complex physical and social systems across disciplinary boundaries. Research organizations explicitly dedicated to the interdisciplinary study of complexity were also founded in this era: the Santa Fe Institute was established in 1984 by scientists based at Los Alamos National Laboratory, and the BACH group at the University of Michigan likewise started in the mid-1980s.
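A cellular automaton of this kind can be sketched in a few lines. The example below is a minimal illustration under stated assumptions: a one-dimensional row of two-state cells with wrap-around edges, updated with an elementary rule number (rule 90 by default, in which a cell's next state depends only on its left and right neighbors). The function names `step` and `run` are hypothetical.

```python
def step(cells, rule=90):
    """Advance a one-dimensional, two-state cellular automaton by one step.

    Each cell's next state is looked up from the 8-bit rule number using the
    states of its left neighbor, itself, and its right neighbor. The row
    wraps around, so the first and last cells are neighbors.
    """
    n = len(cells)
    nxt = []
    for k in range(n):
        left, center, right = cells[(k - 1) % n], cells[k], cells[(k + 1) % n]
        index = (left << 2) | (center << 1) | right  # neighborhood as 0..7
        nxt.append((rule >> index) & 1)              # read that bit of the rule
    return nxt


def run(cells, steps, rule=90):
    """Return the list of successive rows, starting with the initial row."""
    history = [list(cells)]
    for _ in range(steps):
        history.append(step(history[-1], rule))
    return history


# Start from a single active cell and print the pattern that unfolds.
width = 31
start = [0] * width
start[width // 2] = 1
for row in run(start, 8):
    print("".join("#" if c else "." for c in row))
```

Even though every cell follows the same local rule, the printed rows show a global pattern that no single cell encodes, which is the sense in which simple components give rise to aggregate structure.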

This cellular automata paradigm gave rise to a third wave of social simulation emphasizing agent-based modeling. Like micro-simulations, these models emphasized bottom-up designs but adopted four key assumptions that diverged from microsimulation: autonomy, interdependency, simple rules, and adaptive behavior. Agent-based models are less concerned with predictive accuracy and instead emphasize theoretical development. In 1981, mathematician and political scientist Robert Axelrod and evolutionary biologist W.D. Hamilton published a major paper in Science titled "The Evolution of Cooperation", which used an agent-based modeling approach to demonstrate how social cooperation based upon reciprocity can be established and stabilized in a prisoner's dilemma game when agents follow simple rules of self-interest. Axelrod and Hamilton demonstrated that individual agents following a simple rule set of (1) cooperate on the first turn and (2) thereafter replicate the partner's previous action were able to develop "norms" of cooperation and sanctioning in the absence of canonical sociological constructs such as demographics, values, religion, and culture as preconditions or mediators of cooperation. Throughout the 1990s, scholars like William Sims Bainbridge, Kathleen Carley, Michael Macy, and John Skvoretz developed multi-agent-based models of generalized reciprocity, prejudice, social influence, and organizational information processing. In 1999, Nigel Gilbert published the first textbook on social simulation, Simulation for the Social Scientist, and established the field's most relevant journal, the Journal of Artificial Societies and Social Simulation.
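The two-part rule described above (cooperate first, then copy the partner's previous move) can be sketched as a repeated prisoner's dilemma. This is a minimal illustration, not a reconstruction of Axelrod and Hamilton's analysis: the payoff values (3 for mutual cooperation, 1 for mutual defection, 5 and 0 when one side defects) are conventional textbook numbers assumed here, and the function names are hypothetical.

```python
# Payoffs as (player A, player B); "C" = cooperate, "D" = defect.
# These specific numbers are an assumption for illustration.
PAYOFF = {
    ("C", "C"): (3, 3),
    ("C", "D"): (0, 5),
    ("D", "C"): (5, 0),
    ("D", "D"): (1, 1),
}


def tit_for_tat(partner_history):
    """Cooperate on the first turn, then repeat the partner's last move."""
    return "C" if not partner_history else partner_history[-1]


def always_defect(partner_history):
    """A comparison strategy that never cooperates."""
    return "D"


def play(strategy_a, strategy_b, rounds=10):
    """Play a repeated game and return the total scores (A, B)."""
    moves_a, moves_b = [], []
    score_a = score_b = 0
    for _ in range(rounds):
        # Each agent sees only the other agent's past moves.
        a = strategy_a(moves_b)
        b = strategy_b(moves_a)
        pay_a, pay_b = PAYOFF[(a, b)]
        score_a += pay_a
        score_b += pay_b
        moves_a.append(a)
        moves_b.append(b)
    return score_a, score_b


print(play(tit_for_tat, tit_for_tat))      # (30, 30)
print(play(tit_for_tat, always_defect))    # (9, 14)
print(play(always_defect, always_defect))  # (10, 10)
```

Under these assumed payoffs, two reciprocating agents each earn more over ten rounds than either agent in a pairing that involves a constant defector, and the reciprocator is exploited only on the first turn.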

Data mining and social network analysis

Independently of developments in computational models of social systems, social network analysis emerged in the 1970s and 1980s from advances in graph theory, statistics, and studies of social structure as a distinct analytical method, and was articulated and employed by sociologists like James S. Coleman, Harrison White, Linton Freeman, J. Clyde Mitchell, Mark Granovetter, Ronald Burt, and Barry Wellman. The increasing pervasiveness of computing and telecommunication technologies throughout the 1980s and 1990s demanded analytical techniques, such as network analysis and multilevel modeling, that could scale to increasingly complex and large data sets. The most recent wave of computational sociology, rather than employing simulations, uses network analysis and advanced statistical techniques to analyze large-scale computer databases of electronic proxies for behavioral data. Electronic records such as email and instant message records, hyperlinks on the World Wide Web, mobile phone usage, and discussion on Usenet allow social scientists to directly observe and analyze social behavior at multiple points in time and multiple levels of analysis without the constraints of traditional empirical methods such as interviews, participant observation, or survey instruments. Continued improvements in machine learning algorithms have likewise permitted social scientists and entrepreneurs to use novel techniques to identify latent and meaningful patterns of social interaction and evolution in large electronic datasets.

The automatic parsing of textual corpora has enabled the extraction of actors and their relational networks on a vast scale, turning textual data into network data. The resulting networks, which can contain thousands of nodes, are then analysed using tools from network theory to identify the key actors, the key communities or parties, and general properties such as the robustness or structural stability of the overall network, or the centrality of certain nodes. This automates the approach introduced by quantitative narrative analysis, whereby subject-verb-object triplets are identified with pairs of actors linked by an action, or with actor-object pairs.
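The step from triplets to network measures can be sketched as follows. This is a minimal illustration that assumes the subject-verb-object triplets have already been extracted by a parser; the triplets themselves are invented examples, the function names are hypothetical, and degree centrality stands in for the broader family of centrality measures.

```python
from collections import defaultdict


def build_network(triplets):
    """Turn (subject, verb, object) triplets into an undirected network.

    Each triplet links its subject and object; the verb is ignored here.
    Returns a mapping from each node to the set of its neighbors.
    """
    neighbors = defaultdict(set)
    for subject, _verb, obj in triplets:
        if subject == obj:
            continue  # skip self-references so they do not count as ties
        neighbors[subject].add(obj)
        neighbors[obj].add(subject)
    return neighbors


def degree_centrality(neighbors):
    """Fraction of the other nodes that each node is directly tied to."""
    n = len(neighbors)
    if n < 2:
        return {node: 0.0 for node in neighbors}
    return {node: len(adj) / (n - 1) for node, adj in neighbors.items()}


# Invented triplets for illustration only.
triplets = [
    ("senate", "blocks", "president"),
    ("president", "meets", "union"),
    ("president", "criticizes", "press"),
]
centrality = degree_centrality(build_network(triplets))
for actor, score in sorted(centrality.items(), key=lambda kv: -kv[1]):
    print(f"{actor}: {score:.2f}")
```

In this toy network the actor that appears in every triplet receives the highest score, which is how a centrality measure flags candidate key actors in much larger networks.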

Computational content analysis

Content analysis has long been part of the social sciences and media studies. Its automation has allowed a "big data" revolution to take place in that field, with studies of social media and newspaper content that include millions of news items. Gender bias, readability, content similarity, reader preferences, and even mood have been analyzed using text mining methods over millions of documents. The analysis of readability, gender bias, and topic bias was demonstrated by Flaounas et al., who showed that different topics have different gender biases and levels of readability; the possibility of detecting mood shifts in a vast population by analysing Twitter content was demonstrated as well.

In 2008, Yukihiko Yoshida published a study titled "Leni Riefenstahl and German expressionism: research in Visual Cultural Studies using the trans-disciplinary semantic spaces of specialized dictionaries". The study took databases of images tagged with connotative and denotative keywords (a search engine) and found that Riefenstahl's imagery had the same qualities as imagery tagged "degenerate" in the title of the exhibition "Degenerate Art", held in Germany in 1937.

The analysis of vast quantities of historical newspaper content was pioneered by Dzogang et al., who showed how periodic structures can be automatically discovered in historical newspapers. A similar analysis was performed on social media, again revealing strongly periodic structures.

Journals and academic publications

The most relevant journal of the discipline is the Journal of Artificial Societies and Social Simulation.