
Branch and bound (BB, B&B, or BnB) is an algorithm design paradigm for discrete and combinatorial optimization problems, as well as mathematical optimization. A branch-and-bound algorithm consists of a systematic enumeration of candidate solutions by means of state space search: the set of candidate solutions is thought of as forming a rooted tree with the full set at the root. The algorithm explores branches of this tree, which represent subsets of the solution set. Before enumerating the candidate solutions of a branch, the branch is checked against upper and lower estimated bounds on the optimal solution, and is discarded if it cannot produce a better solution than the best one found so far by the algorithm.


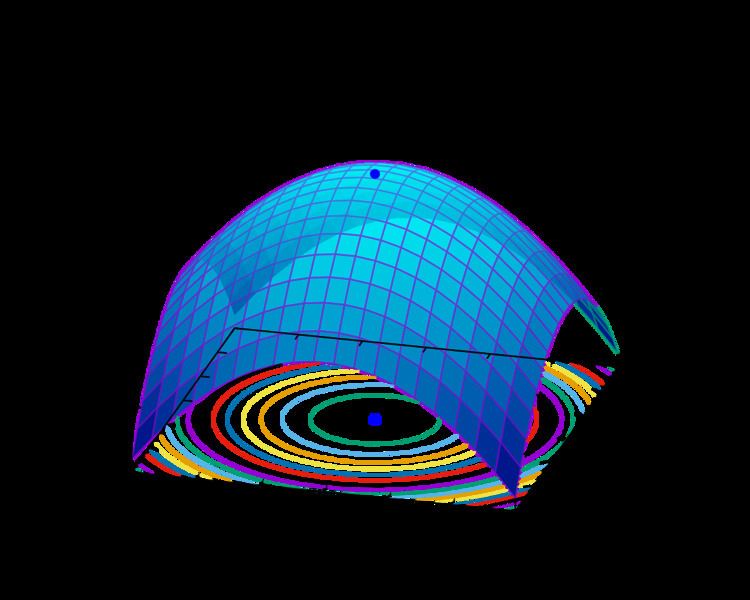

The algorithm depends on the efficient estimation of the lower and upper bounds of a region/branch of the search space and approaches exhaustive enumeration as the size (n-dimensional volume) of the region tends to zero.

The method was first proposed by A. H. Land and A. G. Doig in 1960 for discrete programming, and has become the most commonly used tool for solving NP-hard optimization problems. The name "branch and bound" first occurred in the work of Little et al. on the traveling salesman problem.

Overview

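The goal of a branch-and-bound algorithm is to find a value x that maximizes or minimizes the value of a real-valued function f(x), called an objective function, among some set S of admissible, or candidate, solutions. The set S is called the search space, or feasible region. The rest of this section assumes that minimization of f(x) is desired; this assumption comes without loss of generality, since one can find the maximum value of f(x) by finding the minimum of g(x) = −f(x). A B&B algorithm operates according to two principles:

- It recursively splits the search space into smaller spaces and then minimizes f(x) on these smaller spaces; the splitting is called branching.

- Branching alone would amount to brute-force enumeration of candidate solutions. To improve on this, the algorithm keeps track of bounds on the minimum it is trying to find and uses these bounds to prune the search space, eliminating regions that it can prove do not contain an optimal solution.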

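Turning these principles into a concrete algorithm for a specific optimization problem requires some kind of data structure that represents sets of candidate solutions. Such a representation is called an instance of the problem. Denote the set of candidate solutions of an instance I by S_I. The instance representation has to come with three operations:

- branch(I) produces two or more instances that each represent a subset of S_I.

- bound(I) computes a lower bound on the value of any candidate solution in the space represented by I, that is, bound(I) ≤ f(x) for all x in S_I.

- solution(I) determines whether I represents a single candidate solution.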

Using these operations, a B&B algorithm performs a top-down recursive search through the tree of instances formed by the branch operation. Upon visiting an instance I, it checks whether bound(I) is greater than an upper bound on the optimum that it has already established, such as the value of a candidate solution found in another instance; if so, I may be safely discarded from the search and the recursion stops. This pruning step is usually implemented by maintaining a global variable that records the minimum upper bound seen among all instances examined so far.
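The recursive search can be sketched as follows. This is a minimal illustration under stated assumptions, not a reference implementation: the objective `f`, the representation of an instance as an integer interval `(lo, hi)`, and the helper names `branch`, `bound`, and `search` are all hypothetical choices made for the example.

```python
import math

def f(x):
    # Hypothetical objective on the integers 0..15 (an assumption for illustration).
    return (x - 3) ** 2 + 4 * (x % 5)

def branch(instance):
    # Split the interval [lo, hi] into two halves, each a subset of the candidates.
    lo, hi = instance
    mid = (lo + hi) // 2
    return [(lo, mid), (mid + 1, hi)]

def bound(instance):
    # Lower bound on f over [lo, hi]: the term 4 * (x % 5) is never negative,
    # so the minimum of (x - 3) ** 2 over the interval is a valid lower bound.
    lo, hi = instance
    nearest = min(max(3, lo), hi)  # point of [lo, hi] closest to 3
    return (nearest - 3) ** 2

def search(root):
    # Plays the role of the global variable: best (smallest) upper bound so far.
    best = {"value": math.inf, "x": None}

    def visit(instance):
        if bound(instance) > best["value"]:
            return  # prune: nothing in this instance can beat the best candidate
        lo, hi = instance
        if lo == hi:  # the instance represents a single candidate solution
            if f(lo) < best["value"]:
                best["value"], best["x"] = f(lo), lo
            return
        for child in branch(instance):
            visit(child)

    visit(root)
    return best["value"], best["x"]

print(search((0, 15)))  # (4, 5)
```

On this toy instance the intervals (6, 7) and (8, 15) are discarded without being enumerated, because their lower bounds (9 and 25) exceed the value 4 already found at x = 5.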

Generic version

The following is the skeleton of a generic branch and bound algorithm for minimizing an arbitrary objective function f. To obtain an actual algorithm from this, one requires a bounding function g, that computes lower bounds of f on nodes of the search tree, as well as a problem-specific branching rule.

- Using a heuristic, find a solution x_h to the optimization problem. Store its value, B = f(x_h). (If no heuristic is available, set B to infinity.) B will denote the best solution found so far, and will be used as an upper bound on candidate solutions.

- Initialize a queue to hold a partial solution with none of the variables of the problem assigned.

- Loop until the queue is empty:

  - Take a node N off the queue.

  - If N represents a single candidate solution x and f(x) < B, then x is the best solution so far. Record it and set B ← f(x).

  - Else, branch on N to produce new nodes N_i. For each of these:

    - If g(N_i) > B, do nothing; since the lower bound on this node is greater than the upper bound of the problem, it will never lead to the optimal solution, and can be discarded.

    - Else, store N_i on the queue.
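The steps above can be sketched in Python. The example is only an illustration under assumptions: it applies the skeleton to a small 0/1 knapsack instance, recast as minimization by negating the packed value, and the names `ITEMS`, `CAPACITY`, `weight`, `branch`, and `branch_and_bound` are hypothetical. The bounding function `g` uses the fractional relaxation, which is one common choice among many.

```python
import math
from collections import deque

# Hypothetical toy instance: (value, weight) pairs, pre-sorted by value/weight ratio.
ITEMS = [(60, 10), (100, 20), (120, 30)]
CAPACITY = 50

def weight(node):
    return sum(w for (_, w), take in zip(ITEMS, node) if take)

def f(node):
    # Negated total value, so that minimizing f maximizes the packed value.
    return -sum(v for (v, _), take in zip(ITEMS, node) if take)

def g(node):
    # Lower bound on f below this node: fill the remaining room greedily and
    # allow a fraction of the first item that no longer fits.
    value, room = -f(node), CAPACITY - weight(node)
    for v, w in ITEMS[len(node):]:
        if w > room:
            value += v * room / w
            break
        value, room = value + v, room - w
    return -value

def branch(node):
    # Decide the next item: skipping is always allowed, taking only if it fits.
    children = [node + (0,)]
    if weight(node) + ITEMS[len(node)][1] <= CAPACITY:
        children.append(node + (1,))
    return children

def branch_and_bound(root=()):
    best_value, best_node = math.inf, None  # B: no heuristic here, so start at infinity
    queue = deque([root])                   # partial solution with nothing assigned
    while queue:
        node = queue.pop()                  # pop from the right: LIFO, depth-first
        if len(node) == len(ITEMS):         # N is a single candidate solution
            if f(node) < best_value:
                best_value, best_node = f(node), node
        else:
            for child in branch(node):
                if g(child) > best_value:
                    continue                # lower bound exceeds B: discard
                queue.append(child)
    return best_value, best_node

print(branch_and_bound())  # (-220, (0, 1, 1))
```

The result −220 corresponds to a packed value of 220, obtained by taking the second and third items.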

Several different queue data structures can be used. A FIFO queue will yield a breadth-first search, while a stack (LIFO queue) will yield a depth-first algorithm. A best-first branch and bound algorithm can be obtained by using a priority queue that orders nodes by their lower bound g. The depth-first variant is recommended when no good heuristic is available for producing an initial solution, because it quickly produces full solutions, and therefore upper bounds.
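As an illustration of the best-first variant, the sketch below keeps the nodes in a priority queue built with Python's `heapq` module and keyed on their lower bound. The problem (assigning each worker one distinct job at minimum total cost), the cost matrix, and the function name `best_first_assignment` are assumptions chosen for the example, and the bound used (fixed cost plus the cheapest still-free job for each remaining worker) is one simple option rather than the strongest available.

```python
import heapq

def best_first_assignment(cost):
    n = len(cost)

    def g(partial):
        # Cost of the assignments fixed so far, plus for every remaining worker
        # the cheapest job not yet taken (ignores conflicts, hence a lower bound).
        used = set(partial)
        fixed = sum(cost[w][j] for w, j in enumerate(partial))
        rest = sum(min(cost[w][j] for j in range(n) if j not in used)
                   for w in range(len(partial), n))
        return fixed + rest

    best_value, best = float("inf"), None
    heap = [(g(()), ())]  # entries are (lower bound, partial assignment)
    while heap:
        _, node = heapq.heappop(heap)  # node with the smallest lower bound
        if len(node) == n:
            value = g(node)  # for a complete assignment the bound is the true cost
            if value < best_value:
                best_value, best = value, node
        else:
            for job in range(n):
                if job in node:
                    continue
                child = node + (job,)
                bound = g(child)
                if bound > best_value:
                    continue  # cannot improve on the best solution so far
                heapq.heappush(heap, (bound, child))
    return best_value, best

# Hypothetical cost matrix: cost[w][j] is the cost of giving job j to worker w.
COST = [[9, 2, 7, 8],
        [6, 4, 3, 7],
        [5, 8, 1, 8],
        [7, 6, 9, 4]]

print(best_first_assignment(COST))  # (13, (1, 0, 2, 3))
```

Replacing the heap with a stack would turn the same loop into the depth-first variant; only the order in which nodes are taken off the queue changes.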

Improvements

When

Applications

This approach is used for a number of NP-hard problems, such as integer programming and the traveling salesman problem.

Branch-and-bound may also serve as the basis of various heuristics. For example, one may wish to stop branching when the gap between the upper and lower bounds becomes smaller than a certain threshold. This is used when the solution is "good enough for practical purposes" and can greatly reduce the computations required. This type of solution is particularly applicable when the cost function used is noisy or is the result of statistical estimates and so is not known precisely but rather only known to lie within a range of values with a specific probability.
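The gap-based stopping rule can be sketched as follows. The setting is an assumption made for illustration: a one-dimensional function on an interval with a known Lipschitz constant, so that the value at an interval's midpoint minus the constant times the half-width is a valid lower bound on that interval. The function name `minimize_with_gap` and its parameters are hypothetical.

```python
import heapq

def minimize_with_gap(f, lo, hi, lipschitz, tol):
    def node(a, b):
        mid = (a + b) / 2
        value = f(mid)  # value at a feasible point: an upper bound on the minimum
        lower = value - lipschitz * (b - a) / 2  # no point of [a, b] can be lower
        return (lower, a, b, mid, value)

    first = node(lo, hi)
    best_value, best_x = first[4], first[3]
    heap = [first]  # ordered by lower bound (best-first)
    while heap:
        lower, a, b, mid, _ = heapq.heappop(heap)
        # The popped interval has the smallest lower bound of all open intervals,
        # so `lower` bounds the global minimum from below.
        if best_value - lower <= tol:
            break  # gap between upper and lower bound is small enough: stop
        for child in (node(a, mid), node(mid, b)):
            if child[4] < best_value:
                best_value, best_x = child[4], child[3]
            if child[0] <= best_value:
                heapq.heappush(heap, child)
    return best_x, best_value

# Example: |f'(x)| = |2 (x - 2)| is at most 6 on [0, 5].
x, value = minimize_with_gap(lambda x: (x - 2) ** 2, 0.0, 5.0, lipschitz=6.0, tol=1e-3)
print(x, value)
```

When the loop stops, the returned value is within `tol` of the true minimum, even though the search tree has not been explored down to single points. A larger tolerance trades accuracy for fewer evaluations of `f`.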

Relation to other algorithms

Nau et al. present a generalization of branch and bound that also subsumes the A*, B* and alpha-beta search algorithms from artificial intelligence.