In the unconstrained minimization problem, the Wolfe conditions are a set of inequalities for performing inexact line search, especially in quasi-Newton methods, first published by Philip Wolfe in 1969.

In these methods the idea is to find

for some smooth f : R n → R . Each step often involves approximately solving the subproblem

where x k is the current best guess, p k ∈ R n is a search direction, and α ∈ R is the step length.

The inexact line searches provide an efficient way of computing an acceptable step length α that reduces the objective function 'sufficiently', rather than minimizing the objective function over α ∈ R + exactly. A line search algorithm can use Wolfe conditions as a requirement for any guessed α , before finding a new search direction p k .

Armijo rule and curvature

A step length α k is said to satisfy the Wolfe conditions, restricted to the direction p k , if the following two inequalities hold:

i)

f ( x k + α k p k ) ≤ f ( x k ) + c 1 α k p k T ∇ f ( x k ) ,ii)

p k T ∇ f ( x k + α k p k ) ≥ c 2 p k T ∇ f ( x k ) ,

with 0 < c 1 < c 2 < 1 . (In examining condition (ii), recall that to ensure that p k is a descent direction, we have p k T ∇ f ( x k ) < 0 .)

c 1 is usually chosen to be quite small while c 2 is much larger; Nocedal gives example values of c 1 = 10 − 4 and c 2 = 0.9 for Newton or quasi-Newton methods and c 2 = 0.1 for the nonlinear conjugate gradient method. Inequality i) is known as the Armijo rule and ii) as the curvature condition; i) ensures that the step length α k decreases f 'sufficiently', and ii) ensures that the slope has been reduced sufficiently.

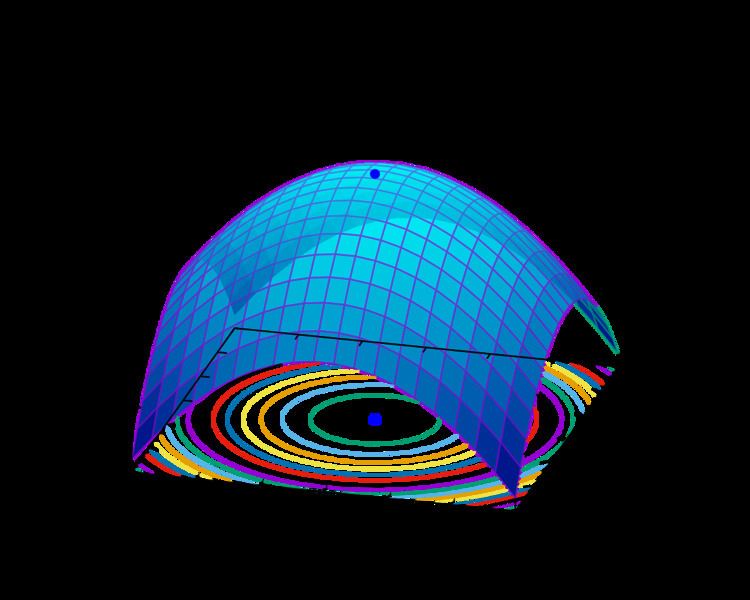

Denote a univariate function ϕ restricted to the direction p k as ϕ ( α ) = f ( x k + α p k ) . The Wolfe conditions can result in a value for the step length that is not close to a minimizer of ϕ . If we modify the curvature condition to the following,

iii)

| p k T ∇ f ( x k + α k p k ) | ≤ c 2 | p k T ∇ f ( x k ) | then i) and iii) together form the so-called strong Wolfe conditions, and force α k to lie close to a critical point of ϕ .

The principal reason for imposing the Wolfe conditions in an optimization algorithm where x k + 1 = x k + α p k is to ensure convergence of the gradient to zero. In particular, if the cosine of the angle between p k and the gradient,

is bounded away from zero and the i) and ii) conditions hold, then ∇ f ( x k ) → 0 .

An additional motivation, in the case of a quasi-Newton method, is that if p k = − B k − 1 ∇ f ( x k ) , where the matrix B k is updated by the BFGS or DFP formula, then if B k is positive definite ii) implies B k + 1 is also positive definite.