
Uncertainty quantification (UQ) is the science of quantitative characterization and reduction of uncertainties in both computational and real-world applications. It tries to determine how likely certain outcomes are if some aspects of the system are not exactly known. An example would be predicting the acceleration of a human body in a head-on crash with another car: even if we knew the speed exactly, small differences in the manufacturing of individual cars, how tightly every bolt has been tightened, etc., will lead to different results that can only be predicted in a statistical sense.

Contents

- The uq module for uncertainty quantification another first from numeca

- Two types of uncertainty quantification problems

- Forward uncertainty propagation

- Inverse uncertainty quantification

- Bias correction only

- Parameter calibration only

- Bias correction and parameter calibration

- Selective methodologies for uncertainty quantification

- Methodologies for forward uncertainty propagation

- Frequentist

- Bayesian

- Known issues

- References

Many problems in the natural sciences and engineering are rife with sources of uncertainty. Computer experiments on computer simulations are the most common approach to studying problems in uncertainty quantification.

The uq module for uncertainty quantification another first from numeca

Two types of uncertainty quantification problems

There are two major types of problems in uncertainty quantification: one is the forward propagation of uncertainty (where the various sources of uncertainty are propagated through the model to predict the overall uncertainty in the system response) and the other is the inverse assessment of model uncertainty and parameter uncertainty (where the model parameters are calibrated simultaneously using test data). There has been a proliferation of research on the former problem and a majority of uncertainty analysis techniques were developed for it. On the other hand, the latter problem is drawing increasing attention in the engineering design community, since uncertainty quantification of a model and the subsequent predictions of the true system response(s) are of great interest in designing robust systems.

Forward uncertainty propagation

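Uncertainty propagation is the quantification of uncertainties in system output(s) propagated from uncertain inputs. It focuses on the influence on the outputs from the parametric variability listed in the sources of uncertainty. The targets of uncertainty propagation analysis can be:

- To evaluate low-order moments of the outputs, i.e. mean and variance.

- To evaluate the reliability of the outputs, for example the probability that an output exceeds a given limit.

- To assess the complete probability distribution of the outputs.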
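Forward propagation can be illustrated with plain Monte Carlo sampling. The following is a minimal sketch under stated assumptions: the function `model`, the Gaussian input distributions, and the threshold `8.0` are all hypothetical and chosen only for illustration. It estimates the output mean, the output variance, and an exceedance probability.

```python
import numpy as np

def model(x1, x2):
    """Hypothetical system response; stands in for an expensive simulation."""
    return x1 ** 2 + 3.0 * x2

def propagate(n_samples=100_000, seed=0):
    rng = np.random.default_rng(seed)
    # Assumed input uncertainty: independent Gaussians (illustrative only).
    x1 = rng.normal(loc=1.0, scale=0.1, size=n_samples)
    x2 = rng.normal(loc=2.0, scale=0.2, size=n_samples)
    y = model(x1, x2)
    mean = y.mean()                 # low-order moment: mean of the output
    var = y.var(ddof=1)             # low-order moment: sample variance
    p_exceed = np.mean(y > 8.0)     # fraction of samples above the threshold
    return mean, var, p_exceed

mean, var, p_exceed = propagate()
print(f"mean={mean:.3f}, variance={var:.3f}, P(y > 8)={p_exceed:.3f}")
```

The sampling error of each estimate shrinks roughly with the square root of the number of samples, which is why this approach becomes costly when a single model run is expensive.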

Inverse uncertainty quantification

Given some experimental measurements of a system and some computer simulation results from its mathematical model, inverse uncertainty quantification estimates the discrepancy between the experiment and the mathematical model (which is called bias correction), and estimates the values of unknown parameters in the model if there are any (which is called parameter calibration or simply calibration). Generally this is a much more difficult problem than forward uncertainty propagation; however it is of great importance since it is typically implemented in a model updating process. There are several scenarios in inverse uncertainty quantification:

Bias correction only

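Bias correction quantifies the model inadequacy, i.e. the discrepancy between the experiment and the mathematical model. The general model updating formula for bias correction is:

$$y^e(x) = y^m(x) + \delta(x) + \varepsilon$$

where $y^e(x)$ denotes the experimental measurements as a function of the input variables $x$, $y^m(x)$ denotes the computer model (mathematical model) response, $\delta(x)$ denotes the additive discrepancy function (also called the bias function), and $\varepsilon$ denotes the experimental uncertainty. The objective is to estimate $\delta(x)$; as a by-product, the updated model is $y^m(x) + \delta(x)$.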

Parameter calibration only

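Parameter calibration estimates the values of one or more unknown parameters in a mathematical model. The general model updating formulation for calibration is:

$$y^e(x) = y^m(x, \theta^*) + \varepsilon$$

where $y^e(x)$ denotes the experimental measurements as a function of the input variables $x$, $y^m(x, \theta)$ denotes the computer model response, which depends on the unknown model parameters $\theta$, $\theta^*$ denotes the true values of those parameters in the experiments, and $\varepsilon$ denotes the experimental uncertainty. The objective is to estimate $\theta^*$ or a probability distribution that represents the best knowledge of it.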

Bias correction and parameter calibration

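This scenario considers an inaccurate model with one or more unknown parameters, and its model updating formulation combines the two:

$$y^e(x) = y^m(x, \theta^*) + \delta(x) + \varepsilon$$

Here $y^e(x)$ is the experimental measurement, $y^m(x, \theta^*)$ is the computer model response at the true parameter values $\theta^*$, $\delta(x)$ is the discrepancy function, and $\varepsilon$ is the experimental uncertainty.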

It is the most comprehensive model updating formulation that includes all possible sources of uncertainty, and it requires the most effort to solve.
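The combined scenario can be made concrete with a deliberately simple two-stage least-squares sketch. This is not the Bayesian treatment discussed in this article; the model `computer_model`, the synthetic "experiment", and the quadratic form chosen for the discrepancy are all assumptions made for illustration.

```python
import numpy as np

def computer_model(x, theta):
    """Hypothetical computer model y^m(x, theta): linear in x."""
    return theta * x

rng = np.random.default_rng(1)
x = np.linspace(0.0, 1.0, 50)
# Synthetic "experiment": true theta* = 2, discrepancy 0.5 * x^2, small noise.
y_exp = 2.0 * x + 0.5 * x ** 2 + rng.normal(0.0, 0.01, size=x.size)

# Stage 1 (calibration): closed-form least-squares estimate for y = theta * x.
theta_hat = np.sum(x * y_exp) / np.sum(x * x)

# Stage 2 (bias correction): fit a quadratic to the residuals as delta(x).
residual = y_exp - computer_model(x, theta_hat)
delta_coeffs = np.polyfit(x, residual, deg=2)

def updated_model(x_new):
    """Calibrated model plus the fitted discrepancy."""
    return computer_model(x_new, theta_hat) + np.polyval(delta_coeffs, x_new)

rmse_before = np.sqrt(np.mean(residual ** 2))
rmse_after = np.sqrt(np.mean((y_exp - updated_model(x)) ** 2))
print(f"theta_hat={theta_hat:.3f}, RMSE {rmse_before:.4f} -> {rmse_after:.4f}")
```

In this toy setup the calibrated `theta_hat` lands noticeably above the true value of 2, because the least-squares fit absorbs part of the discrepancy into the parameter. The updated model still predicts well, which hints at why separating parameter error from model error is hard.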

Selective methodologies for uncertainty quantification

Much research has been done to solve uncertainty quantification problems, though most of it deals with uncertainty propagation. During the past one to two decades, a number of approaches for inverse uncertainty quantification problems have also been developed and have proved useful for most small- to medium-scale problems.

Methodologies for forward uncertainty propagation

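Existing uncertainty propagation approaches include probabilistic approaches and non-probabilistic approaches. There are basically five categories of probabilistic approaches for uncertainty propagation:

- Simulation-based methods: Monte Carlo simulations, importance sampling, adaptive sampling, etc.

- Local expansion-based methods: Taylor series, perturbation methods, etc.

- Functional expansion-based methods: Neumann expansion, orthogonal or Karhunen–Loève expansions, polynomial chaos expansion, wavelet expansions, etc.

- Most probable point (MPP)-based methods: first-order reliability method (FORM) and second-order reliability method (SORM).

- Numerical integration-based methods: full factorial numerical integration and dimension reduction.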

For non-probabilistic approaches, interval analysis, fuzzy theory, possibility theory and evidence theory are among the most widely used.
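As an illustration of the non-probabilistic route, interval analysis propagates guaranteed bounds instead of probability distributions. The sketch below assumes a hypothetical `Interval` class with only addition and multiplication, and hypothetical input bounds. It ignores floating-point rounding, which a rigorous interval library would control.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Interval:
    """Closed interval [lo, hi]; a hypothetical minimal implementation."""
    lo: float
    hi: float

    def __add__(self, other):
        # Sum of intervals: add the lower bounds and the upper bounds.
        return Interval(self.lo + other.lo, self.hi + other.hi)

    def __mul__(self, other):
        # Product of intervals: the extremes occur at endpoint combinations.
        products = [self.lo * other.lo, self.lo * other.hi,
                    self.hi * other.lo, self.hi * other.hi]
        return Interval(min(products), max(products))

# Hypothetical inputs known only to lie within bounds.
force = Interval(9.0, 11.0)
length = Interval(1.9, 2.1)
offset = Interval(-0.5, 0.5)

# Bounds on the output force * length + offset.
moment = force * length + offset
print(moment)
```

The result brackets every value the expression can take for inputs inside their bounds, but it says nothing about which values are more likely.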

The probabilistic approach is considered the most rigorous approach to uncertainty analysis in engineering design because of its consistency with the theory of decision analysis. Its cornerstone is the calculation of probability density functions for sampling statistics. This can be performed rigorously for random variables that are obtainable as transformations of Gaussian variables, leading to exact confidence intervals.

Frequentist

In regression analysis and least squares problems, the standard error of parameter estimates is readily available, which can be expanded into a confidence interval.
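A minimal sketch of this calculation for ordinary least squares follows. The synthetic data and the straight-line model are assumptions made for illustration. The interval uses a normal quantile as a large-sample approximation; a Student t quantile would be the exact choice under Gaussian noise.

```python
import numpy as np
from statistics import NormalDist

rng = np.random.default_rng(2)
n = 200
x = rng.uniform(0.0, 10.0, size=n)
y = 1.5 + 0.8 * x + rng.normal(0.0, 1.0, size=n)   # synthetic data

X = np.column_stack([np.ones(n), x])               # design matrix [1, x]
beta, *_ = np.linalg.lstsq(X, y, rcond=None)       # least-squares estimates

resid = y - X @ beta
dof = n - X.shape[1]                               # residual degrees of freedom
sigma2 = resid @ resid / dof                       # unbiased noise variance estimate
cov_beta = sigma2 * np.linalg.inv(X.T @ X)         # covariance of the estimates
se = np.sqrt(np.diag(cov_beta))                    # standard errors

z = NormalDist().inv_cdf(0.975)                    # two-sided 95% normal quantile
ci_lower = beta - z * se
ci_upper = beta + z * se
for name, b, lo, hi in zip(["intercept", "slope"], beta, ci_lower, ci_upper):
    print(f"{name}: {b:.3f}  95% CI [{lo:.3f}, {hi:.3f}]")
```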

Bayesian

Several methodologies for inverse uncertainty quantification exist under the Bayesian framework. The most complicated case involves both bias correction and parameter calibration. The challenges of such problems include not only the influences of model inadequacy and parameter uncertainty, but also the lack of data from both computer simulations and experiments. A common situation is that the input settings differ between experiments and simulations.

Modular Bayesian approach

One approach to inverse uncertainty quantification is the modular Bayesian approach, which derives its name from its four-module procedure. Apart from the currently available data, a prior distribution of the unknown parameters should be assigned.

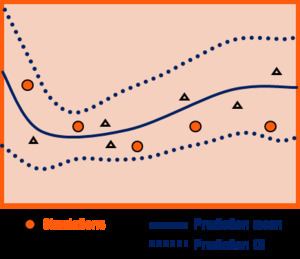

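In the first module, to address the lack of simulation results, the computer model is replaced with a Gaussian process (GP) model

$$y^m(x, \theta) \sim \mathcal{GP}\big(h^m(\cdot)^T \beta^m,\ \sigma_m^2 R^m(\cdot, \cdot)\big)$$

where

$$R^m\big((x, \theta), (x', \theta')\big) = \exp\Big\{-\sum_{k=1}^{d} \omega_k^m (x_k - x_k')^2\Big\} \exp\Big\{-\sum_{k=1}^{r} \omega_{d+k}^m (\theta_k - \theta_k')^2\Big\}.$$

Here $d$ is the dimension of the input variables $x$ and $r$ is the dimension of the unknown parameters $\theta$. The regression functions $h^m(\cdot)$ are pre-defined, while the hyperparameters $\{\beta^m, \sigma_m, \omega_k^m,\ k = 1, \ldots, d + r\}$ of the GP model need to be estimated, for example by maximum likelihood estimation.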

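As in the first module, the discrepancy function $\delta(x)$ is replaced with a GP model

$$\delta(x) \sim \mathcal{GP}\big(h^\delta(\cdot)^T \beta^\delta,\ \sigma_\delta^2 R^\delta(\cdot, \cdot)\big)$$

where

$$R^\delta(x, x') = \exp\Big\{-\sum_{k=1}^{d} \omega_k^\delta (x_k - x_k')^2\Big\}$$

and $d$ is the dimension of the input variables $x$.

Together with the prior distribution of the unknown parameters, and data from both computer models and experiments, one can derive the maximum likelihood estimates for the hyperparameters $\{\beta^\delta, \sigma_\delta, \omega_k^\delta,\ k = 1, \ldots, d\}$. The regression coefficients of the GP model for the computer model are updated at the same time.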

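In the third module, Bayes' theorem is applied to calculate the posterior distribution of the unknown parameters $\theta$:

$$p(\theta \mid \text{data}, \phi) \propto p(\text{data} \mid \theta, \phi)\, p(\theta)$$

where $\phi$ collects all the hyperparameters that were fixed in the previous modules. The fourth module then predicts the experimental response and the discrepancy function.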
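The idea of replacing an expensive computer model with a GP can be sketched in a few lines of NumPy. This is only an illustration under simplifying assumptions: a zero-mean GP instead of a regression mean, a one-dimensional input, and hyperparameters (`omega`, `sigma2`, `nugget`) fixed by hand rather than estimated by maximum likelihood. The function `expensive_simulation` is a hypothetical stand-in.

```python
import numpy as np

def sq_exp_kernel(a, b, omega=10.0, sigma2=1.0):
    """Gaussian correlation sigma2 * exp(-omega * (a - b)^2) for 1-D inputs."""
    diff = a[:, None] - b[None, :]
    return sigma2 * np.exp(-omega * diff ** 2)

def gp_predict(x_train, y_train, x_test, nugget=1e-8):
    """Posterior mean and variance of a zero-mean GP with fixed hyperparameters."""
    K = sq_exp_kernel(x_train, x_train) + nugget * np.eye(x_train.size)
    K_s = sq_exp_kernel(x_train, x_test)
    L = np.linalg.cholesky(K)                        # K = L @ L.T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mean = K_s.T @ alpha                             # K_s^T K^{-1} y
    v = np.linalg.solve(L, K_s)
    var = np.diag(sq_exp_kernel(x_test, x_test)) - np.sum(v ** 2, axis=0)
    return mean, np.maximum(var, 0.0)                # clip tiny negative round-off

def expensive_simulation(x):
    """Hypothetical stand-in for a costly computer model."""
    return np.sin(2.0 * np.pi * x)

x_train = np.linspace(0.0, 1.0, 8)                   # the few available runs
y_train = expensive_simulation(x_train)
x_test = np.array([0.0, 0.33, 0.5])
mean, var = gp_predict(x_train, y_train, x_test)
print(mean, var)
```

The predictive variance is close to zero at the training inputs and grows between them, which is what lets the surrogate carry its own uncertainty into the later modules.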

Fully Bayesian approach

The fully Bayesian approach requires that priors be assigned not only for the unknown parameters $\theta$ but also for the other hyperparameters $\phi$. It proceeds in the following steps:

- Derive the posterior distribution $p(\theta, \phi \mid \text{data})$;

- Integrate $\phi$ out and obtain $p(\theta \mid \text{data})$. This single step accomplishes the calibration;

- Predict the experimental response and the discrepancy function.

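However, the approach has significant drawbacks:

- In most cases the joint posterior of the unknown parameters and the hyperparameters $\phi$ is a highly intractable function of $\phi$, so the integration becomes very troublesome. If the priors for the hyperparameters are not chosen carefully, the numerical integration becomes even more complex.

- The prediction stage also requires numerical integration. Markov chain Monte Carlo (MCMC) is often used for this, but it is computationally expensive.

The fully Bayesian approach therefore requires a huge amount of computation and may not yet be practical for the most complicated modelling situations.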
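For a problem small enough to evaluate on a grid, the first two steps (deriving the joint posterior and integrating the hyperparameters out) can be sketched directly. The example below is a toy under stated assumptions: the "model" is just a constant `theta` observed with Gaussian noise of unknown standard deviation `phi`, both priors are flat over the chosen grids, and the data are synthetic. Realistic problems have far too many dimensions for a grid, which is where the computational cost described above arises.

```python
import numpy as np

rng = np.random.default_rng(3)
data = rng.normal(loc=2.0, scale=0.5, size=30)    # synthetic observations

theta = np.linspace(0.0, 4.0, 401)                # parameter of interest
phi = np.linspace(0.1, 2.0, 191)                  # nuisance hyperparameter (noise std)
T, P = np.meshgrid(theta, phi, indexing="ij")     # grids of shape (401, 191)

# Gaussian log-likelihood of the data for every (theta, phi) pair,
# up to an additive constant.
n = data.size
ss = np.sum((data[None, None, :] - T[..., None]) ** 2, axis=-1)
log_lik = -n * np.log(P) - 0.5 * ss / P ** 2

# Flat priors (an assumption), so the log-posterior equals the
# log-likelihood up to a constant. Subtract the maximum for stability.
joint = np.exp(log_lik - log_lik.max())
joint /= joint.sum()                              # step 1: joint posterior mass on the grid

marginal_theta = joint.sum(axis=1)                # step 2: sum phi out
theta_mean = np.sum(theta * marginal_theta)       # posterior mean of theta
print(f"posterior mean of theta: {theta_mean:.3f}")
```

Note that `marginal_theta` holds probability mass per grid point rather than a density; dividing by the grid spacing would convert it.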

Known issues

The theories and methodologies for uncertainty propagation are much better established than those for inverse uncertainty quantification. For the latter, several difficulties remain unsolved:

- Dimensionality issue: The computational cost increases dramatically with the dimensionality of the problem, i.e. the number of input variables and/or the number of unknown parameters.

- Identifiability issue: Multiple combinations of unknown parameters and discrepancy function can yield the same experimental prediction. Hence different values of parameters cannot be distinguished/identified.
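The identifiability issue can be seen in a toy case. In the sketch below, the linear model and both discrepancy functions are hypothetical choices. Two different pairs of parameter value and discrepancy function produce identical predictions, so data on the response alone cannot tell them apart.

```python
import numpy as np

x = np.linspace(0.0, 1.0, 5)

def prediction(x, theta, delta):
    """Updated model y^m(x, theta) + delta(x), with hypothetical y^m = theta * x."""
    return theta * x + delta(x)

# Pair A: theta = 2 with discrepancy 0.5 * x.
pred_a = prediction(x, 2.0, lambda x: 0.5 * x)
# Pair B: theta = 1 with discrepancy 1.5 * x.
pred_b = prediction(x, 1.0, lambda x: 1.5 * x)

# Both pairs give the same experimental prediction, 2.5 * x.
same = np.allclose(pred_a, pred_b)
print(same)
```

Informative priors on the parameters or on the form of the discrepancy function are one way to break such ties.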