
In the field of mathematical modeling, a radial basis function network is an artificial neural network that uses radial basis functions as activation functions. The output of the network is a linear combination of radial basis functions of the inputs and neuron parameters. Radial basis function networks have many uses, including function approximation, time series prediction, classification, and system control. They were first formulated in a 1988 paper by Broomhead and Lowe, both researchers at the Royal Signals and Radar Establishment.

Contents

- Network architecture

- Normalized architecture

- Theoretical motivation for normalization

- Local linear models

- Training

- Interpolation

- Function approximation

- Training the basis function centers

- Pseudoinverse solution for the linear weights

- Gradient descent training of the linear weights

- Projection operator training of the linear weights

- Logistic map

- Unnormalized radial basis functions

- Normalized radial basis functions

- Time series prediction

- Control of a chaotic time series

- References

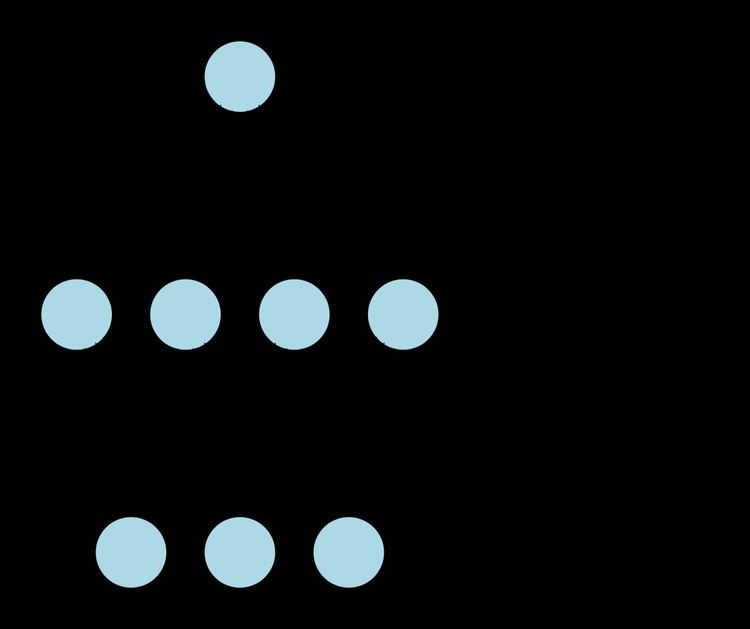

Network architecture

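Radial basis function (RBF) networks typically have three layers: an input layer, a hidden layer with a non-linear RBF activation function, and a linear output layer. The input can be modeled as a vector of real numbers $\mathbf{x} \in \mathbb{R}^n$. The output of the network is then a scalar function of the input vector, $\varphi : \mathbb{R}^n \to \mathbb{R}$, given by

$$\varphi(\mathbf{x}) = \sum_{i=1}^{N} a_i \, \rho(\|\mathbf{x} - \mathbf{c}_i\|)$$

where $N$ is the number of neurons in the hidden layer, $\mathbf{c}_i$ is the center vector for neuron $i$, and $a_i$ is the weight of neuron $i$ in the linear output neuron. Functions that depend only on the distance from a center vector are radially symmetric about that vector, hence the name radial basis function. The norm is typically taken to be the Euclidean distance, and the radial basis function is commonly taken to be Gaussian:

$$\rho(\|\mathbf{x} - \mathbf{c}_i\|) = \exp\left[-\beta_i \|\mathbf{x} - \mathbf{c}_i\|^2\right]$$

The Gaussian basis functions are local to the center vector in the sense that

$$\lim_{\|\mathbf{x}\| \to \infty} \rho(\|\mathbf{x} - \mathbf{c}_i\|) = 0$$

i.e. changing the parameters of one neuron has only a small effect for input values that are far away from the center of that neuron.

Given certain mild conditions on the shape of the activation function, RBF networks are universal approximators on a compact subset of $\mathbb{R}^n$. This means that an RBF network with enough hidden neurons can approximate any continuous function on a closed, bounded set with arbitrary precision.

The parameters $a_i$, $\mathbf{c}_i$, and $\beta_i$ are determined in a manner that optimizes the fit between $\varphi$ and the data.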
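The forward pass of such a network is short enough to sketch directly. The following is a minimal illustration, assuming Gaussian basis functions of the form $\exp[-\beta \|\mathbf{x} - \mathbf{c}_i\|^2]$ with a single shared width parameter; the function name `rbf_forward` and the example centers and weights are hypothetical.

```python
import numpy as np

def rbf_forward(x, centers, weights, beta):
    """Evaluate phi(x) = sum_i a_i * exp(-beta * ||x - c_i||^2)."""
    x = np.asarray(x, dtype=float)
    sq_dist = np.sum((centers - x) ** 2, axis=1)  # ||x - c_i||^2 for every center
    rho = np.exp(-beta * sq_dist)                 # hidden-layer activations
    return float(weights @ rho)                   # linear output layer

# Hypothetical two-neuron network on 2-D inputs.
centers = np.array([[0.0, 0.0], [1.0, 1.0]])
weights = np.array([2.0, -1.0])

value_at_center = rbf_forward([0.0, 0.0], centers, weights, beta=1.0)
value_far_away = rbf_forward([50.0, 50.0], centers, weights, beta=1.0)
```

Evaluating far from every center gives an output that is numerically zero, which is the locality property of Gaussian basis functions.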

Normalized architecture

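In addition to the above unnormalized architecture, RBF networks can be normalized. In this case the mapping is

$$\varphi(\mathbf{x}) = \frac{\sum_{i=1}^{N} a_i \, \rho(\|\mathbf{x} - \mathbf{c}_i\|)}{\sum_{i=1}^{N} \rho(\|\mathbf{x} - \mathbf{c}_i\|)} = \sum_{i=1}^{N} a_i \, u(\|\mathbf{x} - \mathbf{c}_i\|)$$

where $a_i$ are the output weights, $\mathbf{c}_i$ the center vectors, $\rho$ the radial basis function, and

$$u(\|\mathbf{x} - \mathbf{c}_i\|) \overset{\text{def}}{=} \frac{\rho(\|\mathbf{x} - \mathbf{c}_i\|)}{\sum_{j=1}^{N} \rho(\|\mathbf{x} - \mathbf{c}_j\|)}$$

is known as a "normalized radial basis function".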
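A small sketch can make the effect of normalization concrete. It assumes Gaussian basis functions with one shared width parameter `beta`; the helper name `normalized_rbf` and the numbers are illustrative only.

```python
import numpy as np

def normalized_rbf(x, centers, beta):
    """Return u_i(x) = rho_i(x) / sum_j rho_j(x) for Gaussian rho."""
    sq_dist = np.sum((centers - np.asarray(x, dtype=float)) ** 2, axis=1)
    rho = np.exp(-beta * sq_dist)
    # Far from all centers rho.sum() can underflow to zero; this sketch
    # does not guard against that case.
    return rho / rho.sum()

centers = np.array([[0.0], [0.5], [1.0]])   # hypothetical 1-D centers
weights = np.array([1.0, 3.0, 2.0])         # hypothetical output weights

u = normalized_rbf([0.2], centers, beta=4.0)
phi = float(weights @ u)                    # normalized network output
```

Because the normalized basis functions are non-negative and sum to one, the output is a weighted average of the weights and always lies between the smallest and largest weight.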

Theoretical motivation for normalization

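There is theoretical justification for this architecture in the case of stochastic data flow. Assume a stochastic kernel approximation for the joint probability density

$$P(\mathbf{x} \land y) = \frac{1}{N} \sum_{i=1}^{N} \rho(\|\mathbf{x} - \mathbf{c}_i\|) \, \sigma(|y - e_i|)$$

where the weights $\mathbf{c}_i$ and $e_i$ are exemplars from the data and we require the kernels to be normalized:

$$\int \rho(\|\mathbf{x} - \mathbf{c}_i\|) \, d^n\mathbf{x} = 1$$

and

$$\int \sigma(|y - e_i|) \, dy = 1.$$

The probability densities in the input and output spaces are

$$P(\mathbf{x}) = \int P(\mathbf{x} \land y) \, dy = \frac{1}{N} \sum_{i=1}^{N} \rho(\|\mathbf{x} - \mathbf{c}_i\|)$$

and

$$P(y) = \int P(\mathbf{x} \land y) \, d^n\mathbf{x} = \frac{1}{N} \sum_{i=1}^{N} \sigma(|y - e_i|).$$

The expectation of y given an input $\mathbf{x}$ is

$$\varphi(\mathbf{x}) \overset{\text{def}}{=} E(y \mid \mathbf{x}) = \int y \, P(y \mid \mathbf{x}) \, dy$$

where

$$P(y \mid \mathbf{x})$$

is the conditional probability of y given $\mathbf{x}$. The conditional probability is related to the joint probability through Bayes' theorem,

$$P(y \mid \mathbf{x}) = \frac{P(\mathbf{x} \land y)}{P(\mathbf{x})}$$

which yields

$$\varphi(\mathbf{x}) = \int y \, \frac{P(\mathbf{x} \land y)}{P(\mathbf{x})} \, dy.$$

This becomes

$$\varphi(\mathbf{x}) = \frac{\sum_{i=1}^{N} e_i \, \rho(\|\mathbf{x} - \mathbf{c}_i\|)}{\sum_{i=1}^{N} \rho(\|\mathbf{x} - \mathbf{c}_i\|)} = \sum_{i=1}^{N} e_i \, u(\|\mathbf{x} - \mathbf{c}_i\|)$$

when the integrations are performed.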
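The integration step can be spelled out. The following is a sketch, assuming the kernel estimate has the form $P(\mathbf{x} \land y) = \frac{1}{N}\sum_i \rho(\|\mathbf{x} - \mathbf{c}_i\|)\,\sigma(|y - e_i|)$ with each output kernel $\sigma$ normalized; that $\sigma$ has a finite mean is an added assumption, and its symmetry about $e_i$ follows from its dependence on $|y - e_i|$ alone. Substituting $s = y - e_i$,

$$\int y \, \sigma(|y - e_i|) \, dy = \int (e_i + s) \, \sigma(|s|) \, ds = e_i \int \sigma(|s|) \, ds + \int s \, \sigma(|s|) \, ds = e_i,$$

since the first integral is one by normalization and the second vanishes by symmetry. Hence

$$\int y \, P(\mathbf{x} \land y) \, dy = \frac{1}{N} \sum_{i=1}^{N} e_i \, \rho(\|\mathbf{x} - \mathbf{c}_i\|),$$

and dividing by $P(\mathbf{x}) = \frac{1}{N} \sum_{i=1}^{N} \rho(\|\mathbf{x} - \mathbf{c}_i\|)$ cancels the factor $1/N$, leaving a ratio of two sums over the same basis functions, which is the normalized architecture with the output exemplars $e_i$ as weights.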

Local linear models

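It is sometimes convenient to expand the architecture to include local linear models. In that case the architectures become, to first order,

$$\varphi(\mathbf{x}) = \sum_{i=1}^{N} \left( a_i + \mathbf{b}_i \cdot (\mathbf{x} - \mathbf{c}_i) \right) \rho(\|\mathbf{x} - \mathbf{c}_i\|)$$

and

$$\varphi(\mathbf{x}) = \sum_{i=1}^{N} \left( a_i + \mathbf{b}_i \cdot (\mathbf{x} - \mathbf{c}_i) \right) u(\|\mathbf{x} - \mathbf{c}_i\|)$$

in the unnormalized and normalized cases, respectively. Here $\mathbf{b}_i$ are weights to be determined. Higher order linear terms are also possible.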

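This result can be written

$$\varphi(\mathbf{x}) = \sum_{i=1}^{2N} \sum_{j=1}^{n} e_{ij} \, v_{ij}(\mathbf{x} - \mathbf{c}_i)$$

where

$$e_{ij} = \begin{cases} a_i, & i \in [1, N] \\ b_{(i-N)j}, & i \in [N+1, 2N] \end{cases}$$

and

$$v_{ij}(\mathbf{x} - \mathbf{c}_i) \overset{\text{def}}{=} \begin{cases} \delta_{j1} \, \rho(\|\mathbf{x} - \mathbf{c}_i\|), & i \in [1, N] \\ (x_j - c_{(i-N)j}) \, \rho(\|\mathbf{x} - \mathbf{c}_{i-N}\|), & i \in [N+1, 2N] \end{cases}$$

in the unnormalized case and

$$v_{ij}(\mathbf{x} - \mathbf{c}_i) \overset{\text{def}}{=} \begin{cases} \delta_{j1} \, u(\|\mathbf{x} - \mathbf{c}_i\|), & i \in [1, N] \\ (x_j - c_{(i-N)j}) \, u(\|\mathbf{x} - \mathbf{c}_{i-N}\|), & i \in [N+1, 2N] \end{cases}$$

in the normalized case.

Here $\delta_{j1}$ is a Kronecker delta, equal to 1 if $j = 1$ and 0 otherwise, so that each constant term $a_i$ is counted exactly once.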

Training

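RBF networks are typically trained by a two-step algorithm. In the first step, the center vectors $\mathbf{c}_i$ of the RBF functions in the hidden layer are chosen. This step can be performed in several ways: centers can be randomly sampled from some set of examples, or they can be determined using k-means clustering. Note that this step is unsupervised.

The second step simply fits a linear model with coefficients $w_i$ to the hidden layer's outputs with respect to some objective function. A common objective function, at least for regression and function estimation, is the least squares function over the $T$ training pairs $(\mathbf{x}(t), y(t))$:

$$K(\mathbf{w}) \overset{\text{def}}{=} \sum_{t=1}^{T} K_t(\mathbf{w})$$

where

$$K_t(\mathbf{w}) \overset{\text{def}}{=} \left[ y(t) - \varphi(\mathbf{x}(t), \mathbf{w}) \right]^2.$$

We have explicitly included the dependence on the weights. Minimization of the least squares objective function by optimal choice of weights optimizes accuracy of fit.

There are occasions in which multiple objectives, such as smoothness as well as accuracy, must be optimized. In that case it is useful to optimize a regularized objective function such as

$$H(\mathbf{w}) \overset{\text{def}}{=} K(\mathbf{w}) + \lambda S(\mathbf{w}) \overset{\text{def}}{=} \sum_{t=1}^{T} H_t(\mathbf{w})$$

where

$$S(\mathbf{w}) \overset{\text{def}}{=} \sum_{t=1}^{T} S_t(\mathbf{w})$$

and

$$H_t(\mathbf{w}) \overset{\text{def}}{=} K_t(\mathbf{w}) + \lambda S_t(\mathbf{w})$$

where optimization of S maximizes smoothness and $\lambda$ is known as a regularization parameter.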

Interpolation

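RBF networks can be used to interpolate a function $y : \mathbb{R}^n \to \mathbb{R}$ when the values of that function are known on a finite number of points: $y(\mathbf{x}_i) = b_i$, $i = 1, \ldots, N$. Taking the known points $\mathbf{x}_i$ to be the centers of the radial basis functions and evaluating the basis functions at the same points, $g_{ij} = \rho(\|\mathbf{x}_j - \mathbf{x}_i\|)$, the weights can be solved from the equation

$$\begin{bmatrix} g_{11} & g_{12} & \cdots & g_{1N} \\ g_{21} & g_{22} & \cdots & g_{2N} \\ \vdots & & \ddots & \vdots \\ g_{N1} & g_{N2} & \cdots & g_{NN} \end{bmatrix} \begin{bmatrix} w_1 \\ w_2 \\ \vdots \\ w_N \end{bmatrix} = \begin{bmatrix} b_1 \\ b_2 \\ \vdots \\ b_N \end{bmatrix}$$

It can be shown that the interpolation matrix in the above equation is non-singular if the points $\mathbf{x}_i$ are distinct, and thus the weights $\mathbf{w}$ can be solved by simple linear algebra:

$$\mathbf{w} = \mathbf{G}^{-1} \mathbf{b}$$

where $\mathbf{G} = (g_{ij})$.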
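Strict interpolation amounts to solving one square linear system. The sketch below assumes Gaussian basis functions with a shared width `beta` and uses each known point as a center; the function names and the sine test function are illustrative choices, not part of the original text.

```python
import numpy as np

def fit_interpolant(points, values, beta):
    """Solve G w = b with g_ij = exp(-beta * ||x_j - x_i||^2)."""
    diff = points[:, None, :] - points[None, :, :]
    G = np.exp(-beta * np.sum(diff ** 2, axis=2))   # symmetric interpolation matrix
    return np.linalg.solve(G, values)

def evaluate(x, points, w, beta):
    """Evaluate the interpolant at a single input x."""
    rho = np.exp(-beta * np.sum((points - x) ** 2, axis=1))
    return float(w @ rho)

points = np.array([[0.0], [0.25], [0.5], [0.75], [1.0]])  # distinct sample points
values = np.sin(2 * np.pi * points[:, 0])                # known values b_i
w = fit_interpolant(points, values, beta=10.0)

# The interpolant reproduces the known values at the sample points.
reproduced = np.array([evaluate(p, points, w, 10.0) for p in points])
```

`np.linalg.solve` is used rather than forming the inverse explicitly, which is the usual numerical practice; for many or closely spaced points the matrix can become ill-conditioned even though it is non-singular in exact arithmetic.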

Function approximation

If the purpose is not strict interpolation but more general function approximation or classification, the optimization is somewhat more complex because there is no obvious choice for the centers. Training is typically done in two phases: first the widths and centers are fixed, then the weights are fitted. This can be justified by the different nature of the non-linear hidden neurons and the linear output neuron.

Training the basis function centers

Basis function centers can be randomly sampled from the input instances, obtained with the orthogonal least squares learning algorithm, or found by clustering the samples and choosing the cluster means as the centers.

The RBF widths are usually all fixed to the same value, which is proportional to the maximum distance between the chosen centers.
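The simplest of these options, random sampling of centers followed by a shared width, can be sketched as follows. The proportionality constant $1/\sqrt{2M}$ for $M$ centers is an assumption (a commonly quoted heuristic), as are the function names and the synthetic data.

```python
import numpy as np

def choose_centers(samples, n_centers, rng):
    """Pick distinct training inputs at random to serve as centers."""
    idx = rng.choice(len(samples), size=n_centers, replace=False)
    return samples[idx]

def shared_width(centers):
    """Width proportional to the maximum distance between centers.

    The factor 1 / sqrt(2 * M) is an assumed proportionality constant.
    """
    diff = centers[:, None, :] - centers[None, :, :]
    d_max = np.sqrt(np.sum(diff ** 2, axis=2)).max()
    return d_max / np.sqrt(2 * len(centers))

rng = np.random.default_rng(0)
samples = rng.uniform(0.0, 1.0, size=(200, 2))   # synthetic 2-D inputs
centers = choose_centers(samples, 10, rng)
sigma = shared_width(centers)
beta = 1.0 / (2.0 * sigma ** 2)   # exp(-beta r^2) equals a Gaussian of std sigma
```

Replacing `choose_centers` with a clustering routine that returns cluster means would give the clustering variant without changing the width computation.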

Pseudoinverse solution for the linear weights

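After the centers $\mathbf{c}_i$ have been fixed, the weights that minimize the error at the output can be computed with a linear pseudoinverse solution:

$$\mathbf{w} = \mathbf{G}^{+} \mathbf{b}$$

where the entries of G are the values of the radial basis functions evaluated at the points $\mathbf{x}_j$: $g_{ji} = \rho(\|\mathbf{x}_j - \mathbf{c}_i\|)$.

Because the least-squares objective is quadratic in the weights once the centers are fixed, RBF networks, unlike multi-layer perceptron (MLP) networks, have no spurious local minima: every local minimum is a global one.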
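With more training points than centers, the weights follow from a pseudoinverse of the rectangular matrix of basis function values. The sketch below assumes Gaussian basis functions and evenly spaced centers; the target function, `beta`, and the names are illustrative.

```python
import numpy as np

def design_matrix(inputs, centers, beta):
    """G[j, i] = exp(-beta * ||x_j - c_i||^2)."""
    diff = inputs[:, None, :] - centers[None, :, :]
    return np.exp(-beta * np.sum(diff ** 2, axis=2))

inputs = np.linspace(0.0, 1.0, 50)[:, None]            # 50 training inputs
targets = 4.0 * inputs[:, 0] * (1.0 - inputs[:, 0])    # example target values b
centers = np.linspace(0.0, 1.0, 5)[:, None]            # 5 fixed centers

G = design_matrix(inputs, centers, beta=5.0)
w = np.linalg.pinv(G) @ targets        # least-squares weights w = G^+ b
residual = G @ w - targets             # what the five basis functions cannot fit
```

At the least-squares solution the residual is orthogonal to every column of `G`, which is a convenient check that the weights are optimal. `np.linalg.lstsq` solves the same problem without forming the pseudoinverse.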

Gradient descent training of the linear weights

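Another possible training algorithm is gradient descent. In gradient descent training, the weights are adjusted at each time step by moving them in the direction opposite to the gradient of the objective function, which allows a minimum of the objective function to be found:

$$\mathbf{w}(t+1) = \mathbf{w}(t) - \nu \frac{d}{d\mathbf{w}} H_t(\mathbf{w})$$

where $\nu$ is the learning rate and $H_t$ is the contribution of the $t$-th training pair $(\mathbf{x}(t), y(t))$ to the objective function.

For the case of training the linear weights $a_i$ with the least squares objective, the algorithm becomes

$$a_i(t+1) = a_i(t) + \nu \left[ y(t) - \varphi(\mathbf{x}(t), \mathbf{w}) \right] \rho(\|\mathbf{x}(t) - \mathbf{c}_i\|)$$

in the unnormalized case and

$$a_i(t+1) = a_i(t) + \nu \left[ y(t) - \varphi(\mathbf{x}(t), \mathbf{w}) \right] u(\|\mathbf{x}(t) - \mathbf{c}_i\|)$$

in the normalized case. The constant factor produced by differentiating the squared error is absorbed into $\nu$.

For local-linear architectures, gradient descent training is

$$e_{ij}(t+1) = e_{ij}(t) + \nu \left[ y(t) - \varphi(\mathbf{x}(t), \mathbf{w}) \right] v_{ij}(\mathbf{x}(t) - \mathbf{c}_i)$$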
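A single gradient descent update of the linear weights can be sketched as below, assuming a squared-error objective, Gaussian basis functions with shared width `beta`, and the constant factor from the derivative folded into the learning rate `nu`. The function name and the sample values are hypothetical.

```python
import numpy as np

def gd_step(a, x, y, centers, beta, nu, normalized=False):
    """One update a_i <- a_i + nu * [y - phi(x)] * basis_i(x)."""
    rho = np.exp(-beta * np.sum((centers - x) ** 2, axis=1))
    basis = rho / rho.sum() if normalized else rho   # u_i(x) or rho_i(x)
    error = y - a @ basis                            # y(t) - phi(x(t), w)
    return a + nu * error * basis

centers = np.linspace(0.0, 1.0, 5)[:, None]
x, y = np.array([0.3]), 0.84                         # one training pair
rho_x = np.exp(-5.0 * np.sum((centers - x) ** 2, axis=1))

a = np.zeros(5)
error_before = abs(y - a @ rho_x)
a = gd_step(a, x, y, centers, beta=5.0, nu=0.1)
error_after = abs(y - a @ rho_x)
```

For the unnormalized case the error on the presented sample is multiplied by $1 - \nu \sum_i \rho_i^2$, so the step size that is safe depends on how many basis functions are active at the input.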

Projection operator training of the linear weights

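For the case of training the linear weights $a_i$ and $e_{ij}$ on training pairs $(\mathbf{x}(t), y(t))$ with learning rate $\nu$, the algorithm becomes

$$a_i(t+1) = a_i(t) + \nu \left[ y(t) - \varphi(\mathbf{x}(t), \mathbf{w}) \right] \frac{\rho(\|\mathbf{x}(t) - \mathbf{c}_i\|)}{\sum_{k=1}^{N} \rho^2(\|\mathbf{x}(t) - \mathbf{c}_k\|)}$$

in the unnormalized case and

$$a_i(t+1) = a_i(t) + \nu \left[ y(t) - \varphi(\mathbf{x}(t), \mathbf{w}) \right] \frac{u(\|\mathbf{x}(t) - \mathbf{c}_i\|)}{\sum_{k=1}^{N} u^2(\|\mathbf{x}(t) - \mathbf{c}_k\|)}$$

in the normalized case and

$$e_{ij}(t+1) = e_{ij}(t) + \nu \left[ y(t) - \varphi(\mathbf{x}(t), \mathbf{w}) \right] \frac{v_{ij}(\mathbf{x}(t) - \mathbf{c}_i)}{\sum_{k=1}^{2N} \sum_{l=1}^{n} v_{kl}^2(\mathbf{x}(t) - \mathbf{c}_k)}$$

in the local-linear case.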

For one basis function, projection operator training reduces to Newton's method.
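The normalization by the squared basis values gives the update a simple interpretation, which the following sketch illustrates. It assumes the unnormalized form of the update, Gaussian basis functions with shared width `beta`, and hypothetical names and sample values.

```python
import numpy as np

def projection_step(a, x, y, centers, beta, nu):
    """a_i <- a_i + nu * [y - phi(x)] * rho_i(x) / sum_k rho_k(x)^2."""
    rho = np.exp(-beta * np.sum((centers - x) ** 2, axis=1))
    error = y - a @ rho
    return a + nu * error * rho / np.sum(rho ** 2)

centers = np.linspace(0.0, 1.0, 5)[:, None]
x, y = np.array([0.3]), 0.84                         # one training pair
rho_x = np.exp(-5.0 * np.sum((centers - x) ** 2, axis=1))

# With nu = 1 the updated network reproduces the presented sample exactly.
a_full = projection_step(np.zeros(5), x, y, centers, beta=5.0, nu=1.0)
# With nu = 0.3 the error on that sample shrinks by the factor 1 - nu = 0.7.
a_part = projection_step(np.zeros(5), x, y, centers, beta=5.0, nu=0.3)
```

Unlike plain gradient descent, the fraction of the error removed per step is exactly `nu`, independent of how many basis functions respond to the input.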

Logistic map

The basic properties of radial basis functions can be illustrated with a simple mathematical map, the logistic map, which maps the unit interval onto itself. It can be used to generate a convenient prototype data stream. The logistic map can be used to explore function approximation, time series prediction, and control theory. The map originated from the field of population dynamics and became the prototype for chaotic time series. The map, in the fully chaotic regime, is given by

$$x(t+1) \overset{\text{def}}{=} f[x(t)] = 4 x(t) \left[ 1 - x(t) \right]$$

where t is a time index. The value of x at time t+1 is a parabolic function of x at time t. This equation represents the underlying geometry of the chaotic time series generated by the logistic map.

Generation of the time series from this equation is the forward problem. The examples here illustrate the inverse problem: identification of the underlying dynamics, or fundamental equation, of the logistic map from exemplars of the time series. The goal is to find an estimate

$$x(t+1) = f[x(t)] \approx \varphi(t) = \varphi[x(t)]$$

for f.
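The forward problem, generating the data stream, takes only a few lines. This sketch assumes the fully chaotic map $f(x) = 4x(1 - x)$; the initial value 0.3 and the function names are arbitrary choices.

```python
def logistic_map(x):
    """One step of the logistic map in the fully chaotic regime."""
    return 4.0 * x * (1.0 - x)

def generate_series(x0, length):
    """Return [x(0), x(1), ..., x(length - 1)]."""
    series = [x0]
    for _ in range(length - 1):
        series.append(logistic_map(series[-1]))
    return series

series = generate_series(0.3, 101)
# Exemplars for the inverse problem: inputs x(t) paired with targets x(t+1).
pairs = list(zip(series[:-1], series[1:]))
```

Each pair is one training exemplar, so a series of 101 values yields the 100 exemplars used to identify the map.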

Unnormalized radial basis functions

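The architecture is

$$\varphi(\mathbf{x}) \overset{\text{def}}{=} \sum_{i=1}^{N} a_i \, \rho(\|\mathbf{x} - \mathbf{c}_i\|)$$

where

$$\rho(\|\mathbf{x} - \mathbf{c}_i\|) = \exp\left[ -\beta_i \|\mathbf{x} - \mathbf{c}_i\|^2 \right] = \exp\left[ -\beta_i (x(t) - c_i)^2 \right].$$

Since the input is a scalar rather than a vector, the input dimension is one. We choose the number of basis functions as N=5 and the size of the training set to be 100 exemplars generated by the chaotic time series. The weight $\beta$ is taken to be a constant, the centers $c_i$ are five exemplars from the time series, and the weights $a_i$ are trained with projection operator training:

$$a_i(t+1) = a_i(t) + \nu \left[ x(t+1) - \varphi(x(t), \mathbf{w}) \right] \frac{\rho(\|x(t) - c_i\|)}{\sum_{k=1}^{N} \rho^2(\|x(t) - c_k\|)}$$

where the learning rate $\nu$ is held fixed during training.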
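The experiment can be sketched end to end in plain Python. The values `beta = 5.0` and `nu = 0.3`, the initial condition, the use of the first five series values as centers, and the use of the unnormalized projection operator update are all assumptions made for illustration; they are not taken from the text.

```python
import math

def logistic_map(x):
    return 4.0 * x * (1.0 - x)

def basis(x, centers, beta):
    """Gaussian basis values rho_i(x) for a scalar input."""
    return [math.exp(-beta * (x - c) ** 2) for c in centers]

def predict(x, a, centers, beta):
    return sum(ai * r for ai, r in zip(a, basis(x, centers, beta)))

def rms_error(pairs, a, centers, beta):
    sq = [(target - predict(x, a, centers, beta)) ** 2 for x, target in pairs]
    return math.sqrt(sum(sq) / len(sq))

# 100 exemplars (x(t), x(t+1)); x(0) = 0.3 is arbitrary.
series = [0.3]
for _ in range(100):
    series.append(logistic_map(series[-1]))
pairs = list(zip(series[:-1], series[1:]))

beta, nu = 5.0, 0.3        # assumed values
centers = series[:5]       # five exemplars used as centers (assumed choice)
a = [0.0] * 5              # N = 5 weights, initially zero
rms_before = rms_error(pairs, a, centers, beta)

for x, target in pairs:    # one pass of projection operator training
    rho = basis(x, centers, beta)
    error = target - sum(ai * r for ai, r in zip(a, rho))
    norm = sum(r * r for r in rho)
    a = [ai + nu * error * r / norm for ai, r in zip(a, rho)]

rms_after = rms_error(pairs, a, centers, beta)
```

Comparing `rms_after` with `rms_before` shows how much of the map a single pass recovers; the exact figure depends on the assumed width, learning rate, and centers.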

Normalized radial basis functions

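The normalized RBF architecture is

$$\varphi(\mathbf{x}) \overset{\text{def}}{=} \sum_{i=1}^{N} a_i \, u(\|\mathbf{x} - \mathbf{c}_i\|)$$

where

$$u(\|\mathbf{x} - \mathbf{c}_i\|) \overset{\text{def}}{=} \frac{\rho(\|\mathbf{x} - \mathbf{c}_i\|)}{\sum_{k=1}^{N} \rho(\|\mathbf{x} - \mathbf{c}_k\|)}.$$

Again:

$$\rho(\|\mathbf{x} - \mathbf{c}_i\|) = \exp\left[ -\beta \|\mathbf{x} - \mathbf{c}_i\|^2 \right] = \exp\left[ -\beta (x(t) - c_i)^2 \right].$$

Again, we choose the number of basis functions as five and the size of the training set to be 100 exemplars generated by the chaotic time series. The weight $\beta$ is taken to be a constant, the centers $c_i$ are five exemplars from the time series, and the weights $a_i$ are trained with projection operator training:

$$a_i(t+1) = a_i(t) + \nu \left[ x(t+1) - \varphi(x(t), \mathbf{w}) \right] \frac{u(\|x(t) - c_i\|)}{\sum_{k=1}^{N} u^2(\|x(t) - c_k\|)}$$

where the learning rate $\nu$ is held fixed during training.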

Time series prediction

Once the underlying geometry of the time series is estimated as in the previous examples, a prediction for the time series can be made by iteration. Writing $\varphi(t)$ for the network's estimate of $x(t+1)$:

$$x(1) \approx \varphi(0) = \varphi[x(0)]$$

$$x(t+1) \approx \varphi(t) = \varphi[\varphi(t-1)]$$

A comparison of the actual and estimated time series is displayed in the figure. The estimated time series starts out at time zero with exact knowledge of x(0). It then uses the estimate of the dynamics to update the time series estimate for several time steps.

Note that the estimate is accurate for only a few time steps. This is a general characteristic of chaotic time series and follows from their sensitive dependence on initial conditions: a small initial error is amplified with time. The average rate at which time series with nearly identical initial conditions diverge is measured by the Lyapunov exponent.
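The amplification of a small error can be seen without any network at all. The sketch below iterates the true logistic map from two nearly identical starting values, with the tiny perturbation standing in for a small modelling error; the starting value, the perturbation size `1e-9`, and the number of steps are arbitrary choices.

```python
def logistic_map(x):
    return 4.0 * x * (1.0 - x)

def iterate(x0, steps):
    """Return [x(0), x(1), ..., x(steps)] under the logistic map."""
    xs = [x0]
    for _ in range(steps):
        xs.append(logistic_map(xs[-1]))
    return xs

reference = iterate(0.3, 60)
perturbed = iterate(0.3 + 1e-9, 60)     # nearly identical initial condition

# Absolute difference between the two trajectories at every time step.
separation = [abs(p - r) for p, r in zip(perturbed, reference)]
```

The separation roughly doubles per step on average until it is comparable to the size of the interval, which is why an iterated prediction tracks the true series for only a limited number of steps.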

Control of a chaotic time series

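We assume the output of the logistic map can be manipulated through a control parameter $c[x(t), t]$ such that

$$x(t+1) = 4 x(t) \left[ 1 - x(t) \right] + c[x(t), t].$$

The goal is to choose the control parameter in such a way as to drive the time series to a desired output $d(t)$. This can be done if we choose the control parameter to be

$$c[x(t), t] \overset{\text{def}}{=} -\varphi[x(t)] + d(t+1)$$

where

$$\varphi[x(t)] \approx f[x(t)] = x(t+1) - c[x(t), t]$$

is an approximation to the underlying natural dynamics of the system.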

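The learning algorithm is given by

$$a_i(t+1) = a_i(t) + \nu \, \varepsilon \, \frac{u(\|x(t) - c_i\|)}{\sum_{k=1}^{N} u^2(\|x(t) - c_k\|)}$$

where

$$\varepsilon \overset{\text{def}}{=} f[x(t)] - \varphi[x(t)] = x(t+1) - c[x(t), t] - \varphi[x(t)] = x(t+1) - d(t+1),$$

with $c[x(t), t]$ the control parameter, $d(t+1)$ the desired output, and $\nu$ the learning rate.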