
Lecture 10 linear prediction of speech

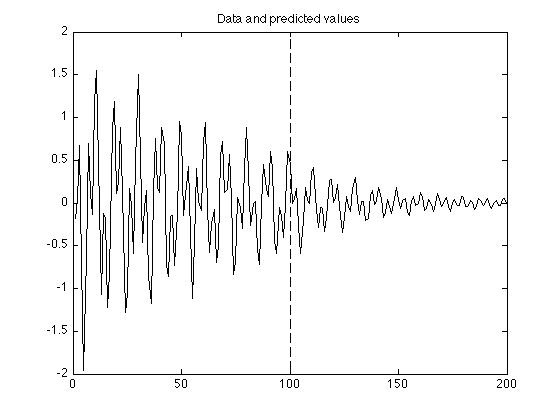

Linear prediction is a mathematical operation where future values of a discrete-time signal are estimated as a linear function of previous samples.

Contents

- Lecture 10 linear prediction of speech

- Machine learning linear prediction

- The prediction model

- Estimating the parameters

- References

In digital signal processing, linear prediction is often called linear predictive coding (LPC) and can thus be viewed as a subset of filter theory. In system analysis (a subfield of mathematics), linear prediction can be viewed as a part of mathematical modelling or optimization.

Machine learning linear prediction

The prediction model

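The most common representation is

$$\hat{x}(n) = \sum_{i=1}^{p} a_i \, x(n-i)$$

where $\hat{x}(n)$ is the predicted signal value, $x(n-i)$ are the previous observed values, with $p \le n$, and $a_i$ are the predictor coefficients. The error generated by this estimate is

$$e(n) = x(n) - \hat{x}(n)$$

where $x(n)$ is the true signal value.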

These equations are valid for all types of (one-dimensional) linear prediction. The differences are found in the way the predictor coefficients $a_i$ are chosen.
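As a minimal sketch of the prediction model, the following computes the one-step prediction and the prediction error for a given coefficient set. The function names `predict` and `prediction_error`, the ramp signal, and the coefficient values are illustrative assumptions, not part of the lecture.

```python
def predict(x, a, n):
    """Return x_hat(n) = sum_{i=1}^{p} a_i * x(n - i), with a[i-1] holding a_i."""
    p = len(a)
    if n < p:
        raise ValueError("need at least p previous samples")
    return sum(a[i - 1] * x[n - i] for i in range(1, p + 1))


def prediction_error(x, a):
    """Return e(n) = x(n) - x_hat(n) for every n that has p previous samples."""
    p = len(a)
    return [x[n] - predict(x, a, n) for n in range(p, len(x))]


# Hypothetical example: a ramp satisfies x(n) = 2 x(n-1) - x(n-2),
# so a second-order predictor with a_1 = 2, a_2 = -1 leaves no error.
ramp = [1.0, 2.0, 3.0, 4.0, 5.0]
coeffs = [2.0, -1.0]
errors = prediction_error(ramp, coeffs)
```

For real speech the error is not zero; how small it can be made depends on how the coefficients are chosen.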

For multi-dimensional signals the error metric is often defined as

$$e(n) = \|x(n) - \hat{x}(n)\|$$

where $\|\cdot\|$ is a suitably chosen vector norm.

Estimating the parameters

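The most common choice in optimization of the parameters $a_i$ is the root mean square criterion, which is also called the autocorrelation criterion. In this method we minimize the expected value of the squared error $E[e^2(n)]$, which yields the equation

$$\sum_{i=1}^{p} a_i R(j-i) = R(j),$$

for 1 ≤ j ≤ p, where R is the autocorrelation of the signal $x(n)$, defined as

$$R(i) = E\{x(n)\, x(n-i)\},$$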

and E is the expected value. In the multi-dimensional case this corresponds to minimizing the L2 norm.
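The step from the squared-error criterion to these equations can be sketched as follows. The derivation assumes that the signal is wide-sense stationary, so that $E\{x(n-i)\,x(n-j)\}$ depends only on the lag $j-i$; that assumption is made here for illustration and is not stated explicitly in the text above.

```latex
\begin{aligned}
\frac{\partial}{\partial a_j} E\{e^2(n)\}
  &= -2\, E\{e(n)\, x(n-j)\} = 0, \qquad 1 \le j \le p, \\
E\Big\{\Big(x(n) - \sum_{i=1}^{p} a_i\, x(n-i)\Big)\, x(n-j)\Big\}
  &= R(j) - \sum_{i=1}^{p} a_i\, R(j-i) = 0 .
\end{aligned}
```

In words, at the optimum the prediction error is uncorrelated with each of the p previous samples used by the predictor.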

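The above equations are called the normal equations or Yule-Walker equations. In matrix form the equations can be equivalently written as

$$\mathbf{R}\mathbf{a} = \mathbf{r},$$

where the autocorrelation matrix $\mathbf{R}$ is a symmetric $p \times p$ Toeplitz matrix with elements $r_{ij} = R(i-j)$, $0 \le i, j < p$, the vector $\mathbf{r}$ is the autocorrelation vector with elements $r_j = R(j)$, $0 < j \le p$, and $\mathbf{a} = [a_1, a_2, \ldots, a_p]^{\mathrm{T}}$ is the parameter vector.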
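The matrix form can be illustrated with a small pure-Python sketch that estimates the autocorrelation from samples, builds the Toeplitz system, and solves it by plain Gaussian elimination. The helper names `autocorr` and `solve_normal_equations` are hypothetical, and the biased sample average used in place of the expected value is an assumption of this sketch.

```python
def autocorr(x, max_lag):
    """Biased sample estimate of R(k) = E{x(n) x(n-k)} for k = 0..max_lag."""
    n = len(x)
    return [sum(x[t] * x[t - k] for t in range(k, n)) / n
            for k in range(max_lag + 1)]


def solve_normal_equations(R, p):
    """Solve sum_i a_i R(j - i) = R(j), j = 1..p, by Gaussian elimination."""
    # Augmented matrix [R | r]; R is Toeplitz with entries R(|i - j|).
    M = [[R[abs(i - j)] for j in range(p)] + [R[i + 1]] for i in range(p)]
    for col in range(p):
        # Partial pivoting for numerical stability.
        pivot = max(range(col, p), key=lambda row: abs(M[row][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for row in range(col + 1, p):
            f = M[row][col] / M[col][col]
            for k in range(col, p + 1):
                M[row][k] -= f * M[col][k]
    # Back substitution.
    a = [0.0] * p
    for i in reversed(range(p)):
        s = sum(M[i][j] * a[j] for j in range(i + 1, p))
        a[i] = (M[i][p] - s) / M[i][i]
    return a


# Hypothetical autocorrelation values R(0), R(1), R(2) of the form 0.5**k.
a = solve_normal_equations([1.0, 0.5, 0.25], 2)
```

With these illustrative values the second coefficient vanishes, because an autocorrelation of the form 0.5**k is fully explained by a first-order predictor.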

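Another, more general, approach is to minimize the sum of squares of the errors defined in the form

$$e(n) = x(n) - \hat{x}(n) = x(n) - \sum_{i=1}^{p} a_i\, x(n-i) = -\sum_{i=0}^{p} a_i\, x(n-i)$$

where the optimisation problem searching over all $a_i$ must now be constrained with $a_0 = -1$.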

On the other hand, if the mean square prediction error is constrained to be unity and the prediction error equation is included on top of the normal equations, the augmented set of equations is obtained as

$$\mathbf{R}\mathbf{a} = [1, 0, \ldots, 0]^{\mathrm{T}}$$

where the index i ranges from 0 to p, and R is a (p + 1) × (p + 1) matrix.

Specifying the parameters of a linear predictor is a broad topic, and a large number of other approaches have been proposed. Nevertheless, the autocorrelation method is the most common; it is used, for example, for speech coding in the GSM standard.

Solving the matrix equation Ra = r is computationally relatively expensive. Gaussian elimination is probably the oldest method, but it requires on the order of p³ operations and does not exploit the symmetric Toeplitz structure of R. A faster algorithm is the Levinson recursion, proposed by Norman Levinson in 1947, which calculates the solution recursively in on the order of p² operations. In particular, the autocorrelation equations above may be solved more efficiently by the Durbin algorithm.
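The recursion can be sketched in a few lines of pure Python. This is one common textbook form of the Levinson-Durbin recursion, written for the sign convention used above (prediction as a sum of a_i x(n - i)); the function name `levinson_durbin` and the example autocorrelation values are assumptions made for illustration.

```python
def levinson_durbin(R, p):
    """Solve the normal equations for a_1..a_p given R(0)..R(p).

    Returns (a, err), where a[i] holds a_{i+1} and err is the mean square
    prediction error of the order-p predictor.
    """
    a = []          # coefficients of the current-order predictor
    err = R[0]      # order-0 error is the signal power R(0)
    for m in range(1, p + 1):
        # Reflection coefficient for order m, from the order m-1 solution.
        acc = R[m] - sum(a[i] * R[m - 1 - i] for i in range(m - 1))
        k = acc / err
        # Update a_1..a_{m-1}, then append the new coefficient a_m = k.
        a = [a[i] - k * a[m - 2 - i] for i in range(m - 1)] + [k]
        err *= (1.0 - k * k)
    return a, err


# Hypothetical autocorrelation values R(0), R(1), R(2).
R_example = [1.0, 0.8, 0.5]
a_ld, err_ld = levinson_durbin(R_example, 2)
```

Each pass reuses the order m - 1 solution to obtain the order m solution, which is where the saving over general-purpose elimination comes from.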

In 1986, Philippe Delsarte and Y.V. Genin proposed an improvement to this algorithm called the split Levinson recursion, which requires about half the number of multiplications and divisions. It uses a special symmetrical property of parameter vectors on subsequent recursion levels. That is, calculations for the optimal predictor containing p terms make use of similar calculations for the optimal predictor containing p − 1 terms.

Another way of identifying model parameters is to iteratively calculate state estimates using Kalman filters and obtain maximum likelihood estimates within expectation–maximization algorithms.