
The Hammersley–Clifford theorem is a result in probability theory, mathematical statistics and statistical mechanics that gives necessary and sufficient conditions under which a strictly positive probability distribution can be represented as a Markov network (also known as a Markov random field). It is the fundamental theorem of random fields. It states that a probability distribution with a strictly positive mass function or density satisfies one of the Markov properties with respect to an undirected graph G if and only if it is a Gibbs random field, that is, its density can be factorized over the cliques (or complete subgraphs) of the graph.

The study of the relationship between Markov and Gibbs random fields was initiated by Roland Dobrushin and Frank Spitzer in the context of statistical mechanics. The theorem is named after John Hammersley and Peter Clifford, who proved the equivalence in an unpublished paper in 1971. Simpler proofs using the inclusion–exclusion principle were given independently by Geoffrey Grimmett, Preston and Sherman in 1973, with a further proof by Julian Besag in 1974.

Proof Outline

It is a trivial matter to show that a Gibbs random field satisfies every Markov property. As an example of this fact, see the following:

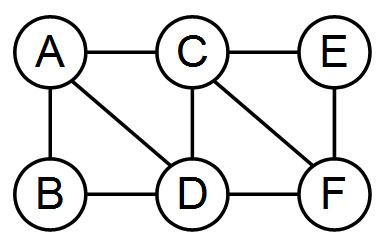

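In the image to the right, a Gibbs random field over the provided graph has the form

$$\Pr(A,B,C,D,E,F) \propto f_1(A,B,D)\, f_2(A,C,D)\, f_3(C,D,F)\, f_4(C,E,F).$$

If the variables $C$ and $D$ are fixed, the global Markov property requires that $A, B \perp E, F \mid C, D$, since $C, D$ forms a barrier between $A, B$ and $E, F$.

With $C$ and $D$ held constant at the values $c$ and $d$,

$$\Pr(A,B,E,F \mid C=c, D=d) \propto [f_1(A,B,d)\, f_2(A,c,d)] \cdot [f_3(c,d,F)\, f_4(c,E,F)] = g_1(A,B)\, g_2(E,F),$$

where $g_1(A,B) = f_1(A,B,d)\, f_2(A,c,d)$ and $g_2(E,F) = f_3(c,d,F)\, f_4(c,E,F)$. The conditional distribution therefore splits into a factor in $A, B$ and a factor in $E, F$, which implies $A, B \perp E, F \mid C, D$.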
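The claim that a Gibbs random field satisfies the Markov properties can be checked numerically on a small case. The following is a minimal sketch, assuming six binary variables A to F and factor scopes {A,B,D}, {A,C,D}, {C,D,F}, {C,E,F}; the scopes, the random potential tables, and the helper names `gibbs_joint` and `max_ci_violation` are all assumptions made for illustration. It builds the normalized joint table and measures how far the conditional distribution given C and D is from a product of an (A,B) factor and an (E,F) factor.

```python
import itertools
import numpy as np

rng = np.random.default_rng(0)
names = "ABCDEF"
idx = {name: i for i, name in enumerate(names)}

# Assumed clique scopes (illustrative, not taken from the figure itself).
scopes = ["ABD", "ACD", "CDF", "CEF"]
# Strictly positive random potential tables over binary variables.
tables = [rng.uniform(0.5, 2.0, size=(2,) * len(s)) for s in scopes]


def gibbs_joint(scopes, tables):
    """Return the normalized joint table p[a, b, c, d, e, f]."""
    p = np.zeros((2,) * len(names))
    for x in itertools.product((0, 1), repeat=len(names)):
        value = 1.0
        for scope, table in zip(scopes, tables):
            value *= table[tuple(x[idx[v]] for v in scope)]
        p[x] = value
    return p / p.sum()


def max_ci_violation(p):
    """Largest |P(a,b,e,f | c,d) - P(a,b | c,d) P(e,f | c,d)| over all values."""
    worst = 0.0
    for c, d in itertools.product((0, 1), repeat=2):
        cond = p[:, :, c, d, :, :]        # remaining axes: A, B, E, F
        cond = cond / cond.sum()          # condition on C=c, D=d
        p_ab = cond.sum(axis=(2, 3))      # marginal of (A, B)
        p_ef = cond.sum(axis=(0, 1))      # marginal of (E, F)
        product = p_ab[:, :, None, None] * p_ef[None, None, :, :]
        worst = max(worst, float(np.abs(cond - product).max()))
    return worst


joint = gibbs_joint(scopes, tables)
violation = max_ci_violation(joint)
print(f"max deviation from conditional independence: {violation:.2e}")
```

The deviation should be zero up to floating-point rounding, whatever positive tables are used, because fixing C and D leaves one group of factors depending only on A, B and another depending only on E, F.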

To establish that every positive probability distribution that satisfies the local Markov property is also a Gibbs random field, the following lemma, which provides a means for combining different factorizations, needs to be proven:

Lemma 1

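Let $U$ denote the set of all random variables under consideration, and let $\Theta, \Phi_1, \Phi_2, \dots, \Phi_n \subseteq U$ and $\Psi_1, \Psi_2, \dots, \Psi_m \subseteq U$ denote arbitrary sets of variables. (Here, given an arbitrary set of variables $X$, $X$ will also denote an arbitrary assignment to the variables from $X$.)

If

$$\Pr(U) = f(\Theta) \prod_{i=1}^{n} g_i(\Phi_i) = \prod_{j=1}^{m} h_j(\Psi_j)$$

for functions $f, g_1, g_2, \dots, g_n$ and $h_1, h_2, \dots, h_m$, then there exist functions $h'_1, h'_2, \dots, h'_m$ and $g'_1, g'_2, \dots, g'_n$ such that

$$\Pr(U) = \left( \prod_{j=1}^{m} h'_j(\Theta \cap \Psi_j) \right) \left( \prod_{i=1}^{n} g'_i(\Phi_i) \right).$$

In other words, $\prod_{j=1}^{m} h_j(\Psi_j)$ provides a template for further factorization of $f(\Theta)$.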

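Lemma 1 provides a means of combining two different factorizations of $\Pr(U)$, the joint distribution of the set $U$ of all variables. The local Markov property implies that for any random variable $x \in U$ there exist factors $f_x$ and $f_{-x}$ such that

$$\Pr(U) = f_x(x, \partial x)\, f_{-x}(U \setminus \{x\}),$$

where $\partial x$ denotes the neighbors of node $x$. Applying Lemma 1 repeatedly eventually factors $\Pr(U)$ into a product of clique potentials.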
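The conclusion of this direction, that a positive distribution with the Markov property factorizes over cliques, can be illustrated numerically. The sketch below does not apply Lemma 1 repeatedly; it swaps in the inclusion-exclusion (Möbius inversion) construction used in the simpler proofs mentioned earlier. It assumes binary variables on a hypothetical 4-cycle graph, with reference value 0 for every variable, and the names `restrict`, `potential` and `is_clique` are illustrative. For convenience the test distribution is generated from edge potentials, but the potentials are then recovered from the joint table alone.

```python
import itertools
import numpy as np

n = 4
edges = [(0, 1), (1, 2), (2, 3), (3, 0)]  # hypothetical 4-cycle graph
edge_set = {frozenset(e) for e in edges}

# Build a strictly positive joint table that is Markov w.r.t. the graph.
rng = np.random.default_rng(1)
edge_tables = {e: rng.uniform(0.5, 2.0, size=(2, 2)) for e in edges}
states = list(itertools.product((0, 1), repeat=n))
p = np.zeros((2,) * n)
for x in states:
    p[x] = np.prod([edge_tables[(i, j)][x[i], x[j]] for i, j in edges])
p /= p.sum()
log_p = np.log(p)


def subsets(items):
    """Yield every subset of `items` as a tuple."""
    items = tuple(items)
    for r in range(len(items) + 1):
        yield from itertools.combinations(items, r)


def restrict(x, keep):
    """Copy x on the coordinates in `keep`; set all others to the reference 0."""
    return tuple(x[i] if i in keep else 0 for i in range(n))


def potential(S, x):
    """phi_S(x) = sum over T subset of S of (-1)^{|S|-|T|} log p(x_T, 0 elsewhere)."""
    return sum(
        (-1) ** (len(S) - len(T)) * log_p[restrict(x, T)] for T in subsets(S)
    )


def is_clique(S):
    """True if every pair of vertices in S is joined by an edge."""
    return all(frozenset(pair) in edge_set for pair in itertools.combinations(S, 2))


# Potentials on non-cliques should vanish identically.
non_clique_max = max(
    abs(potential(S, x))
    for S in subsets(range(n)) if not is_clique(S)
    for x in states
)
# Summing the clique potentials should reproduce log p exactly.
recon_err = max(
    abs(sum(potential(S, x) for S in subsets(range(n)) if is_clique(S)) - log_p[x])
    for x in states
)
print(f"largest non-clique potential: {non_clique_max:.2e}")
print(f"reconstruction error over cliques: {recon_err:.2e}")
```

Both printed quantities should be zero up to rounding. The empty set counts as a clique here, and its potential is the constant log p(0, ..., 0), which plays the role of the normalizing term.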

End of Proof