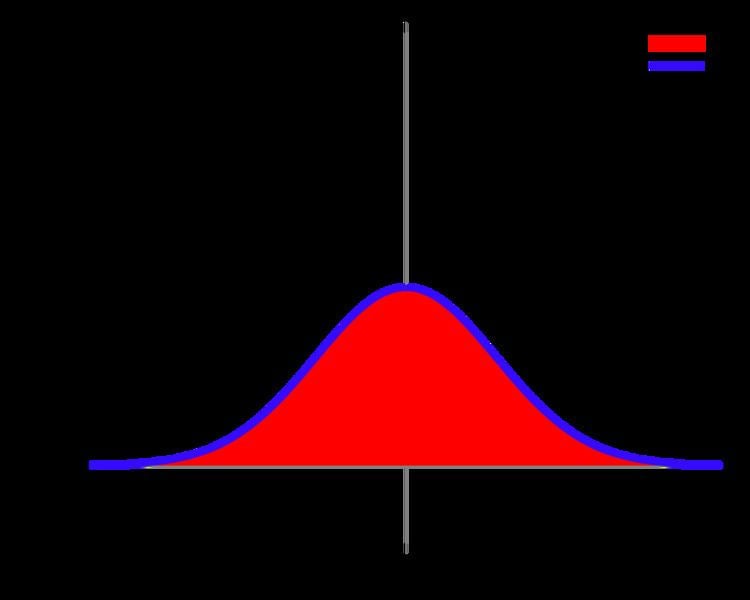

The Gaussian integral, also known as the Euler–Poisson integral, is the integral of the Gaussian function $e^{-x^2}$ over the entire real line. It is named after the German mathematician and physicist Carl Friedrich Gauss. The integral is:

$$\int_{-\infty}^{\infty} e^{-x^2}\,dx = \sqrt{\pi}.$$

This integral has a wide range of applications. For example, with a slight change of variables it is used to compute the normalizing constant of the normal distribution. The same integral with finite limits is closely related both to the error function and to the cumulative distribution function of the normal distribution. In physics this type of integral appears frequently: in quantum mechanics it is used to find the probability density of the ground state of the harmonic oscillator, and in the path integral formulation it is used to find the propagator of the harmonic oscillator.

Although no elementary function exists for the error function, as can be proven by the Risch algorithm, the Gaussian integral can be solved analytically through the methods of multivariable calculus. That is, there is no elementary indefinite integral for

$$\int e^{-x^2}\,dx,$$

but the definite integral

$$\int_{-\infty}^{\infty} e^{-x^2}\,dx$$

can be evaluated.
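The link between the finite-limit integral, the error function, and the normal cumulative distribution function can be illustrated numerically. The following is a minimal sketch using only Python's standard `math` module; the function names `truncated_gaussian_integral` and `normal_cdf` are chosen here for illustration and do not come from the text.

```python
import math

def truncated_gaussian_integral(a: float) -> float:
    """Integral of exp(-x**2) over [-a, a], expressed through erf."""
    # erf(a) = (2 / sqrt(pi)) * integral of exp(-t**2) for t in [0, a],
    # so the symmetric integral over [-a, a] equals sqrt(pi) * erf(a).
    return math.sqrt(math.pi) * math.erf(a)

def normal_cdf(x: float) -> float:
    """Standard normal cumulative distribution function via erf."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

# The truncated integral approaches sqrt(pi) as the limits grow.
for a in (0.5, 1.0, 2.0, 4.0):
    print(a, truncated_gaussian_integral(a))
print("sqrt(pi) =", math.sqrt(math.pi))
```

The printed values increase towards $\sqrt{\pi}$ as the limit `a` grows, which is the numerical counterpart of the statement that the definite integral over the whole real line can be evaluated even though no elementary antiderivative exists.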

The Gaussian integral is encountered very often in physics, and numerous generalizations of it appear in quantum field theory.

By polar coordinates

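A standard way to compute the Gaussian integral, the idea of which goes back to Poisson, is to make use of the property that:

$$\left(\int_{-\infty}^{\infty} e^{-x^2}\,dx\right)^2 = \int_{-\infty}^{\infty} e^{-x^2}\,dx \int_{-\infty}^{\infty} e^{-y^2}\,dy = \int_{-\infty}^{\infty}\int_{-\infty}^{\infty} e^{-(x^2+y^2)}\,dx\,dy.$$

Consider the function $e^{-(x^2+y^2)} = e^{-r^2}$ on the plane $\mathbb{R}^2$, and compute its integral in two ways:

- on the one hand, by double integration in the Cartesian coordinate system, its integral is a square: $\left(\int_{-\infty}^{\infty} e^{-x^2}\,dx\right)^2$;

- on the other hand, by shell integration (a case of double integration in polar coordinates), its integral is computed to be $\pi$.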

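Comparing these two computations yields the integral, though one should take care about the improper integrals involved. The polar computation is

$$\iint_{\mathbb{R}^2} e^{-(x^2+y^2)}\,dx\,dy = \int_0^{2\pi}\int_0^{\infty} e^{-r^2}\,r\,dr\,d\theta = 2\pi\int_0^{\infty} r e^{-r^2}\,dr = 2\pi\int_{-\infty}^{0}\tfrac{1}{2}e^{s}\,ds = \pi\,\Big[e^{s}\Big]_{-\infty}^{0} = \pi,$$

where the factor of $r$ comes from the transform to polar coordinates ($r\,dr\,d\theta$ is the standard measure on the plane, expressed in polar coordinates; see Wikibooks: Calculus/Polar Integration#Generalization), and the substitution involves taking $s = -r^2$, so $ds = -2r\,dr$.

Combining these yields

$$\left(\int_{-\infty}^{\infty} e^{-x^2}\,dx\right)^2 = \pi,$$

so

$$\int_{-\infty}^{\infty} e^{-x^2}\,dx = \sqrt{\pi}.$$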
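The two ways of computing the plane integral can be compared numerically. The sketch below is illustrative only: the helper `simpson`, the cutoff `R`, and the variable names are assumptions introduced here, and the infinite ranges are truncated at `R`, where $e^{-R^2}$ is negligible in double precision.

```python
import math

def simpson(f, lo, hi, n=2000):
    """Composite Simpson's rule on [lo, hi] with n (even) subintervals."""
    if n % 2:
        n += 1
    h = (hi - lo) / n
    total = f(lo) + f(hi)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(lo + i * h)
    return total * h / 3

R = 8.0  # assumed cutoff standing in for infinity

# Polar route: the angular integral contributes 2*pi,
# the radial integral of r * exp(-r**2) contributes 1/2.
radial = simpson(lambda r: r * math.exp(-r * r), 0.0, R)
plane_integral = 2 * math.pi * radial

# Cartesian route: the plane integral is the square of the line integral.
line_integral = simpson(lambda x: math.exp(-x * x), -R, R)

print(plane_integral, line_integral ** 2, math.pi)
```

Both routes give values that agree with $\pi$ to within the quadrature error, so the line integral itself agrees with $\sqrt{\pi}$.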

Careful proof

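To justify the improper double integrals and equating the two expressions, we begin with an approximating function:

$$I(a) = \int_{-a}^{a} e^{-x^2}\,dx.$$

If the integral $\int_{-\infty}^{\infty} e^{-x^2}\,dx$ were absolutely convergent, we would have that its Cauchy principal value, that is, the limit $\lim_{a\to\infty} I(a)$, would coincide with $\int_{-\infty}^{\infty} e^{-x^2}\,dx$.

To see that this is the case, consider that

$$\int_{-\infty}^{\infty} \left|e^{-x^2}\right| dx < \int_{-\infty}^{-1} -x e^{-x^2}\,dx + \int_{-1}^{1} e^{-x^2}\,dx + \int_{1}^{\infty} x e^{-x^2}\,dx < \infty,$$

so we can compute $\int_{-\infty}^{\infty} e^{-x^2}\,dx$ by just taking the limit $\lim_{a\to\infty} I(a)$.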

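Taking the square of $I(a)$ yields

$$I(a)^2 = \left(\int_{-a}^{a} e^{-x^2}\,dx\right)\left(\int_{-a}^{a} e^{-y^2}\,dy\right) = \int_{-a}^{a}\left(\int_{-a}^{a} e^{-y^2}\,dy\right)e^{-x^2}\,dx = \int_{-a}^{a}\int_{-a}^{a} e^{-(x^2+y^2)}\,dy\,dx.$$

Using Fubini's theorem, the above double integral can be seen as an area integral

$$\iint_{[-a,a]\times[-a,a]} e^{-(x^2+y^2)}\,d(x,y),$$

taken over a square with vertices {(−a, a), (a, a), (a, −a), (−a, −a)} on the xy-plane.

Since the exponential function is greater than 0 for all real numbers, it then follows that the integral taken over the square's incircle must be less than $I(a)^2$, and similarly the integral taken over the square's circumcircle must be greater than $I(a)^2$. The integrals over the two disks can be computed by switching from Cartesian to polar coordinates, $x = r\cos\theta$, $y = r\sin\theta$, $d(x,y) = r\,d(r,\theta)$:

$$\int_0^{2\pi}\int_0^{a} r e^{-r^2}\,dr\,d\theta < I(a)^2 < \int_0^{2\pi}\int_0^{a\sqrt{2}} r e^{-r^2}\,dr\,d\theta.$$

(See the conversion to polar coordinates from Cartesian coordinates for help with the polar transformation.)

Integrating,

$$\pi\left(1 - e^{-a^2}\right) < I(a)^2 < \pi\left(1 - e^{-2a^2}\right).$$

By the squeeze theorem, letting $a \to \infty$ gives the Gaussian integral

$$\int_{-\infty}^{\infty} e^{-x^2}\,dx = \sqrt{\pi}.$$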
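The squeeze argument can be checked for concrete values of $a$. This is a small sketch, assuming the incircle and circumcircle bounds $\pi(1 - e^{-a^2}) < I(a)^2 < \pi(1 - e^{-2a^2})$ and using the identity $I(a) = \sqrt{\pi}\,\operatorname{erf}(a)$; the function name `squeeze_bounds` is hypothetical.

```python
import math

def squeeze_bounds(a: float):
    """Return (lower, I(a)**2, upper) for the square of side 2a.

    lower: integral over the incircle of radius a
    upper: integral over the circumcircle of radius a * sqrt(2)
    """
    lower = math.pi * (1.0 - math.exp(-a * a))
    upper = math.pi * (1.0 - math.exp(-2.0 * a * a))
    # I(a) = sqrt(pi) * erf(a), hence I(a)**2 = pi * erf(a)**2.
    i_squared = math.pi * math.erf(a) ** 2
    return lower, i_squared, upper

for a in (0.1, 0.5, 1.0, 2.0, 3.0):
    lower, i_squared, upper = squeeze_bounds(a)
    print(a, lower, i_squared, upper)
```

For each `a` the middle value lies strictly between the two bounds, and all three approach $\pi$ as `a` grows. For very large `a` the three values become indistinguishable in floating point, so the strict inequalities are only observable for moderate `a`.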

By Cartesian coordinates

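A different technique, which goes back to Laplace (1812), is the following. Let

$$y = xs, \qquad dy = x\,ds.$$

Since the limits on $s$ as $y \to \pm\infty$ depend on the sign of $x$, it simplifies the calculation to use the fact that $e^{-x^2}$ is an even function, and, therefore, the integral over all real numbers is just twice the integral from zero to infinity. That is,

$$\int_{-\infty}^{\infty} e^{-x^2}\,dx = 2\int_0^{\infty} e^{-x^2}\,dx.$$

Thus, over the range of integration, $x \ge 0$, and the variables $y$ and $s$ have the same limits. Writing $I$ for the Gaussian integral, this yields:

$$I^2 = 4\int_0^{\infty}\int_0^{\infty} e^{-(x^2+y^2)}\,dy\,dx = 4\int_0^{\infty}\left(\int_0^{\infty} e^{-x^2(1+s^2)}\,x\,ds\right)dx.$$

Using Fubini's theorem to switch the order of integration,

$$I^2 = 4\int_0^{\infty}\left(\int_0^{\infty} e^{-x^2(1+s^2)}\,x\,dx\right)ds = 4\int_0^{\infty}\left[\frac{e^{-x^2(1+s^2)}}{-2(1+s^2)}\right]_{x=0}^{x=\infty} ds = 2\int_0^{\infty}\frac{ds}{1+s^2} = 2\arctan(s)\Big|_0^{\infty} = \pi.$$

Therefore, $I = \sqrt{\pi}$, as expected.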
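The key step in Laplace's method is that, after the substitution $y = xs$, the inner integral over $x$ has the closed form $1/(2(1+s^2))$, which leaves an arctangent integral in $s$. The sketch below checks that step numerically; the helper names `simpson` and `inner_integral` and the cutoff `x_max` are assumptions made for this illustration.

```python
import math

def simpson(f, lo, hi, n=2000):
    """Composite Simpson's rule on [lo, hi] with n (even) subintervals."""
    if n % 2:
        n += 1
    h = (hi - lo) / n
    total = f(lo) + f(hi)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(lo + i * h)
    return total * h / 3

def inner_integral(s: float, x_max: float = 10.0) -> float:
    """Integral over 0 <= x <= x_max of x * exp(-x**2 * (1 + s**2))."""
    return simpson(lambda x: x * math.exp(-x * x * (1.0 + s * s)), 0.0, x_max)

# Compare the quadrature with the closed form 1 / (2 * (1 + s**2)).
for s in (0.0, 0.5, 2.0):
    print(s, inner_integral(s), 1.0 / (2.0 * (1.0 + s * s)))

# Outer integral: 4 * (1/2) * [arctan(s)] from 0 to infinity.
i_squared = 2.0 * (math.atan(math.inf) - math.atan(0.0))
print(i_squared, math.pi)
```

The outer integral is evaluated in closed form rather than numerically, because the integrand $1/(1+s^2)$ decays too slowly for a simple truncated quadrature to be accurate.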

Relation to the gamma function

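The integrand is an even function,

$$\int_{-\infty}^{\infty} e^{-x^2}\,dx = 2\int_0^{\infty} e^{-x^2}\,dx.$$

Thus, after the change of variable $x = \sqrt{t}$, this turns into the Euler integral

$$2\int_0^{\infty} e^{-x^2}\,dx = 2\int_0^{\infty} \frac{1}{2}\,e^{-t}\,t^{-\frac{1}{2}}\,dt = \Gamma\!\left(\frac{1}{2}\right) = \sqrt{\pi},$$

where $\Gamma$ is the gamma function. This shows why the factorial of a half-integer is a rational multiple of $\sqrt{\pi}$.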
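The claim about half-integers can be made concrete with the recurrence $\Gamma(z+1) = z\,\Gamma(z)$ starting from $\Gamma(1/2) = \sqrt{\pi}$. The following sketch computes the rational coefficient exactly with `fractions.Fraction` and compares it with `math.gamma`; the function name `half_integer_gamma_coefficient` is hypothetical.

```python
import math
from fractions import Fraction

def half_integer_gamma_coefficient(n: int) -> Fraction:
    """Rational q such that Gamma(n + 1/2) = q * sqrt(pi), for integer n >= 0."""
    # Gamma(n + 1/2) = (n - 1/2) * (n - 3/2) * ... * (1/2) * Gamma(1/2).
    q = Fraction(1)
    for k in range(n):
        q *= Fraction(2 * k + 1, 2)
    return q

for n in range(5):
    q = half_integer_gamma_coefficient(n)
    # Exact rational coefficient, its floating-point value times sqrt(pi),
    # and the library value of Gamma(n + 1/2) for comparison.
    print(n, q, float(q) * math.sqrt(math.pi), math.gamma(n + 0.5))
```

Since $(n - \tfrac{1}{2})! = \Gamma(n + \tfrac{1}{2})$, the coefficient returned for `n` is the rational multiple of $\sqrt{\pi}$ referred to above.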

The integral of a Gaussian function

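The integral of an arbitrary Gaussian function is, for $a > 0$,

$$\int_{-\infty}^{\infty} e^{-a(x+b)^2}\,dx = \sqrt{\frac{\pi}{a}}.$$

An alternative form is

$$\int_{-\infty}^{\infty} e^{-ax^2+bx+c}\,dx = \sqrt{\frac{\pi}{a}}\;e^{\frac{b^2}{4a}+c}.$$

This form is useful for calculating expectations of continuous probability distributions related to the normal distribution; see, for example, the expectation of the log-normal distribution.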
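The alternative form $\int e^{-ax^2+bx+c}\,dx = \sqrt{\pi/a}\,e^{b^2/(4a)+c}$ (valid for $a > 0$) follows from completing the square, and it can be checked against direct quadrature. The sketch below uses arbitrary illustrative coefficients; `simpson` and `gaussian_integral_closed_form` are names introduced here, and the integration range is truncated at an assumed cutoff.

```python
import math

def simpson(f, lo, hi, n=4000):
    """Composite Simpson's rule on [lo, hi] with n (even) subintervals."""
    if n % 2:
        n += 1
    h = (hi - lo) / n
    total = f(lo) + f(hi)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(lo + i * h)
    return total * h / 3

def gaussian_integral_closed_form(a: float, b: float, c: float) -> float:
    """sqrt(pi / a) * exp(b**2 / (4a) + c); requires a > 0."""
    if a <= 0:
        raise ValueError("the integral converges only for a > 0")
    return math.sqrt(math.pi / a) * math.exp(b * b / (4.0 * a) + c)

# Illustrative coefficients (not taken from the text).
a, b, c = 1.5, 0.8, -0.3
numeric = simpson(lambda x: math.exp(-a * x * x + b * x + c), -12.0, 12.0)
exact = gaussian_integral_closed_form(a, b, c)
print(numeric, exact)
```

Setting `b = 0` and `c = 0` recovers $\sqrt{\pi/a}$, the value of the shifted form $\int e^{-a(x+b)^2}\,dx$, which does not depend on the shift.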

n-dimensional and functional generalization

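Suppose $A$ is a symmetric positive-definite (hence invertible) $n \times n$ precision matrix, which is the matrix inverse of the covariance matrix. Then,

$$\int_{\mathbb{R}^n} \exp\left(-\frac{1}{2}\sum_{i,j=1}^{n} A_{ij}x_i x_j\right) d^n x = \int_{\mathbb{R}^n} \exp\left(-\frac{1}{2}x^{\mathsf{T}} A x\right) d^n x = \sqrt{\frac{(2\pi)^n}{\det A}} = \sqrt{\det\left(2\pi A^{-1}\right)},$$

where the integral is understood to be over $\mathbb{R}^n$. This fact is applied in the study of the multivariate normal distribution.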
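For $n = 2$ the closed form $\sqrt{(2\pi)^n/\det A}$ can be compared with a brute-force grid sum. The sketch below is a rough numerical check, not a general implementation: the matrix `A` is an arbitrary example, the determinant is hard-coded for the 2×2 case, and the plane is truncated to an assumed square of half-width 8.

```python
import math

# Hypothetical 2x2 symmetric positive-definite matrix chosen for illustration.
A = [[2.0, 1.0],
     [1.0, 3.0]]

def closed_form(A):
    """sqrt((2*pi)**n / det A) for a 2x2 matrix (n = 2)."""
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    return math.sqrt((2.0 * math.pi) ** 2 / det)

def grid_integral(A, half_width=8.0, points=400):
    """Midpoint-rule estimate of the integral of exp(-x^T A x / 2) over a square."""
    h = 2.0 * half_width / points
    total = 0.0
    for i in range(points):
        x = -half_width + (i + 0.5) * h
        for j in range(points):
            y = -half_width + (j + 0.5) * h
            # x^T A x written out for a symmetric 2x2 matrix.
            quad = A[0][0] * x * x + 2.0 * A[0][1] * x * y + A[1][1] * y * y
            total += math.exp(-0.5 * quad)
    return total * h * h

exact = closed_form(A)
estimate = grid_integral(A)
print(exact, estimate)
```

A grid sum scales exponentially with the dimension, which is one reason the closed form matters in practice for the multivariate normal distribution.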

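Also,

$$\int x_{k_1}\cdots x_{k_{2N}}\,\exp\left(-\frac{1}{2}\sum_{i,j=1}^{n} A_{ij}x_i x_j\right) d^n x = \sqrt{\frac{(2\pi)^n}{\det A}}\;\frac{1}{2^N N!}\sum_{\sigma\in S_{2N}} (A^{-1})_{k_{\sigma(1)}k_{\sigma(2)}}\cdots(A^{-1})_{k_{\sigma(2N-1)}k_{\sigma(2N)}},$$

where $\sigma$ is a permutation of $\{1, \ldots, 2N\}$ and the extra factor on the right-hand side is the sum over all combinatorial pairings of $\{1, \ldots, 2N\}$ of $N$ copies of $A^{-1}$.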
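The equivalence between the permutation sum with its $1/(2^N N!)$ prefactor and the sum over pairings can be checked by direct enumeration. In the sketch below, `cov` stands for $A^{-1}$ (here the inverse of the example matrix with rows (2, 1) and (1, 3)), indices are zero-based, and the functions return the moment divided by the normalization $\sqrt{(2\pi)^n/\det A}$. All names are hypothetical.

```python
import itertools
import math

def pairings(items):
    """Yield every way to split `items` (even length) into unordered pairs."""
    if not items:
        yield []
        return
    first, rest = items[0], items[1:]
    for i, partner in enumerate(rest):
        remaining = rest[:i] + rest[i + 1:]
        for tail in pairings(remaining):
            yield [(first, partner)] + tail

def moment_by_pairings(cov, ks):
    """Sum over pairings of products of cov entries."""
    total = 0.0
    for pairing in pairings(list(ks)):
        term = 1.0
        for i, j in pairing:
            term *= cov[i][j]
        total += term
    return total

def moment_by_permutations(cov, ks):
    """Same quantity as a sum over all permutations, divided by 2**N * N!."""
    half = len(ks) // 2
    total = 0.0
    for sigma in itertools.permutations(range(len(ks))):
        term = 1.0
        for m in range(half):
            term *= cov[ks[sigma[2 * m]]][ks[sigma[2 * m + 1]]]
        total += term
    return total / (2 ** half * math.factorial(half))

# Inverse of [[2, 1], [1, 3]], used as an illustrative A^{-1}.
cov = [[0.6, -0.2], [-0.2, 0.4]]
ks = (0, 0, 1, 1)  # normalized moment of x_0**2 * x_1**2
print(moment_by_pairings(cov, ks), moment_by_permutations(cov, ks))
```

Each pairing is counted $2^N N!$ times in the permutation sum (the order within each pair and the order of the pairs do not matter), which is why the two functions agree.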

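Alternatively,

$$\int f(\vec{x})\,\exp\left(-\frac{1}{2}\sum_{i,j=1}^{n} A_{ij}x_i x_j\right) d^n x = \sqrt{\frac{(2\pi)^n}{\det A}}\;\left.\exp\left(\frac{1}{2}\sum_{i,j=1}^{n} (A^{-1})_{ij}\frac{\partial}{\partial x_i}\frac{\partial}{\partial x_j}\right) f(\vec{x})\right|_{\vec{x}=0}$$

for some analytic function $f$, provided it satisfies some appropriate bounds on its growth and some other technical criteria. (It works for some functions and fails for others. Polynomials are fine.) The exponential over a differential operator is understood as a power series.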

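While functional integrals have no rigorous definition (or even a nonrigorous computational one in most cases), we can define a Gaussian functional integral in analogy to the finite-dimensional case. There is still the problem, though, that $(2\pi)^{\infty}$ is infinite, and the functional determinant would also be infinite in general. This can be taken care of if we only consider ratios:

$$\frac{\displaystyle\int f(x_1)\cdots f(x_{2N})\, e^{-\iint \frac{1}{2}A(x_{2N+1},x_{2N+2})\,f(x_{2N+1})\,f(x_{2N+2})\,d^dx_{2N+1}\,d^dx_{2N+2}}\,\mathcal{D}f}{\displaystyle\int e^{-\iint \frac{1}{2}A(x_{2N+1},x_{2N+2})\,f(x_{2N+1})\,f(x_{2N+2})\,d^dx_{2N+1}\,d^dx_{2N+2}}\,\mathcal{D}f} = \frac{1}{2^N N!}\sum_{\sigma\in S_{2N}} A^{-1}(x_{\sigma(1)},x_{\sigma(2)})\cdots A^{-1}(x_{\sigma(2N-1)},x_{\sigma(2N)}).$$

In the DeWitt notation, the equation looks identical to the finite-dimensional case.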

n-dimensional with linear term

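If $A$ is again a symmetric positive-definite matrix, then (assuming all are column vectors)

$$\int \exp\left(-\frac{1}{2}\sum_{i,j=1}^{n} A_{ij}x_i x_j + \sum_{i=1}^{n} B_i x_i\right) d^n x = \int \exp\left(-\frac{1}{2}x^{\mathsf{T}} A x + B^{\mathsf{T}} x\right) d^n x = \sqrt{\frac{(2\pi)^n}{\det A}}\;e^{\frac{1}{2}B^{\mathsf{T}} A^{-1} B}.$$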
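The linear term can be absorbed by completing the square: with $\mu = A^{-1}B$, the exponent $-\tfrac{1}{2}x^{\mathsf{T}}Ax + B^{\mathsf{T}}x$ equals $-\tfrac{1}{2}(x-\mu)^{\mathsf{T}}A(x-\mu) + \tfrac{1}{2}B^{\mathsf{T}}A^{-1}B$, which gives the value $\sqrt{(2\pi)^n/\det A}\;e^{\frac{1}{2}B^{\mathsf{T}}A^{-1}B}$. The sketch below checks both the identity and the resulting integral for a hypothetical 2×2 example; the matrix `A`, the vector `B`, and all function names are assumptions for illustration.

```python
import math

# Hypothetical example data (not from the text).
A = [[2.0, 1.0], [1.0, 3.0]]
B = [0.5, -1.0]

det_A = A[0][0] * A[1][1] - A[0][1] * A[1][0]
A_inv = [[A[1][1] / det_A, -A[0][1] / det_A],
         [-A[1][0] / det_A, A[0][0] / det_A]]

def quad_form(x, y):
    """x^T A x for the point (x, y)."""
    return A[0][0] * x * x + (A[0][1] + A[1][0]) * x * y + A[1][1] * y * y

def exponent(x, y):
    """-x^T A x / 2 + B^T x."""
    return -0.5 * quad_form(x, y) + B[0] * x + B[1] * y

# Shift that completes the square, mu = A^{-1} B, and the leftover constant.
mu = [A_inv[0][0] * B[0] + A_inv[0][1] * B[1],
      A_inv[1][0] * B[0] + A_inv[1][1] * B[1]]
shift = 0.5 * (B[0] * mu[0] + B[1] * mu[1])

def completed_square(x, y):
    """-(x - mu)^T A (x - mu) / 2 + B^T A^{-1} B / 2."""
    return -0.5 * quad_form(x - mu[0], y - mu[1]) + shift

def grid_integral(half_width=9.0, points=450):
    """Midpoint-rule estimate of the integral of exp(exponent) over a square."""
    h = 2.0 * half_width / points
    total = 0.0
    for i in range(points):
        x = -half_width + (i + 0.5) * h
        for j in range(points):
            y = -half_width + (j + 0.5) * h
            total += math.exp(exponent(x, y))
    return total * h * h

closed_form = math.sqrt((2.0 * math.pi) ** 2 / det_A) * math.exp(shift)
estimate = grid_integral()
print(closed_form, estimate)
```

After the shift $x \mapsto x + \mu$ the integrand is a pure Gaussian times the constant $e^{\frac{1}{2}B^{\mathsf{T}}A^{-1}B}$, so the result reduces to the case without a linear term.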

Integrals of similar form

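For $a > 0$:

$$\int_0^{\infty} x^{2n} e^{-ax^2}\,dx = \frac{(2n-1)!!}{a^n\,2^{n+1}}\sqrt{\frac{\pi}{a}} \qquad (n \text{ positive integer})$$

$$\int_0^{\infty} x^{2n+1} e^{-ax^2}\,dx = \frac{n!}{2a^{n+1}} \qquad (n \text{ positive integer})$$

$$\int_0^{\infty} x^{n} e^{-ax^2}\,dx = \frac{\Gamma\!\left(\frac{n+1}{2}\right)}{2a^{\frac{n+1}{2}}}$$

An easy way to derive these is by parameter differentiation:

$$\int_{-\infty}^{\infty} x^{2n} e^{-\alpha x^2}\,dx = (-1)^n \frac{\partial^n}{\partial\alpha^n}\int_{-\infty}^{\infty} e^{-\alpha x^2}\,dx = \sqrt{\pi}\,(-1)^n \frac{\partial^n}{\partial\alpha^n}\alpha^{-\frac{1}{2}} = \sqrt{\frac{\pi}{\alpha}}\,\frac{(2n-1)!!}{(2\alpha)^n}.$$

One could also integrate by parts and find a recurrence relation to solve this.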
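The integration-by-parts route can be sketched as follows. Writing $I_k = \int_0^\infty x^k e^{-ax^2}\,dx$ with $a > 0$, integrating by parts gives the recurrence $I_k = \frac{k-1}{2a} I_{k-2}$, with base cases $I_0 = \tfrac{1}{2}\sqrt{\pi/a}$ and $I_1 = \tfrac{1}{2a}$. The code below compares this recurrence with the double-factorial, factorial, and gamma-function closed forms; all function names are chosen for this illustration.

```python
import math

def double_factorial(k: int) -> int:
    """k!! for odd k >= -1, with (-1)!! = 1."""
    result = 1
    while k > 1:
        result *= k
        k -= 2
    return result

def even_moment(n: int, a: float) -> float:
    """Closed form for the integral over [0, inf) of x**(2n) * exp(-a * x**2)."""
    return double_factorial(2 * n - 1) / (a ** n * 2 ** (n + 1)) * math.sqrt(math.pi / a)

def odd_moment(n: int, a: float) -> float:
    """Closed form for the integral over [0, inf) of x**(2n+1) * exp(-a * x**2)."""
    return math.factorial(n) / (2.0 * a ** (n + 1))

def moment_by_recurrence(k: int, a: float) -> float:
    """I_k from the integration-by-parts recurrence I_k = (k - 1) / (2a) * I_{k-2}."""
    if k == 0:
        return 0.5 * math.sqrt(math.pi / a)
    if k == 1:
        return 1.0 / (2.0 * a)
    return (k - 1) / (2.0 * a) * moment_by_recurrence(k - 2, a)

def moment_by_gamma(k: int, a: float) -> float:
    """Gamma((k + 1) / 2) / (2 * a**((k + 1) / 2))."""
    return math.gamma((k + 1) / 2.0) / (2.0 * a ** ((k + 1) / 2.0))

a = 1.7  # arbitrary positive parameter for the comparison
for k in range(8):
    print(k, moment_by_recurrence(k, a), moment_by_gamma(k, a))
```

The recurrence avoids evaluating the gamma function and makes the origin of the $(2n-1)!!$ and $n!$ factors visible: each step multiplies by $(k-1)/(2a)$.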

Higher-order polynomials

Applying a linear change of basis shows that the integral of the exponential of a homogeneous polynomial in n variables may depend only on SL(n)-invariants of the polynomial. One such invariant is the discriminant, zeros of which mark the singularities of the integral. However, the integral may also depend on other invariants.

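Integrals of exponentials of other even-degree polynomials can be evaluated numerically using series. For example, for $a < 0$, the integral of the exponential of a quartic polynomial is

$$\int_{-\infty}^{\infty} e^{ax^4+bx^3+cx^2+dx+f}\,dx = \frac{1}{2}\,e^{f} \sum_{\substack{n,m,p=0 \\ n+p \equiv 0 \bmod 2}}^{\infty} \frac{b^n}{n!}\,\frac{c^m}{m!}\,\frac{d^p}{p!}\;\frac{\Gamma\!\left(\frac{3n+2m+p+1}{4}\right)}{(-a)^{\frac{3n+2m+p+1}{4}}}.$$

The $n + p \equiv 0 \bmod 2$ requirement is because the integral from $-\infty$ to 0 contributes a factor of $(-1)^{n+p}/2$ to each term, while the integral from 0 to $+\infty$ contributes a factor of 1/2 to each term. These integrals turn up in subjects such as quantum field theory.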
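A truncated version of the quartic series, $\tfrac{1}{2}e^{f}\sum \frac{b^n}{n!}\frac{c^m}{m!}\frac{d^p}{p!}\,\Gamma\!\big(\tfrac{3n+2m+p+1}{4}\big)\,(-a)^{-\frac{3n+2m+p+1}{4}}$ over $n + p$ even, can be compared with direct quadrature. The sketch below assumes $a < 0$ and small illustrative coefficients for which the series converges quickly; the truncation order `terms`, the quadrature range, and the function names are assumptions made here.

```python
import math

def simpson(f, lo, hi, n=4000):
    """Composite Simpson's rule on [lo, hi] with n (even) subintervals."""
    if n % 2:
        n += 1
    h = (hi - lo) / n
    total = f(lo) + f(hi)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(lo + i * h)
    return total * h / 3

def quartic_series(a, b, c, d, f, terms=30):
    """Truncated series for the integral of exp(a x^4 + b x^3 + c x^2 + d x + f)."""
    if a >= 0:
        raise ValueError("the integral converges only for a < 0")
    total = 0.0
    for n in range(terms):
        for p in range(terms):
            if (n + p) % 2:
                continue  # terms with odd n + p cancel between the two half-lines
            for m in range(terms):
                k = 3 * n + 2 * m + p + 1
                coeff = (b ** n / math.factorial(n)
                         * c ** m / math.factorial(m)
                         * d ** p / math.factorial(p))
                total += coeff * math.gamma(k / 4.0) / (-a) ** (k / 4.0)
    return 0.5 * math.exp(f) * total

# Illustrative coefficients (not taken from the text).
a, b, c, d, f = -1.0, 0.3, 0.5, 0.2, 0.1
series_value = quartic_series(a, b, c, d, f)
direct_value = simpson(
    lambda x: math.exp(a * x ** 4 + b * x ** 3 + c * x ** 2 + d * x + f), -6.0, 6.0
)
print(series_value, direct_value)
```

For larger coefficients more terms are needed, and the gamma-function arguments grow with the truncation order, so this direct summation is only a sketch for modest parameter values.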