
Beware online "filter bubbles": Eli Pariser

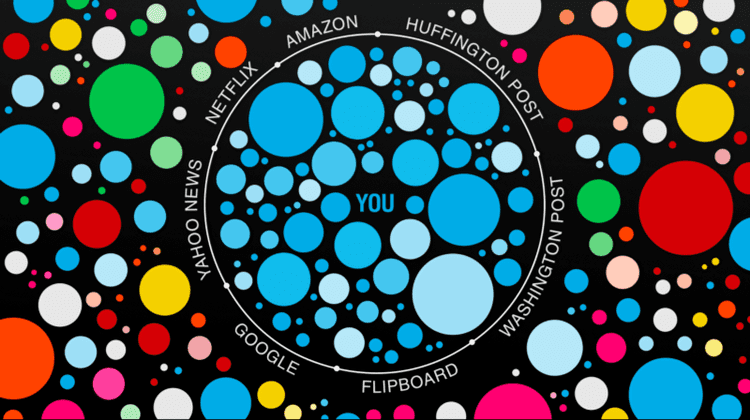

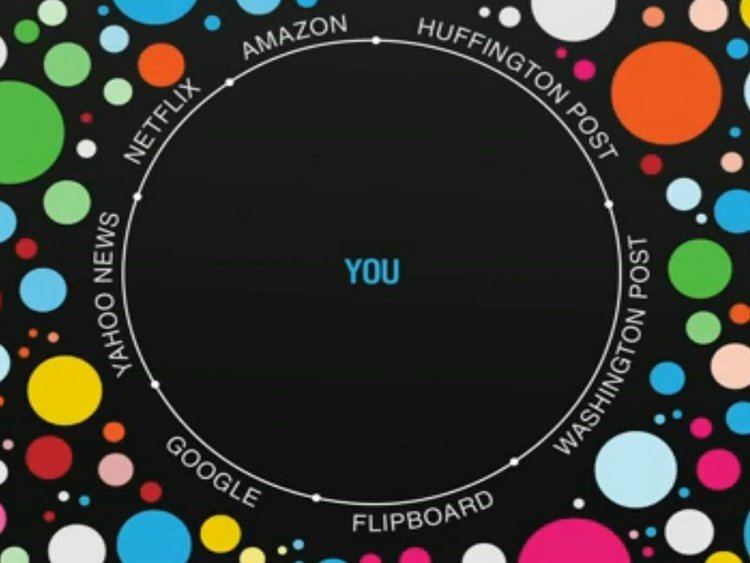

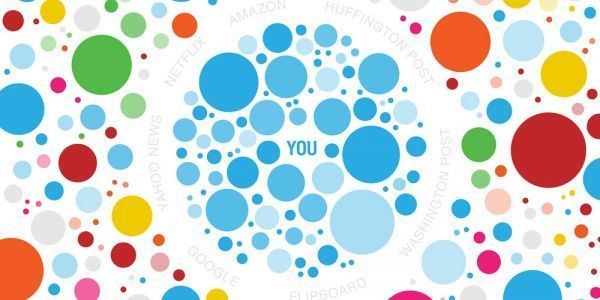

A filter bubble is the result of personalized search, in which a website's algorithm selectively guesses what information a user would like to see based on information about the user (such as location, past click behavior, and search history). As a result, users become separated from information that disagrees with their viewpoints, effectively isolating them in their own cultural or ideological bubbles. The choices made by these algorithms are not transparent. Prime examples are Google Personalized Search results and Facebook's personalized news stream. According to Eli Pariser, who coined the term, the bubble effect may have negative implications for civic discourse, but contrasting views suggest the effect is minimal and addressable. The surprising result of the 2016 U.S. presidential election and its aftermath have been blamed on the "filter bubble" phenomenon, spurring new interest in the term, with many concerned that the practice is harming democracy.

Contents

- Beware online "filter bubbles": Eli Pariser

- Donald Trump, the filter bubble, fake news, Facebook and Google: BBC Newsnight

- Concept

- Reactions

- Countermeasures

- In practice

- References

(Technologies such as social media) lets you go off with like-minded people, so you’re not mixing and sharing and understanding other points of view ... It’s super important. It’s turned out to be more of a problem than I, or many others, would have expected.

Donald Trump, the filter bubble, fake news, Facebook and Google: BBC Newsnight

Concept

The term was coined by Internet activist Eli Pariser in his book of the same name. According to Pariser, users get less exposure to conflicting viewpoints and are isolated intellectually in their own informational bubble. He described an example in which one user searched Google for "BP" and got investment news about British Petroleum, while another user got information about the Deepwater Horizon oil spill; the two search results pages were "strikingly different".

Pariser defined his concept of a filter bubble in more formal terms as "that personal ecosystem of information that's been catered by these algorithms". Other terms have been used to describe this phenomenon, including "ideological frames" or a "figurative sphere surrounding you as you search the Internet". A user's search history is built up over time as the user indicates interest in topics by "clicking links, viewing friends, putting movies in your queue, reading news stories" and so forth. An Internet firm then uses this information to target advertising to the user or to make certain types of information appear more prominently in search results pages. Pariser's concern is somewhat similar to one raised by Tim Berners-Lee in a 2010 report in The Guardian about a "Hotel California" effect, which happens when social networking sites wall off content from competing sites, as a way of grabbing a greater share of all Internet users, such that the "more you enter, the more you become locked in" to the information within a specific site. According to Berners-Lee, the site becomes a "closed silo of content", with the risk of fragmenting the World Wide Web.
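To make the mechanism concrete, here is a toy sketch of history-based re-ranking, loosely modeled on Pariser's "BP" example. It is not how Google or any real search engine works: the function `rank_by_history`, the (title, topic) item format, and the rule of scoring results by the user's past clicks per topic are all hypothetical simplifications.

```python
from collections import Counter


def rank_by_history(items, click_history):
    """Toy personalization: order results by the user's past clicks per topic.

    Hypothetical model: each item is a (title, topic) pair, and click_history
    is the list of topics the user clicked before. Topics the user never
    clicked score zero and sink to the bottom of the page.
    """
    clicks_per_topic = Counter(click_history)  # missing topics count as 0
    # sorted() stays stable even with reverse=True, so equally scored items
    # keep their original (unpersonalized) order.
    return sorted(items, key=lambda item: clicks_per_topic[item[1]], reverse=True)


# The same query ("BP") with the same candidate results...
results = [
    ("BP quarterly earnings", "finance"),
    ("Deepwater Horizon spill update", "environment"),
    ("BP share price", "finance"),
]

# ...seen by two users with different click histories.
investor = rank_by_history(results, ["finance", "finance", "sports"])
activist = rank_by_history(results, ["environment"])

print([title for title, _ in investor])
# ['BP quarterly earnings', 'BP share price', 'Deepwater Horizon spill update']
print([title for title, _ in activist])
# ['Deepwater Horizon spill update', 'BP quarterly earnings', 'BP share price']
```

Even in this toy model, an identical query yields differently ordered pages for the two users; a real system would weigh many more signals than a single topic count.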

Pariser’s idea of the ‘filter bubble’ was popularized after the TED talk he gave in May 2011. According to Pariser, these bubbles are created by algorithms that use 57 different signals to determine search results, including “[the] computer being used,” “where you’re sitting,” and “the browser doing the surfing.” In the talk, Pariser gives examples of how filter bubbles work and where they can be seen. To test the idea, he asked two of his friends to search Google for the word ‘Egypt’ and send him the results. The two sets of results were completely different: one focused on the political tensions in the country at the time, while the other featured vacation advertisements.

In The Filter Bubble, Pariser warns that a potential downside to filtered searching is that it "closes us off to new ideas, subjects, and important information" and "creates the impression that our narrow self-interest is all that exists". In his view, this is potentially harmful to both individuals and society. He criticized Google and Facebook for offering users "too much candy, and not enough carrots". He warned that "invisible algorithmic editing of the web" may limit our exposure to new information and narrow our outlook. According to Pariser, the detrimental effects of filter bubbles include harm to society at large, since they risk "undermining civic discourse" and making people more vulnerable to "propaganda and manipulation". He wrote:

A world constructed from the familiar is a world in which there’s nothing to learn ... (since there is) invisible autopropaganda, indoctrinating us with our own ideas.

Filter bubbles have been described as exacerbating a phenomenon called the splinternet or cyberbalkanization, which happens when the Internet becomes divided into sub-groups of like-minded people who become insulated within their own online communities and fail to get exposure to different views. The term cyberbalkanization was coined in 1996.

Although his speech did not use the word "filter", President Obama's farewell address identified a concept similar to filter bubbles as a "threat to [Americans'] democracy", namely the "retreat into our own bubbles, ...especially our social media feeds, surrounded by people who look like us and share the same political outlook and never challenge our assumptions... And increasingly we become so secure in our bubbles that we start accepting only information, whether it’s true or not, that fits our opinions, instead of basing our opinions on the evidence that is out there."

Reactions

There are conflicting reports about the extent to which personalized filtering is happening and whether such activity is beneficial or harmful. Analyst Jacob Weisberg, writing in Slate, conducted a small, non-scientific experiment to test Pariser's theory, in which five associates with different ideological backgrounds ran exactly the same searches. The five sets of results were nearly identical across four different searches, suggesting that a filter bubble was not in effect, which led him to write that fears of a situation in which all people are "feeding at the trough of a Daily Me" were overblown. A scientific study from Wharton that analyzed personalized recommendations also found that these filters can actually create commonality, not fragmentation, in online music taste: consumers apparently used the filters to expand their taste rather than limit it. Book reviewer Paul Boutin ran a similar experiment with people who had differing search histories and, like Weisberg, found nearly identical search results. Harvard law professor Jonathan Zittrain disputed the extent to which personalization filters distort Google search results; he said "the effects of search personalization have been light". Further, there are reports that users can turn off Google's personalization features if they choose, by deleting their Web history and by other methods. A spokesperson for Google suggested that algorithms were added to Google's search engine to deliberately "limit personalization and promote variety".

Nevertheless, there are reports that Google and other sites hold vast amounts of information that might enable them to further personalize a user's Internet experience if they chose to do so. One account suggested that Google can keep track of users' past histories even if they do not have a personal Google account or are not logged into one. According to one report, Google had collected "10 years worth" of information from various sources, such as Gmail, Google Maps, and other services besides its search engine; a contrary report held that personalizing the Internet for each user was technically challenging for an Internet firm despite the huge amounts of available web data. Analyst Doug Gross of CNN suggested that filtered searching seemed to be more helpful for consumers than for citizens: it would help a consumer looking for "pizza" find local delivery options through a personalized search and appropriately filter out distant pizza stores. There is agreement that sites such as The Washington Post and The New York Times are pushing to create personalized information engines, with the aim of tailoring search results to those that users are likely to like or agree with.

Several designers have developed tools to counteract the effects of filter bubbles. Swiss radio station SRF voted the word Filterblase (the German translation of filter bubble) its word of the year for 2016.

Countermeasures

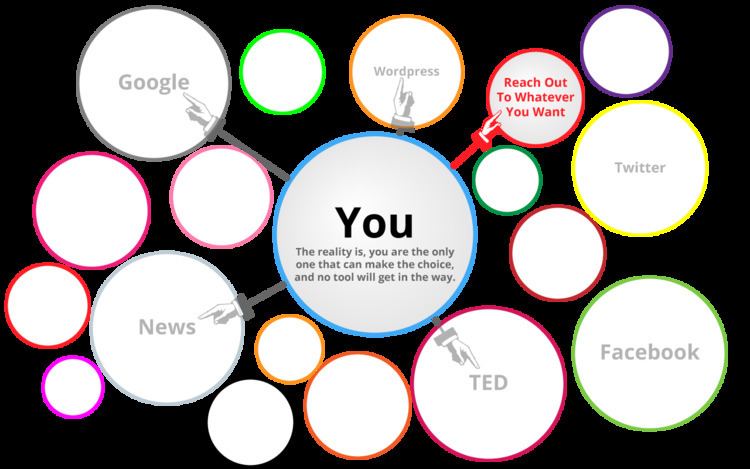

Users can take action to burst their filter bubbles. Some make a conscious effort to evaluate what information they are exposing themselves to, thinking critically about whether they are engaging with a broad range of content. Websites such as allsides.com and hifromtheotherside.com aim to expose readers to different perspectives through diverse content.

Some existing resources allow users to counteract the algorithms. For example, since web-based advertising can reinforce filter bubbles by exposing users to more of the same content, users can block much of this advertising by deleting their search history, turning off targeted ads, and installing browser extensions. Extensions such as Escape your Bubble for Google Chrome aim to help curate content and keep users from being exposed only to biased information, while Mozilla Firefox extensions such as Lightbeam and Self-Destructing Cookies let users visualize how their data is being tracked and remove some of the tracking cookies. Some people use privacy-focused search tools such as DuckDuckGo, StartPage, and Disconnect to prevent companies from gathering their web-search data.

The Swiss daily Neue Zürcher Zeitung is beta-testing a personalized news engine app that uses machine learning to guess what content a user is interested in, while "always including an element of surprise"; the idea is to mix in stories that a user is unlikely to have followed in the past.
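The idea of "always including an element of surprise" can be illustrated with a toy sketch. The app's actual method is not described here beyond its use of machine learning, so the function `mix_in_surprise`, its `every` parameter, and the rule of inserting one story from an unfamiliar topic after every few personalized stories are hypothetical.

```python
import random


def mix_in_surprise(ranked, pool, history_topics, every=3, rng=None):
    """Toy serendipity: after every `every` personalized items, insert one
    item whose topic the user has not followed before.

    Hypothetical model: items are (title, topic) pairs, `ranked` is the
    already-personalized list, and `pool` holds other candidate stories.
    """
    if rng is None:
        rng = random.Random(0)  # fixed seed so the example is reproducible
    unfamiliar = [item for item in pool if item[1] not in history_topics]
    rng.shuffle(unfamiliar)

    feed = []
    for position, item in enumerate(ranked, start=1):
        feed.append(item)
        # Insert a surprise only while unfamiliar stories remain.
        if position % every == 0 and unfamiliar:
            feed.append(unfamiliar.pop())
    return feed


ranked = [(f"Market story {n}", "finance") for n in range(1, 7)]
pool = [
    ("New exoplanet found", "science"),
    ("Opera season opens", "arts"),
    ("Bond yields rise", "finance"),  # familiar topic, so never mixed in
]

for title, topic in mix_in_surprise(ranked, pool, history_topics={"finance"}):
    print(f"{topic:>8}: {title}")
```

The personalized order is left intact; the sketch only interleaves stories from topics outside the user's history, which is one simple way to trade a little relevance for variety.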

The European Union is taking measures to lessen the impact of the filter bubble. The European Parliament is sponsoring inquiries into how filter bubbles affect people’s ability to access diverse news. Additionally, it introduced a program aimed at educating citizens about social media.

In practice

In January 2017, Facebook removed personalization from its Trending Topics list in response to problems with some users not seeing widely discussed events there.