
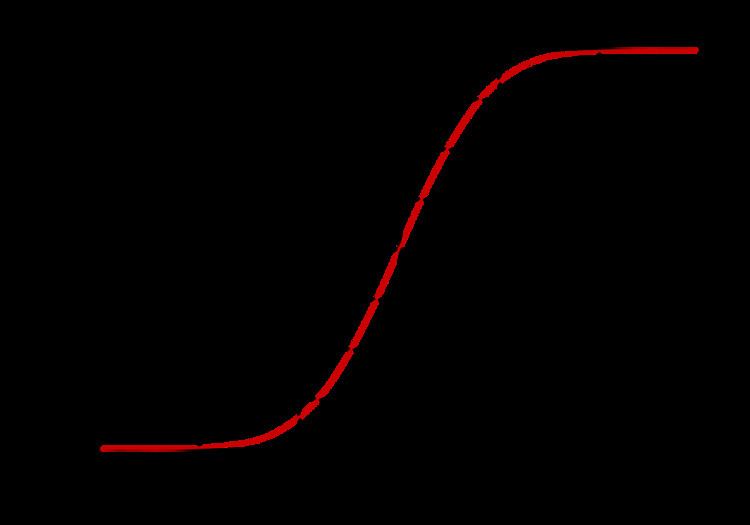

In mathematics, the error function (also called the Gauss error function) is a special (non-elementary) function of sigmoid shape that occurs in probability, statistics, and partial differential equations describing diffusion. It is defined as:

$$\operatorname{erf} z = \frac{2}{\sqrt\pi}\int_0^z e^{-t^2}\,\mathrm dt.$$

Contents

- The name error function

- Complementary error function

- Imaginary error function

- Cumulative distribution function

- Properties

- Taylor series

- Derivative and integral

- Bürmann series

- Inverse functions

- Asymptotic expansion

- Continued fraction expansion

- Integral of error function with Gaussian density function

- Approximation with elementary functions

- Numerical approximations

- Applications

- Related functions

- Generalized error functions

- Iterated integrals of the complementary error function

- Implementations

- References

In statistics, for nonnegative values of x, the error function has the following interpretation: for a random variable X that is normally distributed with mean 0 and variance ½, erf(x) describes the probability of X falling in the range [−x, x].
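This interpretation can be sanity-checked with a short Monte Carlo sketch that uses only Python's standard library. It draws samples from a normal distribution with mean 0 and standard deviation σ. It then compares the fraction landing in [−x, x] with erf(x/(σ√2)), which reduces to erf(x) when σ² = ½. The helper name `empirical_prob`, the sample count and the seed are arbitrary choices made for illustration.

```python
import math
import random

def empirical_prob(x: float, sigma: float, n: int = 100_000, seed: int = 1) -> float:
    """Fraction of n samples from N(0, sigma^2) that land in [-x, x]."""
    rng = random.Random(seed)
    hits = sum(1 for _ in range(n) if abs(rng.gauss(0.0, sigma)) <= x)
    return hits / n

sigma = math.sqrt(0.5)  # variance 1/2, the case described above
for x in (0.25, 0.5, 1.0, 2.0):
    exact = math.erf(x / (sigma * math.sqrt(2.0)))  # general-sigma formula; equals erf(x) here
    print(f"x={x}: empirical {empirical_prob(x, sigma):.4f}, erf {exact:.4f}")
```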

The name 'error function'

The error function is used in measurement theory (using probability and statistics), and its use in other branches of mathematics is typically unrelated to the characterization of measurement errors.

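In statistics, it is common to have a variable $Y$ and its unbiased estimator $\hat Y$. The error is then defined as $\varepsilon = \hat Y - Y$. It is commonly modeled as a normally distributed random variable with mean 0 (because the estimator is unbiased) and some variance $\sigma^2$, written $\varepsilon \sim \mathcal N(0, \sigma^2)$. For $\sigma^2 = \tfrac12$, $\operatorname{erf}(x)$ is the probability that $\varepsilon$ falls in the range $[-x, x]$. In other words, it is the probability that the absolute error is no greater than $x$.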

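The previous paragraph can be generalized to any variance. Given an error variable $\varepsilon \sim \mathcal N(0, \sigma^2)$ (such as an unbiased error variable), the probability that $\varepsilon$ falls in $[-x, x]$ is $\operatorname{erf}\!\left(\frac{x}{\sigma\sqrt2}\right)$.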

Complementary error function

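The complementary error function, denoted erfc, is defined as

$$\operatorname{erfc} z = 1 - \operatorname{erf} z = \frac{2}{\sqrt\pi}\int_z^\infty e^{-t^2}\,\mathrm dt = e^{-z^2}\operatorname{erfcx} z,$$

which also defines erfcx, the scaled complementary error function (which can be used instead of erfc to avoid arithmetic underflow). Another form of $\operatorname{erfc} x$ for $x \ge 0$ is Craig's formula:

$$\operatorname{erfc} x = \frac{2}{\pi}\int_0^{\pi/2}\exp\!\left(-\frac{x^2}{\sin^2\theta}\right)\mathrm d\theta, \qquad x \ge 0.$$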
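The sketch below shows why erfc and erfcx are useful as separate functions. Python's `math` module provides `erf` and `erfc` but no `erfcx`, so the `erfcx` helper here is hypothetical. Below an arbitrary cutoff of 25 it uses the direct product exp(x²)·erfc(x). Above the cutoff, erfc itself underflows, so it switches to the asymptotic series erfcx(x) ≈ (1/(x√π)) Σ (−1)ⁿ (2n−1)!!/(2x²)ⁿ.

```python
import math

def erfcx(x: float) -> float:
    """Scaled complementary error function exp(x^2) * erfc(x), sketched for x >= 0."""
    if x < 0:
        raise ValueError("this sketch only handles x >= 0")
    if x < 25.0:
        # Both factors are still representable as doubles, so the direct product is fine.
        return math.exp(x * x) * math.erfc(x)
    # For large x, erfc(x) underflows; sum 8 terms of the asymptotic series instead.
    total, term = 0.0, 1.0
    for n in range(8):
        total += term
        term *= -(2 * n + 1) / (2.0 * x * x)  # turns term n into term n + 1
    return total / (x * math.sqrt(math.pi))

print(1.0 - math.erf(10.0))  # 0.0: all information lost to cancellation
print(math.erfc(10.0))       # about 2.09e-45, computed directly
print(math.erfc(30.0))       # 0.0: the true value is far below the smallest double
print(erfcx(30.0))           # about 0.0188, no underflow
```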

Imaginary error function

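The imaginary error function, denoted erfi, is defined as

$$\operatorname{erfi} z = -i\operatorname{erf}(iz) = \frac{2}{\sqrt\pi}\int_0^z e^{t^2}\,\mathrm dt = \frac{2}{\sqrt\pi}e^{z^2}D(z),$$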

where D(x) is the Dawson function (which can be used instead of erfi to avoid arithmetic overflow).

Despite the name "imaginary error function", $\operatorname{erfi} x$ is real when $x$ is real.

When the error function is evaluated for arbitrary complex arguments z, the resulting complex error function is usually discussed in scaled form as the Faddeeva function:

$$w(z) = e^{-z^2}\operatorname{erfc}(-iz) = \operatorname{erfcx}(-iz).$$

Cumulative distribution function

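The error function is related to the cumulative distribution function $\Phi$ of the standard normal distribution by

$$\Phi(x) = \frac12 + \frac12\operatorname{erf}\!\left(\frac{x}{\sqrt2}\right) = \frac12\operatorname{erfc}\!\left(-\frac{x}{\sqrt2}\right).$$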

Properties

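The property $\operatorname{erf}(-z) = -\operatorname{erf} z$ means that the error function is an odd function. This follows directly from the fact that the integrand $e^{-t^2}$ is an even function: the antiderivative of an even function that vanishes at the origin is odd.

For any complex number z:

$$\operatorname{erf}\bar z = \overline{\operatorname{erf} z},$$

where $\bar z$ is the complex conjugate of $z$.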

The integrand ƒ = exp(−z²) and the function ƒ = erf(z) are shown in the complex z-plane in figures 2 and 3. The level Im(ƒ) = 0 is shown with a thick green line. Negative integer values of Im(ƒ) are shown with thick red lines, and positive integer values of Im(ƒ) with thick blue lines. Intermediate levels of Im(ƒ) = constant are shown with thin green lines. Intermediate levels of Re(ƒ) = constant are shown with thin red lines for negative values and with thin blue lines for positive values.

The error function at +∞ is exactly 1 (see Gaussian integral). On the real axis, erf(z) approaches 1 as z → +∞ and −1 as z → −∞. On the imaginary axis, it tends to ±i∞.

Taylor series

The error function is an entire function; it has no singularities (except that at infinity) and its Taylor expansion always converges.

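The defining integral cannot be evaluated in closed form in terms of elementary functions. However, expanding the integrand $e^{-z^2}$ into its Maclaurin series and integrating term by term gives the error function's Maclaurin series:

$$\operatorname{erf} z = \frac{2}{\sqrt\pi}\sum_{n=0}^\infty \frac{(-1)^n z^{2n+1}}{n!\,(2n+1)} = \frac{2}{\sqrt\pi}\left(z - \frac{z^3}{3} + \frac{z^5}{10} - \frac{z^7}{42} + \frac{z^9}{216} - \cdots\right),$$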

which holds for every complex number z. The denominator terms are sequence A007680 in the OEIS.

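For iterative calculation of the above series, the following alternative formulation may be useful:

$$\operatorname{erf} z = \frac{2}{\sqrt\pi}\sum_{n=0}^\infty\left(z\prod_{k=1}^n \frac{-(2k-1)z^2}{k(2k+1)}\right) = \frac{2}{\sqrt\pi}\sum_{n=0}^\infty \frac{z}{2n+1}\prod_{k=1}^n\frac{-z^2}{k},$$

This works because $\frac{-(2k-1)z^2}{k(2k+1)}$ is the multiplier that turns the $(k-1)$th term into the $k$th term, counting $z$ as the zeroth term.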
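The following is a minimal Python sketch of the iterative formulation. Each term is obtained from the previous one by multiplying by −(2k−1)z²/(k(2k+1)), so no factorials are computed. The function name `erf_series` and the stopping tolerance are illustrative choices. Because the series alternates, cancellation costs accuracy for large |z|, so this is not a replacement for `math.erf`.

```python
import math

def erf_series(z, tol=1e-17, max_terms=500):
    """erf via its Maclaurin series, updating each term with the ratio
    -(2k-1) z^2 / (k (2k+1)) instead of recomputing factorials."""
    term = z          # zeroth term, before the 2/sqrt(pi) factor
    total = term
    for k in range(1, max_terms):
        term *= -(2 * k - 1) * z * z / (k * (2 * k + 1))
        total += term
        if abs(term) <= tol * abs(total):
            break
    return 2.0 / math.sqrt(math.pi) * total

for x in (0.1, 0.5, 1.0, 2.0):
    print(x, erf_series(x), math.erf(x))
```

Since it uses only arithmetic and `abs`, the same function also accepts Python complex numbers. For example, `erf_series(1j)` is approximately i·erfi(1).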

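The imaginary error function has a very similar Maclaurin series:

$$\operatorname{erfi} z = \frac{2}{\sqrt\pi}\sum_{n=0}^\infty \frac{z^{2n+1}}{n!\,(2n+1)} = \frac{2}{\sqrt\pi}\left(z + \frac{z^3}{3} + \frac{z^5}{10} + \frac{z^7}{42} + \frac{z^9}{216} + \cdots\right),$$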

which holds for every complex number z.

Derivative and integral

The derivative of the error function follows immediately from its definition:

$$\frac{\mathrm d}{\mathrm dz}\operatorname{erf} z = \frac{2}{\sqrt\pi}e^{-z^2}.$$

From this, the derivative of the imaginary error function is also immediate:

$$\frac{\mathrm d}{\mathrm dz}\operatorname{erfi} z = \frac{2}{\sqrt\pi}e^{z^2}.$$

An antiderivative of the error function, obtainable by integration by parts, is

$$z\operatorname{erf} z + \frac{e^{-z^2}}{\sqrt\pi} + C.$$

An antiderivative of the imaginary error function, also obtainable by integration by parts, is

$$z\operatorname{erfi} z - \frac{e^{z^2}}{\sqrt\pi} + C.$$
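The antiderivative of erf, z·erf z + e^{−z²}/√π, can be checked numerically. Its difference between two points must equal the integral of erf over that interval. This sketch uses a small composite Simpson's rule helper written for the example, together with Python's `math.erf`. The `math` module has no erfi, so only the erf formula is checked.

```python
import math

def erf_antiderivative(z: float) -> float:
    """z*erf(z) + exp(-z^2)/sqrt(pi), one antiderivative of erf (constant omitted)."""
    return z * math.erf(z) + math.exp(-z * z) / math.sqrt(math.pi)

def simpson(f, a: float, b: float, n: int = 2000) -> float:
    """Composite Simpson's rule with n (even) subintervals."""
    h = (b - a) / n
    s = f(a) + f(b)
    for i in range(1, n):
        s += (4 if i % 2 else 2) * f(a + i * h)
    return s * h / 3

a, b = -1.0, 2.5
print(simpson(math.erf, a, b), erf_antiderivative(b) - erf_antiderivative(a))
```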

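Higher-order derivatives are given by

$$\operatorname{erf}^{(k)} z = \frac{2(-1)^{k-1}}{\sqrt\pi}H_{k-1}(z)\,e^{-z^2} = \frac{2}{\sqrt\pi}\frac{\mathrm d^{k-1}}{\mathrm dz^{k-1}}\left(e^{-z^2}\right), \qquad k = 1, 2, \dots$$

where $H$ are the physicists' Hermite polynomials.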
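The following sketches the Hermite-polynomial formula erf⁽ᵏ⁾ z = 2(−1)^{k−1}/√π · H_{k−1}(z) e^{−z²}. It assumes the standard three-term recurrence H_{n+1}(x) = 2x H_n(x) − 2n H_{n−1}(x), with H_0 = 1 and H_1 = 2x. The helper names are illustrative. The usage lines compare each derivative with a central finite difference of the derivative one order below.

```python
import math

def hermite(n: int, x: float) -> float:
    """Physicists' Hermite polynomial H_n(x) via H_{n+1} = 2x H_n - 2n H_{n-1}."""
    h_prev, h = 1.0, 2.0 * x  # H_0, H_1
    if n == 0:
        return h_prev
    for k in range(1, n):
        h_prev, h = h, 2.0 * x * h - 2.0 * k * h_prev
    return h

def erf_derivative(k: int, z: float) -> float:
    """k-th derivative of erf for k >= 1."""
    return 2.0 * (-1) ** (k - 1) / math.sqrt(math.pi) * hermite(k - 1, z) * math.exp(-z * z)

h = 1e-5
for k in (1, 2, 3):
    fd = (erf_derivative(k, 0.3 + h) - erf_derivative(k, 0.3 - h)) / (2 * h)
    print(k + 1, fd, erf_derivative(k + 1, 0.3))
```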

Bürmann series

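An expansion that converges more rapidly for all real values of $x$ than a Taylor expansion is obtained by using Hans Heinrich Bürmann's theorem:

$$\operatorname{erf} x = \frac{2}{\sqrt\pi}\operatorname{sgn} x\,\sqrt{1-e^{-x^2}}\left(1 - \frac{1}{12}\left(1-e^{-x^2}\right) - \frac{7}{480}\left(1-e^{-x^2}\right)^2 - \cdots\right) = \frac{2}{\sqrt\pi}\operatorname{sgn} x\,\sqrt{1-e^{-x^2}}\left(\frac{\sqrt\pi}{2} + \sum_{k=1}^\infty c_k e^{-kx^2}\right).$$

Keeping only the first two coefficients and choosing $c_1 = \frac{31}{200}$ and $c_2 = -\frac{341}{8000}$ gives the approximation

$$\operatorname{erf} x \approx \frac{2}{\sqrt\pi}\operatorname{sgn} x\,\sqrt{1-e^{-x^2}}\left(\frac{\sqrt\pi}{2} + \frac{31}{200}e^{-x^2} - \frac{341}{8000}e^{-2x^2}\right).$$

Its largest relative error occurs at $x = \pm 1.3796$, where it is less than 0.0036127.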
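The two-term Bürmann-type approximation is erf x ≈ (2/√π) sgn x √(1 − e^{−x²}) (√π/2 + c₁e^{−x²} + c₂e^{−2x²}), with c₁ = 31/200 and c₂ = −341/8000. It can be evaluated directly and its relative error scanned on a grid. In this sketch the grid (0 < x ≤ 5, step 0.001) is an arbitrary choice. The scan should find a maximum relative error of about 0.0036 near x ≈ 1.38.

```python
import math

C1, C2 = 31 / 200, -341 / 8000

def erf_burmann2(x: float) -> float:
    """Two-term Bürmann-type approximation of erf for real x."""
    e = math.exp(-x * x)
    core = math.sqrt(1.0 - e) * (math.sqrt(math.pi) / 2 + C1 * e + C2 * e * e)
    return math.copysign(2.0 / math.sqrt(math.pi) * core, x)

xs = [i / 1000 for i in range(1, 5001)]
worst = max(xs, key=lambda x: abs(erf_burmann2(x) / math.erf(x) - 1))
print(worst, abs(erf_burmann2(worst) / math.erf(worst) - 1))
```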

Inverse functions

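Given a complex number $z$, there is not a unique complex number $w$ satisfying $\operatorname{erf} w = z$, so a true inverse function would be multivalued. However, for $-1 < x < 1$, there is a unique real number, denoted $\operatorname{erf}^{-1} x$, satisfying $\operatorname{erf}\left(\operatorname{erf}^{-1} x\right) = x$.

The inverse error function is usually defined with domain (−1,1), and it is restricted to this domain in many computer algebra systems. However, it can be extended to the disk |z| < 1 of the complex plane using the Maclaurin series

$$\operatorname{erf}^{-1} z = \sum_{k=0}^\infty \frac{c_k}{2k+1}\left(\frac{\sqrt\pi}{2}z\right)^{2k+1},$$

where $c_0 = 1$ and

$$c_k = \sum_{m=0}^{k-1}\frac{c_m c_{k-1-m}}{(m+1)(2m+1)} = \left\{1, 1, \frac76, \frac{127}{90}, \frac{4369}{2520}, \frac{34807}{16200}, \ldots\right\}.$$

So we have the series expansion (common factors have been canceled from numerators and denominators):

$$\operatorname{erf}^{-1} z = \frac{\sqrt\pi}{2}\left(z + \frac{\pi}{12}z^3 + \frac{7\pi^2}{480}z^5 + \frac{127\pi^3}{40320}z^7 + \frac{4369\pi^4}{5806080}z^9 + \frac{34807\pi^5}{182476800}z^{11} + \cdots\right).$$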

(After cancellation the numerator/denominator fractions are entries A092676/ A132467 in the OEIS; without cancellation the numerator terms are given in entry A002067.) Note that the error function's value at ±∞ is equal to ±1.
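The following sketches the inverse error function built from the coefficients c₀ = 1, c_k = Σ_{m<k} c_m c_{k−1−m}/((m+1)(2m+1)). The c_k are computed exactly with `fractions.Fraction`. A truncated Maclaurin series gives a starting guess, and Newton steps on erf(w) − y = 0 (with derivative (2/√π)e^{−w²}) then polish it, since the series converges slowly as |y| → 1. The 40-term truncation and the names `erfinv_coefficients` and `erfinv` are assumptions for illustration, not a standard-library API.

```python
import math
from fractions import Fraction

def erfinv_coefficients(n: int) -> list:
    """Exact c_0 .. c_{n-1} of the inverse-error-function series."""
    c = [Fraction(1)]
    for k in range(1, n):
        c.append(sum(c[m] * c[k - 1 - m] / ((m + 1) * (2 * m + 1)) for m in range(k)))
    return c

COEFFS = [float(ck) for ck in erfinv_coefficients(40)]

def erfinv(y: float, newton_steps: int = 50) -> float:
    """Inverse error function on (-1, 1): series starting guess, then Newton refinement."""
    if not -1.0 < y < 1.0:
        raise ValueError("this sketch handles only -1 < y < 1")
    u = math.sqrt(math.pi) / 2 * y
    w = sum(ck / (2 * k + 1) * u ** (2 * k + 1) for k, ck in enumerate(COEFFS))
    for _ in range(newton_steps):
        step = (math.erf(w) - y) / (2.0 / math.sqrt(math.pi) * math.exp(-w * w))
        w -= step
        if abs(step) <= 1e-15 * max(1.0, abs(w)):
            break
    return w

print(erfinv_coefficients(6))
print(erfinv(0.5), erfinv(0.999))
```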

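For $|z| < 1$, we have $\operatorname{erf}\left(\operatorname{erf}^{-1} z\right) = z$.

The inverse complementary error function is defined as

$$\operatorname{erfc}^{-1}(1-z) = \operatorname{erf}^{-1} z.$$

For real $x$, there is a unique real number $\operatorname{erfi}^{-1} x$ satisfying $\operatorname{erfi}\left(\operatorname{erfi}^{-1} x\right) = x$. The inverse imaginary error function is defined as $\operatorname{erfi}^{-1} x$.

For any real $x$, Newton's method can be used to compute $\operatorname{erfi}^{-1} x$. For $-1 \le x \le 1$, the following Maclaurin series converges:

$$\operatorname{erfi}^{-1} z = \sum_{k=0}^\infty \frac{(-1)^k c_k}{2k+1}\left(\frac{\sqrt\pi}{2}z\right)^{2k+1},$$

where $c_k$ is defined as above.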
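Newton's method for erfi⁻¹ x can be sketched using only the Maclaurin series of erfi, since Python's `math` module has no erfi. The starting point √(ln(1 + |x|)) is a heuristic chosen for this example. Because erfi is increasing and convex for positive arguments, Newton's iterates from any non-negative start approach the root monotonically after at most one step. The function names are illustrative.

```python
import math

def erfi(x: float) -> float:
    """erfi via its Maclaurin series (terms share the sign of x, so no cancellation)."""
    term, total, k = x, x, 0
    while True:
        k += 1
        term *= (2 * k - 1) * x * x / (k * (2 * k + 1))
        total += term
        if abs(term) <= 1e-17 * abs(total):
            return 2.0 / math.sqrt(math.pi) * total

def erfi_inv(x: float, max_steps: int = 100) -> float:
    """Inverse of erfi for real x by Newton's method; erfi is odd, so solve for |x|."""
    if x == 0.0:
        return 0.0
    target = abs(x)
    w = math.sqrt(math.log1p(target))  # heuristic starting point (an assumption)
    for _ in range(max_steps):
        step = (erfi(w) - target) / (2.0 / math.sqrt(math.pi) * math.exp(w * w))
        w -= step
        if abs(step) <= 1e-15 * w:
            break
    return math.copysign(w, x)

print(erfi_inv(1.0), erfi(erfi_inv(1.0)))
```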

Asymptotic expansion

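A useful asymptotic expansion of the complementary error function (and therefore also of the error function) for large real $x$ is

$$\operatorname{erfc} x = \frac{e^{-x^2}}{x\sqrt\pi}\left(1 + \sum_{n=1}^\infty (-1)^n\frac{1\cdot3\cdot5\cdots(2n-1)}{\left(2x^2\right)^n}\right) = \frac{e^{-x^2}}{x\sqrt\pi}\sum_{n=0}^\infty (-1)^n\frac{(2n-1)!!}{\left(2x^2\right)^n},$$

where $(2n-1)!!$ is the double factorial of $(2n-1)$, that is, the product of all odd numbers up to $(2n-1)$. This series diverges for every finite $x$. Its meaning as an asymptotic expansion is that, for any integer $N \ge 1$,

$$\operatorname{erfc} x = \frac{e^{-x^2}}{x\sqrt\pi}\sum_{n=0}^{N-1}(-1)^n\frac{(2n-1)!!}{\left(2x^2\right)^n} + R_N(x),$$

where the remainder, in Landau notation, is

$$R_N(x) = O\!\left(x^{-(1+2N)}e^{-x^2}\right) \quad \text{as } x \to \infty.$$

Indeed, the exact value of the remainder is

$$R_N(x) := \frac{(-1)^N}{\sqrt\pi}\,2^{1-2N}\,\frac{(2N)!}{N!}\int_x^\infty t^{-2N}e^{-t^2}\,\mathrm dt,$$

which follows easily by induction, writing $e^{-t^2} = -(2t)^{-1}\left(e^{-t^2}\right)'$ and integrating by parts.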

For large enough values of x, only the first few terms of this asymptotic expansion are needed to obtain a good approximation of erfc(x). For moderate values of x, the Taylor expansion at 0 given above converges very quickly instead.
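The truncated asymptotic series (e^{−x²}/(x√π)) Σ_{n<N} (−1)ⁿ(2n−1)!!/(2x²)ⁿ is easy to evaluate. The sketch below compares it with `math.erfc` at two points. At x = 5, six terms already give about six correct digits. At x = 1, adding more terms makes the result worse, because the series diverges. The function name is illustrative.

```python
import math

def erfc_asymptotic(x: float, n_terms: int) -> float:
    """First n_terms of the asymptotic series of erfc(x), for x > 0."""
    total, term = 0.0, 1.0
    for n in range(n_terms):
        total += term
        term *= -(2 * n + 1) / (2 * x * x)  # turns term n into term n + 1
    return math.exp(-x * x) / (x * math.sqrt(math.pi)) * total

for n_terms in (1, 3, 6):
    approx = erfc_asymptotic(5.0, n_terms)
    print(n_terms, approx, abs(approx / math.erfc(5.0) - 1))
```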

Continued fraction expansion

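A continued fraction expansion of the complementary error function is:

$$\operatorname{erfc} z = \frac{z}{\sqrt\pi}e^{-z^2}\cfrac{1}{z^2 + \cfrac{1/2}{1 + \cfrac{1}{z^2 + \cfrac{3/2}{1 + \cfrac{2}{z^2 + \cdots}}}}}.$$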
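The continued fraction erfc z = (z/√π)e^{−z²} / (z² + (1/2)/(1 + 1/(z² + (3/2)/(1 + 2/(z² + ⋯))))) can be evaluated bottom-up after truncation at a fixed depth. Its partial numerators are k/2 for k = 1, 2, …, and its partial denominators alternate between z² and 1. The depth of 2000 is an arbitrary, generous choice for this sketch. Convergence is slower for small x, so only x ≥ 2 is checked here.

```python
import math

def erfc_cf(x: float, depth: int = 2000) -> float:
    """erfc(x) for x > 0 from the continued fraction, truncated at `depth` levels."""
    x2 = x * x
    t = x2 if depth % 2 == 0 else 1.0  # b_depth: x^2 at even levels, 1 at odd levels
    for k in range(depth, 0, -1):
        b_prev = x2 if (k - 1) % 2 == 0 else 1.0
        t = b_prev + (k / 2) / t        # partial numerator a_k = k/2
    return x * math.exp(-x2) / math.sqrt(math.pi) / t

print(erfc_cf(2.0), math.erfc(2.0))
```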

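Integral of error function with Gaussian density function

$$\int_{-\infty}^{\infty}\operatorname{erf}(ax+b)\,\frac{1}{\sqrt{2\pi\sigma^2}}\exp\!\left(-\frac{(x-\mu)^2}{2\sigma^2}\right)\mathrm dx = \operatorname{erf}\!\left(\frac{a\mu+b}{\sqrt{1+2a^2\sigma^2}}\right), \qquad a, b, \mu, \sigma \in \mathbb R.$$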
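The identity ∫ erf(ax + b) · N(x; μ, σ²) dx = erf((aμ + b)/√(1 + 2a²σ²)) can be checked by numerical quadrature. This sketch integrates against the normal density with Simpson's rule over μ ± 12σ. The truncation width, the step count and the parameter values are assumptions made for the example.

```python
import math

def expected_erf(a, b, mu, sigma, n=8000, width=12.0):
    """Simpson-rule estimate of the integral of erf(a x + b) times the N(mu, sigma^2) density."""
    lo, hi = mu - width * sigma, mu + width * sigma
    h = (hi - lo) / n

    def f(x):
        density = math.exp(-((x - mu) ** 2) / (2 * sigma ** 2)) / math.sqrt(2 * math.pi * sigma ** 2)
        return math.erf(a * x + b) * density

    s = f(lo) + f(hi) + sum((4 if i % 2 else 2) * f(lo + i * h) for i in range(1, n))
    return s * h / 3

def closed_form(a, b, mu, sigma):
    return math.erf((a * mu + b) / math.sqrt(1 + 2 * a * a * sigma * sigma))

print(expected_erf(1.5, -0.3, 0.4, 0.8), closed_form(1.5, -0.3, 0.4, 0.8))
```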

Approximation with elementary functions

Abramowitz and Stegun give several approximations of varying accuracy (equations 7.1.25–28). This allows one to choose the fastest approximation suitable for a given application. In order of increasing accuracy, they are:

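$$\operatorname{erf} x \approx 1 - \frac{1}{\left(1 + a_1x + a_2x^2 + a_3x^3 + a_4x^4\right)^4}, \qquad x \ge 0 \quad (\text{maximum error: } 5\times10^{-4}),$$

where $a_1 = 0.278393$, $a_2 = 0.230389$, $a_3 = 0.000972$, $a_4 = 0.078108$;

$$\operatorname{erf} x \approx 1 - \left(a_1t + a_2t^2 + a_3t^3\right)e^{-x^2}, \quad t = \frac{1}{1 + px}, \qquad x \ge 0 \quad (\text{maximum error: } 2.5\times10^{-5}),$$

where $p = 0.47047$, $a_1 = 0.3480242$, $a_2 = -0.0958798$, $a_3 = 0.7478556$;

$$\operatorname{erf} x \approx 1 - \frac{1}{\left(1 + a_1x + a_2x^2 + \cdots + a_6x^6\right)^{16}}, \qquad x \ge 0 \quad (\text{maximum error: } 3\times10^{-7}),$$

where $a_1 = 0.0705230784$, $a_2 = 0.0422820123$, $a_3 = 0.0092705272$, $a_4 = 0.0001520143$, $a_5 = 0.0002765672$, $a_6 = 0.0000430638$;

$$\operatorname{erf} x \approx 1 - \left(a_1t + a_2t^2 + \cdots + a_5t^5\right)e^{-x^2}, \quad t = \frac{1}{1 + px}, \qquad x \ge 0 \quad (\text{maximum error: } 1.5\times10^{-7}),$$

where $p = 0.3275911$, $a_1 = 0.254829592$, $a_2 = -0.284496736$, $a_3 = 1.421413741$, $a_4 = -1.453152027$, $a_5 = 1.061405429$.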

All of these approximations are valid for x ≥ 0. To use these approximations for negative x, use the fact that erf(x) is an odd function, so erf(x) = −erf(−x).
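The four Abramowitz and Stegun approximations, with the coefficients listed above, can be compared against `math.erf` on a grid. Two of them are rational-power forms, 1 − 1/(1 + a₁x + …)^m. The other two use t = 1/(1 + px), in the form 1 − (a₁t + …)e^{−x²}. In this sketch each one is implemented for x ≥ 0 and extended to negative x through erf(x) = −erf(−x). The dictionary labels are descriptive names invented here.

```python
import math

def _poly_power(x, coeffs, power):
    """1 - 1/(1 + a1 x + a2 x^2 + ...)^power, for x >= 0."""
    d = 1.0 + sum(a * x ** (i + 1) for i, a in enumerate(coeffs))
    return 1.0 - d ** -power

def _t_exp(x, p, coeffs):
    """1 - (a1 t + a2 t^2 + ...) exp(-x^2) with t = 1/(1 + p x), for x >= 0."""
    t = 1.0 / (1.0 + p * x)
    return 1.0 - sum(a * t ** (i + 1) for i, a in enumerate(coeffs)) * math.exp(-x * x)

APPROXIMATIONS = {  # label: (approximation for x >= 0, quoted maximum error)
    "rational^4": (lambda x: _poly_power(x, (0.278393, 0.230389, 0.000972, 0.078108), 4), 5e-4),
    "t, 3 terms": (lambda x: _t_exp(x, 0.47047, (0.3480242, -0.0958798, 0.7478556)), 2.5e-5),
    "rational^16": (lambda x: _poly_power(x, (0.0705230784, 0.0422820123, 0.0092705272,
                                              0.0001520143, 0.0002765672, 0.0000430638), 16), 3e-7),
    "t, 5 terms": (lambda x: _t_exp(x, 0.3275911, (0.254829592, -0.284496736, 1.421413741,
                                                   -1.453152027, 1.061405429)), 1.5e-7),
}

def erf_approx(name, x):
    """Apply an x >= 0 approximation to any real x via erf(-x) = -erf(x)."""
    f, _ = APPROXIMATIONS[name]
    return math.copysign(f(abs(x)), x)

xs = [i / 100 for i in range(-600, 601)]
for name, (_, bound) in APPROXIMATIONS.items():
    worst = max(abs(erf_approx(name, x) - math.erf(x)) for x in xs)
    print(f"{name}: max error {worst:.2e} (quoted {bound:.1e})")
```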

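Another approximation is given by

$$\operatorname{erf} x \approx \operatorname{sgn} x\cdot\sqrt{1 - \exp\!\left(-x^2\,\frac{\frac{4}{\pi} + ax^2}{1 + ax^2}\right)},$$

where

$$a = \frac{8(\pi-3)}{3\pi(4-\pi)} \approx 0.140012.$$

This is designed to be very accurate in a neighborhood of 0 and a neighborhood of infinity, and the error is less than 0.00035 for all real x. Using the alternate value a ≈ 0.147 reduces the maximum error to about 0.00012.

This approximation can also be inverted to calculate the inverse error function:

$$\operatorname{erf}^{-1} x \approx \operatorname{sgn} x\cdot\sqrt{\sqrt{\left(\frac{2}{\pi a} + \frac{\ln(1-x^2)}{2}\right)^2 - \frac{\ln(1-x^2)}{a}} - \left(\frac{2}{\pi a} + \frac{\ln(1-x^2)}{2}\right)}.$$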
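The following sketches the approximation erf x ≈ sgn x · √(1 − exp(−x²(4/π + ax²)/(1 + ax²))) and its closed-form inverse. The inverse is derived algebraically from the forward formula, so composing the two should reproduce the input up to rounding. The error against `math.erf` should stay within a few times 10⁻⁴. The function names are illustrative.

```python
import math

A = 8 * (math.pi - 3) / (3 * math.pi * (4 - math.pi))  # about 0.140012

def erf_winitzki(x, a=A):
    """sgn(x) * sqrt(1 - exp(-x^2 (4/pi + a x^2) / (1 + a x^2)))."""
    x2 = x * x
    return math.copysign(math.sqrt(1 - math.exp(-x2 * (4 / math.pi + a * x2) / (1 + a * x2))), x)

def erfinv_winitzki(y, a=A):
    """Closed-form inverse of the same approximation, for -1 < y < 1."""
    ln = math.log(1 - y * y)
    b = 2 / (math.pi * a) + ln / 2
    return math.copysign(math.sqrt(math.sqrt(b * b - ln / a) - b), y)

print(erf_winitzki(1.0), erf_winitzki(1.0, 0.147), math.erf(1.0))
print(erfinv_winitzki(0.5, 0.147))
```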

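Exponential bounds and a pure exponential approximation for the complementary error function are given by

$$\operatorname{erfc} x \le \tfrac12 e^{-2x^2} + \tfrac12 e^{-x^2} \le e^{-x^2}, \qquad x > 0,$$

$$\operatorname{erfc} x \approx \tfrac16 e^{-x^2} + \tfrac12 e^{-\frac43 x^2}, \qquad x > 0.$$

A single-term lower bound is

$$\operatorname{erfc} x \ge \sqrt{\frac{2e}{\pi}}\,\frac{\sqrt{\beta-1}}{\beta}\,e^{-\beta x^2}, \qquad x \ge 0,\ \beta > 1,$$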

where the parameter β can be picked to minimize error on the desired interval of approximation.
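The bounds can be tabulated against `math.erfc`. The upper bound is ½e^{−2x²} + ½e^{−x²}, and the single-term lower bound is √(2e/π) √(β−1)/β e^{−βx²}. The pure exponential approximation is (1/6)e^{−x²} + ½e^{−4x²/3}. In this sketch β = 2 is an arbitrary choice for the lower bound. The exponential approximation is not a bound and is poor near x = 0, where it gives 2/3 instead of 1.

```python
import math

def erfc_upper(x):
    """Two-term exponential upper bound, valid for x > 0."""
    return 0.5 * math.exp(-2 * x * x) + 0.5 * math.exp(-x * x)

def erfc_lower(x, beta):
    """Single-term lower bound, valid for x >= 0 and beta > 1."""
    return math.sqrt(2 * math.e / math.pi) * math.sqrt(beta - 1) / beta * math.exp(-beta * x * x)

def erfc_pure_exp(x):
    """Pure exponential approximation (not a bound), x > 0."""
    return math.exp(-x * x) / 6 + 0.5 * math.exp(-4 * x * x / 3)

for x in (0.25, 1.0, 2.0, 3.0):
    print(x, erfc_lower(x, 2.0), math.erfc(x), erfc_upper(x), erfc_pure_exp(x))
```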

Numerical approximations

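Over the complete range of values, there is an approximation with a maximal error of $1.2\times10^{-7}$ for any real argument:

$$\operatorname{erf} x = \begin{cases}1 - \tau & x \ge 0\\ \tau - 1 & x < 0\end{cases}$$

with

$$\tau = t\cdot\exp\!\left(-x^2 - 1.26551223 + 1.00002368t + 0.37409196t^2 + 0.09678418t^3 - 0.18628806t^4 + 0.27886807t^5 - 1.13520398t^6 + 1.48851587t^7 - 0.82215223t^8 + 0.17087277t^9\right)$$

and

$$t = \frac{1}{1 + \frac12|x|}.$$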
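The τ approximation, erf x = 1 − τ for x ≥ 0 and τ − 1 for x < 0, translates directly into code. Here τ = t·exp(−x² + polynomial in t) with t = 1/(1 + |x|/2), and the polynomial is evaluated by Horner's rule. The sketch also exposes τ as an erfc approximation for x ≥ 0, which avoids the cancellation in 1 − erf x for large x. The names `TAU_COEFFS`, `erf_tau` and `erfc_tau` are illustrative.

```python
import math

TAU_COEFFS = (-1.26551223, 1.00002368, 0.37409196, 0.09678418, -0.18628806,
              0.27886807, -1.13520398, 1.48851587, -0.82215223, 0.17087277)

def _tau(x):
    """tau = t * exp(-x^2 + c0 + c1 t + ... + c9 t^9) with t = 1 / (1 + |x|/2)."""
    t = 1.0 / (1.0 + 0.5 * abs(x))
    poly = 0.0
    for c in reversed(TAU_COEFFS):  # Horner's rule
        poly = poly * t + c
    return t * math.exp(-x * x + poly)

def erf_tau(x):
    """erf(x) = 1 - tau for x >= 0 and tau - 1 for x < 0."""
    tau = _tau(x)
    return 1.0 - tau if x >= 0 else tau - 1.0

def erfc_tau(x):
    """Matching erfc: tau for x >= 0 (no cancellation), 2 - tau for x < 0."""
    tau = _tau(x)
    return tau if x >= 0 else 2.0 - tau

print(erf_tau(0.5), math.erf(0.5))
print(erfc_tau(6.0), math.erfc(6.0))
```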

Also, over the complete range of values, the following simple approximation holds for

Applications

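When the results of a series of measurements are described by a normal distribution with standard deviation $\sigma$ and expected value 0, $\operatorname{erf}\left(\frac{a}{\sigma\sqrt2}\right)$ is the probability that the error of a single measurement lies between $-a$ and $+a$, for positive $a$.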
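For example, the probability that a normally distributed measurement error lies within ±a is erf(a/(σ√2)). Setting a = σ, 2σ and 3σ gives the probabilities of landing within one, two or three standard deviations. The sketch uses Python's `math.erf` and a hypothetical helper `prob_within`.

```python
import math

def prob_within(a, sigma):
    """P(|error| <= a) for an error ~ N(0, sigma^2)."""
    return math.erf(a / (sigma * math.sqrt(2.0)))

for k in (1, 2, 3):
    print(f"within {k} sigma: {prob_within(k, 1.0):.6f}")
# within 1 sigma: 0.682689
# within 2 sigma: 0.954500
# within 3 sigma: 0.997300
```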

The error and complementary error functions occur, for example, in solutions of the heat equation when boundary conditions are given by the Heaviside step function.
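As a concrete instance (an illustration chosen here, not taken from the text above), u(x, t) = ½ erfc(x / (2√(Dt))) solves the one-dimensional heat equation u_t = D u_xx for t > 0. As t → 0⁺ it approaches the step initial condition u(x, 0) = 1 for x < 0 and 0 for x > 0. The sketch checks the PDE residual with central finite differences. The function names, diffusivity values and step sizes are arbitrary.

```python
import math

def step_solution(x, t, diffusivity=1.0):
    """u(x, t) = (1/2) erfc(x / (2 sqrt(D t))) for t > 0."""
    return 0.5 * math.erfc(x / (2.0 * math.sqrt(diffusivity * t)))

def pde_residual(x, t, diffusivity=1.0, h=1e-3, dt=1e-5):
    """Central-difference estimate of u_t - D u_xx; should be close to zero."""
    def u(xx, tt):
        return step_solution(xx, tt, diffusivity)
    u_t = (u(x, t + dt) - u(x, t - dt)) / (2 * dt)
    u_xx = (u(x + h, t) - 2 * u(x, t) + u(x - h, t)) / (h * h)
    return u_t - diffusivity * u_xx

print(pde_residual(0.3, 0.5), step_solution(-1.0, 1e-6), step_solution(1.0, 1e-6))
```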

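The error function and its approximations can be used to estimate results that hold with high probability. Consider a random variable $X \sim \operatorname{Norm}[\mu, \sigma]$ (a normal distribution with mean $\mu$ and standard deviation $\sigma$) and a constant $L < \mu$. Integration by substitution shows that

$$\Pr[X \le L] = \frac12 + \frac12\operatorname{erf}\!\left(\frac{L-\mu}{\sqrt2\,\sigma}\right) \approx A\exp\!\left(-B\left(\frac{L-\mu}{\sigma}\right)^2\right),$$

where A and B are certain numeric constants. If L is sufficiently far from the mean, specifically $\mu - L \ge \sigma\sqrt{\ln k}$, then

$$\Pr[X \le L] \le A\exp(-B\ln k) = \frac{A}{k^B},$$

so the probability goes to 0 as $k \to \infty$.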
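The constants A and B in this tail estimate are left unspecified. As an assumption of this sketch, A = B = ½ is one valid choice when the estimate is read as an upper bound. The reason is that Pr[X ≤ L] = ½ erfc((μ − L)/(√2σ)) and erfc x ≤ e^{−x²} for x ≥ 0. Together these give Pr[X ≤ L] ≤ ½ exp(−((L − μ)/σ)²/2), which becomes ½ k^{−1/2} at μ − L = σ√(ln k). The sketch cross-checks the probability against `statistics.NormalDist`.

```python
import math
from statistics import NormalDist

def prob_at_most(L, mu, sigma):
    """Pr[X <= L] for X ~ N(mu, sigma^2), written with erfc to avoid cancellation far below mu."""
    return 0.5 * math.erfc((mu - L) / (math.sqrt(2.0) * sigma))

def tail_bound(L, mu, sigma):
    """Upper bound for L < mu using the illustrative choice A = B = 1/2."""
    return 0.5 * math.exp(-0.5 * ((L - mu) / sigma) ** 2)

mu, sigma = 10.0, 2.0
for k in (10, 100, 1000):
    L = mu - sigma * math.sqrt(math.log(k))  # the threshold mu - L = sigma sqrt(ln k)
    print(k, prob_at_most(L, mu, sigma), tail_bound(L, mu, sigma), 0.5 / math.sqrt(k))
```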

Related functions

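The error function is essentially identical to the standard normal cumulative distribution function, denoted Φ and named norm(x) in some software, because the two differ only by scaling and translation. Indeed,

$$\Phi(x) = \frac{1}{\sqrt{2\pi}}\int_{-\infty}^x e^{-t^2/2}\,\mathrm dt = \frac12\left(1 + \operatorname{erf}\frac{x}{\sqrt2}\right) = \frac12\operatorname{erfc}\!\left(-\frac{x}{\sqrt2}\right),$$

or rearranged for erf and erfc:

$$\operatorname{erf} x = 2\Phi\!\left(x\sqrt2\right) - 1, \qquad \operatorname{erfc} x = 2\Phi\!\left(-x\sqrt2\right) = 2\left(1 - \Phi\!\left(x\sqrt2\right)\right).$$

Consequently, the error function is also closely related to the Q-function, which is the tail probability of the standard normal distribution. The Q-function can be expressed in terms of the error function as

$$Q(x) = \frac12 - \frac12\operatorname{erf}\frac{x}{\sqrt2} = \frac12\operatorname{erfc}\frac{x}{\sqrt2}.$$

The inverse of $\Phi$ is known as the normal quantile function, or probit function. It may be expressed in terms of the inverse error function as

$$\operatorname{probit}(p) = \Phi^{-1}(p) = \sqrt2\operatorname{erf}^{-1}(2p-1) = -\sqrt2\operatorname{erfc}^{-1}(2p).$$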

The standard normal cdf is used more often in probability and statistics, and the error function is used more often in other branches of mathematics.
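These relations can be checked against Python's `statistics.NormalDist`, whose `cdf` and `inv_cdf` methods give Φ and the probit function. The checks cover Φ(x) = ½(1 + erf(x/√2)), Q(x) = ½ erfc(x/√2) and probit(p) = √2 erf⁻¹(2p − 1). The standard library has no inverse error function, so this sketch checks the probit identity in the forward direction, erf(probit(p)/√2) = 2p − 1. The helper names `phi` and `q_func` are illustrative.

```python
import math
from statistics import NormalDist

SQRT2 = math.sqrt(2.0)

def phi(x):
    """Standard normal CDF via erf."""
    return 0.5 + 0.5 * math.erf(x / SQRT2)

def q_func(x):
    """Tail probability Q(x) = 1 - Phi(x), via erfc to avoid cancellation."""
    return 0.5 * math.erfc(x / SQRT2)

std = NormalDist()  # mean 0, standard deviation 1
for x in (-2.0, 0.0, 1.5):
    print(x, phi(x), std.cdf(x), q_func(x))
for p in (0.025, 0.5, 0.9):
    print(p, math.erf(std.inv_cdf(p) / SQRT2), 2 * p - 1)
```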

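The error function is a special case of the Mittag-Leffler function, and can also be expressed as a confluent hypergeometric function (Kummer's function):

$$\operatorname{erf} x = \frac{2x}{\sqrt\pi}M\!\left(\tfrac12, \tfrac32, -x^2\right) = \frac{2x}{\sqrt\pi}e^{-x^2}M\!\left(1, \tfrac32, x^2\right).$$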
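The following sketches the Kummer-function representation erf x = (2x/√π) M(½, 3/2, −x²) = (2x/√π) e^{−x²} M(1, 3/2, x²). It sums the power series M(a, b, z) = Σ (a)ₙ/(b)ₙ zⁿ/n! directly. The second form has all series terms positive for real x, which avoids the cancellation of the first. The helper names and tolerance are illustrative.

```python
import math

def kummer_m(a, b, z, tol=1e-17, max_terms=1000):
    """Kummer's function M(a, b, z) from its power series."""
    term, total = 1.0, 1.0
    for n in range(max_terms):
        term *= (a + n) / (b + n) * z / (n + 1)
        total += term
        if abs(term) <= tol * abs(total):
            break
    return total

def erf_kummer(x):
    """erf(x) = 2x/sqrt(pi) * exp(-x^2) * M(1, 3/2, x^2)."""
    return 2 * x / math.sqrt(math.pi) * math.exp(-x * x) * kummer_m(1.0, 1.5, x * x)

print(erf_kummer(1.0), 2 / math.sqrt(math.pi) * kummer_m(0.5, 1.5, -1.0), math.erf(1.0))
```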

It has a simple expression in terms of the Fresnel integral.

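In terms of the regularized Gamma function P and the incomplete gamma function,

$$\operatorname{erf} x = \operatorname{sgn} x\cdot P\!\left(\tfrac12, x^2\right) = \frac{\operatorname{sgn} x}{\sqrt\pi}\,\gamma\!\left(\tfrac12, x^2\right).$$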

Generalized error functions

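Some authors discuss the more general functions:

$$E_n(x) = \frac{n!}{\sqrt\pi}\int_0^x e^{-t^n}\,\mathrm dt = \frac{n!}{\sqrt\pi}\sum_{p=0}^\infty(-1)^p\frac{x^{np+1}}{(np+1)\,p!}.$$

Notable cases are:

- $E_0(x)$ is a straight line through the origin: $E_0(x) = \dfrac{x}{e\sqrt\pi}$.
- $E_2(x)$ is the error function, $\operatorname{erf} x$.

After division by n!, all the $E_n$ for odd n look similar (but not identical) to each other. Similarly, the $E_n$ for even n look similar (but not identical) to each other after a simple division by n!. All generalised error functions for n > 0 look similar on the positive x side of the graph.

These generalised functions can equivalently be expressed for x > 0 using the Gamma function and incomplete Gamma function:

$$E_n(x) = \frac{1}{\sqrt\pi}\,\Gamma(n)\left(\Gamma\!\left(\tfrac1n\right) - \Gamma\!\left(\tfrac1n, x^n\right)\right), \qquad x > 0.$$

Therefore, we can define the error function in terms of the incomplete Gamma function:

$$\operatorname{erf} x = 1 - \frac{1}{\sqrt\pi}\,\Gamma\!\left(\tfrac12, x^2\right), \qquad x \ge 0.$$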
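The generalized functions E_n(x) = (n!/√π) ∫₀ˣ e^{−tⁿ} dt can be evaluated by numerical quadrature of their defining integral. This sketch uses a composite Simpson's rule with an arbitrary step count. It checks the cases E₀(x) = x/(e√π), E₁(x) = (1 − e^{−x})/√π and E₂ = erf. The function name `gen_erf` is illustrative.

```python
import math

def gen_erf(n, x, steps=2000):
    """E_n(x) = n!/sqrt(pi) * integral_0^x exp(-t^n) dt via composite Simpson's rule."""
    def f(t):
        return math.exp(-(t ** n))
    h = x / steps
    s = f(0.0) + f(x) + sum((4 if i % 2 else 2) * f(i * h) for i in range(1, steps))
    return math.factorial(n) / math.sqrt(math.pi) * s * h / 3

for n in (0, 1, 2, 3):
    print(n, gen_erf(n, 1.3) / math.factorial(n))
```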

Iterated integrals of the complementary error function

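The iterated integrals of the complementary error function are defined by

$$\operatorname{i}^n\!\operatorname{erfc} z = \int_z^\infty \operatorname{i}^{n-1}\!\operatorname{erfc}\zeta\,\mathrm d\zeta,$$

with $\operatorname{i}^0\!\operatorname{erfc} z = \operatorname{erfc} z$, $\operatorname{i}^1\!\operatorname{erfc} z = \operatorname{ierfc} z = \frac{1}{\sqrt\pi}e^{-z^2} - z\operatorname{erfc} z$, and $\operatorname{i}^2\!\operatorname{erfc} z = \frac14\left(\operatorname{erfc} z - 2z\operatorname{ierfc} z\right)$.

They have the power series

$$\operatorname{i}^n\!\operatorname{erfc} z = \sum_{j=0}^\infty\frac{(-z)^j}{2^{n-j}\,j!\,\Gamma\!\left(1+\frac{n-j}{2}\right)},$$

from which follow the symmetry properties

$$\operatorname{i}^{2m}\!\operatorname{erfc}(-z) = -\operatorname{i}^{2m}\!\operatorname{erfc} z + \sum_{q=0}^m\frac{z^{2q}}{2^{2(m-q)-1}\,(2q)!\,(m-q)!}$$

and

$$\operatorname{i}^{2m+1}\!\operatorname{erfc}(-z) = \operatorname{i}^{2m+1}\!\operatorname{erfc} z + \sum_{q=0}^m\frac{z^{2q+1}}{2^{2(m-q)-1}\,(2q+1)!\,(m-q)!}.$$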
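The following sketch compares two ways of computing iⁿ erfc z. One is the power series Σ_j (−z)^j / (2^{n−j} j! Γ(1 + (n−j)/2)), where 1/Γ is taken as 0 at its poles. The other is the standard recurrence 2n iⁿ erfc z = i^{n−2} erfc z − 2z i^{n−1} erfc z, which this article does not state. Started from i^{−1} erfc z = (2/√π)e^{−z²} and i⁰ erfc z = erfc z, the recurrence gives ierfc z = e^{−z²}/√π − z erfc z for n = 1. Forward recurrence is adequate for the small n and moderate z used here but can lose accuracy for large z. The function names are illustrative.

```python
import math

def inverfc_recurrence(n, z):
    """i^n erfc(z) by forward recurrence from i^{-1} erfc and i^0 erfc."""
    prev, cur = 2.0 / math.sqrt(math.pi) * math.exp(-z * z), math.erfc(z)
    for k in range(1, n + 1):
        prev, cur = cur, (prev - 2.0 * z * cur) / (2.0 * k)
    return cur

def inverfc_series(n, z, terms=80):
    """i^n erfc(z) from its power series; terms with 1/Gamma = 0 are skipped."""
    total = 0.0
    for j in range(terms):
        arg = 1.0 + (n - j) / 2.0
        if arg <= 0 and arg == int(arg):
            continue  # Gamma has a pole here, so 1/Gamma vanishes
        total += (-z) ** j / (2.0 ** (n - j) * math.factorial(j) * math.gamma(arg))
    return total

for n in range(4):
    print(n, inverfc_recurrence(n, 0.5), inverfc_series(n, 0.5))
```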

Implementations

- C: double erf(double x) and double erfc(double x) are provided in the header math.h. The pairs of functions {erff(), erfcf()} and {erfl(), erfcl()} take and return values of type float and long double respectively. For complex double arguments, the function names cerf and cerfc are "reserved for future use"; the missing implementation is provided by the open-source project libcerf, which is based on the Faddeeva package.
- C++: erf() and erfc() are provided in the header cmath. Both functions are overloaded to accept arguments of type float, double, and long double. For complex<double>, the Faddeeva package provides a C++ complex<double> implementation.
- … provides the erf and erfc functions; however, neither inverse function is in the current library.
- Fortran: the ERF, ERFC and ERFC_SCALED functions calculate the error function and its complement for real arguments. Fortran 77 implementations are available in SLATEC.
- Go: provides math.Erf() and math.Erfc() for float64 arguments.
- … erf and erfc for real and complex arguments. Also has erfi for calculating …
- Python: math.erf() and math.erfc() for real arguments. For previous versions or for complex arguments, SciPy includes implementations of erf, erfc, erfi, and related functions for complex arguments in scipy.special. A complex-argument erf is also in the arbitrary-precision arithmetic mpmath library as mpmath.erf().
- R: … (see ?pnorm), which is based on W. J. Cody's rational Chebyshev approximation algorithm.
- Ruby: provides Math.erf() and Math.erfc() for real arguments.