
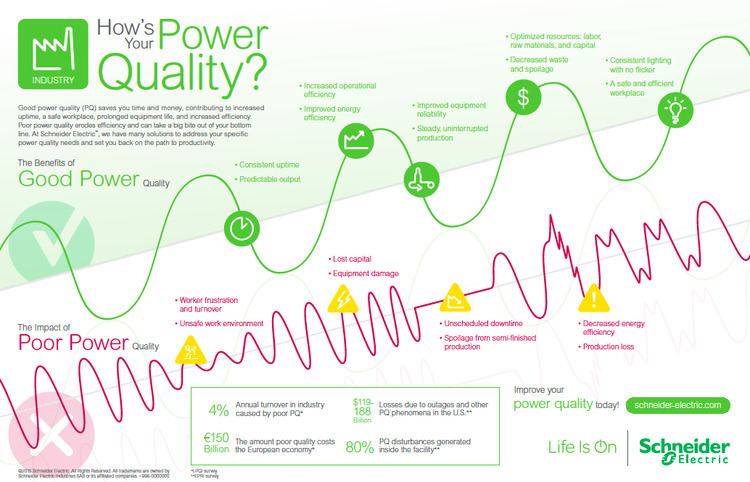

Electric power quality, or simply power quality, involves voltage, frequency, and waveform. Good power quality can be defined as a steady supply voltage that stays within the prescribed range, a steady AC frequency close to the rated value, and a smooth voltage waveform that resembles a sine wave. In general, it is useful to consider power quality as the compatibility between what comes out of an electric outlet and the load that is plugged into it. The term describes both the electric power that drives an electrical load and the load's ability to function properly. Without the proper power, an electrical device (or load) may malfunction, fail prematurely or not operate at all. There are many ways in which electric power can be of poor quality, and many more causes of such poor-quality power.

Contents

- Introduction

- Power Quality Deviations

- Voltage

- Frequency

- Waveform

- Power conditioning

- Smart grids and power quality

- Power quality compression algorithm

- Power quality challenges

- Raw data compression

- Aggregated data compression

- References

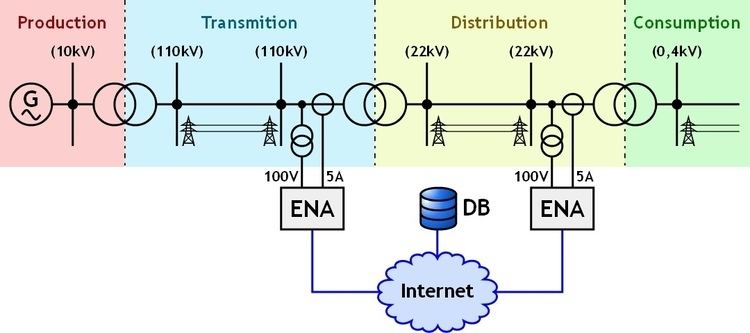

The electric power industry comprises electricity generation (AC power), electric power transmission and ultimately electric power distribution to an electricity meter located at the premises of the end user of the electric power. The electricity then moves through the wiring system of the end user until it reaches the load. The complexity of the system that moves electric energy from the point of production to the point of consumption, combined with variations in weather, generation, demand and other factors, provides many opportunities for the quality of supply to be compromised.

While "power quality" is a convenient term for many, it is the quality of the voltage, rather than of power or electric current, that the term actually describes. Power is simply the flow of energy, and the current demanded by a load is largely uncontrollable.

Introduction

The quality of electrical power may be described by the values of a set of parameters.

It is often useful to think of power quality as a compatibility problem: is the equipment connected to the grid compatible with the events on the grid, and is the power delivered by the grid, including the events, compatible with the equipment that is connected? Compatibility problems always have at least two solutions: in this case, either clean up the power, or make the equipment tougher.

The tolerance of data-processing equipment to voltage variations is often characterized by the CBEMA curve, which gives the duration and magnitude of voltage variations that can be tolerated.

Ideally, AC voltage is supplied by a utility as a sinusoid with an amplitude and frequency given by national standards (in the case of mains) or system specifications (in the case of a power feed not directly attached to the mains), and with an impedance of zero ohms at all frequencies.

Power Quality Deviations

No real-life power source is ideal; in general, a source can deviate in at least the following ways:

Voltage

Frequency

Waveform

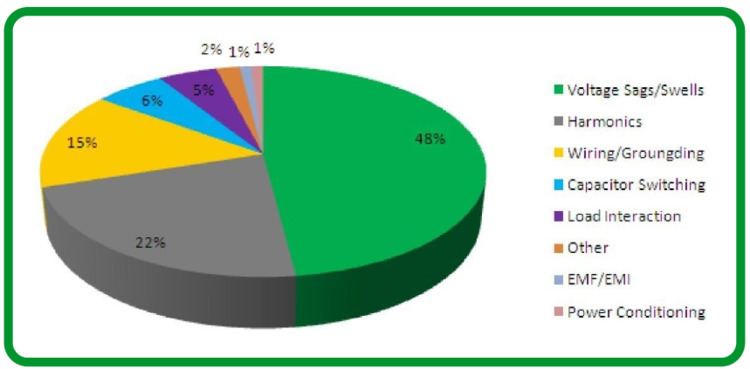

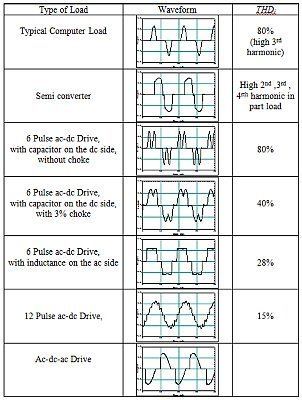

Each of these power quality problems has a different cause. Some problems are a result of the shared infrastructure. For example, a fault on the network may cause a dip that will affect some customers; the higher the level of the fault, the greater the number affected. A problem on one customer’s site may cause a transient that affects all other customers on the same subsystem. Other problems, such as harmonics, arise within the customer’s own installation and may propagate onto the network and affect other customers. Harmonic problems can be dealt with by a combination of good design practice and well-proven reduction equipment.
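Harmonic content of the kind described above is commonly quantified as total harmonic distortion (THD): the RMS sum of the harmonic amplitudes divided by the amplitude of the fundamental. The following is a minimal sketch of that calculation, assuming the input holds exactly one cycle of evenly spaced samples. The function names, the 64-sample cycle and the limit of 15 harmonics are illustrative choices, not taken from any standard or instrument.

```python
import math


def harmonic_amplitude(samples, harmonic):
    """Amplitude of one harmonic, from a single-bin DFT over exactly one cycle."""
    n = len(samples)
    re = sum(s * math.cos(2 * math.pi * harmonic * k / n) for k, s in enumerate(samples))
    im = sum(s * math.sin(2 * math.pi * harmonic * k / n) for k, s in enumerate(samples))
    return 2 * math.hypot(re, im) / n


def total_harmonic_distortion(samples, max_harmonic=15):
    """THD as a ratio: RMS sum of harmonics 2..max_harmonic over the fundamental."""
    fundamental = harmonic_amplitude(samples, 1)
    distortion = math.sqrt(
        sum(harmonic_amplitude(samples, h) ** 2 for h in range(2, max_harmonic + 1))
    )
    return distortion / fundamental


N = 64  # samples per cycle (assumed for the example)
clean = [math.sin(2 * math.pi * k / N) for k in range(N)]
# Same waveform with a 5th harmonic at 10% of the fundamental amplitude.
distorted = [
    math.sin(2 * math.pi * k / N) + 0.1 * math.sin(2 * math.pi * 5 * k / N)
    for k in range(N)
]

thd_clean = total_harmonic_distortion(clean)
thd_distorted = total_harmonic_distortion(distorted)
print(f"{thd_clean:.3f}")      # 0.000
print(f"{thd_distorted:.3f}")  # 0.100
```

A real meter would also have to handle windows that do not span an exact number of cycles, which this sketch ignores.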

Power conditioning

Power conditioning is modifying the power to improve its quality.

An uninterruptible power supply (UPS) can be used to switch off mains power if there is a transient (temporary) condition on the line. However, cheaper UPS units create poor-quality power themselves, akin to imposing a higher-frequency, lower-amplitude square wave atop the sine wave. High-quality UPS units use a double-conversion topology, which converts incoming AC power into DC, charges the batteries, then regenerates an AC sine wave. This regenerated sine wave is of higher quality than the original AC power feed.

A surge protector or simple capacitor or varistor can protect against most overvoltage conditions, while a lightning arrester protects against severe spikes.

Electronic filters can remove harmonics.

Smart grids and power quality

Modern systems use sensors called phasor measurement units (PMUs) distributed throughout the network to monitor power quality and, in some cases, respond automatically to disturbances. Smart-grid features such as rapid sensing and automated self-healing of network anomalies promise higher-quality power and less downtime while also supporting intermittent power sources and distributed generation, which would degrade power quality if left unchecked.

Power quality compression algorithm

A power quality compression algorithm is an algorithm used in the analysis of power quality. To provide high-quality electric power service, it is essential to monitor the quality of the electric signals, also termed power quality (PQ), at different locations along an electrical power network. Electrical utilities constantly monitor waveforms and currents at various network locations to understand what led up to unforeseen events such as power outages and blackouts. This is particularly critical at sites where the environment and public safety are at risk (institutions such as hospitals, sewage treatment plants, mines, etc.).

Power quality challenges

Engineers have at their disposal many meters that can read and display electrical power waveforms and calculate parameters of those waveforms. These parameters may include, for example, RMS current and voltage, the phase relationship between waveforms of a multi-phase signal, power factor, frequency, total harmonic distortion (THD), active power (kW), reactive power (kVAr), apparent power (kVA), active energy (kWh), reactive energy (kVArh), apparent energy (kVAh) and many more. To monitor unforeseen events adequately, Ribeiro et al. explain that it is not enough to display these parameters; voltage waveform data must also be captured at all times. This is impracticable because of the large amount of data involved, causing what is known as the “bottle effect”. For instance, at a sampling rate of 32 samples per cycle on a 60 Hz system, 1,920 samples are collected per second. For three-phase meters that measure both voltage and current waveforms, the data volume is 6 to 8 times as large. More practical solutions developed in recent years store data only when an event occurs (for example, when high levels of power system harmonics are detected) or store only the RMS value of the electrical signals. This data, however, is not always sufficient to determine the exact nature of problems.
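The arithmetic behind these figures can be checked with a short sketch. It assumes a 60 Hz line frequency, which is what makes 32 samples per cycle equal 1,920 samples per second, and a hypothetical 2 bytes per sample. The function name and the six-channel case (three voltages plus three currents) are illustrative.

```python
SECONDS_PER_DAY = 86_400


def waveform_data_rate(samples_per_cycle, line_frequency_hz, channels=1, bytes_per_sample=2):
    """Return (samples per second, bytes per day) for continuous waveform capture.

    bytes_per_sample=2 is an assumed 16-bit sample width, not a value from the text.
    """
    samples_per_second = samples_per_cycle * line_frequency_hz * channels
    bytes_per_day = samples_per_second * bytes_per_sample * SECONDS_PER_DAY
    return samples_per_second, bytes_per_day


single_channel = waveform_data_rate(32, 60)
three_phase = waveform_data_rate(32, 60, channels=6)  # 3 voltages + 3 currents

print(single_channel)  # (1920, 331776000)
print(three_phase)     # (11520, 1990656000)
```

Under these assumptions a six-channel meter produces roughly 2 GB of uncompressed waveform data per day, which illustrates why continuous raw storage is described as impracticable.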

Raw data compression

Nisenblat et al. propose a power quality compression algorithm (similar to lossy compression methods) that enables meters to continuously store the waveform of one or more power signals, regardless of whether an event of interest has been identified. This algorithm, referred to as PQZip, makes the memory available to a processor sufficient to store the waveform, under normal power conditions, over a long period of at least a month, two months, or even a year. The compression is performed in real time, as the signals are acquired; it calculates a compression decision before all the compressed data is received. For instance, should one parameter remain constant while various others fluctuate, the compression decision retains only what is relevant from the constant data and retains all the fluctuation data. It then decomposes the waveform of the power signal into numerous components over various periods of the waveform. It concludes the process by separately compressing the values of at least some of these components over different periods. This real-time compression algorithm, performed independently of the sampling, prevents data gaps and has a typical compression ratio of 1000:1.
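The general idea of decomposing each period into components and keeping only what changes can be illustrated with a toy example. This is not PQZip, whose details are not given here. It is a hypothetical sketch that assumes each cycle is split into harmonic coefficients and that a coefficient is stored only when it moves by more than a chosen tolerance. All names, the tolerance and the test signal are assumptions made for illustration.

```python
import math


def cycle_components(cycle, max_harmonic):
    """Cosine and sine coefficients of harmonics 1..max_harmonic for one cycle."""
    n = len(cycle)
    comps = []
    for h in range(1, max_harmonic + 1):
        a = 2 / n * sum(s * math.cos(2 * math.pi * h * k / n) for k, s in enumerate(cycle))
        b = 2 / n * sum(s * math.sin(2 * math.pi * h * k / n) for k, s in enumerate(cycle))
        comps.append((a, b))
    return comps


def compress(cycles, max_harmonic=7, tolerance=0.01):
    """Keep a (cycle, harmonic, a, b) record only when a component has changed."""
    last = {}
    records = []
    for i, cycle in enumerate(cycles):
        for h, (a, b) in enumerate(cycle_components(cycle, max_harmonic), start=1):
            prev = last.get(h)
            if prev is None or math.hypot(a - prev[0], b - prev[1]) > tolerance:
                records.append((i, h, a, b))
                last[h] = (a, b)
    return records


def reconstruct(records, cycle_index, n):
    """Rebuild one cycle from the most recent stored value of each component."""
    state = {}
    for i, h, a, b in records:  # records are ordered by cycle index
        if i > cycle_index:
            break
        state[h] = (a, b)
    return [
        sum(a * math.cos(2 * math.pi * h * k / n) + b * math.sin(2 * math.pi * h * k / n)
            for h, (a, b) in state.items())
        for k in range(n)
    ]


N = 32  # samples per cycle
# 100 cycles: a steady sine, with a 5th harmonic appearing from cycle 50 onward.
cycles = [
    [math.sin(2 * math.pi * k / N)
     + (0.2 * math.sin(2 * math.pi * 5 * k / N) if i >= 50 else 0.0)
     for k in range(N)]
    for i in range(100)
]

records = compress(cycles)
print(len(records), "records for", len(cycles) * N, "samples")
```

In this toy signal the steady fundamental is stored once and the only later record marks the cycle where the 5th harmonic appears. Real waveforms contain noise and frequency drift, so the compression ratio achieved by such a scheme depends heavily on the tolerance and on the signal.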

Aggregated data compression

A typical function of a power analyzer is to generate a data archive aggregated over a given interval. Most typically, a 10-minute or 1-minute interval is used, as specified by the IEC/IEEE PQ standards. Such an instrument creates archives of significant size during operation. As Kraus et al. have demonstrated, the compression ratio achieved on such archives with the Lempel–Ziv–Markov chain algorithm, bzip or other similar lossless compression algorithms can be significant. Applying prediction and modeling to the time series stored in the actual power quality archive usually improves the efficiency of post-processing compression further. This combination of simple techniques implies savings in both data storage and data acquisition processes.
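As a rough illustration of combining prediction with lossless compression, the sketch below applies LZMA from Python's standard library to a synthetic archive, once directly and once after delta encoding. Delta encoding is a very simple predictor in which each value is predicted by its predecessor. It is not the method of Kraus et al. The synthetic 10-minute voltage series, the integer scaling and the function names are all assumptions.

```python
import lzma
import math
import struct


def pack(values):
    """Serialize integers as little-endian 32-bit values."""
    return struct.pack(f"<{len(values)}i", *values)


def unpack(blob):
    return list(struct.unpack(f"<{len(blob) // 4}i", blob))


def delta_encode(values):
    """Keep the first value, then store each value's difference from the previous one."""
    return [values[0]] + [b - a for a, b in zip(values, values[1:])]


def delta_decode(deltas):
    out, total = [], 0
    for d in deltas:
        total += d
        out.append(total)
    return out


# Synthetic 30-day archive of 10-minute RMS voltage (144 values per day),
# stored in hundredths of a volt around a nominal 230 V.
archive = [round(23000 + 300 * math.sin(2 * math.pi * k / 144)) for k in range(30 * 144)]

raw = pack(archive)
plain = lzma.compress(raw)
predicted = lzma.compress(pack(delta_encode(archive)))

# The whole pipeline is lossless: decoding returns the original archive.
restored = delta_decode(unpack(lzma.decompress(predicted)))

print(len(raw), len(plain), len(predicted))
```

Comparing the three printed sizes shows the effect of each step on this particular series. Whether the prediction step helps, and by how much, depends on the data, so measured archives should be tested rather than assumed to behave like this synthetic one.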