
Database normalization, or simply normalization, is the process of organizing the columns (attributes) and tables (relations) of a relational database to reduce data redundancy and improve data integrity.

Contents

- Objectives

- Free the database of modification anomalies

- Minimize redesign when extending the database structure

- Example

- List of Normal Forms

- References

Normalization involves arranging attributes in tables based on the dependencies between those attributes, and ensuring that the dependencies are properly enforced by database integrity constraints. It is accomplished by applying formal rules, through a process of either synthesis or decomposition. Synthesis creates a normalized database design from a known set of dependencies. Decomposition takes an existing (insufficiently normalized) database design and improves it based on the known set of dependencies.
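As a rough illustration of decomposition, the following Python sketch checks an assumed functional dependency and splits one table into two projections. The rows, the column layout, and the helper names `holds` and `project` are hypothetical and chosen only for this example; real design tools work from a declared set of dependencies rather than from sample data.

```python
# Hypothetical table: (employee, department, location).
# Assumed dependency for this sketch: department -> location.
rows = [
    ("Ann", "Sales", "Denver"),
    ("Bob", "Sales", "Denver"),
    ("Cho", "Support", "Austin"),
]

def holds(rows, lhs, rhs):
    """Return True if the dependency lhs -> rhs holds in the given rows.

    lhs and rhs are tuples of column indexes.
    """
    seen = {}
    for row in rows:
        key = tuple(row[i] for i in lhs)
        value = tuple(row[i] for i in rhs)
        if seen.setdefault(key, value) != value:
            return False
    return True

def project(rows, cols):
    """Project rows onto the given columns, dropping duplicate tuples."""
    result = []
    for row in rows:
        projected = tuple(row[i] for i in cols)
        if projected not in result:
            result.append(projected)
    return result

# Decompose on department -> location.
dependency_holds = holds(rows, (1,), (2,))
employee_dept = project(rows, (0, 1))   # (employee, department)
dept_location = project(rows, (1, 2))   # (department, location)

# Joining the two projections on department recovers the original rows.
rejoined = [
    (emp, dept, loc)
    for (emp, dept) in employee_dept
    for (dept2, loc) in dept_location
    if dept == dept2
]
```

In this sketch each department's location is stored once in `dept_location`, and the join shows that no information was lost by the split.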

Edgar F. Codd, the inventor of the relational model (RM), introduced the concept of normalization and what is now known as the first normal form (1NF) in 1970. Codd went on to define the second normal form (2NF) and third normal form (3NF) in 1971, and Codd and Raymond F. Boyce defined the Boyce-Codd normal form (BCNF) in 1974. Informally, a relational database table is often described as "normalized" if it meets third normal form. Most 3NF tables are free of insertion, update, and deletion anomalies.

Objectives

A basic objective of the first normal form defined by Codd in 1970 was to permit data to be queried and manipulated using a "universal data sub-language" grounded in first-order logic. (SQL is an example of such a data sub-language, albeit one that Codd regarded as seriously flawed.)

The objectives of normalization beyond 1NF (First Normal Form) were stated as follows by Codd:

- To free the collection of relations from undesirable insertion, update and deletion dependencies;

- To reduce the need for restructuring the collection of relations, as new types of data are introduced, and thus increase the life span of application programs;

- To make the relational model more informative to users;

- To make the collection of relations neutral to the query statistics, where these statistics are liable to change as time goes by.

The sections below give details of each of these objectives.

Free the database of modification anomalies

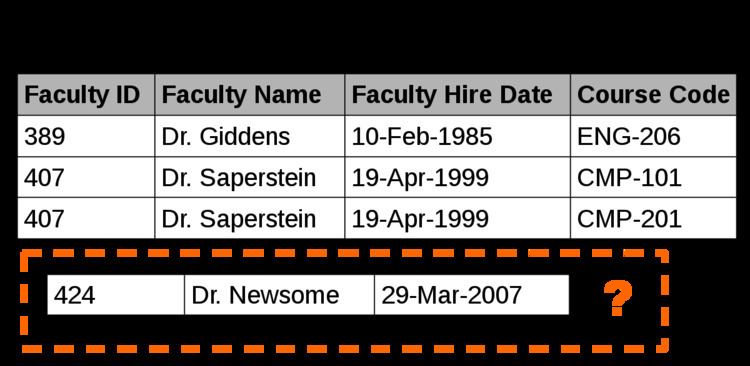

When an attempt is made to modify (update, insert into, or delete from) a table, undesired side effects may arise if the table has not been sufficiently normalized. An insufficiently normalized table might be subject to one or more of the following: update anomalies, insertion anomalies, and deletion anomalies.
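A minimal Python sketch of one such side effect, an update anomaly, is given here. The table layout and all values are hypothetical: the address of an employee is repeated on every row that records one of that employee's skills, so an update that misses a row leaves the data inconsistent.

```python
# Hypothetical, insufficiently normalized table:
# one row per (employee_id, skill), with the address repeated on each row.
employee_skills = [
    {"employee_id": 1, "address": "1 Old Street", "skill": "typing"},
    {"employee_id": 1, "address": "1 Old Street", "skill": "filing"},
    {"employee_id": 2, "address": "7 Side Lane", "skill": "typing"},
]

def addresses_for(table, employee_id):
    """Return the set of distinct addresses recorded for one employee."""
    return {row["address"] for row in table if row["employee_id"] == employee_id}

# A partial update: only the first matching row is changed.
for row in employee_skills:
    if row["employee_id"] == 1:
        row["address"] = "9 New Road"
        break

# Employee 1 now has two conflicting addresses.
conflicting = addresses_for(employee_skills, 1)
```

If the address were stored once in a separate employee table, the same update would touch a single row and this inconsistency could not occur.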

Minimize redesign when extending the database structure

When a fully normalized database structure is extended to allow it to accommodate new types of data, the pre-existing aspects of the database structure can remain largely or entirely unchanged. As a result, applications interacting with the database are minimally affected.
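The point can be sketched with Python's built-in `sqlite3` module. The table names `customer` and `customer_phone`, the helper `customer_names`, and the data are assumptions made for this example. A new kind of data (phone numbers) is added as a new table, and a query written against the original structure keeps working unchanged.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Original structure (hypothetical).
conn.execute(
    "CREATE TABLE customer (cust_id INTEGER PRIMARY KEY, name TEXT NOT NULL)"
)
conn.executemany("INSERT INTO customer VALUES (?, ?)", [(1, "Ann"), (2, "Bob")])

def customer_names(connection):
    """An 'application' query written against the original structure."""
    cursor = connection.execute("SELECT name FROM customer ORDER BY cust_id")
    return [name for (name,) in cursor]

before = customer_names(conn)

# Extension: a new type of data goes into a new table;
# the existing customer table is not altered.
conn.execute(
    """
    CREATE TABLE customer_phone (
        cust_id INTEGER NOT NULL REFERENCES customer(cust_id),
        phone   TEXT NOT NULL,
        PRIMARY KEY (cust_id, phone)
    )
    """
)
conn.execute("INSERT INTO customer_phone VALUES (1, '555-0100')")

after = customer_names(conn)
```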

Normalized tables, and the relationship between one normalized table and another, mirror real-world concepts and their interrelationships.

Example

Querying and manipulating the data within a data structure that is not normalized, such as the following non-1NF representation of customers' credit card transactions, involves more complexity than is really necessary:

To each customer corresponds a repeating group of transactions. The automated evaluation of any query relating to customers' transactions therefore would broadly involve two stages:

- Unpacking one or more customers' groups of transactions allowing the individual transactions in a group to be examined, and

- Deriving a query result based on the results of the first stage

For example, in order to find out the monetary sum of all transactions that occurred in October 2003 for all customers, the system would have to know that it must first unpack the Transactions group of each customer, then sum the Amounts of all transactions thus obtained where the Date of the transaction falls in October 2003.
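The two stages described above can be sketched in Python. The nested structure and every value in it are hypothetical stand-ins for a non-1NF representation; the field names `transactions`, `tr_id`, `date`, and `amount` are assumptions made for this example.

```python
from datetime import date

# Hypothetical non-1NF data: each customer carries a repeating group
# of transactions.
customers = [
    {"customer": "A", "cust_id": 1, "transactions": [
        {"tr_id": 101, "date": date(2003, 10, 14), "amount": -87},
        {"tr_id": 102, "date": date(2003, 10, 15), "amount": -50},
    ]},
    {"customer": "B", "cust_id": 2, "transactions": [
        {"tr_id": 103, "date": date(2003, 10, 14), "amount": -21},
    ]},
    {"customer": "C", "cust_id": 3, "transactions": [
        {"tr_id": 104, "date": date(2003, 10, 15), "amount": -18},
        {"tr_id": 105, "date": date(2003, 11, 20), "amount": -70},
    ]},
]

# Stage 1: unpack every customer's group of transactions.
unpacked = [tr for cust in customers for tr in cust["transactions"]]

# Stage 2: derive the result from the unpacked transactions.
october_total = sum(
    tr["amount"]
    for tr in unpacked
    if (tr["date"].year, tr["date"].month) == (2003, 10)
)
```

The query logic has to know about the nesting before it can apply the date filter, which is the extra complexity the text refers to.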

One of Codd's important insights was that this structural complexity could always be removed completely, leading to much greater power and flexibility in the way queries could be formulated (by users and applications) and evaluated (by the DBMS). The normalized equivalent of the structure above would look like this:

In the modified structure, the keys are {Customer} and {Cust. ID} in the first table, {Cust. ID, Tr ID} in the second table.

Now each row represents an individual credit card transaction, and the DBMS can obtain the answer of interest simply by finding all rows with a Date falling in October 2003 and summing their Amounts. The data structure places all of the values on an equal footing, exposing each to the DBMS directly, so each can potentially participate directly in queries. In the previous structure, by contrast, some values were embedded in lower-level structures that had to be handled specially. Accordingly, the normalized design lends itself to general-purpose query processing, whereas the unnormalized design does not. The normalized version also allows the user to change the customer name in one place, and it guards against the errors that arise if the customer name is misspelled on some records.
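A minimal sketch of the normalized design, using Python's built-in `sqlite3` module, might look as follows. The table and column names (`customer`, `card_transaction`, `tr_date`) and all data values are assumptions made for this example. The keys follow the ones named above: the customer name and the customer ID in the first table, and the pair (customer ID, transaction ID) in the second.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE customer (
        cust_id INTEGER PRIMARY KEY,
        name    TEXT NOT NULL UNIQUE
    );
    CREATE TABLE card_transaction (
        cust_id INTEGER NOT NULL REFERENCES customer(cust_id),
        tr_id   INTEGER NOT NULL,
        tr_date TEXT    NOT NULL,   -- ISO 8601 date, e.g. '2003-10-14'
        amount  INTEGER NOT NULL,
        PRIMARY KEY (cust_id, tr_id)
    );
    """
)
conn.executemany(
    "INSERT INTO customer VALUES (?, ?)", [(1, "A"), (2, "B"), (3, "C")]
)
conn.executemany(
    "INSERT INTO card_transaction VALUES (?, ?, ?, ?)",
    [
        (1, 101, "2003-10-14", -87),
        (1, 102, "2003-10-15", -50),
        (2, 103, "2003-10-14", -21),
        (3, 104, "2003-10-15", -18),
        (3, 105, "2003-11-20", -70),
    ],
)

# One flat query: filter rows by date, then sum the amounts.
(october_total,) = conn.execute(
    """
    SELECT SUM(amount) FROM card_transaction
    WHERE tr_date >= '2003-10-01' AND tr_date < '2003-11-01'
    """
).fetchone()

# The customer name is stored once, so a rename touches exactly one row.
rows_changed = conn.execute(
    "UPDATE customer SET name = 'A. Renamed' WHERE cust_id = 1"
).rowcount
```

Storing dates as ISO 8601 text is a choice made for this sketch because such strings compare correctly in date order; a production schema might use a dedicated date type where the DBMS provides one.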