
In linear algebra, a basis for a vector space of dimension n is a set of n vectors (α1, …, αn), called basis vectors, with the property that every vector in the space can be expressed as a unique linear combination of the basis vectors. The matrix representations of operators are also determined by the chosen basis. Since it is often desirable to work with more than one basis for a vector space, it is of fundamental importance in linear algebra to be able to easily transform coordinate-wise representations of vectors and operators taken with respect to one basis to their equivalent representations with respect to another basis. Such a transformation is called a change of basis.

Contents

- Transformation matrix

- Uniqueness linear transformation

- Theorem

- Matrix of a set of vectors

- Change of coordinates of a vector

- Two dimensions

- Three dimensions

- General case

- The matrix of a linear transformation

- Change of basis

- The matrix of an endomorphism

- The matrix of a bilinear form

- Important instances

- References

Although the terminology of vector spaces is used below and the symbol R can be taken to mean the field of real numbers, the results discussed hold whenever R is a commutative ring and vector space is everywhere replaced with free R-module.

Transformation matrix

The standard basis for

If

In this case we have

Uniqueness linear transformation

We will also make use of the following simple observation.

Theorem

Let

This unique

Of course, if

Coordinate isomorphism

Now let

The unique linear map

Matrix of a set of vectors

A set of vectors can be represented by a matrix in which each column consists of the components of the corresponding vector of the set. Since a basis is a set of vectors, a basis can be given by a matrix of this kind. Later it will be shown that the change of basis of any object of the space is related to this matrix. For example, the coordinates of vectors transform by its inverse (and vectors are therefore called contravariant objects).

Change of coordinates of a vector

First we examine the question of how the coordinates of a vector

Two dimensions

This means that given a matrix

Any finite set of vectors can be represented by a matrix whose columns are the coordinates of the given vectors. As an example in dimension 2, consider the pair of vectors obtained by rotating the standard basis counterclockwise by 45°. The matrix whose columns are the coordinates of these vectors is

$$\begin{pmatrix} 1/\sqrt{2} & -1/\sqrt{2} \\ 1/\sqrt{2} & 1/\sqrt{2} \end{pmatrix}.$$

If we want to change any vector of the space to this new basis, we only need to left-multiply its components by the inverse of this matrix.
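The 45° example can be checked numerically. The following is a minimal sketch in plain Python; the helper names `inverse_2x2` and `mat_vec` and the sample vector are illustrative assumptions, not part of the article.

```python
import math

def inverse_2x2(m):
    """Inverse of a 2x2 matrix given as a list of rows."""
    (a, b), (c, d) = m
    det = a * d - b * c
    if det == 0:
        raise ValueError("singular matrix: its columns do not form a basis")
    return [[d / det, -b / det], [-c / det, a / det]]

def mat_vec(m, v):
    """Matrix-vector product, with m given as a list of rows."""
    return [sum(row[k] * v[k] for k in range(len(v))) for row in m]

s = 1 / math.sqrt(2)
# Columns are the rotated basis vectors (s, s) and (-s, s).
basis = [[s, -s],
         [s,  s]]

v_standard = [1.0, 0.0]  # hypothetical sample vector: e1 in standard coordinates

# Coordinates in the rotated basis: left-multiply by the inverse of the basis matrix.
v_new = mat_vec(inverse_2x2(basis), v_standard)

# Multiplying by the basis matrix itself goes back to standard coordinates.
v_back = mat_vec(basis, v_new)
```

Here `v_new` is approximately (0.7071, −0.7071): the first standard basis vector equals 1/√2 times the first rotated vector minus 1/√2 times the second.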

Three dimensions

For example, let R be a new basis given by its Euler angles. The matrix of the basis will have as columns the components of each vector. Therefore, this matrix will be (See Euler angles article):

Again, any vector of the space can be changed to this new basis by left-multiplying its components by the inverse of this matrix.

General case

Suppose

If

The matrix of a linear transformation

Now suppose T : V → W is a linear transformation, {α1, …, αn} is a basis for V and {β1, …, βm} is a basis for W. Let φ and ψ be the coordinate isomorphisms for V and W, respectively, relative to the given bases. Then the map T1 = ψ−1 ∘ T ∘ φ is a linear transformation from Rn to Rm, and therefore has a matrix t; its jth column is ψ−1(T(αj)) for j = 1, …, n. This matrix is called the matrix of T with respect to the ordered bases {α1, …, αn} and {β1, …, βm}. If η = T(ξ) and y and x are the coordinate tuples of η and ξ, then y = ψ−1(T(φ(x))) = tx. Conversely, if ξ is in V and x = φ−1(ξ) is the coordinate tuple of ξ with respect to {α1, …, αn}, and we set y = tx and η = ψ(y), then η = ψ(T1(x)) = T(ξ). That is, if ξ is in V and η is in W and x and y are their coordinate tuples, then y = tx if and only if η = T(ξ).
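As a concrete illustration, take V = W = R2, so that each coordinate isomorphism is multiplication by the matrix whose columns are the basis vectors. The sketch below uses exact rational arithmetic; the particular map `M`, the bases `A` and `B`, and the helper names are hypothetical choices made for the example.

```python
from fractions import Fraction

def mat_mul(a, b):
    """Product of two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def mat_vec(m, v):
    """Matrix-vector product."""
    return [sum(row[k] * v[k] for k in range(len(v))) for row in m]

def inverse_2x2(m):
    """Exact inverse of a 2x2 matrix with rational entries."""
    (a, b), (c, d) = m
    det = a * d - b * c
    if det == 0:
        raise ValueError("singular matrix: its columns do not form a basis")
    return [[Fraction(d, det), Fraction(-b, det)],
            [Fraction(-c, det), Fraction(a, det)]]

M = [[1, 2], [3, 4]]   # T in standard coordinates (assumed example)
A = [[1, 1], [0, 1]]   # columns: alpha_1 = (1, 0), alpha_2 = (1, 1)
B = [[2, 0], [0, 1]]   # columns: beta_1 = (2, 0), beta_2 = (0, 1)

# Matrix of T with respect to the two bases: t = B^{-1} M A.
# Its j-th column holds the beta-coordinates of T(alpha_j).
t = mat_mul(inverse_2x2(B), mat_mul(M, A))

x = [2, -1]                    # coordinates of xi with respect to alpha
xi = mat_vec(A, x)             # xi in standard coordinates
eta = mat_vec(M, xi)           # eta = T(xi)
y = mat_vec(t, x)              # coordinates of eta with respect to beta
```

Converting `y` back with `mat_vec(B, y)` reproduces `eta`, which is the statement that y = tx exactly when η = T(ξ).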

Theorem. Suppose U, V and W are vector spaces of finite dimension and an ordered basis is chosen for each. If T : U → V and S : V → W are linear transformations with matrices t and s respectively, then the matrix of the linear transformation S ∘ T : U → W (with respect to the given bases) is st.
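The theorem can be spot-checked on small matrices. In this sketch s denotes the matrix of S and t the matrix of T (a labeling assumed for the example), with U, V and W of dimensions 3, 2 and 3; the entries are arbitrary.

```python
def mat_mul(a, b):
    """Product of two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def mat_vec(m, v):
    """Matrix-vector product."""
    return [sum(row[k] * v[k] for k in range(len(v))) for row in m]

t = [[1, 0, 2],
     [0, 1, -1]]          # matrix of T : U -> V (2 x 3)
s = [[1, 1],
     [0, 2],
     [3, 0]]              # matrix of S : V -> W (3 x 2)

st = mat_mul(s, t)        # candidate matrix of S o T (3 x 3)

x = [1, 2, 3]             # coordinates of a sample vector in U
via_composition = mat_vec(s, mat_vec(t, x))   # apply T, then S
via_product = mat_vec(st, x)                  # apply the product matrix once
```

Both routes give the same coordinate tuple, as the theorem predicts.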

Change of basis

Now we ask what happens to the matrix of T : V → W when we change bases in V and W. Let {α1, …, αn} and {β1, …, βm} be ordered bases for V and W respectively, and suppose we are given a second pair of bases {α′1, …, α′n} and {β′1, …, β′m}. Let φ1 and φ2 be the coordinate isomorphisms taking the usual basis in Rn to the first and second bases for V, and let ψ1 and ψ2 be the isomorphisms taking the usual basis in Rm to the first and second bases for W.

Let T1 = ψ1−1 ∘ T ∘ φ1, and T2 = ψ2−1 ∘ T ∘ φ2 (both maps taking Rn to Rm), and let t1 and t2 be their respective matrices. Let p and q be the matrices of the change-of-coordinates automorphisms φ2−1 ∘ φ1 on Rn and ψ2−1 ∘ ψ1 on Rm.

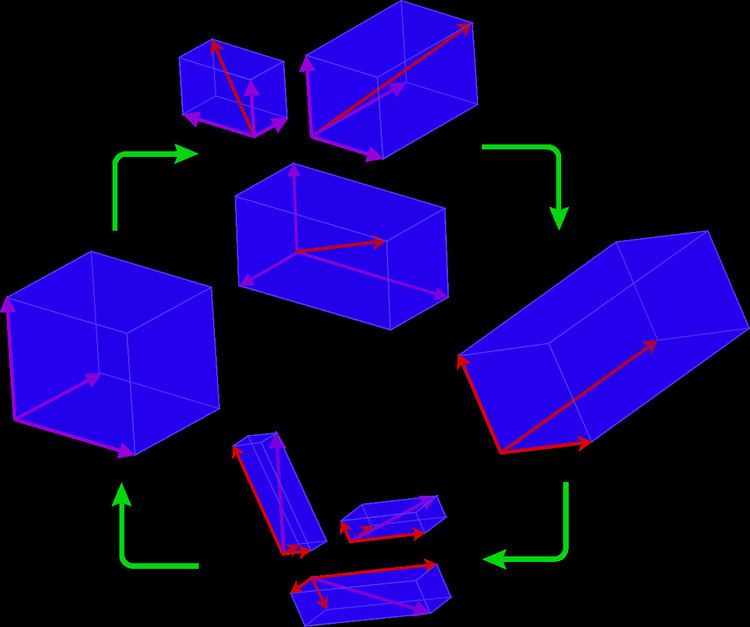

The relationships of these various maps to one another are illustrated in the following commutative diagram.

Since we have T2 = ψ2−1 ∘ T ∘ φ2 = (ψ2−1 ∘ ψ1) ∘ T1 ∘ (φ1−1 ∘ φ2), and since composition of linear maps corresponds to matrix multiplication, it follows that

t2 = q t1 p−1.

Because the change-of-basis formula involves a change-of-basis matrix once and an inverse of one once, such objects are said to be 1-covariant and 1-contravariant.
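The relation between t1, t2, p and q can be verified on a small example. The sketch below assumes V = W = R2, so that φ1, φ2, ψ1, ψ2 are multiplication by the basis matrices `A1`, `A2`, `B1`, `B2`; these matrices, the map `M`, and the helper names are hypothetical.

```python
from fractions import Fraction

def mat_mul(a, b):
    """Product of two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def inverse_2x2(m):
    """Exact inverse of a 2x2 matrix with rational entries."""
    (a, b), (c, d) = m
    det = a * d - b * c
    if det == 0:
        raise ValueError("singular matrix: its columns do not form a basis")
    return [[Fraction(d, det), Fraction(-b, det)],
            [Fraction(-c, det), Fraction(a, det)]]

M = [[1, 2], [3, 4]]                          # T in standard coordinates
A1, A2 = [[1, 1], [0, 1]], [[1, 0], [2, 1]]   # first and second basis of V (columns)
B1, B2 = [[1, 0], [1, 1]], [[2, 1], [1, 1]]   # first and second basis of W (columns)

# Matrices of T with respect to each pair of bases.
t1 = mat_mul(inverse_2x2(B1), mat_mul(M, A1))
t2 = mat_mul(inverse_2x2(B2), mat_mul(M, A2))

# Change-of-coordinates matrices: p for phi2^{-1} o phi1, q for psi2^{-1} o psi1.
p = mat_mul(inverse_2x2(A2), A1)
q = mat_mul(inverse_2x2(B2), B1)

# Right-hand side of the change-of-basis formula: q t1 p^{-1}.
rhs = mat_mul(q, mat_mul(t1, inverse_2x2(p)))
```

With exact fractions, `rhs` equals `t2` entry for entry, so the check does not depend on floating-point tolerance.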

The matrix of an endomorphism

An important case of the matrix of a linear transformation is that of an endomorphism, that is, a linear map from a vector space V to itself (the case W = V). We can naturally take {β1, …, βn} = {α1, …, αn} and {β′1, …, β′n} = {α′1, …, α′n}. The matrix of the linear map T is then necessarily square.

Change of basis

We apply the same change of basis, so that q = p and the change of basis formula becomes

t2 = p t1 p−1.

In this situation the invertible matrix p is called a change-of-basis matrix for the vector space V, and the equation above says that the matrices t1 and t2 are similar.
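Similar matrices share basis-independent quantities such as the trace and the determinant. The following sketch checks this for one hypothetical choice of t1 and p; the helper names are assumptions made for the example.

```python
from fractions import Fraction

def mat_mul(a, b):
    """Product of two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def inverse_2x2(m):
    """Exact inverse of a 2x2 matrix with rational entries."""
    (a, b), (c, d) = m
    det = a * d - b * c
    if det == 0:
        raise ValueError("singular matrix is not a change-of-basis matrix")
    return [[Fraction(d, det), Fraction(-b, det)],
            [Fraction(-c, det), Fraction(a, det)]]

def trace(m):
    return m[0][0] + m[1][1]

def det_2x2(m):
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]

t1 = [[2, 1], [0, 3]]   # matrix of an endomorphism in the first basis (assumed)
p = [[1, 1], [0, 1]]    # change-of-basis matrix (assumed)

# Matrix of the same endomorphism in the second basis: t2 = p t1 p^{-1}.
t2 = mat_mul(p, mat_mul(t1, inverse_2x2(p)))
```

Here `t2` differs from `t1` entry by entry, yet both have trace 5 and determinant 6.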

The matrix of a bilinear form

A bilinear form on a vector space V over a field R is a mapping V × V → R which is linear in both arguments. That is, B : V × V → R is bilinear if the maps v ↦ B(v, w) and v ↦ B(w, v) are linear for each w in V. This definition applies equally well to modules over a commutative ring, with linear maps being module homomorphisms.

The Gram matrix G attached to a basis

If

The matrix will be symmetric if the bilinear form B is a symmetric bilinear form.
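A small sketch may make the Gram matrix concrete. It assumes the usual definition, in which the (i, j) entry of G is the value of B on the ith and jth basis vectors, so that B(v, w) is obtained from the coordinate tuples x and y of v and w as xᵀGy. The bilinear form `B`, the basis `b1`, `b2`, and the sample coordinates are hypothetical.

```python
def B(v, w):
    """A sample symmetric bilinear form on R^2 (assumed for illustration)."""
    return v[0] * w[0] + 2 * v[1] * w[1] + v[0] * w[1] + v[1] * w[0]

b1, b2 = (1, 0), (1, 1)          # hypothetical basis of R^2
basis = [b1, b2]

# Gram matrix: entry (i, j) is B(b_i, b_j).
G = [[B(bi, bj) for bj in basis] for bi in basis]

def from_coords(c):
    """Vector with coordinate tuple c relative to the basis b1, b2."""
    return tuple(c[0] * b1[k] + c[1] * b2[k] for k in range(2))

x, y = [1, 2], [-1, 1]           # coordinate tuples of v and w
v, w = from_coords(x), from_coords(y)

# Evaluate the form through the Gram matrix: x^T G y.
via_gram = sum(x[i] * G[i][j] * y[j] for i in range(2) for j in range(2))
direct = B(v, w)
```

Because this `B` is symmetric, `G` is symmetric as well, and `via_gram` agrees with the direct evaluation `B(v, w)`.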

Change of basis

If P is the invertible matrix representing a change of basis from

Important instances

In abstract vector space theory the change of basis concept is innocuous; it seems to add little to science. Yet there are cases in associative algebras where a change of basis is sufficient to turn a caterpillar into a butterfly, figuratively speaking: