Type: tree

Invented: 1962

Invented by: Georgy Adelson-Velsky and Evgenii Landis

Time complexity (average and worst case): search, insertion, and deletion O(log n); space O(n)

In computer science, an AVL tree is a self-balancing binary search tree. It was the first such data structure to be invented. In an AVL tree, the heights of the two child subtrees of any node differ by at most one; if at any time they differ by more than one, rebalancing is done to restore this property. Lookup, insertion, and deletion all take O(log n) time in both the average and worst cases, where n is the number of nodes in the tree prior to the operation. Insertions and deletions may require the tree to be rebalanced by one or more tree rotations.

The AVL tree is named after its two Soviet inventors, Georgy Adelson-Velsky and Evgenii Landis, who published it in their 1962 paper "An algorithm for the organization of information".

AVL trees are often compared with red–black trees because both support the same set of operations and take O(log n) time for the basic operations. For lookup-intensive applications, AVL trees are faster than red–black trees because they are more strictly balanced. Similar to red–black trees, AVL trees are height-balanced. Both are, in general, neither weight-balanced nor μ-balanced for any μ≤1⁄2; that is, sibling nodes can have hugely differing numbers of descendants.

Balance factor

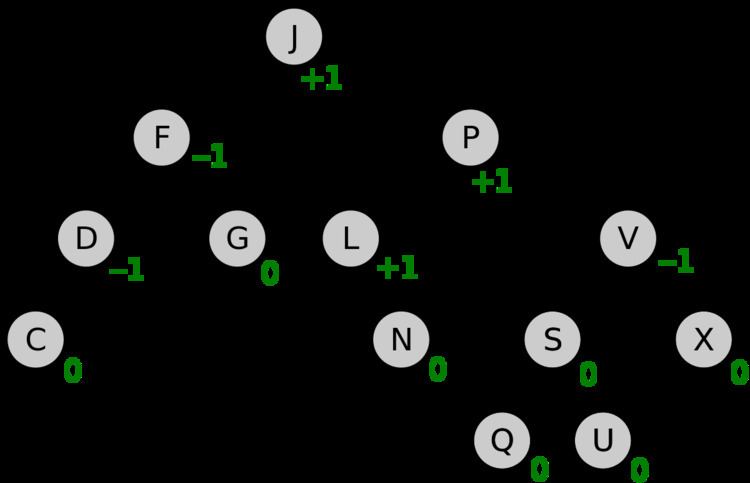

In a binary tree the balance factor of a node N is defined to be the height difference

BalanceFactor(N) := –Height(LeftSubtree(N)) + Height(RightSubtree(N))

of its two child subtrees. A binary tree is defined to be an AVL tree if the invariant

BalanceFactor(N) ∈ {–1, 0, +1}

holds for every node N in the tree.

A node N with BalanceFactor(N) < 0 is called "left-heavy", one with BalanceFactor(N) > 0 is called "right-heavy", and one with BalanceFactor(N) = 0 is sometimes simply called "balanced".
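The definition can be checked directly on a small tree. The following is a minimal sketch, assuming a hypothetical `Node` class and a height convention in which the empty subtree has height 0 and a single node has height 1; it recomputes heights from scratch and is meant only to illustrate the invariant, not to be efficient.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    """Illustrative binary tree node (not from the original text)."""
    key: int
    left: Optional["Node"] = None
    right: Optional["Node"] = None


def height(node):
    """Number of levels in the subtree; the empty subtree has height 0."""
    if node is None:
        return 0
    return 1 + max(height(node.left), height(node.right))


def balance_factor(node):
    """Height of the right subtree minus height of the left subtree."""
    return height(node.right) - height(node.left)


def is_avl(node):
    """True if every node of the subtree has a balance factor in {-1, 0, +1}."""
    if node is None:
        return True
    return (balance_factor(node) in (-1, 0, 1)
            and is_avl(node.left)
            and is_avl(node.right))
```

A three-node chain that only descends to the right has a root with balance factor +2, so `is_avl` rejects it, whereas the same three keys arranged with the middle key at the root give balance factor 0 everywhere.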

In the sequel, because there is a one-to-one correspondence between nodes and the subtrees rooted by them, we sometimes leave it to the context whether the name of an object stands for the node or the subtree.

Properties

Balance factors can be kept up-to-date by knowing the previous balance factors and the change in height – it is not necessary to know the absolute height. For holding the AVL balance information, two bits per node are sufficient.

The height h of an AVL tree with n nodes lies in the interval:

log₂(n+1) ≤ h < c log₂(n+2) + b

with the golden ratio φ := (1+√5)⁄2 ≈ 1.618, c := 1⁄log₂ φ ≈ 1.44, and b := (c⁄2) log₂ 5 – 2 ≈ –0.328. This is because an AVL tree of height h contains at least Fₕ₊₂ – 1 nodes, where {Fₕ} is the Fibonacci sequence with the seed values F₁ = 1, F₂ = 1.
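The bound can be sanity-checked numerically. The sketch below assumes that height counts levels (a single node has height 1), which is the convention under which the stated interval holds. The function names are illustrative. `min_nodes` uses the recurrence that a sparsest AVL tree of height h consists of a root plus sparsest subtrees of heights h–1 and h–2.

```python
import math

PHI = (1 + math.sqrt(5)) / 2          # golden ratio
C = 1 / math.log2(PHI)                # about 1.44
B = C / 2 * math.log2(5) - 2          # about -0.328


def fib(k):
    """Fibonacci number F_k with F_1 = F_2 = 1."""
    a, b = 0, 1
    for _ in range(k):
        a, b = b, a + b
    return a


def min_nodes(h):
    """Fewest nodes an AVL tree of height h can have."""
    a, b = 0, 1                       # values for heights 0 and 1
    for _ in range(h):
        a, b = b, a + b + 1           # root + subtrees of heights h-1 and h-2
    return a


def height_upper_bound(n):
    """Strict upper bound c*log2(n+2) + b on the height of an n-node AVL tree."""
    return C * math.log2(n + 2) + B
```

For every height h, the sparsest tree has exactly Fₕ₊₂ – 1 nodes, and its height stays strictly below `height_upper_bound` of that node count.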

Operations

Read-only operations of an AVL tree involve carrying out the same actions as would be carried out on an unbalanced binary search tree, but modifications have to observe and restore the height balance of the subtrees.

Searching

Searching for a specific key in an AVL tree is done in the same way as in an ordinary unbalanced binary search tree. For the search to work, it has to employ a comparison function which establishes a total order (or at least a total preorder) on the set of keys. The number of comparisons required for a successful search is limited by the height h, and for an unsuccessful search it is very close to h, so both are in O(log n).
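A minimal sketch of the lookup, assuming a hypothetical `Node` class with integer keys compared by `<`. It also counts the nodes visited, to make the bound by the height h visible.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    """Illustrative search tree node (not from the original text)."""
    key: int
    left: Optional["Node"] = None
    right: Optional["Node"] = None


def search(node, key):
    """Return (matching node or None, number of nodes visited)."""
    visited = 0
    while node is not None:
        visited += 1
        if key == node.key:
            return node, visited
        # The search-tree order tells us which subtree can contain the key.
        node = node.left if key < node.key else node.right
    return None, visited
```

No balance information is read or written here, which is why the search is identical to that of an unbalanced binary search tree.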

Traversal

Once a node has been found in an AVL tree, the next or previous node can be accessed in amortized constant time. Some instances of exploring these "nearby" nodes require traversing up to h ∝ log(n) links (particularly when navigating from the rightmost leaf of the root’s left subtree to the root or from the root to the leftmost leaf of the root’s right subtree; in the AVL tree of figure 1, moving from node P to the next but one node Q takes 3 steps). However, exploring all n nodes of the tree in this manner would visit each link exactly twice: one downward visit to enter the subtree rooted by that node, another visit upward to leave that node’s subtree after having explored it. And since there are n−1 links in any tree, the amortized cost is found to be 2×(n−1)/n, or approximately 2.
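The link-counting argument can be reproduced with a short sketch. It assumes nodes that carry a parent pointer, and it uses a hypothetical `build` helper that creates a height-balanced tree from sorted keys. The count includes the initial descent from the root to the first node and the final climb back to the root.

```python
class Node:
    """Illustrative node with a parent pointer (an assumption of this sketch)."""
    def __init__(self, key, parent=None):
        self.key = key
        self.parent = parent
        self.left = None
        self.right = None


def build(keys, parent=None):
    """Build a height-balanced search tree from a sorted list of keys."""
    if not keys:
        return None
    mid = len(keys) // 2
    node = Node(keys[mid], parent)
    node.left = build(keys[:mid], node)
    node.right = build(keys[mid + 1:], node)
    return node


def leftmost(node):
    """Descend to the smallest node of a subtree; returns (node, links used)."""
    links = 0
    while node.left is not None:
        node = node.left
        links += 1
    return node, links


def successor(node):
    """Return (in-order successor or None, links traversed to reach it)."""
    if node.right is not None:
        nxt, links = leftmost(node.right)
        return nxt, links + 1
    links = 0
    # Climb while we are leaving a right subtree.
    while node.parent is not None and node is node.parent.right:
        node = node.parent
        links += 1
    if node.parent is not None:
        links += 1                    # final step up from a left child
    return node.parent, links


def walk(root):
    """Visit all keys in order; returns (keys, total links traversed)."""
    keys, total = [], 0
    node, links = leftmost(root)
    total += links
    while node is not None:
        keys.append(node.key)
        node, links = successor(node)
        total += links
    return keys, total
```

A single call to `successor` may traverse up to about h links, but the total over a full walk of n nodes is 2×(n−1), which is the amortized constant cost described above.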

Insert

Inserting an element into an AVL tree initially follows the same process as inserting into a binary search tree. After a node has been inserted, each of its ancestors has to be checked for consistency with the invariants of AVL trees: this is called "retracing". It is achieved by considering the balance factor of each node.

Since with a single insertion the height of an AVL subtree cannot increase by more than one, the temporary balance factor of a node after an insertion will be in the range [–2,+2]. For each node checked, if the temporary balance factor remains in the range from –1 to +1 then only an update of the balance factor and no rotation is necessary. However, if the temporary balance factor becomes less than –1 or greater than +1, the subtree rooted at this node is AVL unbalanced, and a rotation is needed. The various cases of rotations are described in section Rebalancing.

By inserting the new node Z as a child of node X the height of that subtree Z increases from 0 to 1.

The height of the subtree rooted by Z has increased by 1. It is already in AVL shape.

In order to update the balance factors of all nodes, first observe that all nodes requiring correction lie from child to parent along the path of the inserted leaf. If the above procedure is applied to nodes along this path, starting from the leaf, then every node in the tree will again have a balance factor of −1, 0, or 1.

The retracing can stop if the balance factor becomes 0 implying that the height of that subtree remains unchanged.

If the balance factor becomes ±1 then the height of the subtree increases by one and the retracing needs to continue.

If the balance factor temporarily becomes ±2, this has to be repaired by an appropriate rotation after which the subtree has the same height as before (and its root the balance factor 0).

The time required is O(log n) for lookup, plus a maximum of O(log n) retracing levels (O(1) on average) on the way back to the root, so the operation can be completed in O(log n) time.
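The retracing rules can be sketched as follows. This is one possible arrangement and not necessarily the one used in the original paper: instead of parent pointers and an explicit loop, it uses recursion, so that the return path of the recursion plays the role of retracing. All names (`Node`, `bf`, `rebalance`, `check`) are illustrative.

```python
class Node:
    def __init__(self, key):
        self.key = key
        self.left = None
        self.right = None
        self.bf = 0          # balance factor: height(right) - height(left)


def rotate_left(x):
    z = x.right
    x.right, z.left = z.left, x
    return z


def rotate_right(x):
    z = x.left
    x.left, z.right = z.right, x
    return z


def rebalance(x):
    """Repair a node whose temporary balance factor is +2 or -2 after an insertion."""
    if x.bf == 2:
        z = x.right
        if z.bf > 0:                     # Right Right: simple rotation
            x.bf = z.bf = 0
            return rotate_left(x)
        y = z.left                       # Right Left: double rotation
        x.right = rotate_right(z)
        x.bf = -1 if y.bf > 0 else 0
        z.bf = 1 if y.bf < 0 else 0
        y.bf = 0
        return rotate_left(x)
    z = x.left
    if z.bf < 0:                         # Left Left: simple rotation
        x.bf = z.bf = 0
        return rotate_right(x)
    y = z.right                          # Left Right: double rotation
    x.left = rotate_left(z)
    x.bf = 1 if y.bf < 0 else 0
    z.bf = -1 if y.bf > 0 else 0
    y.bf = 0
    return rotate_right(x)


def insert(node, key):
    """Insert key; returns (new subtree root, whether the subtree grew by one)."""
    if node is None:
        return Node(key), True
    if key == node.key:
        return node, False               # duplicates are ignored in this sketch
    if key < node.key:
        node.left, grew = insert(node.left, key)
        step = -1
    else:
        node.right, grew = insert(node.right, key)
        step = 1
    if not grew:
        return node, False               # nothing changed above this point
    node.bf += step
    if node.bf == 0:
        return node, False               # height unchanged: retracing stops
    if node.bf in (-1, 1):
        return node, True                # height grew: retracing continues
    return rebalance(node), False        # rotation restores the old height


def check(node):
    """Return the subtree height, asserting the AVL invariant on the way."""
    if node is None:
        return 0
    hl, hr = check(node.left), check(node.right)
    assert node.bf == hr - hl and abs(node.bf) <= 1
    return 1 + max(hl, hr)


def in_order(node):
    return [] if node is None else in_order(node.left) + [node.key] + in_order(node.right)
```

A caller keeps only the first component of the result, for example `root, _ = insert(root, key)`. Because a rotation after an insertion restores the previous height, at most one simple or double rotation is performed per insertion in this sketch.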

Delete

When deleting an element from an AVL tree, the element is first swapped with the minimum element of its right subtree or the maximum element of its left subtree. The element is then deleted from its new position (the process may need to be repeated). If the element is now a leaf node, it is removed completely. Rotations are then performed as needed to maintain the AVL property.

Steps to consider when deleting a node D in an AVL tree are the following:

1. If D is a leaf or has only one child F, skip to step 6 with N:=D, G as the parent of D and dir the child direction of D at G.

2. Otherwise, determine node E by traversing to the leftmost (smallest) node in D’s right subtree which is the in-order successor of D and does not have a left child (see figure 3).

3. Remember node G, the parent of E, and dir, the child direction of E at G (which is left if G≠D else right).

4. Copy E’s key and possibly data to D, thus inserting a duplicate. The original E will be removed in step 7.

5. Let N:=E and F be the right child of E (it may be null).

6. If node N was the root (its parent G is null), update root.

7. Otherwise, replace N at the child position dir of G by F, which is null or a leaf. In the latter case set F’s parent to G.

(Now the last trace of D has been taken out of the tree, so D may be removed from memory as well.)

The height of the dir subtree of G has decreased by 1, either from 1 to 0 or from 2 to 1. So, let X:=G and N be the dir child of X, and retrace the path back up the tree to the root, adjusting the balance factors (including possible rotations) as needed.

Since with a single deletion the height of an AVL subtree cannot decrease by more than one, the temporary balance factor of a node will be in the range from −2 to +2.

If the balance factor becomes ±2 then the subtree is unbalanced and needs to be rotated. The various cases of rotations are described in section Rebalancing.

By removing node N, the height of the subtree N of X has decreased by 1, either from 1 to 0 or from 2 to 1.

The height of the subtree rooted by N has decreased by 1. It is already in AVL shape.

The retracing can stop if the balance factor becomes ±1 meaning that the height of that subtree remains unchanged.

If the balance factor becomes 0 then the height of the subtree decreases by one and the retracing needs to continue.

If the balance factor temporarily becomes ±2, this has to be repaired by an appropriate rotation. It depends on the balance factor of the sibling Z (the higher child tree) whether the height of the subtree decreases by one or does not change (the latter, if Z has the balance factor 0).

The time required is O(log n) for lookup, plus a maximum of O(log n) retracing levels (O(1) on average) on the way back to the root, so the operation can be completed in O(log n) time.
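The deletion rules can be sketched in the same recursive style. To keep the sketch short, the two children are stored in a list (`child[0]` is left, `child[1]` is right) so that one `rotate` and one `rebalance` function cover both mirror cases. This layout, the `build` helper and all names are assumptions of the sketch. A node with two children receives the key of its in-order successor, which is then removed from the right subtree, as in the steps above.

```python
class Node:
    def __init__(self, key):
        self.key = key
        self.child = [None, None]    # child[0] = left, child[1] = right
        self.bf = 0                  # height(right) - height(left)


def build(keys):
    """Build an AVL tree from sorted keys; returns (root, height)."""
    if not keys:
        return None, 0
    mid = len(keys) // 2
    node = Node(keys[mid])
    node.child[0], hl = build(keys[:mid])
    node.child[1], hr = build(keys[mid + 1:])
    node.bf = hr - hl
    return node, 1 + max(hl, hr)


def rotate(x, d):
    """Rotate x down towards side d; its child on the other side moves up."""
    z = x.child[1 - d]
    x.child[1 - d], z.child[d] = z.child[d], x
    return z


def rebalance(x):
    """Repair x.bf == +2 or -2; returns (new root, whether the height decreased)."""
    h = 1 if x.bf > 0 else 0         # side of the higher child Z
    s = 1 if h == 1 else -1          # sign of that side
    z = x.child[h]
    if z.bf * s >= 0:                # simple rotation
        if z.bf == 0:                # Z balanced: height does not change
            x.bf, z.bf = s, -s
            return rotate(x, 1 - h), False
        x.bf = z.bf = 0
        return rotate(x, 1 - h), True
    y = z.child[1 - h]               # inner child is higher: double rotation
    x.child[h] = rotate(z, h)
    x.bf = -s if y.bf == s else 0
    z.bf = s if y.bf == -s else 0
    y.bf = 0
    return rotate(x, 1 - h), True


def delete(node, key):
    """Delete key; returns (new subtree root, whether the subtree shrank by one)."""
    if node is None:
        return None, False           # key not present
    if key == node.key:
        if node.child[0] is None or node.child[1] is None:
            return node.child[0] or node.child[1], True
        succ = node.child[1]         # in-order successor: leftmost of right subtree
        while succ.child[0] is not None:
            succ = succ.child[0]
        node.key = succ.key          # copy its key, then remove the original
        key, d = succ.key, 1
    else:
        d = 0 if key < node.key else 1
    node.child[d], shrank = delete(node.child[d], key)
    if not shrank:
        return node, False
    node.bf += 1 if d == 0 else -1
    if node.bf == 0:
        return node, True            # height decreased: retracing continues
    if node.bf in (-1, 1):
        return node, False           # height unchanged: retracing stops
    return rebalance(node)


def check(node):
    """Return the subtree height, asserting the AVL invariant on the way."""
    if node is None:
        return 0
    hl, hr = check(node.child[0]), check(node.child[1])
    assert node.bf == hr - hl and abs(node.bf) <= 1
    return 1 + max(hl, hr)


def in_order(node):
    if node is None:
        return []
    return in_order(node.child[0]) + [node.key] + in_order(node.child[1])
```

Unlike insertion, a rotation here may leave the subtree one level lower, in which case `rebalance` reports a height decrease and the retracing continues further up, so a single deletion can trigger rotations on several levels.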

Set operations and bulk operations

In addition to the single-element insert, delete and lookup operations, several set operations have been defined on AVL trees: union, intersection and set difference. Then fast bulk operations on insertions or deletions can be implemented based on these set functions. These set operations rely on two helper operations, Split and Join. With the new operations, the implementation of AVL trees can be more efficient and highly-parallelizable.

The union of two AVLs t1 and t2 representing sets A and B, is an AVL t that represents A ∪ B. The following recursive function computes this union:

    function union(t1, t2):
        if t1 = nil:
            return t2
        if t2 = nil:
            return t1
        t<, t> ← split t2 on t1.root
        return join(t1.root, union(left(t1), t<), union(right(t1), t>))

Here, Split is presumed to return two trees: one holding the keys less than its input key, one holding the greater keys. (The algorithm is non-destructive, but an in-place destructive version exists as well.)

The algorithm for intersection or difference is similar, but requires the Join2 helper routine that is the same as Join but without the middle key. Based on the new functions for union, intersection or difference, either one key or multiple keys can be inserted to or deleted from the AVL tree. Since Split calls Join but does not deal with the balancing criteria of AVL trees directly, such an implementation is usually called the "join-based" implementation.
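A compact sketch of a join-based union is given below. It assumes immutable nodes that cache their height, so that every operation returns new nodes and leaves its inputs intact. The helper names `join_right` and `join_left` and the argument order `join(left, key, right)` are choices of this sketch, not taken from the text above.

```python
class Node:
    """Immutable-by-convention node that caches the height of its subtree."""
    def __init__(self, left, key, right):
        self.left, self.key, self.right = left, key, right
        self.height = 1 + max(height(left), height(right))


def height(t):
    return 0 if t is None else t.height


def rotate_left(t):
    r = t.right
    return Node(Node(t.left, t.key, r.left), r.key, r.right)


def rotate_right(t):
    l = t.left
    return Node(l.left, l.key, Node(l.right, t.key, t.right))


def join_right(tl, k, tr):
    """Join when tl is higher than tr by more than one: walk down tl's right spine."""
    l, k2, c = tl.left, tl.key, tl.right
    if height(c) <= height(tr) + 1:
        t = Node(c, k, tr)
        if t.height <= height(l) + 1:
            return Node(l, k2, t)
        return rotate_left(Node(l, k2, rotate_right(t)))   # double rotation
    t = join_right(c, k, tr)
    if t.height <= height(l) + 1:
        return Node(l, k2, t)
    return rotate_left(Node(l, k2, t))                      # simple rotation


def join_left(tl, k, tr):
    """Mirror image of join_right: walk down tr's left spine."""
    c, k2, r = tr.left, tr.key, tr.right
    if height(c) <= height(tl) + 1:
        t = Node(tl, k, c)
        if t.height <= height(r) + 1:
            return Node(t, k2, r)
        return rotate_right(Node(rotate_left(t), k2, r))
    t = join_left(tl, k, c)
    if t.height <= height(r) + 1:
        return Node(t, k2, r)
    return rotate_right(Node(t, k2, r))


def join(tl, k, tr):
    """Combine tl < k < tr into one AVL tree."""
    if height(tl) > height(tr) + 1:
        return join_right(tl, k, tr)
    if height(tr) > height(tl) + 1:
        return join_left(tl, k, tr)
    return Node(tl, k, tr)


def split(t, k):
    """Return (tree of keys < k, tree of keys > k); k itself is dropped."""
    if t is None:
        return None, None
    if k == t.key:
        return t.left, t.right
    if k < t.key:
        l, r = split(t.left, k)
        return l, join(r, t.key, t.right)
    l, r = split(t.right, k)
    return join(t.left, t.key, l), r


def union(t1, t2):
    if t1 is None:
        return t2
    if t2 is None:
        return t1
    lt, gt = split(t2, t1.key)
    return join(union(t1.left, lt), t1.key, union(t1.right, gt))


def insert(t, k):
    """Single-key insertion expressed through split and join."""
    l, r = split(t, k)
    return join(l, k, r)


def to_list(t):
    return [] if t is None else to_list(t.left) + [t.key] + to_list(t.right)


def is_avl(t):
    return t is None or (abs(height(t.left) - height(t.right)) <= 1
                         and is_avl(t.left) and is_avl(t.right))
```

Only `join_right` and `join_left` contain rebalancing logic. `split`, `union` and `insert` are written purely in terms of `join`, which is the sense in which such an implementation is called join-based.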

The complexity of each of union, intersection and difference is O(m log(n/m + 1)) for AVL trees of sizes m and n (≥ m).

Rebalancing

If during a modifying operation (e.g. insert, delete) a (temporary) height difference of more than one arises between two child subtrees, the parent subtree has to be "rebalanced". The given repair tools are the so-called tree rotations, because they move the keys only "vertically", so that the ("horizontal") in-order sequence of the keys is fully preserved (which is essential for a binary-search tree).

Let Z be the child higher by 2 (see figures 4 and 5). Two flavors of rotations are required: simple and double. Rebalancing can be accomplished by a simple rotation (see figure 4) if the inner child of Z, that is the child with a child direction opposite to that of Z, (t23 in figure 4, Y in figure 5) is not higher than its sibling, the outer child t4 in both figures. This situation is called "Right Right" or "Left Left" in the literature.

On the other hand, if the inner child (t23 in figure 4, Y in figure 5) of Z is higher than t4 then rebalancing can be accomplished by a double rotation (see figure 5). This situation is called "Right Left" because X is right- and Z left-heavy (or "Left Right" if X is left- and Z is right-heavy). From a mere graph-theoretic point of view, the two rotations of a double rotation are just single rotations. But they encounter and have to maintain other configurations of balance factors. So, in effect, it is simpler – and more efficient – to specialize, just as in the original paper, where the double rotation is called Большое вращение (Russian for "big turn") as opposed to the simple rotation, which is called Малое вращение (Russian for "little turn"). But there are alternatives: one could e.g. update all the balance factors in a separate walk from leaf to root.

The cost of a rotation, both simple and double, is constant.

For both flavors of rotations a mirrored version, i.e. rotate_Right or rotate_LeftRight, respectively, is required as well.

Simple rotation

Figure 4 shows a Right Right situation. In its upper half, node X has two child trees with a balance factor of +2. Moreover, the inner child t23 of Z is not higher than its sibling t4. This can happen by a height increase of subtree t4 or by a height decrease of subtree t1. In the latter case, the situation where t23 has the same height as t4 (shown pale in the figure) may also occur.

The result of the left rotation is shown in the lower half of the figure. Three links (thick edges in figure 4) and two balance factors are to be updated.

As the figure shows, before an insertion, the leaf layer was at level h+1, temporarily at level h+2 and after the rotation again at level h+1. In case of a deletion, the leaf layer was at level h+2, where it is again, when t23 and t4 were of same height. Otherwise the leaf layer reaches level h+1, so that the height of the rotated tree decreases.
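A minimal sketch of the left rotation for the Right Right case, using the names X, Z and t23 from the description above. It assumes nodes without parent pointers, so the caller is expected to attach the returned node in place of X (the third of the three links). The `Node` class and the `bf` field are illustrative.

```python
class Node:
    def __init__(self, key):
        self.key = key
        self.left = None
        self.right = None
        self.bf = 0          # balance factor: height(right) - height(left)


def rotate_left(x, z):
    """Simple rotation: x has temporary balance factor +2, z is its right child
    and z.bf is +1 (after insertion or deletion) or 0 (after deletion only)."""
    t23 = z.left             # inner child of z
    x.right = t23            # link 1: t23 becomes the right child of x
    z.left = x               # link 2: x becomes the left child of z
    if z.bf == 0:            # t23 was as high as t4: total height is unchanged
        x.bf, z.bf = 1, -1
    else:                    # t23 was lower than t4: total height decreases
        x.bf, z.bf = 0, 0
    return z                 # link 3: the caller hangs z where x used to be
```

The mirrored right rotation for the Left Left case is obtained by exchanging left and right and negating the balance factors.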

Double rotation

Figure 5 shows a Right Left situation. In its upper third, node X has two child trees with a balance factor of +2. But unlike figure 4, the inner child Y of Z is higher than its sibling t4. This can happen by a height increase of subtree t2 or t3 (with the consequence that they are of different height) or by a height decrease of subtree t1. In the latter case, it may also occur that t2 and t3 are of the same height.

The result of the first, the right, rotation is shown in the middle third of the figure. (With respect to the balance factors, this rotation is not of the same kind as the other AVL single rotations, because the height difference between Y and t4 is only 1.) The result of the final left rotation is shown in the lower third of the figure. Five links (thick edges in figure 5) and three balance factors are to be updated.

As the figure shows, before an insertion, the leaf layer was at level h+1, temporarily at level h+2 and after the double rotation again at level h+1. In case of a deletion, the leaf layer was at level h+2 and after the double rotation it is at level h+1, so that the height of the rotated tree decreases.
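A minimal sketch of the double rotation for the Right Left case, using the names X, Z, Y, t2 and t3 from the description above. As before, nodes without parent pointers are assumed, and the `Node` class is illustrative. The three balance factors are derived from the balance factor that Y had before the rotation.

```python
class Node:
    def __init__(self, key):
        self.key = key
        self.left = None
        self.right = None
        self.bf = 0          # balance factor: height(right) - height(left)


def rotate_right_left(x, z):
    """Double rotation: x has temporary balance factor +2, z is its right
    child with z.bf == -1, and y = z.left is the higher inner child."""
    y = z.left
    # First, the right rotation around z.
    z.left = y.right         # t3 becomes the left child of z
    y.right = z
    # Then, the left rotation around x.
    x.right = y.left         # t2 becomes the right child of x
    y.left = x
    # Update the three balance factors from the old value of y.bf.
    x.bf = -1 if y.bf > 0 else 0     # t2 was lower than t3
    z.bf = 1 if y.bf < 0 else 0      # t3 was lower than t2
    y.bf = 0
    return y                 # the caller hangs y where x used to be
```

If Y had balance factor 0 beforehand, which can occur only after a deletion or when Y itself is the newly inserted leaf, all three nodes end up with balance factor 0.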

Comparison to other structures

Both AVL trees and red–black trees are self-balancing binary search trees and they are related mathematically. Indeed, every AVL tree can be colored red–black. The operations to balance the trees are different: an AVL insertion needs at most one simple or double rotation, and red–black trees need O(1) rotations per update in the worst case, whereas an AVL deletion may need a rotation at each of the O(log n) levels of the retraced path. Both also require O(log n) other updates (to colors or heights) in the worst case (though only O(1) amortized). AVL trees require storing 2 bits (or one trit) of information in each node, while red–black trees require just one bit per node. The bigger difference between the two data structures is their height limit.

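For a tree of size n ≥ 1, an AVL tree's height is less than c log₂(n+2) + b with c ≈ 1.44 and b ≈ –0.328 (see Properties), whereas a red–black tree's height is at most 2 log₂(n+1).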

AVL trees are more rigidly balanced than red–black trees, leading to faster retrieval but slower insertion and deletion.